AI agent frameworks are software libraries and foundational architectures that enable developers to build, orchestrate, and deploy autonomous artificial intelligence agents. These frameworks provide essential abstractions for connecting large language models (LLMs) with external tools, memory management, and multi-agent reasoning capabilities.

The Evolution of Agentic AI and Orchestration

The transition from stateless, single-turn large language model interactions to autonomous, goal-oriented systems represents a fundamental shift in artificial intelligence engineering. Initially, developers relied on manual prompt engineering and static chaining to extract value from LLMs. However, as business requirements grew more complex, the need for systems that could dynamically plan, iteratively execute tools, and correct their own errors became apparent. This is precisely the domain of AI agent frameworks.

AI agent frameworks abstract the immense underlying complexity of building agentic systems. When an AI agent is deployed, it does not simply generate text; it enters an autonomous loop. It perceives a goal, formulates a multi-step execution plan, invokes external APIs, evaluates the results of those API calls against its original plan, and adjusts its behavior accordingly. Without a dedicated framework, engineering such a system requires writing highly brittle boilerplate code to manage context windows, parse unstructured model outputs into executable JSON, and handle API rate limiting. Modern AI agent frameworks standardize these patterns, offering robust, production-ready primitives for state management, tool execution, and multi-agent network topologies.

Understanding AI Agent Frameworks vs. AI Agent Platforms

When architecting an autonomous system, engineering teams often face a critical build-versus-buy decision: utilizing open-source AI agent frameworks versus adopting managed AI agent platforms. Understanding the technical boundaries between these two paradigms is critical for designing scalable infrastructure.

AI agent frameworks are code-level libraries. They are highly extensible, language-specific (predominantly Python or TypeScript), and require developers to manage their own infrastructure, deployment, and LLM endpoint provisioning. They offer maximum granular control over the agent’s reasoning loop, prompt templating, and memory persistence strategies. Conversely, AI agent platforms (such as Google Cloud’s Vertex AI Agent Builder or Microsoft Agent Framework) are managed, enterprise-grade services. They sit higher on the abstraction stack, often providing graphical user interfaces, built-in governance, managed vector databases, and seamless integration with corporate identity and access management (IAM) systems.

| Feature Dimension | AI Agent Frameworks | AI Agent Platforms |

|---|---|---|

| Abstraction Level | Low to Medium. Requires writing custom code to define agent loops, tool bindings, and state management. | High. Often low-code/no-code, utilizing managed services and graphical workflow builders. |

| Customization & Flexibility | Extremely high. Developers can alter the underlying routing algorithms, inference logic, and memory types. | Constrained by the platform’s API limitations and pre-built module offerings. |

| Infrastructure Management | Developer-managed. You must provision your own compute, memory storage, and API gateways. | Fully managed. The vendor handles scaling, high availability, and database provisioning. |

| Governance & Security | Requires manual implementation of guardrails, logging, and access controls. | Built-in compliance, data loss prevention (DLP), and enterprise identity integrations. |

| Examples | LangChain, Microsoft AutoGen, LlamaIndex, CrewAI. | Vertex AI Agent Builder, Microsoft Copilot Studio, AWS Bedrock Agents. |

Core Components of an AI Agent Framework

To effectively utilize AI agent frameworks, software engineers must understand the underlying modules that constitute an agentic architecture. A robust framework decomposes the monolithic complexity of an autonomous system into modular, interchangeable components. This separation of concerns allows developers to swap out the LLM engine, upgrade the memory backend, or introduce new tools without rewriting the core orchestration logic.

The Reasoning Engine (Brain)

The reasoning engine is the cognitive core of the agent, powered by an LLM. However, raw LLMs are inherently stateless next-token predictors. The framework wraps the LLM in a cognitive architecture that enforces structured thinking. The most prevalent paradigm implemented by AI agent frameworks is ReAct (Reasoning and Acting).

In a ReAct loop, the framework prompts the model to generate a “Thought” (internal reasoning about the current state), followed by an “Action” (selecting a tool and formatting its parameters), and waits for an “Observation” (the result of the tool execution). The framework handles the parsing of these specific keywords and orchestrates the loop until the model outputs a designated “Final Answer.”

Tool Calling and Function Binding

Tools are the sensory and interactive organs of the agent. They allow the deterministic execution of code, such as querying a SQL database, invoking a REST API, or executing a Python script in a sandboxed environment. AI agent frameworks provide standard interfaces to define tools. They automatically serialize Python functions or API documentation into JSON schema, inject this schema into the LLM’s system prompt, and reliably parse the LLM’s generated response back into executable code blocks.

State Management and Memory Systems

Agents require memory to maintain context across prolonged execution loops. Frameworks categorize memory into three distinct architectural patterns:

- Short-Term Memory (Context): The rolling history of the immediate conversation and active thought loops. Frameworks manage token limits by automatically summarizing older interactions or dropping the oldest messages.

- Long-Term Memory: Persistent storage of facts, user preferences, or past task outcomes. Frameworks interface with Vector Databases (like Pinecone or Milvus) to chunk, embed, and semantically retrieve relevant historical context using algorithms like k-nearest neighbors (k-NN).

- Working Memory (Scratchpad): A localized, temporary state matrix where the agent stores intermediate variables during a complex multi-step task before formulating the final response.

Popular AI Agent Frameworks Evaluated

The landscape of open-source AI agent frameworks is rapidly consolidating around a few dominant paradigms. Choosing the right framework dictates the architectural pattern of your application. Below is a deep technical evaluation of the industry’s most widely adopted frameworks.

LangChain and LangGraph

LangChain is arguably the most recognized framework in the generative AI ecosystem. While LangChain initially gained traction for its simplified chains and document loaders, it evolved to support agentic behaviors. However, standard LangChain agents execute in linear, sometimes unpredictable loops. To solve the need for complex, reliable agentic behavior, the creators introduced LangGraph.

LangGraph is built on the mathematical concept of Directed Acyclic Graphs (DAGs) and state machines. Instead of relying on a single hidden prompt loop, LangGraph allows engineers to define agents, tools, and reasoning steps as discrete nodes in a graph. Edges define the conditional routing logic between these nodes. This stateful architecture provides immense benefits for cyclic operations. State is explicitly passed from node to node, enabling “human-in-the-loop” approval processes, fault tolerance, and the ability to pause and resume multi-agent workflows.

Microsoft AutoGen

Developed by Microsoft Research, AutoGen is a framework uniquely designed around the paradigm of multi-agent conversations. Instead of a single master agent delegating tasks, AutoGen treats every entity (including the human user) as a “ConversableAgent.”

In AutoGen, computation and reasoning occur through message passing. You might instantiate a UserProxyAgent (which acts as the human interface and executes code locally) and an AssistantAgent (the LLM-backed reasoning engine). When a task is given, the Assistant generates Python code to solve the problem, passes the code as a message to the Proxy, the Proxy executes it in a Docker container, and sends the terminal output back to the Assistant. AutoGen excels in scenarios requiring complex code generation, debugging, and adversarial multi-agent setups (e.g., a “Generator” agent supervised by a “Critic” agent).

LlamaIndex

While natively known as a premier data framework for Retrieval-Augmented Generation (RAG), LlamaIndex has expanded robustly into the agentic space with “Data Agents.” LlamaIndex frameworks shine when the primary bottleneck of your agent is information retrieval and synthesis over vast enterprise datasets.

LlamaIndex provides specialized routing agents that can dynamically choose between different query engines (e.g., deciding whether to run a semantic vector search over an unstructured PDF or a text-to-SQL query over a structured PostgreSQL database). Their agent abstraction tightly couples tool calling with advanced data indexing techniques, making it the superior choice for RAG-heavy enterprise search applications.

CrewAI

CrewAI focuses on production-oriented, role-based multi-agent collaboration. It operates on the analogy of a corporate team. Developers define “Agents” (each with a specific role, backstory, and goal), “Tasks” (specific deliverables expected from the agents), and a “Crew” (the overarching structure that binds agents and tasks together).

CrewAI sits a layer above the raw complexity of LangChain (though it relies on LangChain tools under the hood), offering a highly declarative syntax. It supports sequential, hierarchical, and consensual processing topologies out-of-the-box. It is highly favored by engineering teams that need to quickly prototype complex business processes—such as automated market research, content supply chains, or lead generation—without writing verbose graph-routing code.

Architecting Multi-Agent Workflows

As the complexity of enterprise tasks scales, a single monolithic agent quickly degrades in performance. Context windows become saturated, system prompts become convoluted, and the probability of hallucination increases. AI agent frameworks solve this via multi-agent workflows, distributing cognitive load across specialized, narrow-scope agents.

Implementing multi-agent workflows requires careful consideration of network topology. If you simply allow multiple agents to converse freely without constraint, the system may fall into an infinite loop or deviate from the objective. AI agent frameworks provide specific orchestration patterns to govern these interactions:

- Sequential Pipelines: The output of Agent A becomes the exact input of Agent B. This is highly deterministic. (e.g., Researcher Agent -> Writer Agent -> Editor Agent).

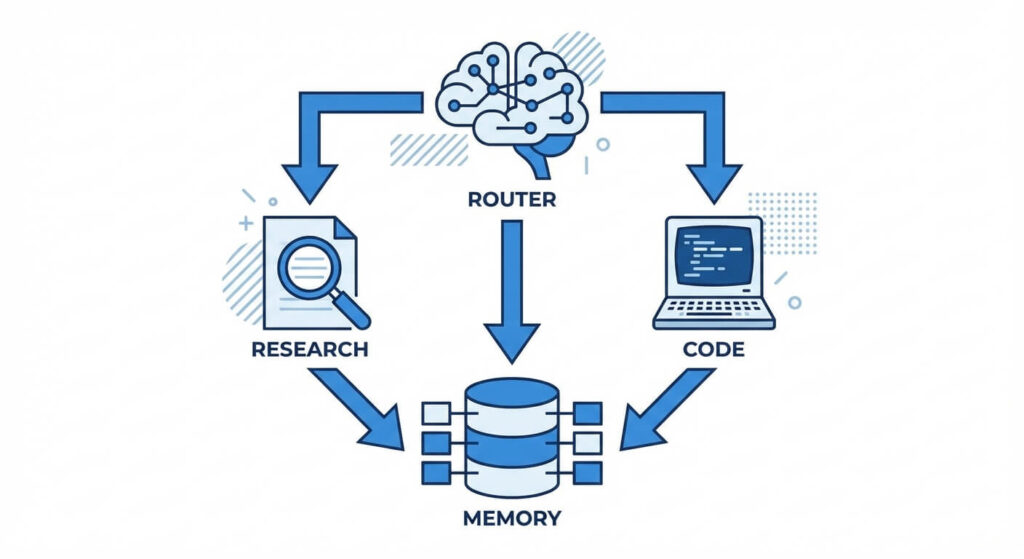

- Hierarchical Delegation: A “Manager” or “Router” agent receives the user’s prompt, decomposes it into sub-tasks, and delegates those sub-tasks to subordinate “Worker” agents. The Manager aggregates the responses.

- Joint Collaboration (Peer-to-Peer): Agents operate in a shared environment (a simulated chat room) and broadcast messages. A framework-level consensus mechanism determines when the task is complete.

When designing these workflows, developers must manage the mathematical boundaries of token consumption. Let the maximum context window of an LLM be denoted as W, the number of sequential agent reasoning steps as S, and the average token consumption per step as T. A successful multi-agent framework ensures that the state propagation mechanism limits memory overhead such that W is strictly greater than S * T, utilizing techniques like memory compression and selective context dropping.

Debugging and Optimizing Agent Performance

Building a prototype with AI agent frameworks is straightforward; pushing them to production is notoriously difficult. Because agents operate non-deterministically, standard debugging tools (like line-by-line step-through debuggers) are insufficient.

To debug and optimize agent performance, developers must leverage specialized observability practices:

Tracing and Observability

Frameworks typically integrate with observability platforms (like LangSmith, AgentOps, or Arize Phoenix) to capture traces. A trace represents a single invocation of the agentic loop. Developers must monitor the exact prompts sent to the LLM, the JSON payloads returned, the latency of each tool execution, and the total token cost. Tracing allows engineers to identify precisely where an agent deviated from its intended path—for instance, if an agent repeatedly called a database tool with incorrect SQL syntax, resulting in a continuous error loop.

Handling Infinite Loops and Failures

Left unchecked, an autonomous agent can enter an infinite loop of failed tool calls, rapidly consuming API credits. Robust AI agent frameworks enforce strict execution boundaries. Developers must configure parameters such as max_iterations (capping the total number of ReAct loop cycles) and execution_timeout. Furthermore, robust error handling must be injected into the tool definitions. Instead of allowing a Python exception to crash the application, the framework should catch the exception, format it as a string, and pass it back to the agent as an observation, effectively telling the agent: “Your last action failed with this error. Think of a new approach.”

Evaluation Metrics

Unit testing deterministic code relies on boolean assertions. Testing AI agents requires probabilistic evaluation. Frameworks support “Trajectory Evaluation,” where a separate, powerful LLM acts as an evaluator. The evaluator reviews the agent’s intermediate steps (its trajectory) and scores it on metrics such as:

- Tool selection accuracy: Did the agent choose the right API for the sub-task?

- Reasoning trace logicality: Was the agent’s chain of thought coherent?

- Goal completion: Did the final answer resolve the initial user prompt?

Factors to Consider When Choosing an AI Agent Framework

Selecting the right framework is foundational to the success of your artificial intelligence deployment. Engineering leaders should evaluate frameworks based on several technical vectors:

- Language Ecosystem: Python dominates the AI ecosystem. Frameworks like AutoGen and LangChain have their most robust feature sets in Python. However, if your enterprise backend is primarily TypeScript/Node.js or C# (.NET), you must evaluate the framework’s parity in those languages. Microsoft’s Semantic Kernel, for example, offers deep native support for C# integrations.

- State Control vs. Abstraction: If your application requires strict compliance, fault-tolerance, and state persistence across human approvals, frameworks based on DAGs (LangGraph) are mandatory. If you are building a rapid prototype of conversational agents, high-abstraction frameworks (CrewAI) will yield a faster time-to-market.

- Tool Ecosystem: Assess the out-of-the-box integrations. Does the framework have pre-built tool bindings for your specific database, SaaS applications (like Jira or Slack), or code execution environments? Building custom tool wrappers is possible but adds to the maintenance burden.

- Latency Requirements: Multi-agent workflows inherently compound latency. If an interaction requires four agents to execute sequentially, the user may wait 20-30 seconds for a response. Frameworks that natively support asynchronous execution and streaming output are essential for user-facing applications.

An Implementation Strategy for Enterprise AI Agents

Transitioning from a proof-of-concept to an enterprise-grade agent requires a phased implementation strategy.

First, begin with deterministic workflows. Before introducing an AI agent framework, map out your existing processes. Identify tasks that are rule-based but currently require human cognitive overhead to parse unstructured text.

Second, start with a Single-Agent Architecture heavily augmented with RAG. Ensure that your core retrieval mechanism is highly accurate. An agent is only as intelligent as the data it is allowed to access. Implement tight guardrails around tool execution. For example, if an agent interacts with a database, restrict its SQL permissions to read-only (SELECT statements) to prevent accidental data mutation.

Third, introduce Multi-Agent Orchestration. Once the single agent hits a complexity ceiling—usually manifested by the LLM forgetting instructions or hallucinating tool arguments—refactor the architecture. Decompose the single agent into specialized roles using a framework like LangGraph or AutoGen.

Finally, secure the deployment. Autonomous systems interacting with external environments introduce novel security vectors, such as Prompt Injection attacks. If a malicious user inputs a prompt that instructs the agent to execute a harmful shell command, the framework must have safeguards. Utilize isolated Docker containers or WebAssembly sandboxes (like E2B) for any code execution tools, and employ guardrail models (like NVIDIA NeMo Guardrails) to classify and filter user inputs before they reach the agent’s reasoning engine.

Frequently Asked Questions (FAQ)

What is the difference between ReAct and standard prompt chaining? Standard prompt chaining is predetermined and static. The developer defines a strict sequence: prompt A output feeds into prompt B. ReAct (Reasoning and Acting) is dynamic. The LLM decides at runtime how many steps to take, which tools to use, and dynamically evaluates its own progress until the goal is achieved.

How do AI agents handle token limits during long workflows? AI agent frameworks manage token limits via memory modules. They implement strategies such as a sliding window (keeping only the last N messages), dynamic summarization (periodically prompting the LLM to summarize the conversation history into a dense paragraph), and semantic retrieval (offloading context to a vector database and retrieving it only when mathematically relevant).

What are the security risks of allowing AI agents to execute code? Code execution tools introduce severe Remote Code Execution (RCE) vulnerabilities. If an LLM writes and runs Python code autonomously, a prompt injection attack could trick the agent into executing malicious scripts, accessing environment variables, or exfiltrating data. This is mitigated by executing all agent-generated code in strictly sandboxed, ephemeral environments with restricted network access.

Can multiple agents built on different frameworks work together? Natively, different frameworks (e.g., an AutoGen agent talking to a CrewAI agent) do not share state protocols. However, they can interoperate via standard RESTful APIs. You can wrap one agent in an API endpoint and provide that endpoint as a custom “tool” to an agent running in a completely different framework.