An AI agent is an autonomous software system that perceives its environment, processes information using a core cognitive model (such as a Large Language Model), makes reasoning-based decisions, and executes actions through specific tools or APIs to achieve predefined goals.

Introduction to Artificial Intelligence Agents

The landscape of artificial intelligence has transitioned rapidly from static, predictive models to dynamic, autonomous systems capable of executing complex workflows. Historically, traditional machine learning models operated as passive oracles: a user provided an input, and the model generated a localized output based on its training distribution. While this paradigm revolutionized natural language processing and computer vision, it required human intervention to parse the output and execute any subsequent actions. Modern AI agents represent a paradigm shift in this architecture. They encapsulate large language models (LLMs) with robust memory management, sophisticated planning algorithms, and deterministic tool execution environments. By acting as the central reasoning engine, the LLM orchestrates complex chains of thought, interfaces with external environments via APIs, and continuously reflects on its state to correct errors. Understanding what an AI agent fundamentally represents requires shifting our perspective from viewing AI as a simple text generator to viewing it as a stateful, iterative state machine capable of autonomous agency.

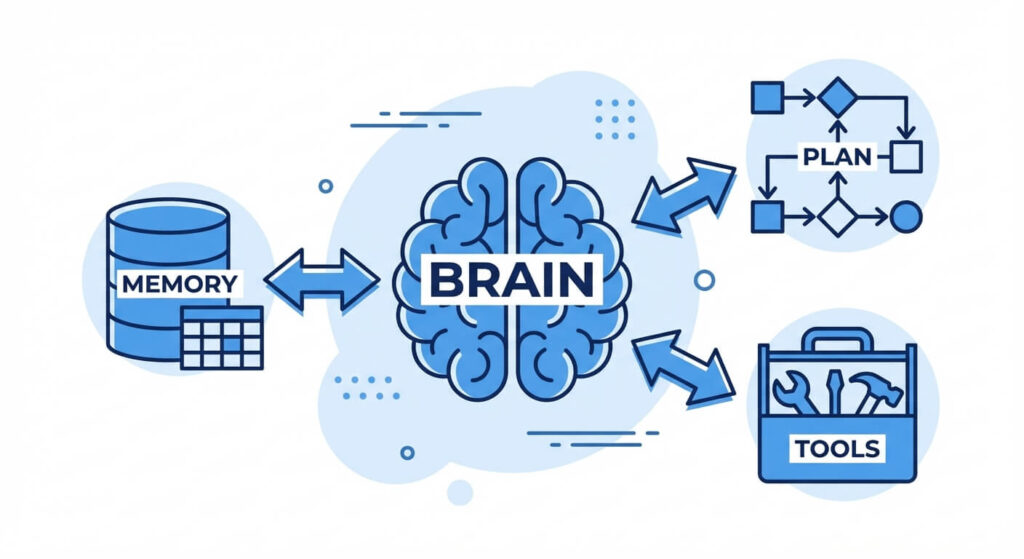

Core Architecture of an AI Agent

The architecture of a modern AI agent is modular, segregating the cognitive processing layer from memory and execution environments. At the center of this architecture is the large language model, which serves as the controller or “brain.” However, a bare LLM cannot function as an agent on its own; it requires surrounding infrastructure to handle state persistence, environment perception, and physical or digital execution. This orchestrator pattern breaks down into four primary components: the perception module, the reasoning engine, the memory stores, and the action interfaces. When an agent receives an initial prompt, it cycles through these components iteratively—often referred to as the Agent Loop—evaluating its current state against its target objective. This systematic orchestration allows the agent to break down monolithic tasks into manageable sub-tasks, retrieve necessary historical context, and execute code or API calls to manipulate external states.

The Brain (Reasoning Engine)

The core controller of an AI agent is typically an LLM (such as GPT-4, Claude 3, or Llama 3) instructed through meta-prompting to behave as a reasoning engine. The brain is responsible for processing inputs, decomposing complex problems into sequential steps, and deciding which external tools to invoke. It leverages zero-shot and few-shot learning capabilities to understand context without requiring task-specific fine-tuning.

Memory Systems

Agents require both transient and persistent memory to maintain context over long autonomous runs.

- Short-Term Memory: This relies on the LLM’s context window. It stores the immediate history of the current interaction, including recent tool outputs and step-by-step reasoning traces.

- Long-Term Memory: Implemented using vector databases (like Pinecone, Milvus, or FAISS), long-term memory allows the agent to store and retrieve massive amounts of data across multiple sessions. Information is converted into high-dimensional vector embeddings, and retrieval is performed using similarity metrics such as cosine similarity. The mathematical representation for cosine similarity is: similarity(A, B) = (A · B) / (||A|| * ||B||), where A and B are the embedding vectors.

Planning and Task Decomposition

To achieve complex goals, an agent must formulate a structured plan. Planning involves dissecting a primary objective into a Directed Acyclic Graph (DAG) of sub-tasks. Frameworks like Chain of Thought (CoT) and Tree of Thoughts (ToT) enable the agent to explore multiple reasoning paths, evaluate the validity of each path, and select the most optimal sequence of operations. Modern agents also incorporate self-reflection mechanisms, where the agent reviews its previous actions and modifies its plan if an error or infinite loop is detected.

Tools and Actuators

Tools are the deterministic functions that an agent can invoke to interact with the external world. While an LLM’s internal knowledge is frozen at its training cutoff, tools allow it to browse the internet, execute Python code, query SQL databases, or trigger webhook events. The agent communicates with these tools through strictly typed JSON payloads, a process formally known as Function Calling.

Types of AI Agents

In classical artificial intelligence literature, particularly within the framework established by Stuart Russell and Peter Norvig, intelligent agents are categorized based on their degree of perceived intelligence, autonomy, and state management. While the modern industry focuses heavily on LLM-based autonomous agents, understanding the fundamental theoretical classifications is critical for engineering robust systems. These classifications dictate how an agent maps its percepts (inputs from the environment) to its actions (outputs). Ranging from highly deterministic, rule-based scripts to probabilistic, self-learning networks, the architectural choice of an agent type directly impacts its computational complexity, deployment cost, and failure domains. By analyzing these traditional models alongside contemporary generative AI implementations, software engineers can design hybrid systems that leverage the predictability of reflex agents with the dynamic reasoning capabilities of utility-based LLM architectures.

Simple Reflex Agents

Simple reflex agents operate entirely on condition-action rules (if-then logic). They do not maintain an internal state or memory of past interactions. Their decision-making process is limited strictly to the current percept. Because they lack historical context, they are highly computationally efficient but incapable of handling partially observable environments. A common example is a basic load balancer routing traffic based strictly on immediate server health ping results.

Model-Based Reflex Agents

Model-based agents maintain an internal state that depends on the percept history, allowing them to track parts of the environment they cannot currently observe. They utilize an internal model of how the world works and how their actions affect the environment. For example, a robotic vacuum cleaner uses a model-based reflex architecture to remember which parts of a room it has already cleaned, preventing it from indefinitely cleaning the same observable area.

Goal-Based Agents

Goal-based agents expand upon model-based agents by incorporating target objectives. Instead of merely reacting to an environment, these agents evaluate multiple potential actions and calculate which sequence of actions will transition their current state into the desired goal state. This requires search algorithms and planning mechanisms (such as A* search or Dijkstra’s algorithm) to navigate the state space effectively.

Utility-Based Agents

While goal-based agents only distinguish between “goal state” and “non-goal state,” utility-based agents measure the quality of a state. They utilize a utility function that maps a state to a real number, representing the degree of satisfaction or efficiency. In a scenario with multiple valid paths to a goal, a utility-based agent will select the path that maximizes the expected utility (e.g., the fastest, cheapest, or safest route).

Autonomous LLM Agents

The modern iteration of AI agents utilizes an LLM as the cognitive core. Unlike classical agents constrained by hardcoded rules or specific reinforcement learning environments, LLM agents use natural language processing to generalize across virtually infinite domains. They dynamically parse ambiguous instructions, generate their own utility evaluations via self-prompting, and write dynamic code to achieve objectives.

Frameworks for Agentic Reasoning

The ability of an AI agent to execute complex tasks relies entirely on the reasoning framework governing its execution loop. Without a structured methodology for processing thoughts and actions, an LLM will default to standard autoregressive text generation, often leading to hallucinations, skipped steps, or logic errors. Reasoning frameworks force the underlying model to externalize its cognitive process, breaking down monolithic queries into observable, sequential states. This externalization is not merely a formatting trick; it alters the probabilistic distribution of the generated tokens, making accurate and logical outputs significantly more likely. By employing advanced prompting strategies and orchestrator loops, software engineers can bind the non-deterministic nature of generative AI into a highly predictable, stateful workflow. These frameworks transform static language models into dynamic engines capable of hypothesis generation, empirical testing, and self-correction.

Chain of Thought (CoT)

Chain of Thought prompting is the foundational reasoning framework for modern LLMs. It forces the model to articulate its intermediate reasoning steps before providing a final answer. By generating tokens that represent logical deductions, the model effectively utilizes its context window as a scratchpad, which significantly improves performance on algorithmic and mathematical tasks.

ReAct (Reasoning and Acting)

The ReAct framework interweaves reasoning traces with task-specific actions. In a standard ReAct loop, the agent cycles through three distinct phases:

- Thought: The agent evaluates the current state and reasons about what to do next.

- Action: The agent selects a tool and provides the required input parameters (e.g.,

Search("latest API documentation")). - Observation: The external tool executes and returns the result to the agent. This loop continues iteratively until the agent determines that it has gathered enough information to synthesize a final response.

Plan-and-Solve Strategy

Unlike ReAct, which interleaves planning and execution step-by-step, the Plan-and-Solve strategy requires the agent to map out the entire execution pipeline before taking any action. The agent drafts a multi-step plan, reviews it for logical consistency, and then sequentially executes the tools. This approach reduces API calls and computational overhead but requires robust error-handling if a downstream step fails.

Traditional AI vs. Agentic AI Comparison

When designing enterprise architectures, distinguishing between traditional software, conversational AI, and true autonomous agents is crucial for selecting the right technology stack. The following table delineates the core technical differentiators across these systems, focusing on state management, execution autonomy, and underlying data structures.

| Feature | Traditional Software | Conversational AI (Standard LLM) | Autonomous AI Agent |

|---|---|---|---|

| Execution Flow | Deterministic, hardcoded control flow | Reactive, single-turn or simple multi-turn generation | Proactive, dynamic loops (e.g., ReAct) |

| State Management | Relational databases, memory heaps | Stateless or relies entirely on sliding context windows | Vector databases (RAG), hierarchical memory buffers |

| Tool Integration | Explicit API bindings via code | None (frozen knowledge cutoff) | Dynamic function calling and JSON schema parsing |

| Error Handling | Try/Catch blocks, static retries | Requires human user to reprompt | Self-reflection, autonomous prompt correction, and tool retry |

| Primary Output | Structured data, UI changes | Natural language text | Orchestrated actions, state mutations, and text |

Building a Simple AI Agent in Python

Implementing an AI agent requires establishing a robust environment where the LLM can parse instructions, map them to specific tool signatures, and execute a reasoning loop. In this section, we will construct a programmatic ReAct agent using Python. The underlying mechanics involve defining deterministic Python functions (tools), converting their docstrings and parameter types into JSON schemas via the OpenAI Function Calling API, and orchestrating a while loop that handles the Thought-Action-Observation cycle. This code abstracts the complexities of direct API state management, illustrating how a developer can bind a mathematical calculation tool to a language model. Understanding this implementation is vital for software engineers looking to bridge the gap between static natural language generation and executable, environment-altering agentic behavior.

Setting up the Environment

To begin, you need to install the necessary libraries. We will utilize the core OpenAI Python SDK. Ensure you have your API key configured in your environment variables.

pip install openai python-dotenv

Python Implementation

The following code demonstrates a basic loop where the LLM decides when to use a tool, extracts the arguments, and waits for the tool’s execution result before proceeding.

import json

import os

from openai import OpenAI

from dotenv import load_dotenv

load_dotenv()

client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))

# 1. Define the deterministic tool (Actuator)

def calculate_expression(expression: str) -> str:

"""Evaluates a mathematical expression."""

try:

# NOTE: eval() is used here for simplicity.

# In production, use a safe AST parser or math library.

result = eval(expression)

return str(result)

except Exception as e:

return f"Error evaluating expression: {e}"

# Map the function for programmatic access

available_tools = {

"calculate_expression": calculate_expression

}

# 2. Define the tool schema for the LLM

tools_schema = [

{

"type": "function",

"function": {

"name": "calculate_expression",

"description": "Evaluates a mathematical expression and returns the result.",

"parameters": {

"type": "object",

"properties": {

"expression": {

"type": "string",

"description": "The mathematical expression to evaluate, e.g., '25 * 4 + 10'",

}

},

"required": ["expression"],

},

}

}

]

def run_agent(user_prompt: str):

messages = [

{"role": "system", "content": "You are a mathematical agent. Use tools to solve math problems. Do not guess."}

]

messages.append({"role": "user", "content": user_prompt})

# The Agent Loop

while True:

response = client.chat.completions.create(

model="gpt-4-turbo",

messages=messages,

tools=tools_schema,

tool_choice="auto"

)

response_message = response.choices[0].message

# Check if the LLM wants to call a tool

if response_message.tool_calls:

# Append the assistant's request to call a tool to the conversation

messages.append(response_message)

for tool_call in response_message.tool_calls:

function_name = tool_call.function.name

function_to_call = available_tools[function_name]

function_args = json.loads(tool_call.function.arguments)

print(f"[Agent Action] Executing: {function_name} with {function_args}")

# Execute the tool

function_response = function_to_call(

expression=function_args.get("expression")

)

# Return the observation back to the LLM

messages.append(

{

"tool_call_id": tool_call.id,

"role": "tool",

"name": function_name,

"content": function_response,

}

)

else:

# No tool calls needed; the agent has finished reasoning

final_answer = response_message.content

print(f"[Agent Final Output] {final_answer}")

break

# 3. Test the Agent

if __name__ == "__main__":

run_agent("What is the result of 1523 multiplied by 4, and then divided by 2?")

Understanding the Code Flow

In this architecture, the LLM does not perform the mathematical calculation itself, which prevents standard autoregressive arithmetic hallucinations. Instead, the model outputs a JSON payload containing the arguments (1523 * 4 / 2). The Python execution thread intercepts this JSON, routes it to the calculate_expression function, and appends the computed observation back to the message array under the tool role. The LLM then reads this observation and synthesizes the final natural language response.

Formalizing the Agent Environment Interface

To fully comprehend the mathematical underpinnings of advanced AI agents, particularly those utilizing reinforcement learning from human feedback (RLHF) or Q-learning principles, we must define the problem space using Markov Decision Processes (MDP). An AI agent operates within a stochastic environment where its actions mutate the environment’s state. Understanding this formalization allows engineers to mathematically tune the reward structures and transition probabilities of complex, multi-agent networks. The underlying objective is always the optimization of a defined policy function, mapping the state space to an optimal action space over time. This formal mathematical abstraction bridges the gap between empirical software engineering and theoretical machine learning.

A Markov Decision Process for an AI agent is formally defined as a tuple (S, A, P, R, γ), where:

- S (State Space): A set of all possible states the environment can exist in. For an LLM agent, this includes the current context window, the retrieved vector embeddings, and the state of external APIs.

- A (Action Space): A set of all valid actions the agent can take. This includes generating text, invoking a specific tool, or terminating the session.

- P (Transition Probability Matrix): P(s’ | s, a) denotes the probability of transitioning to state s’ given the current state s and action a.

- R (Reward Function): R(s, a, s’) is the immediate numerical reward received after transitioning from state s to s’ via action a.

- γ (Discount Factor): A value between 0 and 1 that determines the present value of future rewards, ensuring the agent prioritizes efficient, rapid task completion over infinite loops.

The agent’s goal is to learn an optimal policy, denoted as π*, which maximizes the expected cumulative discounted reward: Σ (from t=0 to ∞) of γ^t * R_t. By formulating agent planning in this manner, developers can build heuristic evaluations that automatically terminate agents if they enter a state loop with diminishing returns.

Challenges and Limitations of AI Agents

Despite the rapid advancement in language models, building production-ready AI agents introduces a myriad of technical complexities. Because agents operate autonomously in loops, minor anomalies in prompt interpretation or API latency can cascade into catastrophic system failures. Engineers must meticulously design fail-safes to prevent autonomous systems from executing destructive actions or accumulating infinite cloud computing costs. The shift from deterministic code to probabilistic reasoning requires a fundamental change in how software is tested, monitored, and deployed. Understanding these core limitations is crucial for implementing robust guardrails and ensuring that agentic systems behave predictably within enterprise environments.

Hallucinations and Grounding Failures

The most prevalent challenge in LLM-based agents is hallucination—the generation of plausible but factually incorrect information. In an agentic loop, a hallucination is particularly dangerous because the agent might pass a fabricated argument into an external API. For instance, if an agent hallucinates a non-existent database table name and executes a SQL query, the query will fail. If the error handling is poorly designed, the agent may hallucinate further trying to fix the non-existent issue. Mitigating this requires rigorous grounding techniques, strictly typed JSON schemas, and low-temperature sampling.

Infinite Loops and Token Exhaustion

Because frameworks like ReAct rely on a while loop architecture, an agent can get stuck in an infinite Thought-Action-Observation loop if a tool consistently returns an unhelpful error message. The agent will continue reasoning and retrying, rapidly consuming API tokens and driving up infrastructure costs. Production systems must implement hard iteration caps (e.g., maximum 5 tool calls per run) and computational budget monitors to forcefully terminate looping agents.

Latency and Context Window Bottlenecks

Every step in an agent’s reasoning loop requires a full forward pass through the LLM. If an agent takes 10 steps to solve a problem, the user must wait for 10 sequential API responses. Furthermore, as the conversation history grows with each step, the context window fills up, increasing both latency and cost. Developers must implement advanced memory summarization techniques and utilize hierarchical RAG architectures to maintain performance at scale.

Frequently Asked Questions (FAQ)

What is the difference between an AI model and an AI agent? An AI model (like a base LLM) is a passive mathematical function that predicts the next sequence of tokens based on an input prompt. An AI agent wraps an AI model with an orchestration loop, memory management, and tool integration, allowing it to autonomously plan and execute actions in an environment to achieve a goal.

How does an AI agent use tools? AI agents use tools via Function Calling. The developer provides the agent with a JSON schema describing available functions and their required parameters. When the agent decides to use a tool, it outputs a JSON string matching that schema. The application code parses this JSON, executes the actual code (like a Python script or REST API call), and feeds the result back to the agent.

What is RAG in the context of AI agents? RAG stands for Retrieval-Augmented Generation. It is a technique used to give agents long-term memory. Instead of relying solely on the LLM’s pre-trained knowledge, the agent queries a vector database containing domain-specific documents. The retrieved context is appended to the agent’s prompt, reducing hallucinations and grounding the agent in factual data.

What causes an AI agent to hallucinate a tool call? Tool call hallucinations typically occur when the tool descriptions (docstrings or schemas) provided to the LLM are ambiguous, overlapping, or overly complex. If the LLM does not clearly understand the boundaries of a tool, it may invent parameters or call the wrong function entirely. High temperature settings can also increase the non-determinism, leading to hallucinated tool usage.

How do you prevent an AI agent from doing something dangerous? Security in AI agents relies on the Principle of Least Privilege. Agents should never have direct, unauthenticated access to destructive operations (like DROP TABLE or DELETE). Tools must execute in sandboxed environments (like Docker containers), and critical actions should require a “Human-in-the-Loop” (HITL) authorization step before the agent is permitted to proceed.