Generative AI vs Predictive AI: What’s the Difference?

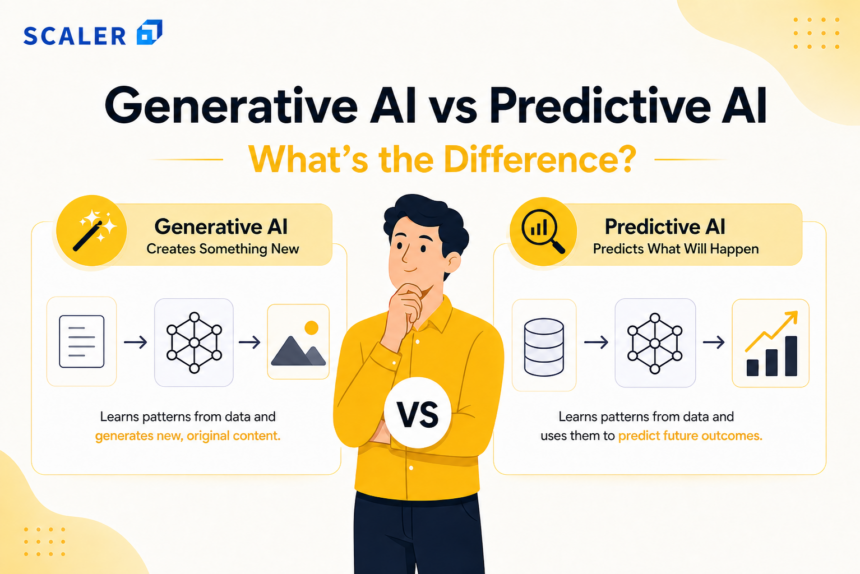

Generative AI creates new, original content like text, code, or images based on learned data patterns. Conversely, predictive AI analyzes historical data to forecast future outcomes, classify information, or identify trends. While generative models synthesize novel outputs, predictive models extrapolate and categorize existing information.

Introduction to Modern Artificial Intelligence Paradigms

In the rapidly evolving landscape of artificial intelligence, software engineers and data scientists are continually tasked with selecting the right machine learning architecture to solve specific business problems. The modern AI ecosystem has largely bifurcated into two primary paradigms based on statistical objectives: generating novel data and predicting outcomes from existing data. Understanding the technical distinctions between these two methodologies is critical for designing scalable, efficient, and cost-effective machine learning pipelines.

Historically, artificial intelligence in the enterprise was synonymous with predictive modeling. The industry focused on optimizing discriminative functions that map high-dimensional input vectors to discrete or continuous output variables. Today, the rise of foundation models has introduced generative architectures capable of modeling complex, high-dimensional probability distributions. To architect effective solutions, engineers need to look beyond high-level definitions and understand the underlying mathematics, algorithmic structures, and computational requirements that separate these two branches of artificial intelligence.

What is Predictive AI?

Predictive AI represents a class of machine learning systems designed to forecast future events, classify data points, or identify underlying patterns based on historical data inputs. From a mathematical perspective, predictive AI relies on discriminative modeling. The objective is to learn the conditional probability distribution P(Y|X)—the probability of a specific target output (Y) given a set of input features (X).

This approach forms the backbone of traditional machine learning and statistical modeling. Rather than attempting to understand how the underlying data was generated, predictive AI draws boundary lines in high-dimensional space to separate different classes (classification) or fits a mathematical function to minimize the error between predicted and actual values (regression). Predictive models are heavily optimized for accuracy, low-latency inference, and computational efficiency, making them the standard choice for risk assessment, algorithmic trading, recommendation engines, and anomaly detection.
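To make P(Y|X) concrete, the sketch below (assuming scikit-learn is installed) fits a logistic regression to a hypothetical one-feature dataset, support tickets versus churn, and reads estimates of P(Y|X) from `predict_proba`. The data is invented purely for illustration.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Hypothetical toy data: X = number of support tickets, y = churned (1) or not (0)
X = np.array([[0], [0], [1], [1], [2], [3], [4], [5]])
y = np.array([0, 0, 0, 0, 1, 1, 1, 1])

# A discriminative model learns P(Y | X) directly, without modeling how X is generated
clf = LogisticRegression().fit(X, y)

# predict_proba returns one row per input: [P(Y=0 | x), P(Y=1 | x)]
X_new = np.array([[0], [5]])
probs = clf.predict_proba(X_new)
for x, (p0, p1) in zip(X_new.ravel(), probs):
    print(f"tickets={x}: P(churn=1 | x) = {p1:.2f}")
```

The model never learns what typical ticket counts look like; it only learns how the churn probability changes as the ticket count changes.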


Core Mechanics and Algorithms

Predictive AI utilizes a variety of supervised, unsupervised, and semi-supervised algorithms. The most prominent include:

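- Linear and Logistic Regression: The foundational algorithms for predicting continuous values and binary classifications, respectively. Linear regression finds weights $w_1, \dots, w_n$ and a bias $b$ that minimize the squared prediction error of the model $y = w_1x_1 + w_2x_2 + \dots + w_nx_n + b$; logistic regression passes the same linear combination through a sigmoid function to output a class probability.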

- Decision Trees and Ensemble Methods: Ensemble methods combine many decision trees into a stronger model. Random Forest averages trees trained on bootstrapped samples of the data, while Gradient Boosting (e.g., XGBoost, LightGBM) adds shallow "weak learner" trees sequentially, each correcting the errors of the ensemble so far. These tree-based models excel at handling tabular data, non-linear relationships, and feature interactions without requiring extensive data scaling.

- Support Vector Machines (SVM): SVMs find the hyperplane that maximizes the margin between classes. With kernel functions, they can do this in an implicit high-dimensional feature space, which lets them separate data that is not linearly separable in its original form.

- Discriminative Neural Networks: Multi-Layer Perceptrons (MLPs) and Convolutional Neural Networks (CNNs) are used for complex predictive tasks. For classification, the final layer typically employs a Softmax function to output a probability distribution across discrete classes.
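The linear regression equation above can be fitted with ordinary least squares. The NumPy-only sketch below generates hypothetical, noise-free data from known weights and recovers $w$ and $b$ with `np.linalg.lstsq`, appending a column of ones so the bias is learned as one more weight.

```python
import numpy as np

# Hypothetical "true" parameters used to generate synthetic data
true_w = np.array([2.0, -1.0, 0.5])
true_b = 3.0

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))   # 100 samples, n = 3 features
y = X @ true_w + true_b          # y = w1*x1 + w2*x2 + w3*x3 + b (no noise)

# Append a column of ones so the bias b is estimated as an extra weight
X_aug = np.hstack([X, np.ones((X.shape[0], 1))])

# Ordinary least squares: minimize ||X_aug @ theta - y||^2
theta, *_ = np.linalg.lstsq(X_aug, y, rcond=None)
w_hat, b_hat = theta[:-1], theta[-1]
print("weights:", np.round(w_hat, 3), "bias:", round(b_hat, 3))
```

With real, noisy data the recovered weights would only approximate the true ones; scikit-learn's `LinearRegression` wraps the same least-squares idea.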

Code Example: Predictive AI Implementation

Below is a Python implementation utilizing Scikit-Learn to build a predictive Random Forest Classifier. This model analyzes structured data to predict a binary outcome.

```python
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score, classification_report

# 1. Load a hypothetical structured dataset (far too small for a real model; illustration only)
# Features (X): user age, account tenure, login frequency, support tickets
# Target (y): will the user churn next month? (1 = Yes, 0 = No)
data = {
    'age': [25, 45, 31, 52, 23, 40],
    'tenure_months': [12, 48, 6, 60, 2, 24],
    'logins_per_week': [5, 2, 7, 1, 14, 3],
    'support_tickets': [0, 2, 0, 3, 1, 0],
    'churn': [0, 1, 0, 1, 0, 0],
}
df = pd.DataFrame(data)
X = df.drop('churn', axis=1)
y = df['churn']

# 2. Split data into training and testing sets
# stratify=y keeps both classes present in the train and test splits of this tiny dataset
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42, stratify=y
)

# 3. Initialize and train the predictive model
model = RandomForestClassifier(n_estimators=100, max_depth=5, random_state=42)
model.fit(X_train, y_train)

# 4. Predict outcomes on unseen data
predictions = model.predict(X_test)

# 5. Evaluate the model (zero_division=0 avoids warnings when a class is never predicted)
print(f"Accuracy: {accuracy_score(y_test, predictions):.2f}")
print("Classification Report:")
print(classification_report(y_test, predictions, zero_division=0))
```

Benefits and Common Use Cases

Predictive AI is deeply embedded in modern software infrastructure due to its reliability and low computational overhead during inference.

- Predictive Maintenance: Analyzing IoT sensor data (vibration, temperature) to forecast machinery failures before they occur.

- Fraud Detection: Real-time evaluation of transaction metadata to assign a probability score for fraudulent activity.

- Demand Forecasting: Utilizing time-series forecasting (e.g., ARIMA or LSTMs) to predict inventory requirements based on historical sales data, seasonality, and market variables.

- Algorithmic Trading: Processing market signals to predict price movements and execute high-frequency trades.

What is Generative AI?

Generative AI refers to machine learning architectures designed to synthesize new, original data that reflects the statistical properties of the training dataset. Unlike predictive models that learn the boundary between classes, generative AI relies on generative modeling. The mathematical objective is to learn the joint probability distribution P(X, Y) or the distribution of the data itself, P(X). Once the model has approximated this distribution, it can sample from it to create new instances of data (text, images, audio, or code) that resemble the real data, in the best cases closely enough to be hard to tell apart from it.

The evolution of generative AI is largely attributed to advancements in deep learning, specifically the use of self-supervised learning on massive, unstructured datasets. Many of these models map input data to a latent space, a compressed mathematical representation of the data's underlying features. By sampling from or interpolating within this latent space and applying decoding mechanisms, generative AI can produce complex, high-dimensional outputs. This requires significantly more computational power, memory bandwidth, and architectural complexity than standard predictive tasks.
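As a deliberately tiny illustration of "learn a distribution, then sample from it", the sketch below fits a character-level bigram model, estimating P(next character | current character) from counts over a hypothetical toy corpus, and then samples new strings. It is not how Transformers, GANs, or diffusion models work internally; it only shows the estimate-then-sample loop. Autoregressive LLMs factor P(X) the same way, as a product of next-token probabilities, but estimate them with a neural network instead of a count table.

```python
import random
from collections import Counter, defaultdict

# Hypothetical toy corpus; "^" marks the start and "$" the end of each word
corpus = ["data", "model", "modal", "metal", "medal", "dial"]

# 1. Learn: count transitions to estimate P(next_char | current_char)
counts = defaultdict(Counter)
for word in corpus:
    chars = ["^"] + list(word) + ["$"]
    for cur, nxt in zip(chars, chars[1:]):
        counts[cur][nxt] += 1

# 2. Generate: repeatedly sample the next character from the learned distribution
def sample_word(rng, max_len=12):
    cur, out = "^", []
    while len(out) < max_len:
        options = counts[cur]
        cur = rng.choices(list(options), weights=list(options.values()))[0]
        if cur == "$":
            break
        out.append(cur)
    return "".join(out)

rng = random.Random(7)
samples = [sample_word(rng) for _ in range(5)]
# Strings that follow the corpus's letter patterns; some may be novel, some may repeat training words
print(samples)
```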


Core Mechanics and Algorithms

Generative AI is powered by specialized deep learning architectures capable of handling sequential and high-dimensional synthesis:

- Transformer Networks: The architecture behind Large Language Models (LLMs) like GPT-4 and Llama. Transformers use the self-attention mechanism to process all positions of a sequence in parallel during training, capturing contextual relationships between elements (tokens) across long distances. The core operation, scaled dot-product attention, is defined as $\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^\top}{\sqrt{d_k}}\right)V$, where the Query ($Q$), Key ($K$), and Value ($V$) matrices are obtained by multiplying the input token representations by learned weight matrices, and $d_k$ is the dimensionality of the key vectors.

- Generative Adversarial Networks (GANs): A dual-network architecture where a Generator creates synthetic data and a Discriminator tries to distinguish it from real data. The two networks train simultaneously in a zero-sum game, optimizing a minimax loss function; ideally, training converges when the Discriminator can no longer reliably tell generated samples from real ones.

- Variational Autoencoders (VAEs): Networks that encode input data into a probabilistic distribution in the latent space, and then decode samples from this distribution back into the original data format, allowing for controlled generation.

- Diffusion Models: Models that learn to generate data by gradually reversing a stochastic noise process. They start with pure Gaussian noise and iteratively denoise it to synthesize high-quality images or audio, heavily utilized by systems like Midjourney and Stable Diffusion.
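The scaled dot-product attention formula from the Transformer entry can be written in a few lines of NumPy. This is a minimal single-head sketch without masking or batching; the random input `X` and the projection matrices `W_q`, `W_k`, `W_v` are hypothetical stand-ins for learned weights.

```python
import numpy as np

def softmax(z, axis=-1):
    # Subtract the row max for numerical stability before exponentiating
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)     # (seq_len, seq_len) similarity scores
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
seq_len, d_model, d_k = 4, 8, 8
X = rng.normal(size=(seq_len, d_model))  # hypothetical token embeddings

# Q, K, V are projections of the input through (here random) weight matrices
W_q, W_k, W_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))
Q, K, V = X @ W_q, X @ W_k, X @ W_v

output, attn = scaled_dot_product_attention(Q, K, V)
print(output.shape)  # (4, 8)
```

Each output row is a weighted average of the value vectors, with weights determined by how strongly that token's query matches every key.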

Code Example: Generative AI Implementation

Below is a conceptual Python snippet utilizing the transformers library by Hugging Face to implement a generative AI model for text synthesis.

```python
from transformers import pipeline

# 1. Initialize a text-generation pipeline using a pre-trained Transformer model
# We are using GPT-2 as a lightweight example of a generative architecture
generator = pipeline('text-generation', model='gpt2')

# 2. Provide an initial prompt (the context for the generative model)
prompt = "The future of artificial intelligence in software engineering will"

# 3. Generate novel text by sampling from the learned next-token distribution
# max_length caps the total length in tokens (prompt included);
# num_return_sequences controls how many variations to generate
generated_outputs = generator(
    prompt,
    max_length=50,
    num_return_sequences=1,
    do_sample=True,   # sampling must be enabled for temperature to have an effect
    temperature=0.7,  # lower values make output more focused, higher values more varied
    truncation=True,
)

# 4. Output the synthesized text
print("Generated Output:")
for output in generated_outputs:
    print(output['generated_text'])
```

Benefits and Common Use Cases

Generative AI excels in scenarios requiring creativity, natural language understanding, and content generation.

- Code Generation and Assistance: Tools like GitHub Copilot and Gemini Code Assist use LLMs to synthesize boilerplate code, write unit tests, and assist in refactoring based on natural language prompts.

- Content Creation: Generating marketing copy, technical documentation, and conversational agents that can hold convincingly human-like conversations in limited contexts.

- Synthetic Data Generation: Creating realistic but entirely synthetic datasets (e.g., medical records, financial histories) to train other machine learning models while reducing exposure of real personal data (compliance still has to be verified, since a generator can memorize and leak training records).

- Drug Discovery: Generating novel molecular structures that fit specific biological targets by exploring the latent space of known chemical compounds.

Generative AI vs Predictive AI: A Comprehensive Technical Comparison

When comparing generative AI and predictive AI, it is crucial to recognize that the core divergence lies in their objective functions and data representations.

Predictive AI seeks to compress information down to a single decision, probability, or value. It is reductive by nature. Generative AI, conversely, seeks to expand upon a prompt or random noise vector to produce complex, multi-dimensional data structures. It is expansive by nature.

Below is a strict technical comparison across various architectural and operational parameters.

| Technical Parameter | Predictive AI | Generative AI |
|---|---|---|
| Core Objective | Decision making, forecasting, and classification. | Synthesis and creation of novel data instances. |
| Mathematical Foundation | Discriminative modeling: learns P(Y\|X) (conditional probability). | Generative modeling: learns P(X, Y) or P(X) (joint or data distribution). |
| Input Data Types | Primarily structured, tabular data, or pre-processed vectorized features. | Primarily unstructured data (raw text, images, audio, video). |
| Model Architecture | Random Forests, XGBoost, SVMs, MLPs, Logistic Regression. | Transformers, GANs, Diffusion Models, VAEs. |
| Output Modality | Scalars, discrete class labels, or probability scores (low dimensionality). | Sequences of text tokens, pixel arrays, audio waveforms (high dimensionality). |
| Loss Functions | Mean Squared Error (MSE), Cross-Entropy Loss, Hinge Loss. | Next-token cross-entropy (LLMs), reconstruction loss plus KL divergence (VAEs), adversarial minimax loss (GANs), noise-prediction MSE (diffusion models). |
| Evaluation Metrics | Accuracy, Precision, Recall, F1-Score, RMSE, ROC-AUC. | BLEU, ROUGE, Fréchet Inception Distance (FID), Perplexity. |
| Computational Cost (Inference) | Typically low. Often deployable on edge devices and standard CPUs. | Typically high. Large models usually require specialized hardware (GPUs/TPUs) and high memory bandwidth. |

Data Processing and Objectives

Supervised predictive AI requires labeled, carefully pre-processed datasets. For classical models such as gradient-boosted trees, success often hinges on feature engineering: the process by which domain experts select and transform data variables to make them more informative for the algorithm.

Generative AI bypasses traditional feature engineering by leveraging deep neural networks capable of automatic feature extraction. These models are typically pre-trained on massive corpora of unstructured, unlabeled data using self-supervised learning techniques (like next-token prediction or masked language modeling). The objective is broad representation learning rather than narrow task optimization.
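To make the contrast concrete, the pandas sketch below derives the kind of hand-crafted features a practitioner might add to the churn dataset used earlier in this article. The derived columns (`tickets_per_year`, `is_new_user`, `low_engagement`) and their thresholds are hypothetical choices, not a recommended recipe; a pre-trained generative model would instead learn its own internal representations from raw data.

```python
import pandas as pd

# Raw columns from the hypothetical churn dataset
df = pd.DataFrame({
    "tenure_months": [12, 48, 6, 60, 2, 24],
    "logins_per_week": [5, 2, 7, 1, 14, 3],
    "support_tickets": [0, 2, 0, 3, 1, 0],
})

# Domain-driven features: a rate and two flags a tree model might find easier to split on
df["tickets_per_year"] = df["support_tickets"] / (df["tenure_months"] / 12)
df["is_new_user"] = (df["tenure_months"] < 6).astype(int)         # hypothetical threshold
df["low_engagement"] = (df["logins_per_week"] <= 2).astype(int)   # hypothetical threshold

print(df)
```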

Computational Footprint and Deployment

Deploying predictive AI in production is typically lightweight. A trained XGBoost model might be only a few megabytes in size and can run inference in microseconds on a standard CPU, making it well suited to real-time systems like credit card fraud detection.

Generative AI introduces major infrastructure challenges. Serving a model like Llama 3 70B requires roughly 140 GB of GPU memory at FP16 just to hold the model weights, before accounting for the KV cache and activations. Each generated token requires a forward pass through the entire network, so latency grows with output length, and large models are often sharded across multiple GPUs. Software engineers use techniques like quantization (reducing weight precision from FP16 to INT8 or INT4) and optimized inference engines (like vLLM or TensorRT-LLM) to make generative AI economically viable in production.
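Weight memory follows from simple arithmetic: parameter count times bytes per parameter. The helper below is a back-of-the-envelope sketch; it ignores the KV cache, activations, and framework overhead, all of which add to the real footprint.

```python
# Bytes needed to store one parameter at common precisions
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}

def weight_memory_gb(num_params, precision):
    """Approximate memory (GB, 1 GB = 1e9 bytes) to hold the model weights only."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

params_70b = 70e9
for precision in ("fp16", "int8", "int4"):
    print(f"{precision}: ~{weight_memory_gb(params_70b, precision):.0f} GB")
# fp16: ~140 GB
# int8: ~70 GB
# int4: ~35 GB
```

This is why INT4 quantization can move a 70B-parameter model from a multi-GPU deployment toward a single high-memory GPU, at some cost in output quality.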

What is the Difference Between Generative AI and Predictive AI in Practice?

To understand the difference between generative AI and predictive AI in practice, we must examine how they function within a modern software architecture. Consider an enterprise e-commerce platform that wishes to maximize customer retention and sales. Both forms of AI can be deployed, but they handle completely different parts of the user journey.

The Predictive AI Implementation:

The engineering team trains a gradient boosting model on historical user data (click-through rates, purchase history, time spent on page). When a user logs in, the predictive AI runs an inference. The model outputs a probability score: User A has an 85% probability of purchasing a mechanical keyboard in the next 7 days. Furthermore, a recommendation engine (another predictive model utilizing collaborative filtering) predicts which three specific keyboards the user is most likely to click on. The predictive AI has successfully reduced the massive product catalog down to a few highly probable discrete choices.
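The collaborative-filtering step can be sketched as item-item cosine similarity over a user-item interaction matrix. Everything here is hypothetical: the 4×5 click matrix, the product indices, and the choice of item-based rather than user-based filtering. Production recommenders typically use learned embeddings or matrix factorization at far larger scale.

```python
import numpy as np

# Hypothetical implicit-feedback matrix: rows = users, columns = keyboard products
# 1 means the user clicked or purchased that product
interactions = np.array([
    [1, 1, 0, 0, 1],
    [1, 0, 1, 0, 1],
    [0, 1, 1, 1, 0],
    [1, 1, 0, 1, 0],
], dtype=float)

# Item-item cosine similarity between product columns
norms = np.linalg.norm(interactions, axis=0)
item_sim = (interactions.T @ interactions) / np.outer(norms, norms)

def recommend(user_idx, k=3):
    seen = interactions[user_idx]
    # Score each item by its total similarity to the items this user already interacted with
    scores = item_sim @ seen
    ranked = np.argsort(-scores)  # highest score first
    # Only recommend products the user has not interacted with yet
    return [int(i) for i in ranked if seen[i] == 0][:k]

print(recommend(0))  # product indices, most similar first
```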

The Generative AI Implementation:

Knowing the user wants a mechanical keyboard is not enough; the platform needs to convert the sale. The engineering team passes the predictive model’s output (the user’s intent and product IDs) to an LLM via an API. The generative AI model utilizes this context to instantly write a highly personalized, dynamic email: “Hi Alex, noticed you’ve been eyeing tactile switches! We just restocked the Keychron Q1, and we know you prefer hot-swappable boards…” Furthermore, a generative diffusion model creates a custom thumbnail image blending the keyboard with a background that matches the user’s previously expressed aesthetic preferences.
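The hand-off between the two models is mostly plumbing: the predictive output becomes structured context inside a prompt. A minimal sketch follows; the `PredictiveOutput` fields, the placeholder product names, the 0.5 threshold, and the prompt wording are all hypothetical, and the actual LLM call is omitted because it depends on the provider's API.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class PredictiveOutput:
    # Hypothetical fields produced by the upstream predictive models
    user_name: str
    purchase_probability: float
    recommended_products: List[str] = field(default_factory=list)

def build_email_prompt(pred: PredictiveOutput, threshold: float = 0.5) -> Optional[str]:
    """Turn predictive scores into an LLM prompt; skip low-intent users entirely."""
    if pred.purchase_probability < threshold:
        return None  # not worth spending generative-model compute on this user
    products = ", ".join(pred.recommended_products)
    return (
        f"Write a short, friendly marketing email to {pred.user_name}. "
        "They are likely interested in mechanical keyboards. "
        f"Mention these products by name: {products}. "
        "Do not invent prices or stock levels."
    )

prompt = build_email_prompt(
    PredictiveOutput("Alex", 0.85, ["Keychron Q1", "Product B", "Product C"])
)
print(prompt)
```

Gating on the predictive score keeps the expensive generative call limited to users where it is likely to pay off.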

In practice, predictive AI navigates the “who, what, and when” by analyzing the past, while generative AI handles the “how to communicate and create” by synthesizing novel assets in the present.

Limitations and Risks

Understanding the limitations of both paradigms is essential for robust system design.

Risks of Predictive AI

- Concept Drift: Predictive models assume that the future will resemble the past. If the relationship between inputs and outcomes changes over time (e.g., sudden shifts in consumer behavior due to a global event), the model's accuracy can degrade quickly, requiring monitoring and retraining.

- Oversimplification: By forcing high-dimensional data into discrete categories, predictive AI can strip away important contextual nuance.

- Bias Amplification: If the historical training data contains human biases, the predictive algorithm will learn and automate those biases, leading to skewed classifications in areas like loan approval or resume screening.
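Drift is usually caught by monitoring. One common heuristic is the Population Stability Index (PSI), which compares a feature's (or a model score's) distribution at training time with live traffic. Strictly, PSI detects input distribution shift, which is often an early warning of concept drift rather than proof of it. The NumPy sketch below bins by the baseline's deciles and uses synthetic data; the often-quoted rule of thumb that PSI above about 0.2 signals significant shift is a convention, not a guarantee.

```python
import numpy as np

def population_stability_index(baseline, current, n_bins=10, eps=1e-6):
    """PSI between two 1-D samples, with bins cut at baseline quantiles."""
    # Interior cut points from baseline deciles; values outside the range land in the end bins
    cuts = np.quantile(baseline, np.linspace(0, 1, n_bins + 1)[1:-1])
    base_frac = np.bincount(np.digitize(baseline, cuts), minlength=n_bins) / len(baseline)
    curr_frac = np.bincount(np.digitize(current, cuts), minlength=n_bins) / len(current)
    # eps avoids log(0) when a bin is empty
    base_frac = np.clip(base_frac, eps, None)
    curr_frac = np.clip(curr_frac, eps, None)
    return float(np.sum((curr_frac - base_frac) * np.log(curr_frac / base_frac)))

rng = np.random.default_rng(42)
train_logins = rng.normal(loc=5.0, scale=2.0, size=5000)    # training-time feature
stable_logins = rng.normal(loc=5.0, scale=2.0, size=5000)   # live data, same distribution
shifted_logins = rng.normal(loc=8.0, scale=2.0, size=5000)  # live data after a behavior shift

stable = population_stability_index(train_logins, stable_logins)
shifted = population_stability_index(train_logins, shifted_logins)
print(f"PSI (stable):  {stable:.3f}")
print(f"PSI (shifted): {shifted:.3f}")
```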

Risks of Generative AI

- Hallucinations: Because generative models operate probabilistically, they can output plausible-sounding but factually incorrect information. An LLM predicting the next most likely token does not inherently possess a mechanism for factual verification.

- Non-Determinism: In software engineering, deterministic behavior (the same input always produces the same output) is highly valued. Generative AI is typically stochastic: when sampling is enabled, asking a model the same question twice can yield different results, which complicates unit testing and automated QA processes.

- Data Privacy and IP Constraints: Generative models learn statistical representations of their training data and can sometimes memorize specific examples, so there is a risk of them regurgitating copyrighted material or sensitive personal data that was inadvertently included in the training corpus.
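The non-determinism comes from the decoding step rather than the network weights. The NumPy sketch below uses a hypothetical vector of next-token logits: greedy decoding (always taking the argmax) is repeatable, while temperature sampling varies between calls unless the random seed is fixed. Real serving stacks can still show small run-to-run differences even with greedy decoding, for example from floating-point effects in batched GPU kernels.

```python
import numpy as np

tokens = ["fast", "scalable", "secure", "expensive"]
logits = np.array([2.0, 1.5, 1.0, 0.2])  # hypothetical next-token scores

def next_token_probs(logits, temperature):
    # Dividing by temperature sharpens (<1) or flattens (>1) the distribution
    z = logits / temperature
    z = z - z.max()
    p = np.exp(z)
    return p / p.sum()

def greedy(logits):
    return tokens[int(np.argmax(logits))]

def sample(logits, temperature, rng):
    p = next_token_probs(logits, temperature)
    return tokens[rng.choice(len(tokens), p=p)]

print([greedy(logits) for _ in range(5)])       # always the same token
rng = np.random.default_rng()                   # unseeded: differs across runs
print([sample(logits, 0.7, rng) for _ in range(5)])
seeded = [sample(logits, 0.7, np.random.default_rng(123)) for _ in range(3)]
print(seeded)  # a fresh generator with a fixed seed reproduces the same draw
```

For tests, pinning the seed (or using greedy decoding) is the usual way to make generative outputs reproducible enough to assert on.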

How Generative AI and Predictive AI Work Together

The most advanced enterprise architectures today do not choose between these paradigms; they combine them into hybrid pipelines. By chaining predictive and generative models together, engineers can offset the weaknesses of one with the strengths of the other.

Retrieval-Augmented Generation (RAG)

One of the most prominent hybrid architectures is Retrieval-Augmented Generation (RAG). RAG mitigates the generative AI problem of hallucinations by grounding the model in relevant data retrieved by a predictive (embedding and retrieval) model.

- Predictive Phase (Retrieval): A user submits a query. A predictive model converts the query into a high-dimensional vector embedding. It then performs a similarity search against a vector database (predicting which stored documents most closely match the user’s semantic intent).

- Generative Phase (Generation): The retrieved documents are appended to the user's original prompt. The generative LLM then reads the prompt and the retrieved context, synthesizing a natural language answer grounded in that context. The answer can only be as reliable as the retrieved documents, so retrieval quality directly limits answer quality.
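The retrieval phase boils down to nearest-neighbor search over embeddings, followed by prompt assembly. In the sketch below, the 3-dimensional document and query vectors are hypothetical stand-ins for the output of a real embedding model (which would produce hundreds of dimensions), and a brute-force cosine-similarity scan replaces the vector database.

```python
import numpy as np

documents = [
    "Refunds are processed within 5 business days.",
    "Our keyboards ship with hot-swappable switches.",
    "Support is available 24/7 via live chat.",
]
# Hypothetical embeddings; a real system would call an embedding model here
doc_vecs = np.array([
    [0.9, 0.1, 0.0],
    [0.1, 0.9, 0.2],
    [0.0, 0.2, 0.9],
])
query = "How long does a refund take?"
query_vec = np.array([0.8, 0.2, 0.1])  # hypothetical embedding of the query

def top_k(query_vec, doc_vecs, k=1):
    # Cosine similarity = dot product of L2-normalized vectors
    d = doc_vecs / np.linalg.norm(doc_vecs, axis=1, keepdims=True)
    q = query_vec / np.linalg.norm(query_vec)
    sims = d @ q
    return np.argsort(-sims)[:k]

# Generative phase input: retrieved context prepended to the user's question
context = "\n".join(documents[i] for i in top_k(query_vec, doc_vecs, k=1))
augmented_prompt = (
    "Answer using only the context below.\n"
    f"Context:\n{context}\n\n"
    f"Question: {query}"
)
print(augmented_prompt)
```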

Intelligent Autonomous Agents

AI agents use predictive AI for state evaluation and routing, while using generative AI for execution. For example, an automated customer support agent receives an angry email. A predictive NLP model (like a fine-tuned BERT) classifies the sentiment of the email as “Negative” and predicts the intent as “Refund Request”. It routes the ticket to the refund logic flow. A generative AI model is then triggered to write a polite, empathetic, and customized apology email to the customer, incorporating the specific details of their purchase.
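The predict-then-generate split can be sketched as a classifier feeding a router. To keep it self-contained, this sketch swaps the fine-tuned BERT for a much lighter scikit-learn intent classifier (TF-IDF features plus logistic regression) trained on a handful of invented examples; the handler functions are hypothetical placeholders for where a generative model would be prompted.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline

# Hypothetical labeled examples for intent classification
texts = [
    "I want a refund for my order",
    "please refund my money",
    "refund request for a damaged item",
    "where is my package",
    "track my shipment",
    "my delivery has not arrived yet",
]
intents = ["refund", "refund", "refund", "shipping", "shipping", "shipping"]

# Predictive step: a lightweight discriminative classifier
intent_clf = make_pipeline(TfidfVectorizer(), LogisticRegression(C=10.0))
intent_clf.fit(texts, intents)

# Hypothetical handlers; in production these would prompt a generative model
def handle_refund(email):
    return f"[refund flow] draft apology and refund steps for: {email!r}"

def handle_shipping(email):
    return f"[shipping flow] draft tracking update for: {email!r}"

ROUTES = {"refund": handle_refund, "shipping": handle_shipping}

def route(email):
    intent = intent_clf.predict([email])[0]
    return intent, ROUTES[intent](email)

print(route("I need a refund for this damaged item"))
```

The cheap classifier decides which workflow runs; the expensive generative model is only invoked once the route is known.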

Choosing Between Predictive AI and Generative AI for Your Tech Stack

When designing a new feature, software engineers can use a simple decision checklist based on the business requirements, computational budget, and required output.

Choose Predictive AI when:

- The objective is to make a binary decision (Yes/No, Spam/Not Spam).

- You need to forecast numerical values (stock prices, inventory volume).

- The application requires microsecond latency and high throughput.

- Compute resources are limited, or the model must run locally on edge devices/mobile phones.

- Interpretability and explainability of the model’s decision-making process are strictly required for regulatory compliance.

Choose Generative AI when:

- The objective is to create entirely new digital assets (code, text, images, video).

- The application involves complex natural language interactions, summarization, or translation.

- The system must adapt to unstructured prompts rather than rigid tabular inputs.

- The computational budget allows for GPU allocation or API calls to managed foundation models.

- You are operating in creative, exploratory, or augmentation domains where minor variations in output are acceptable or desired.

Frequently Asked Questions (FAQ)

Q: Can predictive AI be considered a subset of generative AI?

No. They are fundamentally different statistical approaches. Predictive AI models conditional probability to classify or forecast (discriminative modeling), while generative AI models joint probability to synthesize new data. They are distinct branches under the broader umbrella of Machine Learning.

Q: Which type of AI requires more computational power?

Generative AI typically requires orders of magnitude more computational power. Training a state-of-the-art predictive model might take hours or days on standard hardware, whereas training a frontier-scale generative model can require thousands of GPUs running for weeks or months. Inference is also costlier: generating long, high-dimensional outputs demands substantial memory bandwidth.

Q: Can I use Generative AI for predictive tasks?

Yes, but it is generally highly inefficient. You can prompt an LLM to act as a classifier (e.g., “Read this text and output whether it is positive or negative”). However, using a 70-billion parameter generative model for a simple binary classification is computationally wasteful, slower, and often less accurate on niche data than a lightweight predictive model (like logistic regression) trained specifically for that task.

Q: How do evaluation metrics differ between the two?

Predictive AI relies on well-defined, objective metrics. If a model predicts "True" and the actual label is "False", the error is unambiguous and measurable via Accuracy, Precision, Recall, and F1-score. Generative AI evaluation is far harder. Judging whether a generated summary is "good" or an image is "realistic" relies on automated proxy metrics such as BLEU (n-gram overlap for translation), ROUGE (overlap-based recall for summarization), or FID (for images), or on costly human evaluations.
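Overlap metrics like ROUGE are easy to compute but only approximate quality. Below is a simplified ROUGE-1 recall (the fraction of reference unigrams that also appear in the candidate, with clipped counts) in plain Python with whitespace tokenization; official ROUGE implementations add stemming, tokenization rules, and precision/F-measure variants. The example sentences are invented.

```python
from collections import Counter

def rouge1_recall(candidate: str, reference: str) -> float:
    """Simplified ROUGE-1 recall: clipped unigram overlap / reference length."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum(min(count, cand[word]) for word, count in ref.items())
    return overlap / max(sum(ref.values()), 1)

reference = "the model predicts customer churn from usage data"
paraphrase = "customer churn is forecast from usage data by the model"
scrambled = "data usage from churn customer predicts model the"

print(rouge1_recall(paraphrase, reference))  # 0.875 (7 of 8 reference words)
print(rouge1_recall(scrambled, reference))   # 1.0, despite being word salad
```

The scrambled sentence scores perfectly because unigram overlap ignores word order, which is exactly why such metrics are treated as rough proxies rather than ground truth.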