Career Essentials in Generative AI: Skills, Courses & Opportunities

The career essentials in generative AI encompass a multi-faceted skill set combining deep learning fundamentals, proficiency with advanced ML frameworks, and a nuanced understanding of model architectures like Transformers. Professionals must master prompt engineering, model fine-tuning, and the MLOps lifecycle to build, deploy, and maintain robust AI systems.

Generative Artificial Intelligence has transcended its status as a niche academic pursuit to become a transformative force across industries. From code generation and content creation to drug discovery and complex system design, the capabilities of models like GPT-4, DALL-E 3, and Stable Diffusion are redefining professional roles and driving strong demand, along with competitive salaries, for specialized talent. For software engineers and computer science students, navigating this new landscape requires a strategic approach to skill acquisition. This guide provides a roadmap to the core competencies, learning pathways, and career trajectories that define a successful career in this dynamic field.

The Foundational Pillars of Generative AI

Before engaging with high-level frameworks and APIs, a robust understanding of the underlying principles is non-negotiable for any serious practitioner. Generative AI is not magic; it is a product of decades of research in machine learning and neural networks. A firm grasp of these fundamentals is what separates a model user from a model builder and innovator. It enables professionals to diagnose model failures, optimize performance, and adapt existing architectures to novel problems, which are critical capabilities in a production environment.


Understanding Deep Learning and Neural Networks

At the heart of every generative model lies a deep neural network. A comprehensive understanding of its core mechanics is the bedrock of any AI career. This includes:

- Neural Network Architecture: A solid grasp of multi-layer perceptrons (MLPs), activation functions (e.g., ReLU, Sigmoid, Tanh), and the role of hidden layers.

- The Training Process: Deep familiarity with concepts like gradient descent, backpropagation, loss functions (e.g., cross-entropy for classification), and optimizers (e.g., Adam, SGD).

- Regularization Techniques: Knowledge of methods like Dropout and L1/L2 regularization to prevent overfitting and improve model generalization.

- Convolutional Neural Networks (CNNs): Essential for understanding the foundations of many image generation models, including concepts like kernels, pooling, and convolutional layers.

- Recurrent Neural Networks (RNNs): While largely superseded by Transformers for many NLP tasks, understanding RNNs, LSTMs, and GRUs provides crucial context for the evolution of sequence modeling.
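To make the training-process bullet concrete, here is a minimal sketch of gradient descent on a mean-squared-error loss. The toy data (points on the line y = 2x + 1), the learning rate, and the step count are illustrative assumptions, and the gradients are written out by hand rather than computed by backpropagation through a network.

```python
# Toy data generated from y = 2x + 1 (an illustrative choice, not from the article).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]

def mse_loss(w, b):
    """Mean squared error of the line y = w*x + b on the toy data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradients(w, b):
    """Hand-derived gradients of the MSE loss with respect to w and b."""
    n = len(xs)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return grad_w, grad_b

def train(learning_rate=0.05, steps=2000):
    """Plain gradient descent: repeatedly step against the gradient."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        grad_w, grad_b = gradients(w, b)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

w, b = train()
print(f"w={w:.3f}, b={b:.3f}, loss={mse_loss(w, b):.6f}")
```

The fitted parameters should land close to the slope and intercept used to generate the data. Frameworks such as PyTorch automate the gradient computation via backpropagation and swap in optimizers like Adam, but the update loop has the same shape.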

Mastery of Transformer Architectures

The introduction of the Transformer architecture in the 2017 paper “Attention Is All You Need” was a watershed moment for AI. Its parallel processing capabilities and sophisticated context handling made it the de facto standard for Large Language Models (LLMs) and, increasingly, for vision and multimodal tasks. Mastery requires understanding:

- The Self-Attention Mechanism: The core innovation of the Transformer. One must understand how the model computes Query (Q), Key (K), and Value (V) vectors to weigh the importance of different tokens in a sequence, enabling it to capture long-range dependencies.

- Encoder-Decoder Structure: Differentiating between encoder-only (e.g., BERT), decoder-only (e.g., GPT), and full encoder-decoder models (e.g., T5) and their respective use cases.

- Positional Encodings: Understanding how the model, which lacks an inherent sense of sequence, is provided with information about token order.

- Multi-Head Attention: The concept of running the attention mechanism multiple times in parallel to allow the model to focus on different aspects of the input simultaneously.
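The self-attention computation described above can be sketched in a few lines of plain Python. This is a single-head illustration under simplifying assumptions: the token embeddings are made-up numbers, the Q/K/V projection matrices are set to the identity for readability (in a trained model they are learned), and there is no masking or multi-head split.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(col) for col in zip(*m)]

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

# Three tokens with 2-dimensional embeddings (made-up numbers).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # stand-in for learned projections
output, weights = self_attention(x, identity, identity, identity)
for row in weights:
    print([round(p, 3) for p in row])
```

Each printed row is one token's attention distribution over all tokens in the sequence, so it sums to 1. Multi-head attention repeats this computation with several independent sets of projections and concatenates the results.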

Differentiating Key Generative Models

The “generative” family of models is diverse, with different architectures suited to different tasks. A skilled professional must know which tool to use for a given problem.

| Model Architecture | Core Mechanism | Primary Use Case | Key Characteristics |
|---|---|---|---|
| Generative Adversarial Networks (GANs) | A zero-sum game between a Generator network (creates samples) and a Discriminator network (tries to distinguish real from fake). | High-fidelity image generation, style transfer, and data augmentation. | Can produce highly realistic outputs but are notoriously difficult and unstable to train. |
| Variational Autoencoders (VAEs) | An Encoder maps input data to a latent space distribution, and a Decoder samples from this space to generate new data. | Image generation, anomaly detection, and learning compressed data representations. | More stable to train than GANs but can produce blurrier, less sharp images. Provides an explicit probabilistic model of the data. |
| Diffusion Models | A process of systematically adding noise to data and then training a model to reverse the process (denoising) to generate new samples. | State-of-the-art for high-quality, diverse image and audio generation (e.g., Stable Diffusion, DALL-E 2). | Computationally intensive during inference (requires many denoising steps) but offers high sample quality and diversity. |
| Autoregressive Models (Transformer-based) | Generates a sequence one element at a time, conditioning each new element on the previously generated ones. | Text generation, code completion, and conversational AI (e.g., GPT series). | Excellent at coherent, context-aware sequence generation. Inference is sequential and can be slower for very long outputs. |
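The autoregressive row of the table can be illustrated with a toy decoding loop. The probability table below is entirely hypothetical and conditions only on the previous token; a Transformer-based model would instead compute the next-token distribution from the whole prefix with a neural network. The loop structure (pick a token, append it, repeat until an end marker) is the part that carries over.

```python
# Hypothetical next-token probabilities keyed by the previous token.
# A real LLM computes these from the entire prefix, not a lookup table.
NEXT_TOKEN_PROBS = {
    "<s>": {"the": 0.9, "a": 0.1},
    "the": {"model": 0.6, "data": 0.4},
    "a": {"model": 1.0},
    "model": {"generates": 0.7, "</s>": 0.3},
    "data": {"</s>": 1.0},
    "generates": {"text": 0.8, "</s>": 0.2},
    "text": {"</s>": 1.0},
}

def generate_greedy(max_new_tokens=10):
    """Generate one token at a time, always taking the most probable next token."""
    tokens = ["<s>"]
    for _ in range(max_new_tokens):
        probs = NEXT_TOKEN_PROBS[tokens[-1]]
        next_token = max(probs, key=probs.get)  # greedy decoding
        if next_token == "</s>":                # stop at the end-of-sequence marker
            break
        tokens.append(next_token)
    return tokens[1:]  # drop the start marker

generated = generate_greedy()
print(" ".join(generated))  # prints: the model generates text
```

Because every step depends on the previous output, the loop cannot be parallelized across positions, which is why inference time grows with output length.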

Core Technical Skills for Generative AI Professionals

Theoretical knowledge must be complemented by hands-on technical proficiency. The skills generative AI professionals need today revolve around a specific ecosystem of programming languages, libraries, and operational practices designed to handle the scale and complexity of modern models.

Advanced Python and Machine Learning Libraries

Python remains the lingua franca of machine learning due to its extensive library support and ease of use.

- Core Libraries: Mastery of NumPy for numerical operations, Pandas for data manipulation, and Matplotlib/Seaborn for visualization is assumed.

- Deep Learning Frameworks: Deep proficiency in either PyTorch or TensorFlow/Keras is mandatory. PyTorch is often favored in research for its flexibility, while TensorFlow has a strong ecosystem for production deployment.

- The Hugging Face Ecosystem: This is arguably the most critical toolset. Competence with `transformers` (for accessing pre-trained models), `diffusers` (for diffusion models), `datasets` (for data handling), and `accelerate` (for distributed training) is essential.

- Orchestration and Interaction Frameworks: Knowledge of libraries like LangChain and LlamaIndex is crucial for building applications on top of LLMs, particularly for tasks involving Retrieval-Augmented Generation (RAG).


Here is a simple example demonstrating the power of the Hugging Face pipeline for a zero-shot classification task:

    from transformers import pipeline

    # Initialize the zero-shot classification pipeline
    classifier = pipeline("zero-shot-classification", model="facebook/bart-large-mnli")

    # Define the sequence and candidate labels
    sequence_to_classify = "Apple just announced the new M4 chip for its iPad Pro."
    candidate_labels = ["technology", "politics", "finance", "sports"]

    # Get the classification results
    result = classifier(sequence_to_classify, candidate_labels)
    print(result)

    # Example output (exact scores will vary):
    # {'sequence': 'Apple just announced the new M4 chip for its iPad Pro.',
    #  'labels': ['technology', 'finance', 'politics', 'sports'],
    #  'scores': [0.98..., 0.01..., 0.00..., 0.00...]}

Prompt Engineering and LLM Interaction

Interacting with foundation models is a skill in itself. Advanced prompt engineering goes beyond simple questions and involves structuring inputs to elicit precise, reliable, and structured outputs.

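- Prompting Techniques: Understanding and applying techniques like Few-Shot Prompting (providing examples in the prompt), Chain-of-Thought (CoT) prompting (eliciting intermediate reasoning steps, typically by including worked examples), and Zero-Shot CoT (adding a cue such as “think step by step” without any examples).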

- Retrieval-Augmented Generation (RAG): This is a critical pattern for building knowledge-intensive applications. It involves retrieving relevant documents from an external knowledge base (e.g., a vector database) and providing them as context to the LLM in the prompt to reduce hallucinations and use up-to-date information.

- Structured Outputs: Forcing LLMs to generate outputs in a specific format, such as JSON or XML, often through prompting techniques or by using model features like OpenAI’s “JSON mode.”
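The RAG pattern from the list above can be sketched without any external services. Everything here is a stand-in chosen for illustration: the three-sentence knowledge base is hypothetical, the "embedding" is a bag-of-words count vector rather than a neural embedding model, and the in-memory list plays the role of a vector database. The assembled prompt would then be sent to whichever LLM the application uses.

```python
import math
from collections import Counter

# Hypothetical knowledge base; in practice these would be chunks of real documents.
DOCUMENTS = [
    "LoRA fine-tuning trains small low-rank adapter matrices.",
    "Diffusion models generate images by iteratively removing noise.",
    "Vector databases store embeddings for similarity search.",
]

def embed(text):
    """Stand-in embedding: bag-of-words counts (real systems use a neural model)."""
    return Counter(text.lower().replace(".", "").replace("?", "").split())

def cosine_similarity(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(question, k=1):
    """Return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(DOCUMENTS, key=lambda d: cosine_similarity(q, embed(d)), reverse=True)
    return ranked[:k]

def build_prompt(question, k=1):
    """Place the retrieved passages in the prompt as grounding context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, k))
    return (
        "Answer using only the context below. If the answer is not there, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\n"
        "Answer:"
    )

question = "How do diffusion models generate images?"
prompt = build_prompt(question)
print(prompt)
```

The instruction to answer only from the supplied context is what ties retrieval to reduced hallucination; the retrieval step alone does not constrain the model.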

Fine-Tuning and Model Adaptation

While foundation models are powerful, they often require adaptation for specific domains or tasks to achieve optimal performance.

- Full Fine-Tuning: The process of retraining all the weights of a pre-trained model on a smaller, domain-specific dataset. This is computationally expensive but can yield the best performance.

- Parameter-Efficient Fine-Tuning (PEFT): A suite of techniques designed to reduce the computational cost of fine-tuning. Instead of updating all model parameters, PEFT methods modify only a small subset. Low-Rank Adaptation (LoRA) is a prominent example, where small, trainable “adapter” matrices are injected into the model architecture. This drastically reduces memory requirements and training time.
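A rough sketch of the arithmetic behind LoRA follows, assuming the common formulation in which a frozen weight matrix W is augmented with a scaled low-rank product, W + (alpha / r) · B · A. The matrix values, the 4096 × 4096 layer size, and the rank of 8 are illustrative assumptions, not figures from any particular model.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def lora_effective_weight(w, a, b, alpha):
    """Return W + (alpha / r) * B @ A, the weight applied in the forward pass."""
    r = len(a)                # rank = number of rows of A
    delta = matmul(b, a)      # (d_out x r) @ (r x d_in) -> d_out x d_in
    scale = alpha / r
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]

def trainable_params(d_out, d_in, r):
    """Parameters updated by full fine-tuning versus LoRA for one weight matrix."""
    return d_out * d_in, r * (d_out + d_in)

# Frozen 2x3 pre-trained weight with a rank-1 adapter (made-up values).
W = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
A = [[0.1, 0.2, 0.3]]   # r x d_in, trainable
B = [[0.0], [0.0]]      # d_out x r, zero-initialized so training starts exactly at W
assert lora_effective_weight(W, A, B, alpha=1.0) == W

full, lora = trainable_params(4096, 4096, r=8)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.4%}")
```

For this hypothetical layer the adapter trains well under one percent of the parameters that full fine-tuning would update, which is where the memory savings come from. In practice a library such as Hugging Face `peft` handles the injection into the model.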

MLOps for Generative AI

Standard MLOps principles are essential for deploying and managing generative AI systems in production. However, generative models introduce unique challenges.

- Model and Prompt Versioning: Keeping track of which model version was used with which prompt template is critical for reproducibility.

- Evaluation: Evaluating generative models is notoriously difficult. Metrics go beyond simple accuracy and include human evaluation, BLEU/ROUGE scores for text, and Fréchet Inception Distance (FID) for images.

- Scalable Inference: Deploying large models requires specialized hardware (GPUs/TPUs) and infrastructure optimized for low-latency, high-throughput inference.

- Cost Management: Inference with large models can be expensive. MLOps practices must include robust monitoring and optimization strategies like model quantization and efficient batching.
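As one concrete instance of the text-evaluation metrics mentioned above, here is a simplified sketch of ROUGE-1, which scores unigram overlap between a generated text and a reference. It assumes whitespace tokenization and lowercasing only; established evaluation packages add stemming and other normalization, so treat this as an illustration of the idea rather than a replacement for them.

```python
from collections import Counter

def rouge_1(candidate, reference):
    """Unigram-overlap precision, recall, and F1 for whitespace-tokenized text."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # shared unigrams, counts clipped to the minimum
    precision = overlap / sum(cand.values()) if cand else 0.0
    recall = overlap / sum(ref.values()) if ref else 0.0
    f1 = 2 * precision * recall / (precision + recall) if overlap else 0.0
    return precision, recall, f1

precision, recall, f1 = rouge_1("the cat lay on the mat", "the cat sat on the mat")
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

Five of the six words match in this example, so all three scores come out to 5/6. Overlap metrics like this reward surface similarity, which is why they are usually paired with human evaluation for generative systems.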

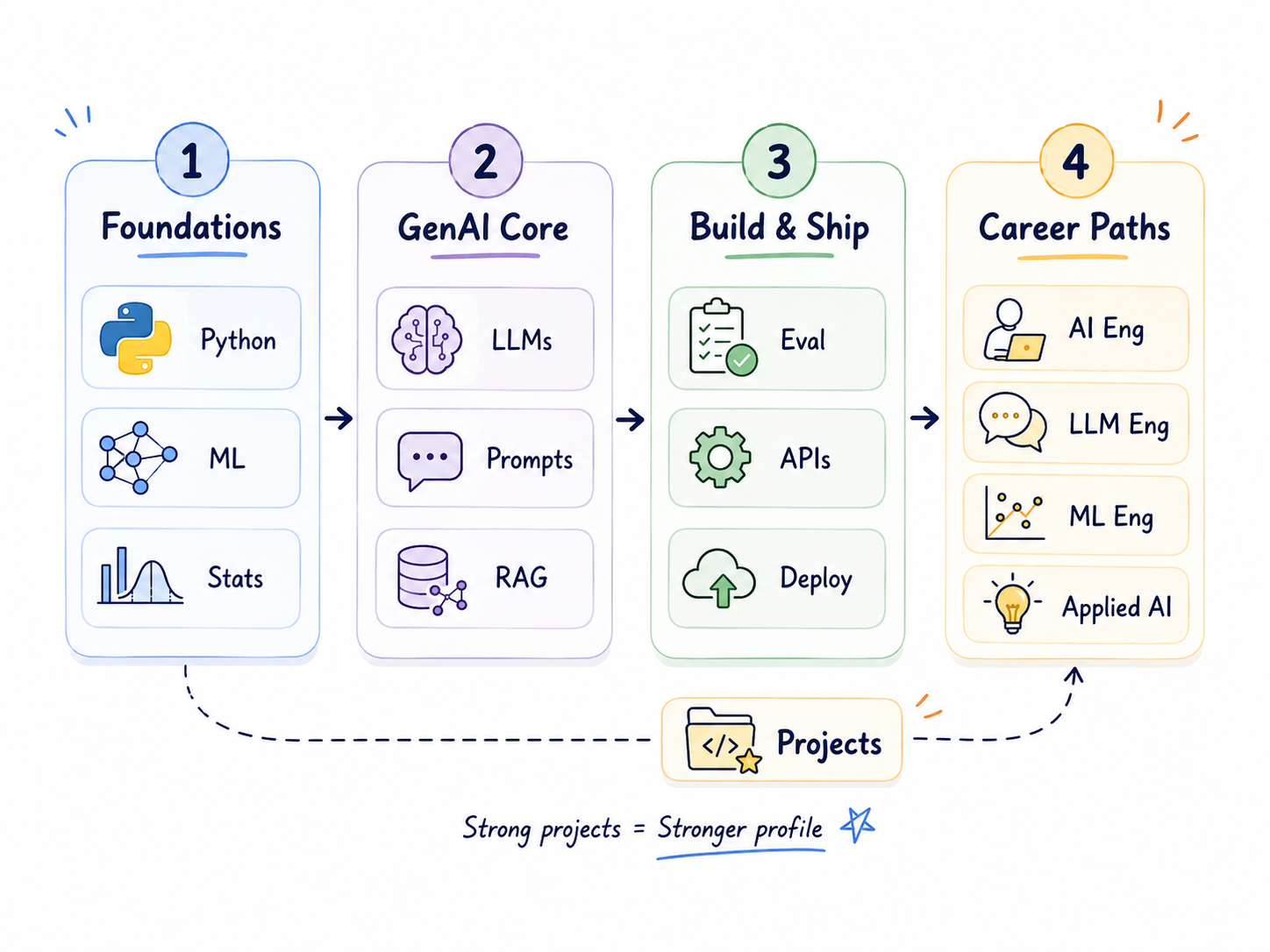

Building a Career: Strategic Learning Pathways and Certifications

Acquiring these skills requires a deliberate, structured learning plan. A combination of formal education, hands-on projects, and continuous learning is the most effective strategy for building a robust career in generative AI.

Structured Learning: Courses and Specializations

The best AI courses provide an excellent framework for learning the fundamentals and staying current with the latest advancements.

- Foundational Courses: The “Career Essentials in Generative AI by Microsoft and LinkedIn” is a suitable starting point for gaining a high-level, conceptual overview. It is designed for a broad audience and introduces key terms and impacts of the technology.

- Technical Deep Dives: For engineers, more rigorous programs are necessary. The generative AI courses from DeepLearning.AI (the education company founded by Andrew Ng), several of which are offered on Coursera, take a more technical look at the mechanics of LLMs, diffusion models, and their applications.

- University-Level Courses: For those seeking the deepest understanding, materials from courses like Stanford’s CS224n (NLP with Deep Learning) and CS236 (Deep Generative Models) are often available online and provide unparalleled depth.

The Role of Certifications

Certifications can validate your knowledge, particularly in cloud-based MLOps. While a specific “Generative AI” certificate may be less established, cloud platform certifications are highly regarded by employers.

- AWS Certified Machine Learning – Specialty: Demonstrates expertise in designing, building, and deploying ML models on AWS, including services like Amazon SageMaker.

- Google Cloud Professional Machine Learning Engineer: Validates skills in using Google Cloud services like Vertex AI to build and productionize ML systems.

- Microsoft Certified: Azure AI Engineer Associate: Focuses on implementing AI solutions using Azure AI services (formerly Azure Cognitive Services), Azure Machine Learning, and knowledge mining.

The primary value of these certifications lies in demonstrating your ability to operate within a specific production ecosystem, a crucial skill for any MLOps-focused role.

The Power of a Portfolio: Demonstrating Your Skills

In the field of software engineering, a strong portfolio of generative AI projects is often more compelling than any certificate. Practical application is the ultimate proof of competence.

- Build a RAG-Based Chatbot: Create a question-answering system over a specific set of documents (e.g., a company’s documentation or a technical textbook). This demonstrates skills in vector databases, embedding models, and prompt engineering.

- Fine-Tune a Model: Take a smaller open-source LLM (like Llama 3 8B or Mistral 7B) and fine-tune it using LoRA on a niche dataset (e.g., financial reports, legal texts, or a specific coding style).

- Develop an Image Generation Application: Use the Stable Diffusion API or an open-source model to build a simple web application that allows users to generate images from text prompts.

Host these projects on GitHub with clear documentation, a well-structured codebase, and a README explaining the problem, your approach, and the results.

Navigating Career Opportunities in the Generative AI Landscape

The demand for generative AI talent has created several specialized roles, each requiring a unique blend of the skills discussed.

Key Job Roles and Responsibilities

- AI/ML Engineer (Generative AI Focus): This is the most common role. These professionals build, fine-tune, deploy, and maintain generative models. They need strong software engineering skills, MLOps knowledge, and deep learning expertise.

- Generative AI Scientist/Researcher: Often requiring an advanced degree (Master’s or Ph.D.), these roles focus on developing novel model architectures, training algorithms, and advancing the state-of-the-art.

- Prompt Engineer: A specialized role focused on designing, testing, and refining prompts to optimize LLM performance for specific tasks. This requires a deep, intuitive understanding of model behavior.

- AI Product Manager: This role bridges the gap between technical teams and business needs, defining the product vision for AI-powered features and ensuring they align with user requirements and ethical guidelines.

Essential Soft Skills for Collaboration and Innovation

Technical prowess alone is insufficient. The most effective professionals also possess critical soft skills.

- Problem Decomposition: The ability to break down a vague business problem (“we need an AI assistant”) into a concrete technical plan (e.g., “we will implement a RAG system using a vector database and a fine-tuned LLM”).

- Ethical Judgment: A deep understanding of the ethical implications of generative AI, including bias, fairness, transparency, and the potential for misuse.

- Clear Communication: The capacity to explain complex technical concepts, model limitations, and performance trade-offs to non-technical stakeholders, such as product managers and executives.

The Future Trajectory: What’s Next in Generative AI?

The field of generative AI is evolving at an unprecedented pace. Staying relevant requires a commitment to continuous learning and an awareness of emerging trends.

Multimodality and Agentic AI

The future is multimodal. Models are increasingly being trained to understand and generate not just text, but a seamless combination of text, images, audio, and video. Concurrently, the rise of AI agents—systems that can reason, plan, and execute multi-step tasks to achieve a goal—promises to move beyond simple generation to autonomous problem-solving.

Efficiency and On-Device Deployment

A major focus of current research is on making models smaller, faster, and more efficient without sacrificing performance. Techniques like quantization (reducing the precision of model weights) and architectural innovations are enabling powerful models to run directly on edge devices like smartphones and laptops, unlocking new possibilities for privacy-preserving and low-latency applications.
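The quantization idea mentioned above can be shown with a small sketch. It assumes the simplest scheme, symmetric per-tensor int8 quantization with a single scale factor, and uses made-up weight values; production toolchains typically use per-channel or group-wise scales and calibration data.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]

weights = [0.82, -1.27, 0.003, 0.5, -0.41]  # made-up values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, f"scale={scale:.4f}", f"max_error={max_error:.5f}")
```

Each weight is stored in one byte instead of four (relative to 32-bit floats), at the cost of a rounding error bounded by half the scale. Note how the smallest weight rounds to zero: that loss of fine detail is the performance trade-off the research aims to minimize.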

Frequently Asked Questions (FAQ)

Q1: Do I need a Ph.D. to work in Generative AI?

For research scientist roles aimed at creating new model architectures, a Ph.D. is often required. However, for the vast majority of AI/ML Engineer roles focused on applying, fine-tuning, and deploying existing models, a Bachelor’s or Master’s degree in Computer Science combined with a strong project portfolio is sufficient.

Q2: What is the most important programming language for Generative AI?

Python is unequivocally the most important language due to its dominant machine learning ecosystem, including libraries like PyTorch, TensorFlow, and Hugging Face Transformers. Strong fundamentals in Python are a prerequisite for any serious practitioner.

Q3: How can I gain practical experience without a dedicated AI job?

Start with personal projects. Participate in Kaggle competitions focused on generative tasks. Contribute to open-source generative AI projects on GitHub. These activities not only build skills but also create a portfolio that demonstrates your capabilities to potential employers.

Q4: Is prompt engineering a long-term career?

While the role of a dedicated “Prompt Engineer” may evolve, the underlying skill of effectively communicating with and controlling AI models will remain critical. It will likely become an essential competency for a wide range of roles, from software developers and data analysts to product managers and content creators, rather than remaining a standalone profession.