AI agents are autonomous software systems driven by large language models (LLMs) that perceive their environment, decide what to do next, and execute actions through external tools to achieve specific goals. Common examples of AI agents include autonomous coding assistants, automated cybersecurity responders, and financial analysis bots.

The Evolution from Generative AI to Agentic AI

The transition from standard generative AI to agentic AI represents a fundamental shift in how engineers design software systems. Traditional generative models act as passive oracles that accept a prompt and return a static text or image output. Agentic systems, by contrast, possess autonomy, state management, and the ability to interact with external environments. This evolution requires moving away from single-turn request-response architectures toward continuous, cyclic processing loops.

In an agentic workflow, the model is embedded within an orchestration layer that allows it to reason about a problem, break it down into sub-tasks, delegate those tasks to specialized tools, and evaluate the results. If a tool returns an error, an intelligent agent does not simply fail; it reads the traceback, adjusts its approach, and attempts a new solution. This iterative feedback loop transforms large language models from conversational interfaces into functional computing engines capable of executing multi-step enterprise operations.
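The feedback loop described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than a production design: `stub_policy` stands in for the LLM, and `flaky_divide` and the action dictionary format are hypothetical names chosen for the example.

```python
from typing import Callable


def flaky_divide(a: float, b: float) -> float:
    """Hypothetical tool: raises on invalid input, as a real API might."""
    return a / b


def stub_policy(goal: str, history: list[str]) -> dict:
    """Stand-in for the LLM: chooses the next action from the history so far.

    A real agent would send `goal` and `history` to a model; this stub
    hard-codes the "adjust after an error" behaviour for illustration.
    """
    if not history:
        return {"tool": "divide", "args": {"a": 10, "b": 0}}  # faulty first attempt
    if history[-1].startswith("error"):
        return {"tool": "divide", "args": {"a": 10, "b": 2}}  # revised attempt
    return {"final": history[-1]}


def run_agent(goal: str, policy: Callable, tools: dict, max_steps: int = 5) -> str:
    """Reason -> act -> observe, feeding every observation back to the policy."""
    history: list[str] = []
    for _ in range(max_steps):
        action = policy(goal, history)
        if "final" in action:
            return action["final"]
        try:
            result = tools[action["tool"]](**action["args"])
            history.append(f"ok: {result}")
        except Exception as exc:
            # The error is recorded as an observation instead of aborting the run.
            history.append(f"error: {exc!r}")
    return "gave up: step budget exhausted"


answer = run_agent("divide 10 by a valid number", stub_policy, {"divide": flaky_divide})
print(answer)
```

The key design choice is that a tool failure becomes one more observation in the history, so the next decision can take it into account.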

The Core Architecture of Autonomous AI Agents

To fully understand modern AI agent examples, it is necessary to examine the underlying cognitive architecture that powers them. An autonomous agent is not a single algorithm, but rather a composite system integrating natural language processing, deterministic tool execution, and stateful memory management.

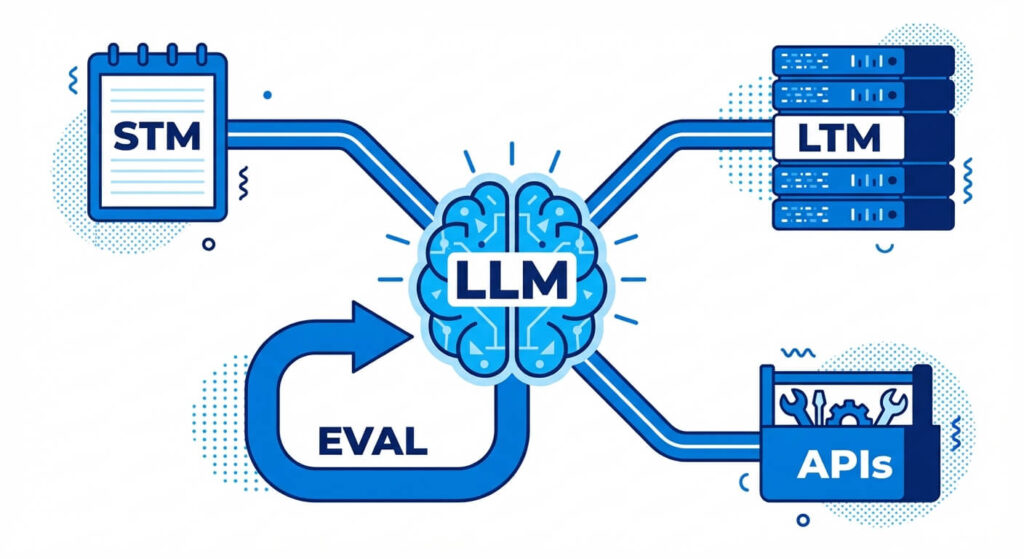

At the foundational level, agentic architecture consists of four distinct pillars: the Brain, Memory, Planning, and Tools (Actuators).

- The Brain (LLM Controller): The central processing unit of the agent. This is typically an advanced LLM (like GPT-4, Claude 3.5 Sonnet, or Llama 3) configured to output structured data (such as JSON or XML). The brain handles semantic reasoning, parses user intent, and determines the sequence of API calls required to solve a problem.

- Memory Systems: Agents require context to function autonomously over long periods.

  - Short-Term Memory: Held in the model's context window, this maintains the history of the current execution thread, allowing the agent to remember tool outputs from previous steps.

  - Long-Term Memory: Facilitated by vector databases (e.g., Pinecone, Milvus, pgvector), this allows the agent to retrieve historical data, past user interactions, or technical documentation using semantic similarity search (k-nearest neighbors).

- Planning and Reasoning (ReAct): Agents utilize paradigms like ReAct (Reason + Act) or Chain-of-Thought (CoT). Before executing a tool, the agent generates an internal reasoning trace. It asks itself: What is the current state? What do I need to achieve? Which tool gets me closer to that state?

- Tools and Actuators: These are the functional endpoints the agent uses to manipulate its environment. Tools are deterministic code, such as Python functions, REST APIs, GraphQL queries, or shell commands, that the LLM invokes by emitting output that conforms to a function-calling schema.
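To make the hand-off between the Brain and the Tools concrete, the sketch below parses a JSON tool call and dispatches it to a registered function. The tool `get_server_status` and the `{"name": ..., "arguments": ...}` shape are assumptions made for this example; each provider defines its own schema.

```python
import json


def get_server_status(hostname: str) -> str:
    """Hypothetical tool; a real one would query a monitoring API."""
    return f"{hostname}: healthy"


# Registry mapping tool names (as the model sees them) to Python callables.
TOOLS = {"get_server_status": get_server_status}


def dispatch(model_output: str) -> str:
    """Parse a JSON tool call emitted by the model and run the matching tool.

    Every failure is returned as text so it can be fed back to the model
    as an observation rather than crashing the agent.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return f"error: invalid JSON ({exc.msg})"
    if not isinstance(call, dict):
        return "error: tool call must be a JSON object"
    tool = TOOLS.get(call.get("name"))
    if tool is None:
        return f"error: unknown tool {call.get('name')!r}"
    try:
        return tool(**call.get("arguments", {}))
    except TypeError as exc:
        return f"error: bad arguments ({exc})"


observation = dispatch('{"name": "get_server_status", "arguments": {"hostname": "db-1"}}')
print(observation)
```

Because the dispatcher only executes names present in `TOOLS`, the model cannot invoke arbitrary code, only the functions the developer has registered.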

Fundamental Types of AI Agents

In computer science, the classification of AI agents historically stems from the models defined by Russell and Norvig. When applied to modern LLM-driven architectures, these classifications determine how complex an agent’s decision logic is and which environments it can successfully navigate.

1. Simple Reflex Agents

Reflex agents operate strictly on current percepts, ignoring historical context. They function on condition-action rules. In software engineering, an example is an automated GitHub bot that triggers a specific formatting script whenever a developer opens a Pull Request with a specific label. It possesses no memory and does not reason about the content of the PR; it simply maps input A to output B.
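A reflex agent of this kind reduces to a table of condition-action rules. The sketch below assumes a simplified event dictionary; the label and action names are hypothetical and do not correspond to any real GitHub webhook payload.

```python
from typing import Callable

Event = dict
Rule = tuple[Callable[[Event], bool], str]

# Condition-action rules; label and action names are illustrative only.
RULES: list[Rule] = [
    (lambda e: e.get("action") == "opened" and "needs-format" in e.get("labels", []),
     "run_formatter"),
    (lambda e: e.get("action") == "opened" and "docs" in e.get("labels", []),
     "run_link_checker"),
]


def reflex_agent(event: Event) -> str:
    """Return the action of the first matching rule; no memory, no reasoning."""
    for condition, action in RULES:
        if condition(event):
            return action
    return "no_op"


print(reflex_agent({"action": "opened", "labels": ["needs-format"]}))
```

The same event always produces the same action, which is exactly what distinguishes this class from the stateful agents that follow.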

2. Model-Based Reflex Agents

These agents maintain an internal state that tracks aspects of the environment that are not currently visible. They understand how the world evolves independently of their actions and how their actions affect the world. An intelligent load-balancing agent is a prime example: it monitors network traffic, maintains a model of server capacities, and dynamically spins up or terminates Kubernetes pods based on predictive demand patterns.
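As a rough sketch of the idea, the agent below keeps a small internal model (a sliding window of recent load) and sizes the deployment from that model rather than from the latest reading alone. The class name, the per-pod capacity, and the moving-average estimate are assumptions; a real system would use a proper forecasting model and the Kubernetes API.

```python
import math
from collections import deque


class ScalingAgent:
    """Model-based reflex agent: decisions depend on remembered state."""

    def __init__(self, capacity_per_pod: float = 100.0, window: int = 3) -> None:
        self.capacity_per_pod = capacity_per_pod
        # Internal state: the most recent load observations.
        self.recent_load: deque = deque(maxlen=window)

    def step(self, requests_per_sec: float) -> int:
        """Update the internal model, then return the desired pod count."""
        self.recent_load.append(requests_per_sec)
        estimated_load = sum(self.recent_load) / len(self.recent_load)
        return max(1, math.ceil(estimated_load / self.capacity_per_pod))


agent = ScalingAgent()
decisions = [agent.step(load) for load in (50, 350, 500, 50)]
print(decisions)
```

Note that the first and last readings are identical (50 requests per second) yet lead to different decisions, because the internal state differs.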

3. Goal-Based Agents

Goal-based agents expand on model-based agents by incorporating objective functions. They evaluate multiple possible sequences of actions to determine which path leads to the desired state. An AI testing agent operates under this paradigm. Given a repository and a goal (“achieve 90% code coverage”), the agent iteratively explores the codebase, generates unit tests, runs the test suite, and refines its generated tests until the goal condition is met.

4. Utility-Based Agents

While goal-based agents only care about achieving a binary state (success/failure), utility-based agents attempt to maximize a continuous performance measure. They weigh tradeoffs. For instance, a cloud infrastructure agent might have a goal to ensure zero downtime, but its utility function forces it to achieve this while minimizing AWS compute costs. It must calculate probabilities and expected values to select the most efficient resource allocation.
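The tradeoff can be expressed as an expected-utility calculation. The figures below (hourly costs, outage probabilities, and the outage penalty) are invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    hourly_cost: float         # illustrative cost per hour
    outage_probability: float  # assumed chance of an outage in the planning window


OUTAGE_PENALTY = 500.0  # assumed cost of one outage, same units as hourly_cost


def expected_utility(plan: Plan) -> float:
    """Higher is better: the negative of the plan's expected total cost."""
    return -(plan.hourly_cost + plan.outage_probability * OUTAGE_PENALTY)


plans = [
    Plan("2 small nodes", 4.0, 0.05),
    Plan("3 small nodes", 6.0, 0.01),
    Plan("6 large nodes", 30.0, 0.001),
]

best = max(plans, key=expected_utility)
print(best.name)
```

A goal-based agent would accept any plan that keeps the service up; the utility function is what lets the agent prefer the middle option over both the cheapest and the safest.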

5. Learning Agents

Learning agents possess mechanisms to improve their performance over time. Through training-time methods such as Reinforcement Learning from Human Feedback (RLHF), or run-time self-reflection protocols, these agents evaluate the success of past actions and update their model weights, prompt templates, or memory stores to avoid repeating mistakes in future execution loops.

AI Agent Examples: Real-World Technical Use Cases

The theoretical architecture of AI agents has rapidly translated into production-grade enterprise solutions. By coupling language models with specialized toolchains, organizations are automating highly complex, multi-stage workflows that previously required significant human intervention.

The following AI agent examples show how this paradigm is implemented across various engineering and operational domains.

Software Engineering and Autonomous Development

The most disruptive AI agent examples in the software industry are autonomous coding agents like Devin or open-source equivalents like SWE-agent. These systems extend far beyond inline code completion (e.g., standard GitHub Copilot). They are designed to resolve entire GitHub issues autonomously.

When provided with a bug report, a software engineering agent initializes a secure sandbox environment. It clones the repository, searches the file system using tools like grep or abstract syntax tree (AST) parsers to locate the faulty logic, and writes a patch. Crucially, the agent then uses terminal tools to execute the test suite. If a test fails due to a syntax error or a logical regression, the agent reads the traceback from standard error (stderr), reasons about the failure, modifies its patch, and re-runs the tests. This iterative ReAct loop continues until the tests pass, at which point the agent pushes the branch and opens a Pull Request.

IT Operations and Site Reliability Engineering (SRE)

In modern microservices architectures, incident response is a high-latency process. AI agents in the SRE domain act as automated Level-1 and Level-2 responders.

Consider an agent integrated with PagerDuty, Datadog, and AWS. When a high-CPU-utilization alert is triggered, an SRE agent is invoked. Instead of simply forwarding the alert to a human, the agent autonomously queries the Datadog API for the last 15 minutes of metric telemetry. It accesses the application’s logs in Elasticsearch to identify anomalous stack traces. If the agent determines the issue is a known memory leak in a specific Docker container, it can execute an authorized webhook to restart the failing pod or roll back the deployment to the previous stable git commit. For known failure modes like this, automation can reduce Mean Time to Resolution (MTTR) from hours to minutes.

Cybersecurity and Threat Hunting

Cybersecurity requires analyzing massive volumes of network telemetry, a task well suited to autonomous agents. AI agent examples in cybersecurity include automated Security Operations Center (SOC) analysts.

When a Security Information and Event Management (SIEM) system flags an anomalous login, a threat-hunting agent takes over. The agent can take the flagged IP address, query threat intelligence sources (like VirusTotal or CrowdStrike Falcon), cross-reference the user’s Active Directory baseline behavior, and parse recent firewall logs. If the agent correlates these data points and estimates a high probability of a credential stuffing attack, it can call the Identity and Access Management (IAM) API to revoke the user’s session tokens and append the malicious IP to the firewall’s deny-list, isolating the threat at machine speed.

Financial Engineering and Algorithmic Trading

Quantitative finance requires processing unstructured data and executing deterministic mathematical models. Financial AI agents bridge this gap.

A modern algorithmic trading agent does not rely solely on moving averages. Instead, it continuously monitors SEC EDGAR feeds for new 10-K filings or earnings reports. Upon detecting a new document, the agent parses the unstructured text, extracting forward-looking revenue projections. It then queries a financial API (like Bloomberg or Yahoo Finance) for real-time asset pricing, feeds the extracted data into a predefined Python Monte Carlo simulation to project risk, and finally executes a buy/sell order via the brokerage API (e.g., Interactive Brokers) if the calculated utility exceeds a strict algorithmic threshold.

Enterprise Data Analysis and Business Intelligence

Traditional data pipelines require data engineers to translate business requirements into SQL queries, build dashboards, and export reports. Data-centric AI agents automate the entire pipeline.

A “Text-to-SQL” agent operates within a data warehouse environment like Snowflake or BigQuery. A user asks, “What was our month-over-month churn rate in the EMEA region for Q3?” The agent fetches the database schema (metadata), reasons about table joins, writes the exact SQL dialect required, and executes the query. If the query throws a syntax error, the agent debugs its own SQL and retries. Once successful, it passes the raw tabular data to a secondary Python execution agent, which utilizes pandas and matplotlib to generate an analytical chart, delivering a finalized, data-backed report to the user.
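The write, execute, and debug cycle can be sketched with the standard-library `sqlite3` module in place of a cloud warehouse. Here `stub_sql_writer` is a stand-in for the LLM and deliberately returns a malformed first draft; the table and column names are hypothetical.

```python
import sqlite3
from typing import Callable, Optional


def stub_sql_writer(question: str, schema: str, last_error: Optional[str]) -> str:
    """Stand-in for the LLM: the first draft has a typo, the retry fixes it."""
    if last_error is None:
        return "SELEC region, COUNT(*) FROM churn_events GROUP BY region"
    return ("SELECT region, COUNT(*) FROM churn_events "
            "GROUP BY region ORDER BY region")


def answer_question(conn: sqlite3.Connection, question: str,
                    writer: Callable, max_attempts: int = 3) -> list:
    """Fetch the schema, ask the writer for SQL, and retry on database errors."""
    schema = "\n".join(
        row[0] for row in conn.execute(
            "SELECT sql FROM sqlite_master WHERE type = 'table'")
    )
    last_error: Optional[str] = None
    for _ in range(max_attempts):
        sql = writer(question, schema, last_error)
        try:
            return conn.execute(sql).fetchall()
        except sqlite3.Error as exc:
            last_error = str(exc)  # fed back to the writer on the next attempt
    raise RuntimeError(f"no valid query after {max_attempts} attempts: {last_error}")


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE churn_events (customer_id INTEGER, region TEXT)")
conn.executemany("INSERT INTO churn_events VALUES (?, ?)",
                 [(1, "EMEA"), (2, "EMEA"), (3, "APAC")])

rows = answer_question(conn, "How many churn events per region?", stub_sql_writer)
print(rows)
```

Passing the database's own error message back to the writer is what allows the second attempt to differ from the first; the attempt limit keeps a persistently wrong writer from looping forever.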

Traditional Automation vs. Agentic AI

To appreciate the capabilities of these systems, engineers must differentiate between traditional Robotic Process Automation (RPA) and modern Agentic AI. RPA relies on rigid, rule-based heuristics. If an RPA script encounters an unexpected UI change or an undefined error code, execution halts completely.

Conversely, agentic workflows introduce probabilistic reasoning into the control flow, allowing the system to handle edge cases, adapt to dynamic environments, and synthesize novel solutions without explicit, step-by-step programming.

| System Characteristic | Traditional Automation (RPA / Scripts) | Autonomous AI Agents |
|---|---|---|
| Execution Model | Deterministic. Follows a strict, linear progression of predefined rules (If-This-Then-That). | Probabilistic. Uses heuristic reasoning to determine the optimal sequence of actions dynamically. |
| Data Processing | Requires highly structured inputs (e.g., CSVs, strictly formatted JSON, fixed database schemas). | Thrives on unstructured data (e.g., raw text logs, messy codebases, natural language emails). |
| Error Handling | Fragile. Fails immediately upon encountering unhandled exceptions or unexpected data types. | Resilient. Capable of reading error traces, modifying its approach, and retrying autonomously. |
| State Management | Stateless or relies on rigid, pre-allocated memory buffers and databases. | Maintains complex contextual state via episodic memory and vector similarity retrieval. |
| Primary Use Case | High-volume, repetitive tasks with zero variation (e.g., invoice scraping, basic ETL pipelines). | Complex, multi-step problem solving requiring cognitive synthesis and adaptability (e.g., code debugging, threat analysis). |

Frameworks and Tools to Build AI Agents in 2025

Building robust AI agents from scratch using raw API calls requires handling massive amounts of boilerplate code for prompt formatting, state tracking, and output parsing. Consequently, the open-source community has developed powerful orchestration frameworks designed specifically for agentic development.

LangChain and LangGraph

LangChain popularized the concept of chaining LLM components together. However, traditional Directed Acyclic Graph (DAG) structures proved insufficient for agentic loops, which require cyclic behavior (the ability to revisit previous nodes). LangGraph solves this by allowing developers to define agents as state machines. Nodes represent execution steps (LLM reasoning or tool execution), and edges define the conditional logic connecting them, enabling the creation of complex, cyclic ReAct architectures with built-in state persistence.
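The difference between a DAG and a cyclic state machine is easiest to see in code. The sketch below is plain Python and intentionally does not use the LangGraph API; the node names, the `route` function, and the state keys are all assumptions made for illustration.

```python
END = "__end__"


def reason(state: dict) -> dict:
    """Decide what to do next (an LLM call in a real agent)."""
    state["plan"] = "increment"
    return state


def act(state: dict) -> dict:
    """Execute the planned step (a tool call in a real agent)."""
    state["value"] += 1
    state["trace"].append(state["value"])
    return state


NODES = {"reason": reason, "act": act}


def route(current: str, state: dict) -> str:
    """Conditional edges: 'act' loops back to 'reason' until the target is met."""
    if current == "reason":
        return "act"
    return END if state["value"] >= state["target"] else "reason"


def run_graph(state: dict, entry: str = "reason", max_steps: int = 20) -> dict:
    """Walk the graph, threading the shared state through every node."""
    node = entry
    for _ in range(max_steps):
        state = NODES[node](state)
        node = route(node, state)
        if node == END:
            return state
    raise RuntimeError("step budget exhausted before reaching END")


final = run_graph({"value": 0, "target": 3, "trace": []})
print(final["trace"])
```

The edge from `act` back to `reason` is the cycle that a DAG cannot express; the step budget is the safeguard that keeps that cycle from running indefinitely.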

Microsoft AutoGen

When a task becomes too complex for a single agent, multi-agent orchestration is required. Microsoft AutoGen enables the creation of distinct, specialized agents that converse with one another to solve a problem. For example, a developer can instantiate a “Coder Agent” and a “Code Reviewer Agent.” The Coder writes the script, passes it to the Reviewer, and the two agents iterate back and forth until the code passes strict quality thresholds, entirely without human intervention.

CrewAI

CrewAI builds upon the multi-agent paradigm by introducing strict role-playing and task delegation. It operates like a virtual corporate hierarchy. Developers define agents with specific roles, backstories, and goals, and assign them discrete tasks. A Manager agent can break down a large project and delegate sub-tasks to Researcher and Writer agents, orchestrating the workflow to ensure the final output is cohesive and accurate.

Implementing a Function-Calling Agent in Python

To ground these concepts, let us examine a technical implementation. The core mechanism behind most LLM-based agents is “Function Calling” (or Tool Binding). This is the process of providing an LLM with the schema of a function, allowing the model to decide when to call it and with which arguments, based on user input.

The following Python code snippet demonstrates how to instantiate a basic agent using the LangChain framework, bind a mathematical tool to it, and execute a query.

```python
import os

from langchain_openai import ChatOpenAI
from langchain_core.tools import tool
from langchain.agents import create_tool_calling_agent, AgentExecutor
from langchain_core.prompts import ChatPromptTemplate


# 1. Define a deterministic tool (Actuator)
@tool
def calculate_network_latency(distance_km: float, medium: str) -> str:
    """
    Calculates the theoretical minimum network latency for a given distance
    and medium (fiber or copper).
    """
    speed_of_light_vacuum = 299792  # km/s
    if medium.lower() == "fiber":
        speed = speed_of_light_vacuum * 0.68  # Light in fiber optic
    elif medium.lower() == "copper":
        speed = speed_of_light_vacuum * 0.95  # Electricity in copper
    else:
        return "Error: Unknown medium."

    # Calculate round trip time in milliseconds
    latency_ms = (distance_km * 2 / speed) * 1000
    return f"Theoretical RTT: {latency_ms:.2f} ms"


# 2. Initialize the Tools and the Brain (LLM)
tools = [calculate_network_latency]
llm = ChatOpenAI(model="gpt-4-turbo", temperature=0)

# 3. Define the System Prompt
prompt = ChatPromptTemplate.from_messages([
    ("system", "You are a senior network engineering AI agent. Use the provided tools to assist the user."),
    ("human", "{input}"),
    ("placeholder", "{agent_scratchpad}"),
])

# 4. Bind tools and create the Agent Executor loop
agent = create_tool_calling_agent(llm, tools, prompt)
agent_executor = AgentExecutor(agent=agent, tools=tools, verbose=True, max_iterations=5)

# 5. Execute the Agent
response = agent_executor.invoke({
    "input": "We are provisioning a new database replica 3000 km away over a fiber link. What is the absolute lowest latency we can expect?"
})

print(response["output"])
```

In this architecture, the LLM parses the natural language prompt, identifies that it needs to calculate latency, extracts the variables distance_km=3000 and medium="fiber", invokes the Python function, and formulates a final response based on the deterministic output of the tool.

Overcoming Engineering Challenges in Agentic Workflows

While these AI agent examples demonstrate immense potential, deploying agents in production introduces engineering challenges that must be mitigated.

First, infinite loops are a significant risk. Because agents operate on iterative ReAct loops, a model that consistently hallucinates incorrect tool arguments can enter a continuous cycle of failing, retrying, and failing again. Engineers must implement strict max_iterations limits and hard timeouts to prevent resource exhaustion and unbounded API costs.
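A minimal sketch of such a guard is shown below, combining an iteration cap with a wall-clock deadline. The names `run_with_budget` and `BudgetExceeded` are hypothetical, and the `step` callable is assumed to return a `(done, result)` pair. The deadline is only checked between steps, so a single blocking tool call would still need its own timeout.

```python
import time
from typing import Any, Callable


class BudgetExceeded(RuntimeError):
    """Raised when the agent runs out of iterations or time."""


def run_with_budget(step: Callable[[int], tuple],
                    max_iterations: int = 5,
                    timeout_s: float = 2.0) -> Any:
    """Call `step` until it reports completion or a budget is exhausted."""
    deadline = time.monotonic() + timeout_s
    for iteration in range(1, max_iterations + 1):
        if time.monotonic() > deadline:
            raise BudgetExceeded(f"timed out after {iteration - 1} iterations")
        done, result = step(iteration)
        if done:
            return result
    raise BudgetExceeded(f"no result after {max_iterations} iterations")


# A step that succeeds on its second attempt.
print(run_with_budget(lambda i: (i == 2, f"done at iteration {i}")))
```

Using `time.monotonic()` rather than wall-clock time keeps the deadline unaffected by system clock adjustments.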

Second, context window degradation occurs when agents accumulate large volumes of intermediate logs and tool outputs. As the context grows, models retrieve information from it less reliably, particularly from the middle of long inputs (the “lost in the middle” effect), and the agent can lose track of its original objective. Semantic routing, chunking of tool outputs, and hierarchical memory summaries help maintain reasoning integrity over long execution sessions.
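One simple mitigation, sketched below under assumed names and limits, is to pin the goal at the top of the context, keep only the most recent steps, and truncate oversized tool outputs. A production system would more likely replace the omitted steps with a generated summary than with a placeholder line.

```python
def truncate_output(text: str, max_chars: int = 200) -> str:
    """Keep the head and tail of a long tool output and note what was cut."""
    if len(text) <= max_chars:
        return text
    half = max_chars // 2
    omitted = len(text) - 2 * half
    return f"{text[:half]}\n...[{omitted} characters omitted]...\n{text[-half:]}"


def build_context(goal: str, history: list[str], keep_last: int = 4) -> list[str]:
    """Assemble the prompt context: pinned goal, placeholder, recent steps.

    `keep_last` is assumed to be at least 1.
    """
    older, recent = history[:-keep_last], history[-keep_last:]
    context = [f"GOAL: {goal}"]  # the objective is always restated first
    if older:
        context.append(f"[{len(older)} earlier steps omitted]")
    context.extend(truncate_output(message) for message in recent)
    return context


history = [f"step {i}: " + "x" * 50 for i in range(10)]
context = build_context("reach 90% test coverage", history)
print(len(context))
```

Restating the goal at the start of every assembled context is a cheap way to keep the objective outside the region of the prompt where recall tends to be weakest.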

Finally, security and sandboxing are paramount. Giving an autonomous agent the ability to execute bash commands or SQL queries creates an attack surface for prompt injection. If a malicious user, or malicious text inside a document the agent reads, instructs a database agent to run DROP TABLE users, the agent might blindly comply. Production environments should execute agent operations within ephemeral Docker containers, enforce the principle of least privilege (PoLP) on API keys, and require human-in-the-loop (HITL) approval for destructive actions.
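A human-in-the-loop gate for destructive SQL might look like the sketch below. The function name and the keyword list are assumptions, and a pattern check like this is only a first line of defense: it complements, and does not replace, read-only database credentials and sandboxing. The naive split on semicolons will also reject legitimate queries that contain a semicolon inside a string literal.

```python
import re

# Statements that modify or destroy data; an illustrative, incomplete list.
DESTRUCTIVE = re.compile(r"^\s*(DROP|DELETE|TRUNCATE|ALTER|UPDATE)\b", re.IGNORECASE)


def review_sql(sql: str, approved_by_human: bool = False) -> str:
    """Classify a model-generated query as allow, needs_approval, or reject."""
    statements = [part for part in sql.split(";") if part.strip()]
    if not statements:
        return "reject: empty query"
    if len(statements) > 1:
        # Stacked statements are a common injection pattern; refuse outright.
        return "reject: multiple statements"
    if DESTRUCTIVE.match(statements[0]):
        return "allow" if approved_by_human else "needs_approval"
    return "allow"


print(review_sql("DROP TABLE users"))
```

Returning a status string rather than executing anything keeps the gate separate from the database connection, so the calling code decides how approval is actually collected.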

Conclusion

Software development is moving rapidly toward agentic workflows. As the AI agent examples from software engineering, cybersecurity, and quantitative finance demonstrate, these autonomous systems are changing how we interact with computational environments. By combining the broad semantic knowledge of large language models with the deterministic reliability of programmatic tools and rigorous state management, engineers can build systems that do not merely generate text, but actively solve complex, multi-step problems. Understanding the architecture, frameworks, and limitations of AI agents is becoming a core skill for engineers building the next generation of scalable, intelligent software.

Frequently Asked Questions (FAQs)

How do multi-agent systems resolve conflicts in reasoning? In frameworks like AutoGen or CrewAI, conflict resolution is typically managed through hierarchical routing or consensus mechanisms. A “Manager” agent evaluates the conflicting outputs of subordinate agents against the original objective and either selects one of them or requests a revised generation based on cross-critique.

What is the algorithmic difference between zero-shot prompting and a ReAct agent? In zero-shot prompting, the model produces its final answer from a single prompt, with no worked examples and no tool use, relying purely on its pre-trained weights. A ReAct (Reason + Act) agent executes multiple model calls, actively querying external APIs to fetch missing information, injecting that new data into its context window, and reasoning over it before arriving at a final answer.

How is state mathematically represented in an agent’s long-term memory? Text and execution logs are passed through an embedding model (like text-embedding-ada-002) to generate dense numerical vectors, typically represented as continuous values in a high-dimensional space (e.g., d = 1536). When the agent needs to recall data, it computes the Cosine Similarity or Euclidean distance between the user query vector and the stored memory vectors to retrieve the most contextually relevant information.
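The retrieval step can be illustrated with a small, dependency-free sketch. The three-dimensional vectors below are invented toy values standing in for real embeddings, which would come from an embedding model and have far more dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Toy memory store: text -> embedding (hypothetical values).
MEMORY = {
    "restart the payment pod": [0.9, 0.1, 0.0],
    "rotate the API keys": [0.1, 0.9, 0.1],
    "scale the cache cluster": [0.2, 0.1, 0.9],
}


def recall(query_vec: list[float], k: int = 1) -> list[str]:
    """Return the k stored memories most similar to the query vector."""
    ranked = sorted(MEMORY,
                    key=lambda text: cosine_similarity(query_vec, MEMORY[text]),
                    reverse=True)
    return ranked[:k]


top = recall([0.8, 0.2, 0.1])
print(top)
```

A vector database performs the same ranking, but uses approximate nearest-neighbor indexes so that it does not have to compare the query against every stored vector.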

Can an AI agent run entirely locally without cloud APIs? Yes. By running quantized open-weights models (like Llama-3 8B or Mistral) on local inference engines (such as Ollama or vLLM), developers can build fully air-gapped agentic workflows. This keeps data on the local machine and eliminates the latency of network round trips, though it is constrained by local GPU VRAM.