AI Agents: Complete Guide

What is an AI Agent? An AI agent is an autonomous software system that perceives its environment, processes data using artificial intelligence, makes independent decisions, and takes actions to achieve specific goals without continuous human intervention. Agents use reasoning, memory, and tool calling to execute complex, multi-step tasks.

Introduction to Autonomous AI Agents

The transition from standard Large Language Models (LLMs) to autonomous AI agents represents a paradigm shift in artificial intelligence—moving from reactive text generation to proactive task execution. Standard LLMs act as passive inference engines; they require human prompts to generate a response and lack the ability to interact with external systems. AI agents, however, embed these foundational models within a broader cognitive architecture.

At a technical level, the LLM serves merely as the "brain" of the agent. Surrounding this brain are frameworks that allow the system to ingest multimodal data, retain context across sessions, formulate sequential plans, and execute code or API calls. This enables businesses and developers to automate complex pipelines, ranging from autonomous code generation and deployment to dynamic data analysis. Understanding the mechanics of these agents requires a closer look at their cognitive architecture, orchestration patterns, and operational lifecycle.

Core Components of an AI Agent

To function autonomously, an AI agent relies on a modular architecture that bridges the gap between raw compute and environmental interaction. Modern agentic frameworks divide this architecture into four distinct components: perception, memory, reasoning, and action. Each component plays a distinct role in processing state transitions and minimizing the error between the current state and the goal state.

Perception and Input Handling

Perception is the mechanism by which an AI agent receives data from its environment. In software environments, sensors are typically API endpoints, event listeners, webhooks, or direct user inputs. For multimodal AI agents, perception extends to computer vision models (like CNNs or Vision Transformers) processing image streams, or audio models transcribing speech to text. The agent continuously monitors the environment state (S_t) and normalizes these disparate data types into a structured format (usually JSON or highly structured text) that the underlying reasoning engine can process.
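
The normalization step described above can be sketched as follows. This is a minimal illustration, not a standard interface: the `Percept` class, the two normalizer functions, and the assumed webhook shape (`{"event": ..., "data": ...}`) are all hypothetical names chosen for the example.

```python
import json
from dataclasses import dataclass, asdict
from typing import Any


@dataclass
class Percept:
    """One normalized observation handed to the reasoning engine."""
    source: str               # where the data came from, e.g. "webhook" or "user"
    kind: str                 # short label for the type of event
    payload: dict[str, Any]   # the event data itself


def normalize_webhook(raw_body: str) -> Percept:
    """Parse a JSON webhook body of the assumed form {"event": ..., "data": ...}."""
    body = json.loads(raw_body)
    return Percept("webhook", body.get("event", "unknown"), body.get("data", {}))


def normalize_user_text(text: str) -> Percept:
    """Wrap free-form user input in the same structure."""
    return Percept("user", "message", {"text": text.strip()})


def to_prompt_json(percepts: list[Percept]) -> str:
    """Serialize percepts into the structured text the model will read."""
    return json.dumps([asdict(p) for p in percepts], sort_keys=True)
```

Whatever the input channel, the reasoning engine then sees one consistent shape, which keeps prompts stable as new sensors are added.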

Memory

Memory allows an agent to maintain state over time, working around the context window limits inherent in LLMs. Agentic memory is divided into two types:

- Short-Term Memory: This acts as the agent's immediate context window. It stores the recent history of the current interaction, intermediate thoughts, and temporary variables generated during task execution.

- Long-Term Memory: This provides persistent storage across multiple sessions. It is commonly implemented with a vector database (such as Pinecone, Milvus, or pgvector). Information is converted into high-dimensional vector embeddings. When the agent encounters a new query, it embeds the query and runs a k-nearest neighbors (k-NN) search, typically ranked by cosine similarity, against the vector database to retrieve relevant historical context. This is the retrieval step of Retrieval-Augmented Generation (RAG).
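
To make the retrieval step concrete, here is a small sketch of a top-k cosine similarity search in plain Python. It is only an illustration: the `ToyVectorMemory` class is hypothetical, and the three-dimensional vectors are written by hand, whereas a real agent would obtain them from an embedding model and store them in a vector database.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class ToyVectorMemory:
    """In-memory stand-in for a vector database (brute-force search)."""

    def __init__(self) -> None:
        self._items: list[tuple[list[float], str]] = []

    def add(self, vector: list[float], text: str) -> None:
        self._items.append((vector, text))

    def search(self, query: list[float], k: int = 2) -> list[str]:
        """Return the k stored texts most similar to the query vector."""
        ranked = sorted(
            self._items,
            key=lambda item: cosine_similarity(query, item[0]),
            reverse=True,
        )
        return [text for _, text in ranked[:k]]


memory = ToyVectorMemory()
memory.add([1.0, 0.0, 0.0], "User prefers CSV exports")
memory.add([0.0, 1.0, 0.0], "AWS account ID is stored in the vault")
memory.add([0.9, 0.1, 0.0], "Last export failed on a malformed row")

# A query vector close to the "export" memories retrieves both of them.
print(memory.search([1.0, 0.05, 0.0], k=2))
```

Brute-force ranking is fine for a handful of memories. Production vector stores typically use approximate nearest-neighbor indexes to keep search fast at scale.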

Reasoning and Decision-Making

Reasoning is the cognitive engine of the agent. When an agent receives an input, it must decompose the primary goal into a series of actionable steps. This is achieved through prompting techniques such as Chain-of-Thought (CoT), Tree of Thoughts (ToT), or the ReAct (Reasoning and Acting) framework. Conceptually, the agent assesses the current state (S_t), predicts the outcome of a potential action (A_t), and evaluates the expected reward (R) to choose a sequence that maximizes the likelihood of goal completion. In LLM-based agents these quantities are usually estimated implicitly by the model rather than computed as explicit transition probabilities.
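
The decision rule can be illustrated with a toy expected-reward calculation. This sketch assumes the outcome probabilities and rewards are already known, which is rarely true in practice. The `OUTCOMES` table and the action names are invented for the example.

```python
# Hypothetical outcome model for the current state S_t:
# action -> list of (probability, reward) pairs for its possible outcomes.
OUTCOMES = {
    "call_billing_api": [(0.9, 10.0), (0.1, -2.0)],
    "scrape_dashboard": [(0.5, 10.0), (0.5, -5.0)],
    "ask_user_for_csv": [(1.0, 6.0)],
}


def expected_reward(outcomes: list[tuple[float, float]]) -> float:
    """Probability-weighted average reward of one action."""
    return sum(p * r for p, r in outcomes)


def choose_action(model: dict[str, list[tuple[float, float]]]) -> str:
    """Pick the action whose expected reward is highest."""
    return max(model, key=lambda action: expected_reward(model[action]))


# Calling the API has expected reward 0.9 * 10 + 0.1 * (-2) = 8.8, the highest.
print(choose_action(OUTCOMES))
```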

Action and Tool Calling

While reasoning dictates what to do, the action component dictates how to do it. Actuators in an AI agent take the form of tool-calling capabilities. The agent is provided with a registry of external functions—such as SQL query executors, Python read/eval/print loops (REPLs), web search APIs, or file system access. When the reasoning engine determines a specific action is required, it outputs a meticulously formatted payload (e.g., an API request with specific parameters). The agent framework executes this call, captures the return value, and feeds it back into the agent's perception module as new environmental data.
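
A tool registry and dispatcher along these lines might look like the sketch below. The payload shape `{"tool": name, "args": {...}}` is an assumption made for the example, not a specific vendor's format, and the two registered tools are deliberately trivial.

```python
import json
from typing import Callable

# Registry of callable tools, keyed by the name the model must emit.
TOOLS: dict[str, Callable[..., object]] = {}


def tool(fn: Callable[..., object]) -> Callable[..., object]:
    """Decorator that registers a function under its own name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def add(a: float, b: float) -> float:
    return a + b


@tool
def word_count(text: str) -> int:
    return len(text.split())


def execute_tool_call(payload: str) -> dict:
    """Run a model-emitted call and return a result the agent can observe.

    Errors are returned as data instead of raised, so the failure can be
    fed back to the reasoning step as a new observation.
    """
    try:
        call = json.loads(payload)
        fn = TOOLS[call["tool"]]
        result = fn(**call.get("args", {}))
        return {"ok": True, "result": result}
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        return {"ok": False, "error": f"{type(exc).__name__}: {exc}"}


print(execute_tool_call('{"tool": "add", "args": {"a": 2, "b": 3}}'))
```

Only functions in the registry can be invoked, so an unknown tool name or malformed payload produces an error observation rather than arbitrary execution.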

The AI Agent Lifecycle

The AI agent lifecycle defines the continuous, iterative loop through which an agent processes tasks. Unlike standard procedural code that executes linearly, an agentic workflow operates as a state machine. It continuously evaluates its environment, adjusts its strategy based on new data, and iterates until its termination condition (goal completion or failure threshold) is met.

- Goal Initialization: The lifecycle begins with an objective. This can be a user-defined prompt (e.g., "Analyze last month's AWS billing data and find anomalies") or an automated system trigger. The agent registers this goal and establishes the parameters for success.

- Observation & State Formulation: The agent polls its environment to understand the current state. It retrieves relevant context from its long-term vector memory and analyzes the initial inputs provided.

- Deliberation & Planning (Task Decomposition): The reasoning engine takes over. The agent breaks the overarching goal into a Directed Acyclic Graph (DAG) of sub-tasks. For example, it plans to first authenticate via the AWS API, retrieve the CSV data, write a Python script to parse the data, and finally format the output.

- Execution (Action): The agent executes the first sub-task by invoking the appropriate tool or API. It passes the necessary arguments and awaits the response.

- Evaluation & Adaptation: The agent receives the output of its action (e.g., the API returns a 200 OK with data, or a 403 Forbidden). It evaluates this response against its expected outcome. If an error occurs, the agent updates its state, forms a new sub-plan to bypass the error, and re-executes. This loop continues until the overarching goal is resolved.
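
The lifecycle above can be sketched as a small loop over a plan expressed as a DAG. This is a simplified illustration: the `PLAN` contents and `flaky_execute` are hypothetical stand-ins for real tool calls, and "adaptation" is reduced to retrying a failed step, whereas a real agent would typically ask the model for a revised sub-plan.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each sub-task maps to the sub-tasks it depends on.
PLAN = {
    "authenticate": set(),
    "fetch_csv": {"authenticate"},
    "parse_data": {"fetch_csv"},
    "format_report": {"parse_data"},
}


def run_plan(plan, execute, max_attempts=3):
    """Run sub-tasks in dependency order, retrying a failed step up to a limit.

    Returns ("done" or "failed", log), where log holds (task, attempt, ok).
    """
    log = []
    for task in TopologicalSorter(plan).static_order():
        for attempt in range(1, max_attempts + 1):
            ok = execute(task, attempt)      # Execution
            log.append((task, attempt, ok))  # Evaluation
            if ok:
                break
        else:
            # Failure threshold reached: terminate instead of looping forever.
            return "failed", log
    return "done", log


def flaky_execute(task, attempt):
    """Stand-in for real tool calls: 'fetch_csv' fails once, then succeeds."""
    return not (task == "fetch_csv" and attempt == 1)


status, log = run_plan(PLAN, flaky_execute)
print(status, log)
```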

AI Agents vs. AI Assistants vs. Bots

A common point of confusion among engineers and product managers is the distinction between bots, AI assistants, and autonomous AI agents. While the underlying models may share similarities, their architecture, autonomy, and execution scopes differ significantly.

| Feature | Traditional Bots (e.g., Chatbots) | AI Assistants (e.g., ChatGPT, Copilot) | AI Agents (e.g., AutoGPT, Devin) |
|---|---|---|---|
| Core Architecture | Rule-based decision trees, regex parsing, or basic NLP intent classification. | Large Language Models (LLMs) with conversational fine-tuning and basic RAG. | LLMs embedded within orchestration frameworks featuring memory, planning, and external APIs. |
| Autonomy | Zero. Follows strict, pre-programmed execution paths. | Low. Requires human-in-the-loop for every discrete step (prompt-response). | High. Capable of looping, self-prompting, and adapting without human intervention. |
| Reasoning | None. Execution relies entirely on static conditional logic (If/Else). | Inference-based reasoning constrained to a single conversational turn. | Multi-step reasoning (ReAct, Chain of Thought), capable of correcting its own errors. |
| Tool Utilization | Hardcoded API calls mapped to specific user intents. | Limited to predefined plugins (e.g., web search) triggered by direct prompts. | Dynamic tool-calling; can write, test, and execute custom code to solve unforeseen problems. |

Types of AI Agents

To engineer effective agentic workflows, software engineers categorize agents based on their decision-making sophistication and state awareness. This classification is deeply rooted in classic artificial intelligence theory, specifically the formalization of rational agents.

Simple Reflex Agents

These are the most rudimentary forms of agents. They operate solely on the current percept (the immediate input) and ignore any historical context or memory. Their decision-making is based entirely on condition-action rules (If State S = X, then Action A = Y). Because they lack memory, simple reflex agents are only viable in fully observable, highly constrained environments where past states have no bearing on future actions.
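
As a minimal sketch, a simple reflex agent is just an ordered list of condition-action rules applied to the current percept. The thermostat scenario and thresholds here are invented for illustration.

```python
# Condition-action rules, checked in order against the current percept only.
RULES = [
    (lambda percept: percept["temperature_c"] > 30, "turn_on_cooling"),
    (lambda percept: percept["temperature_c"] < 15, "turn_on_heating"),
]


def simple_reflex_agent(percept: dict) -> str:
    """Return the action of the first matching rule; no memory is consulted."""
    for condition, action in RULES:
        if condition(percept):
            return action
    return "do_nothing"


print(simple_reflex_agent({"temperature_c": 34}))
```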

Model-Based Reflex Agents

Model-based agents introduce the concept of internal state. They maintain a representation of the unobserved aspects of their environment, utilizing a transition model that tracks how the environment evolves independently of the agent, and how the agent's actions impact the environment. By keeping track of the historical state (S_{t-1}) and the previous action (A_{t-1}), these agents can function effectively in partially observable environments.
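
The textbook two-room vacuum world gives a compact illustration. In this sketch the agent perceives only the room it is in, so it must remember what it believes about the other room. The class and action names are made up for the example.

```python
class ModelBasedVacuum:
    """Two-room vacuum agent that only perceives its current room."""

    def __init__(self) -> None:
        # Internal state: belief about each room (None means unknown).
        self.believed_clean = {"A": None, "B": None}

    def step(self, location: str, is_dirty: bool) -> str:
        # Update the internal model from the new percept.
        self.believed_clean[location] = not is_dirty
        if is_dirty:
            # Transition model: sucking leaves the current room clean.
            self.believed_clean[location] = True
            return "suck"
        other = "B" if location == "A" else "A"
        if self.believed_clean[other] is not True:
            return f"move_to_{other}"
        # Both rooms are believed clean, so there is nothing left to do.
        return "idle"


agent = ModelBasedVacuum()
print(agent.step("A", True))   # cleans room A
print(agent.step("A", False))  # room B is still unknown, so it goes there
print(agent.step("B", False))  # remembers A is clean, so it can stop
```

A simple reflex agent could not make the final decision, because the percept for room B alone says nothing about room A.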

Goal-Based Agents

Expanding upon model-based architecture, goal-based agents are equipped with specific target objectives. They do not just react to their environment; they proactively evaluate multiple possible action sequences to determine which path leads to the goal state. This requires advanced search algorithms and planning capabilities (like A* search or Monte Carlo Tree Search) to project future states and select optimal trajectories.
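
As an illustration of goal-directed planning, the sketch below runs A* search on a tiny grid with a Manhattan-distance heuristic. The grid layout and function names are assumptions for the example: `S` is the start, `G` the goal, and `#` a wall.

```python
import heapq

GRID = [
    "S.#.",
    "..#.",
    "...G",
]


def find(ch: str) -> tuple[int, int]:
    """Locate a character in the grid as (row, column)."""
    for r, row in enumerate(GRID):
        c = row.find(ch)
        if c != -1:
            return (r, c)
    raise ValueError(ch)


def a_star(start, goal):
    """Return a shortest list of cells from start to goal, or None."""
    def h(cell):
        # Manhattan distance never overestimates on a 4-connected grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    frontier = [(h(start), 0, start, [start])]  # (f = g + h, g, cell, path)
    best_cost = {start: 0}
    while frontier:
        _, cost, cell, path = heapq.heappop(frontier)
        if cell == goal:
            return path
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nr < len(GRID) and 0 <= nc < len(GRID[0])):
                continue
            if GRID[nr][nc] == "#":
                continue
            new_cost = cost + 1
            if new_cost < best_cost.get((nr, nc), float("inf")):
                best_cost[(nr, nc)] = new_cost
                entry = (new_cost + h((nr, nc)), new_cost, (nr, nc), path + [(nr, nc)])
                heapq.heappush(frontier, entry)
    return None


path = a_star(find("S"), find("G"))
print(path)
```

In an LLM-based agent the "cells" would be task states and the "moves" tool calls, but the idea of projecting future states before acting is the same.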

Utility-Based Agents

While a goal-based agent only cares about binary success (goal achieved vs. not achieved), a utility-based agent optimizes for the "best" path. It uses a mathematical utility function to measure the desirability of a state. If multiple paths lead to a goal, a utility-based agent calculates the expected utility of each path, weighing factors like execution time, computational cost, or safety, and selects the path with the highest expected utility.
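
A utility function of this kind can be as simple as a weighted score. The sketch below is illustrative only: the candidate plans, their estimates, and the weights are invented, and choosing the weights is itself a design decision that encodes what the operator cares about.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    name: str
    seconds: float       # estimated run time
    dollars: float       # estimated compute cost
    failure_risk: float  # estimated probability of failure, in [0, 1]


# Hypothetical weights: how much each unit of time, cost, or risk "hurts".
WEIGHTS = {"seconds": 0.01, "dollars": 1.0, "failure_risk": 20.0}


def utility(plan: Plan) -> float:
    """Higher is better, so every penalty is subtracted."""
    return -(
        WEIGHTS["seconds"] * plan.seconds
        + WEIGHTS["dollars"] * plan.dollars
        + WEIGHTS["failure_risk"] * plan.failure_risk
    )


def best_plan(plans: list[Plan]) -> Plan:
    return max(plans, key=utility)


PLANS = [
    Plan("full_table_scan", seconds=600, dollars=4.0, failure_risk=0.01),
    Plan("sampled_query", seconds=60, dollars=0.5, failure_risk=0.10),
    Plan("cached_result", seconds=5, dollars=0.0, failure_risk=0.30),
]

# All three plans reach the goal; the utility function ranks them.
print(best_plan(PLANS).name)
```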

Learning Agents

Learning agents are capable of improving their performance over time. They consist of four sub-components: a learning element (responsible for making improvements), a performance element (responsible for selecting actions), a critic (which evaluates how well the agent is doing against a fixed performance standard), and a problem generator (which suggests exploratory actions). Through reinforcement learning techniques such as Reinforcement Learning from Human Feedback (RLHF), which commonly uses Proximal Policy Optimization (PPO) as its optimizer, these agents adapt their internal weights and heuristics based on past successes and failures.
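
The four sub-components can be shown in a deliberately small sketch. Note that this is not RLHF or PPO: it is a running-average value estimate with scheduled exploration, chosen because it fits in a few lines. The action names and the `SCORES` table acting as the critic are hypothetical.

```python
class LearningAgent:
    """Toy learning agent with the four classic sub-components."""

    def __init__(self, actions, explore_every=4):
        self.actions = list(actions)
        self.value = {a: 0.0 for a in self.actions}  # learned reward estimates
        self.count = {a: 0 for a in self.actions}    # times each action was tried
        self.explore_every = explore_every
        self.steps = 0

    def performance_element(self):
        """Select the action currently believed to be best."""
        return max(self.actions, key=lambda a: self.value[a])

    def problem_generator(self):
        """Suggest an exploratory action: the least-tried one."""
        return min(self.actions, key=lambda a: self.count[a])

    def learning_element(self, action, reward):
        """Update the running average reward for the action."""
        self.count[action] += 1
        self.value[action] += (reward - self.value[action]) / self.count[action]

    def step(self, critic):
        """Act once, have the critic score the action, and learn from it."""
        self.steps += 1
        explore = self.steps % self.explore_every == 0
        action = self.problem_generator() if explore else self.performance_element()
        self.learning_element(action, critic(action))
        return action


# Hypothetical critic: a fixed performance standard scoring each action.
SCORES = {"retry": 0.2, "summarize": 0.5, "use_cache": 0.9}

agent = LearningAgent(SCORES)
for _ in range(20):
    agent.step(SCORES.get)

print(agent.performance_element())
```

Without the problem generator the agent would keep choosing the first action that earned any reward and never discover the better ones.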

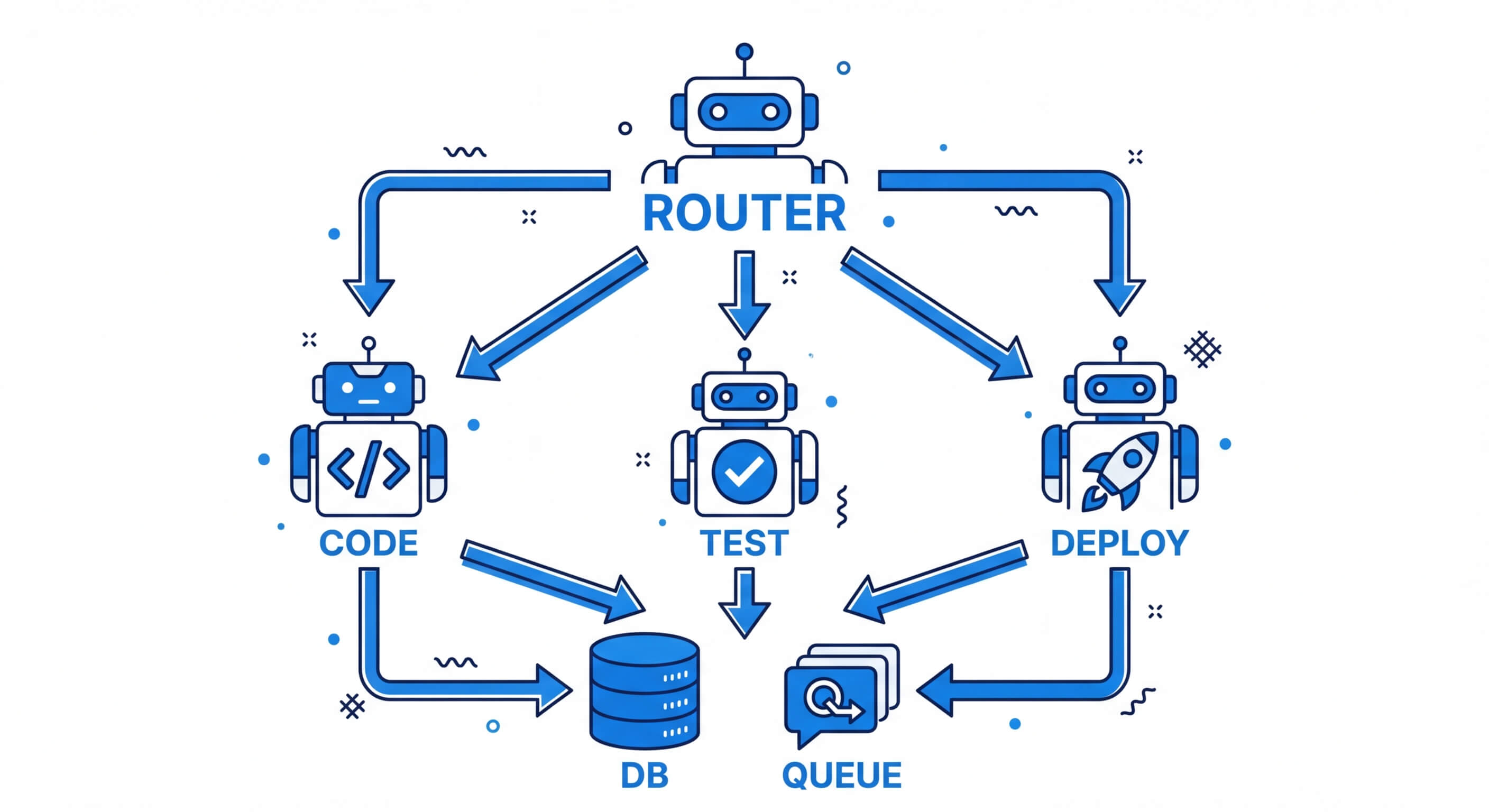

AI Agent Orchestration and Frameworks

As tasks become more complex, relying on a single AI agent becomes inefficient. Many teams have moved toward Multi-Agent Systems (MAS) and orchestration patterns. In these architectures, complex tasks are divided among highly specialized, narrow-scope agents, all coordinated by a central routing agent or orchestration framework.

Orchestration frameworks provide the infrastructure necessary for agents to communicate, share memory, and execute tools securely.

- LangChain & LangGraph: LangChain provides standard interfaces for connecting LLMs to tools and memory. LangGraph extends this by allowing developers to build cyclic graphs, essential for the continuous loops required in the AI agent lifecycle.

- AutoGPT & BabyAGI: These are experimental, open-source architectures that demonstrated the viability of autonomous task execution. They rely heavily on self-prompting loops where the output of one iteration becomes the input of the next.

- Microsoft AutoGen & CrewAI: These frameworks specialize in multi-agent orchestration. They allow developers to instantiate multiple agents with distinct personas (e.g., "Senior Developer," "QA Engineer," "Product Manager") that debate, review each other's work, and collaboratively solve complex engineering problems.
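
Independent of any particular framework, the central-router pattern can be sketched in plain Python. This example does not use the LangChain, AutoGen, or CrewAI APIs. The specialist agents are ordinary functions, and keyword matching stands in for what would normally be an LLM-based routing decision.

```python
# Hypothetical specialist agents: each maps a task description to a result.
def developer_agent(task: str) -> str:
    return f"[developer] patch drafted for: {task}"


def qa_agent(task: str) -> str:
    return f"[qa] test plan written for: {task}"


def pm_agent(task: str) -> str:
    return f"[pm] requirements clarified for: {task}"


# Routing table: keywords that send a task to a given specialist.
ROUTES = [
    (("bug", "implement", "refactor"), developer_agent),
    (("test", "regression", "coverage"), qa_agent),
]


def route(task: str) -> str:
    """Dispatch a task to the first specialist whose keywords match."""
    lowered = task.lower()
    for keywords, agent in ROUTES:
        if any(word in lowered for word in keywords):
            return agent(task)
    return pm_agent(task)  # fallback when no specialist matches


print(route("Fix the login bug"))
print(route("Add regression tests for checkout"))
```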

Agentic AI in Enterprise Applications

Agentic AI refers to the deployment of these multi-agent systems at an enterprise scale, integrated deeply into business logic and operational pipelines. Unlike experimental agents, enterprise Agentic AI requires high determinism, strict security boundaries, and robust error handling.

Software Engineering and CI/CD

In modern DevOps pipelines, AI agents go far beyond code auto-completion. Autonomous coding agents (like Devin or specialized AutoGen setups) can receive a GitHub issue, clone the repository, read the existing codebase via vector search, write the patch, write unit tests, execute the tests in an isolated sandbox, debug any failures, and submit a pull request.

Cybersecurity Operations

Security Operations Centers (SOCs) utilize AI agents for automated threat hunting and incident response. When a SIEM (Security Information and Event Management) system flags an anomaly, an AI agent can autonomously query server logs, isolate affected IP addresses, update firewall rules, and draft an incident report. This can substantially reduce the Mean Time to Respond (MTTR) for incidents, including those that exploit zero-day vulnerabilities.

Autonomous Data Analytics

Instead of static dashboards, data teams deploy utility-based agents connected to enterprise data warehouses (like Snowflake or BigQuery). A business user can prompt, "Why did user retention drop in Q3?" The agent will autonomously write SQL queries, execute them, analyze the resulting dataframes using Python libraries (like Pandas), generate statistical visualizations, and produce a comprehensive markdown report.

Challenges and Risks of Agentic AI

Despite their profound capabilities, integrating autonomous agents into production environments presents significant engineering and security challenges.

- Hallucinations in Task Planning: While LLMs have improved, they still hallucinate. In an agentic loop, a hallucinated variable or false assumption in step one is carried into every subsequent step, where later decisions build on it and compound the error, which can cause the entire task to fail.

- Infinite Loops and Token Exhaustion: If an agent lacks a strict termination condition or fails to recognize that a specific tool is broken, it may continuously attempt the same failed action. This results in infinite loops that rapidly consume API tokens, leading to massive, unexpected infrastructure costs.

- Security and Prompt Injection: Allowing an AI system to execute arbitrary code or API calls is inherently dangerous. If a malicious user performs a prompt injection attack (e.g., instructing a customer service agent to drop a database table), the agent might blindly execute it. Mitigating this requires strict sandboxing (e.g., Docker containers for code execution), principle of least privilege for API keys, and robust human-in-the-loop (HITL) authorization for destructive actions.

- State Drift: Over long operational lifecycles, the context window can become polluted with irrelevant intermediate thoughts. Managing context length effectively—knowing when to summarize short-term memory and when to rely on vector databases—remains a complex optimization problem.
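
One common mitigation for runaway loops is a budget guard that the agent loop consults before every action. The sketch below is one possible shape for such a guard. The class name, limits, and stop messages are assumptions for illustration, and the token counts would come from the model provider's usage data.

```python
from typing import Optional


class LoopGuard:
    """Tracks steps, tokens, and repeated actions for one agent run."""

    def __init__(self, max_steps: int = 20, max_tokens: int = 10_000, max_repeats: int = 3):
        self.max_steps = max_steps
        self.max_tokens = max_tokens
        self.max_repeats = max_repeats
        self.steps = 0
        self.tokens = 0
        self.last_action: Optional[str] = None
        self.repeats = 0

    def should_stop(self, action: str, tokens_used: int) -> Optional[str]:
        """Record one step; return a reason to stop, or None to continue."""
        self.steps += 1
        self.tokens += tokens_used
        # Count consecutive repeats of the same action.
        self.repeats = self.repeats + 1 if action == self.last_action else 1
        self.last_action = action
        if self.steps > self.max_steps:
            return "step limit exceeded"
        if self.tokens > self.max_tokens:
            return "token budget exceeded"
        if self.repeats > self.max_repeats:
            return f"action '{action}' repeated {self.repeats} times in a row"
        return None


guard = LoopGuard(max_steps=10, max_tokens=1000, max_repeats=3)
for _ in range(5):
    reason = guard.should_stop("call_broken_api", tokens_used=10)
    if reason:
        print("Stopping:", reason)
        break
```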

Frequently Asked Questions (FAQ)

What is the ReAct framework in AI agents?

ReAct (Reasoning and Acting) is a prompting paradigm that interleaves reasoning traces with task-specific actions. Instead of answering in a single pass, the agent generates a "Thought" (evaluating the state), selects an "Action" (invoking a tool), and receives an "Observation" (the tool's output). This loop grounds the agent's reasoning in real-world environmental feedback, which can reduce hallucinations.
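
The Thought, Action, Observation loop can be sketched without a real model. In the example below `fake_llm` replays scripted replies in place of an actual LLM call, and the `Action: tool[input]` text format is one common convention assumed here for parsing. The toy `calculator` tool is likewise hypothetical.

```python
import re


def calculator(expression: str) -> str:
    """Toy tool: adds two integers written as 'a + b'."""
    a, b = (int(part) for part in expression.split("+"))
    return str(a + b)


TOOLS = {"calculator": calculator}

# Stand-in for a real model: replies are scripted for the demo.
SCRIPTED_REPLIES = iter([
    "Thought: I need to add the two numbers.\nAction: calculator[17 + 25]",
    "Thought: The tool returned the sum.\nFinal Answer: 42",
])


def fake_llm(transcript: str) -> str:
    return next(SCRIPTED_REPLIES)


def react(question, llm, max_turns=5):
    """Alternate model replies and tool observations until a final answer."""
    transcript = f"Question: {question}"
    for _ in range(max_turns):
        reply = llm(transcript)
        transcript += "\n" + reply
        final = re.search(r"Final Answer:\s*(.+)", reply)
        if final:
            return final.group(1).strip(), transcript
        action = re.search(r"Action:\s*(\w+)\[(.*)\]", reply)
        if action is None:
            break  # the reply contained neither an action nor an answer
        name, arg = action.groups()
        observation = TOOLS[name](arg)
        # The observation is appended so the next reply can build on it.
        transcript += f"\nObservation: {observation}"
    return None, transcript


answer, transcript = react("What is 17 + 25?", fake_llm)
print(transcript)
```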

How does an AI agent differ from an LLM?

An LLM (Large Language Model) is a predictive text engine; it takes a sequence of tokens and calculates a probability distribution over the next token. An AI agent uses the LLM as its reasoning core but surrounds it with a cognitive architecture including memory systems, task decomposition logic, loop execution capabilities, and access to external software tools.

How do AI agents handle long-term memory?

AI agents use Vector Databases for long-term memory. When an agent experiences an event or generates data, that text is passed through an embedding model (like OpenAI's text-embedding-ada-002) to create a high-dimensional array of numbers (a vector). When the agent needs to recall past events, it embeds its current query and performs a mathematical similarity search against the database to retrieve the most contextually relevant historical information.

What is the "human-in-the-loop" (HITL) concept in agent orchestration?

Human-in-the-loop is a safety and alignment mechanism where an autonomous agent operates independently until it reaches a critical decision point—such as executing a financial transaction, deleting a file, or pushing code to production. At this node, the agent pauses its lifecycle, summarizes its intended action, and waits for explicit human authorization before proceeding.
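
An approval gate of this kind can be sketched as a wrapper around action execution. The set of destructive action names and the `approve` and `execute` callbacks are hypothetical. In a real deployment `approve` might post to a chat channel or ticketing system and block until a person responds.

```python
# Hypothetical list of actions that must never run without sign-off.
DESTRUCTIVE = {"delete_file", "transfer_funds", "deploy_to_production"}


def run_action(action, args, approve, execute):
    """Execute an action, pausing for human approval if it is destructive.

    approve(summary) -> bool asks a human; execute(action, args) does the work.
    """
    if action in DESTRUCTIVE:
        summary = f"Agent wants to run {action} with {args}"
        if not approve(summary):
            return {"status": "rejected", "action": action}
    return {"status": "executed", "action": action, "result": execute(action, args)}


# Demo with an automatic "no" in place of a real reviewer.
result = run_action(
    "delete_file",
    {"path": "/tmp/report.csv"},
    approve=lambda summary: False,
    execute=lambda action, args: "ok",
)
print(result)
```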