Alpha Beta pruning

Overview

Alpha Beta Pruning is an optimization technique for the Minimax algorithm. Minimax explores a number of game states that grows exponentially with the depth of the game tree. Alpha Beta Pruning reduces this cost by pruning redundant branches of the game tree using two parameters, Alpha (α) and Beta (β).

Pre-requisites

- Basic knowledge of space and time complexity

- Minimax algorithm

Introduction

In AI, an agent makes decisions in an environment to maximize its gains/rewards. Adversarial search is a paradigm wherein multiple agents compete against each other in the same environment, each trying to maximize its own gains while minimizing those of the others. The Minimax algorithm is an adversarial search algorithm that performs a Depth-First Search (DFS) on the game tree.

However, as the depth of the game tree increases, the number of states increases exponentially, leading to high time complexity. The Alpha Beta Pruning algorithm, an optimization technique for the Minimax algorithm, mitigates this by skipping branches that cannot affect the final decision.

The following sections present the details of the Alpha Beta Pruning algorithm for the case when two competing agents take alternate turns. One agent is a maximizer who wants to maximize the utility, whereas the other agent is a minimizer who wants to minimize the utility. The maximizer gets the first turn. Our goal is to search for an optimal sequence of actions for the maximizer. The same concepts can be extended to other multi-agent environments as well.

Interesting Fact: The literal meaning of pruning refers to the discarding of unwanted branches of a tree in gardening. Alpha Beta Pruning in AI is named as such because it involves pruning the unnecessary branches of a game tree by using two parameters, Alpha (α) and Beta (β), described in the next section.


Parameters of the Alpha Beta Pruning Algorithm

- Alpha (α): The best choice (highest utility/value) found so far on the path traversed by the maximizer.

- Beta (β): The best choice (lowest utility/value) found so far on the path traversed by the minimizer.

Salient Properties and Conditions of the Alpha Beta Pruning Algorithm

The critical points of Alpha Beta Pruning in AI are as follows.

- The initialization of the parameters

  - Alpha (α) is initialized with −∞.

  - Beta (β) is initialized with +∞.

- Updating the parameters

  - Alpha (α) is updated only by the maximizer at its turn.

  - Beta (β) is updated only by the minimizer at its turn.

- Passing the parameters

  - Alpha (α) and Beta (β) are passed on to only child nodes.

  - While backtracking the game tree, the node values are passed to parent nodes.

- Pruning Condition

  - The child sub-trees, which are not yet traversed, are pruned if the condition α ≥ β holds.
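To make the update and pruning rules concrete, here is a small Python sketch of a single minimizer node whose children are all terminal. The function name `min_node` and the numbers are hypothetical, chosen only for illustration.

```python
import math

def min_node(child_utilities, alpha, beta):
    """Evaluate a minimizer node whose children are terminal utilities.

    Returns (node_value, children_visited).
    """
    value = math.inf
    visited = 0
    for utility in child_utilities:
        visited += 1
        value = min(value, utility)
        beta = min(beta, value)   # only the minimizer updates beta
        if alpha >= beta:         # pruning condition
            break                 # the remaining children are never visited
    return value, visited

# alpha = 5 stands for a value already secured by a maximizer higher up.
print(min_node([3, 9, 1], alpha=5, beta=math.inf))  # (3, 1): pruned after the first child
print(min_node([7, 6, 8], alpha=5, beta=math.inf))  # (6, 3): no pruning possible
```

In the first call, the minimizer can already force a value of at most 3, which is worse for the maximizer than the 5 it has elsewhere, so the remaining children cannot change the decision.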

In the next section, we combine all these properties and conditions into the pseudo-code of Alpha Beta Pruning in AI.

Pseudocode for Alpha Beta Pruning in AI

Keeping in mind all the points stated previously, we present the following pseudo-code for the Alpha Beta Pruning algorithm.
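A minimal Python sketch of the procedure is given here. It assumes a game tree stored as nested lists, where a number is a terminal utility and a list is an internal node. This representation, the function name `alpha_beta`, and the example tree are assumptions made for illustration.

```python
import math

def alpha_beta(node, alpha=-math.inf, beta=math.inf, maximizing=True):
    """Return the minimax value of `node` using alpha-beta pruning.

    Assumed representation: a terminal node is a number (its utility),
    an internal node is a list of child nodes.
    """
    if not isinstance(node, list):      # terminal node: return its utility
        return node

    if maximizing:
        value = -math.inf
        for child in node:
            value = max(value, alpha_beta(child, alpha, beta, False))
            alpha = max(alpha, value)   # only the maximizer updates alpha
            if alpha >= beta:           # pruning condition
                break
        return value

    value = math.inf
    for child in node:
        value = min(value, alpha_beta(child, alpha, beta, True))
        beta = min(beta, value)         # only the minimizer updates beta
        if alpha >= beta:               # pruning condition
            break
    return value

# Hypothetical depth-3 tree; the maximizer moves first.
tree = [[[3, 5], [6, 9]], [[1, 2], [0, -1]]]
print(alpha_beta(tree))  # 5
```

Alpha and Beta travel downwards as arguments, while node values travel upwards as return values, which mirrors the passing rules listed in the previous section.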

Working of the Alpha Beta Pruning Algorithm

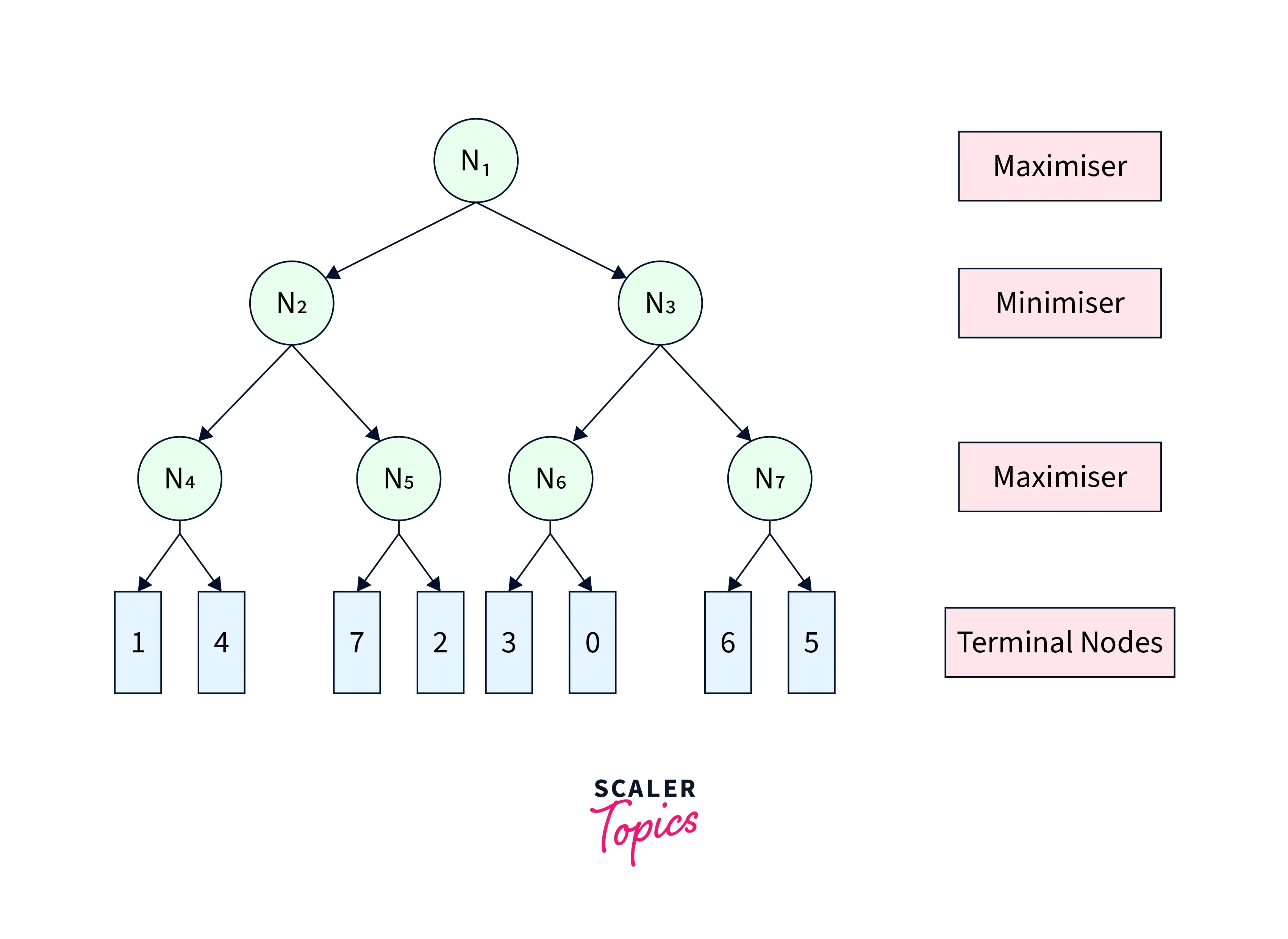

To illustrate the working of Alpha Beta Pruning in AI, we use an example game tree shown in Figure-1. The numbers shown in terminal nodes represent their respective utilities.

Figure-1

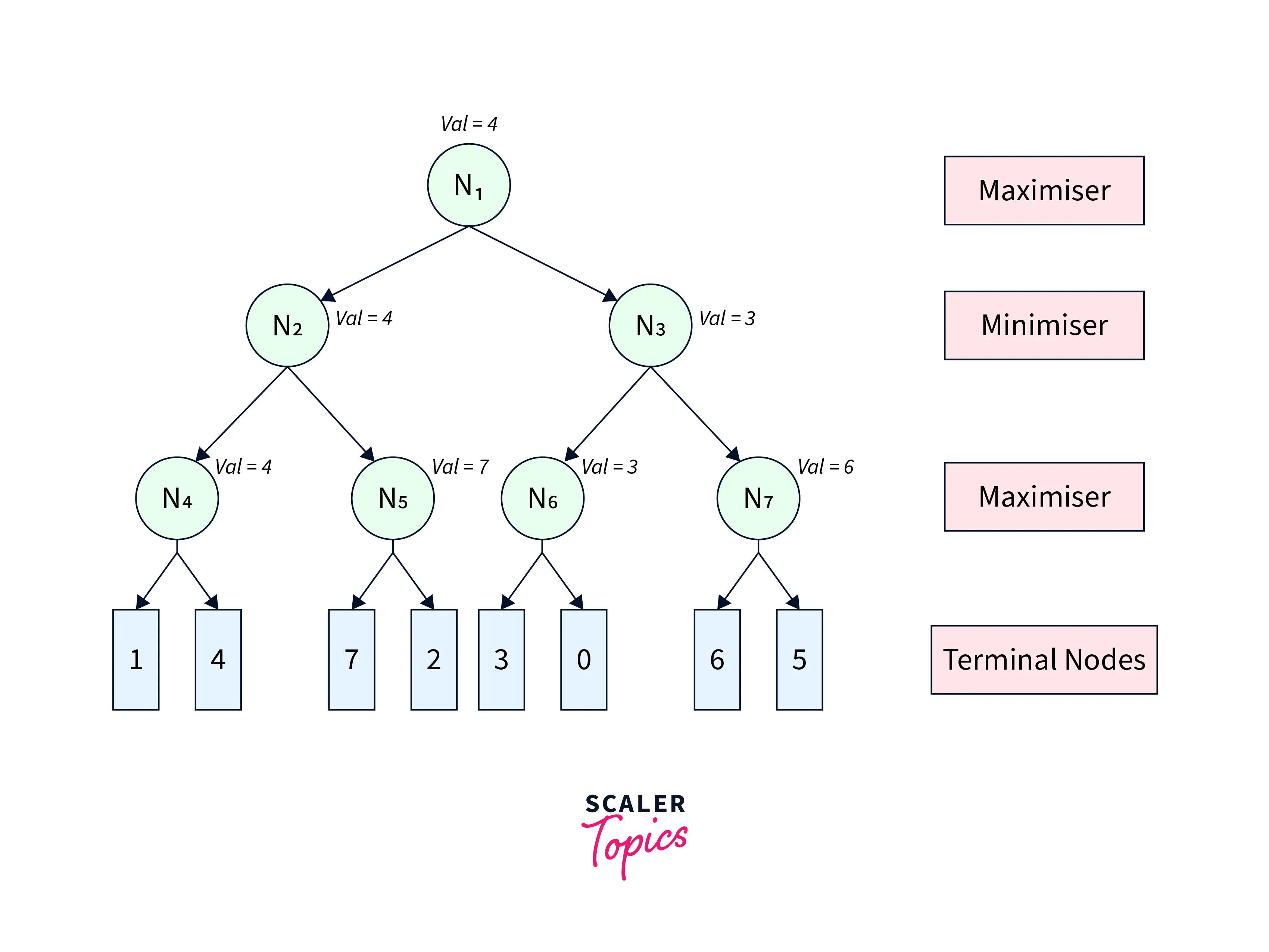

The Minimax algorithm would have traversed the complete game tree leading to the game tree shown in Figure-2.

Figure-2
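For reference, plain Minimax can be sketched as follows. The nested-list tree representation, the function name `minimax`, and the example tree are hypothetical and do not reproduce the tree in the figures. The function also counts the terminal nodes it evaluates, which shows that every leaf is visited.

```python
def minimax(node, maximizing=True):
    """Return (value, leaves_visited) for plain Minimax without pruning.

    Assumed representation: a number is a terminal utility,
    a list is an internal node.
    """
    if not isinstance(node, list):
        return node, 1
    results = [minimax(child, not maximizing) for child in node]
    values = [value for value, _ in results]
    visited = sum(count for _, count in results)
    best = max(values) if maximizing else min(values)
    return best, visited

tree = [[[3, 5], [6, 9]], [[1, 2], [0, -1]]]
print(minimax(tree))  # (5, 8): all 8 terminal nodes are evaluated
```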


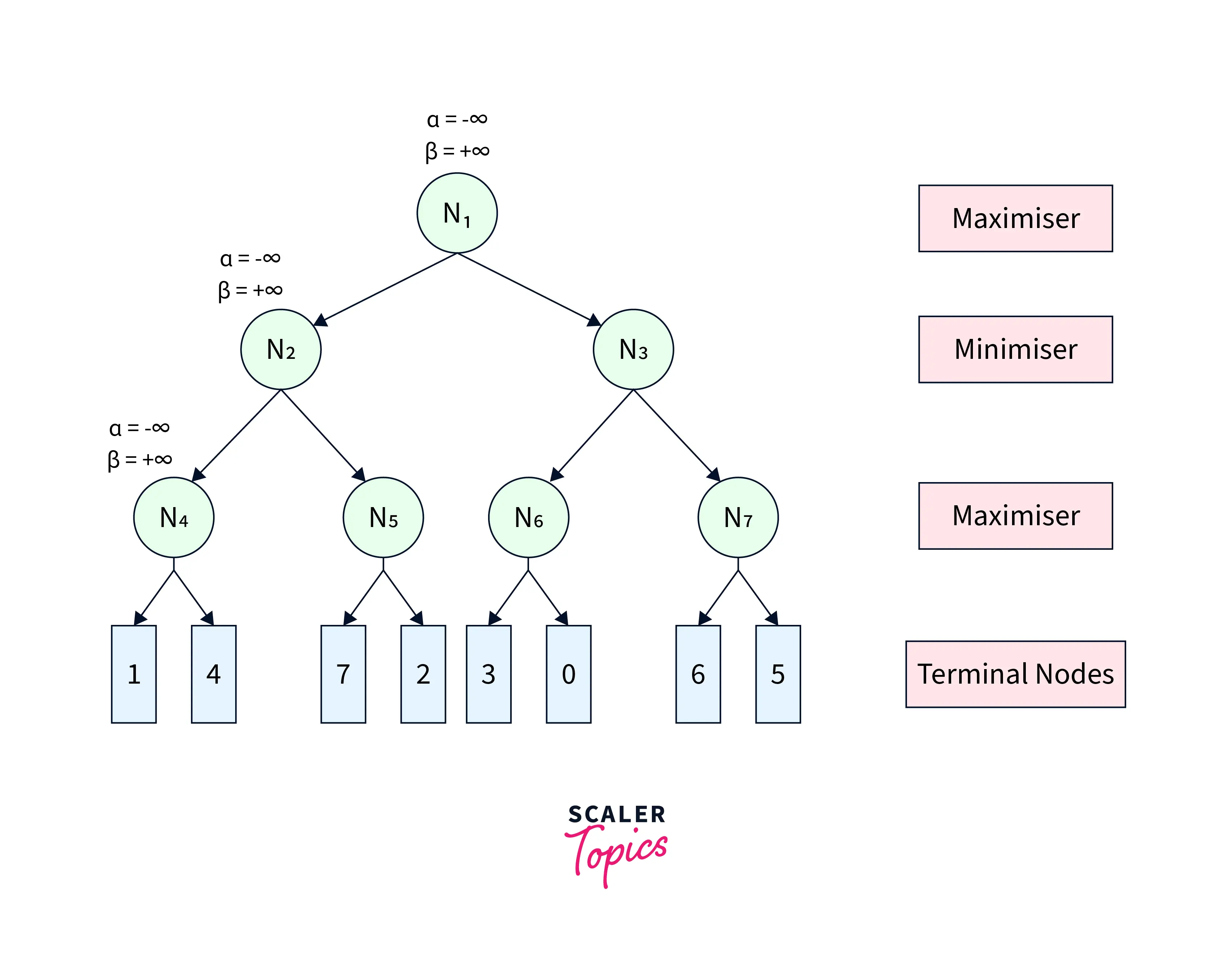

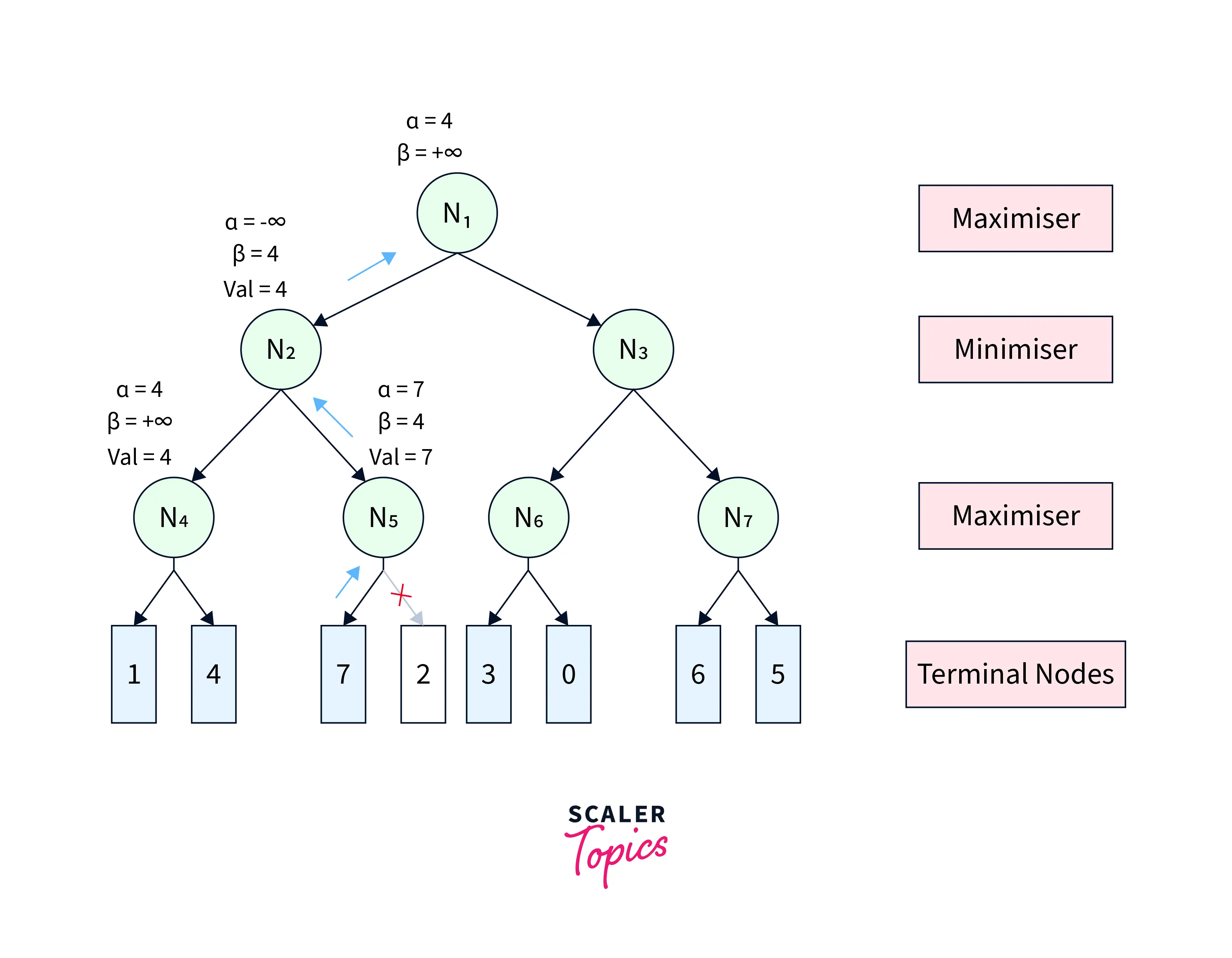

The Alpha Beta Pruning algorithm restricts the agent from visiting redundant sub-trees, leading to a faster search on average. To visualize its working, we refer to the same game tree in Figure-1. We start from the root node with α = −∞ and β = +∞. From the root, we move to its first child with the same α and β. Similarly, we move from that child to its own first child, as shown in Figure-3.

Figure-3

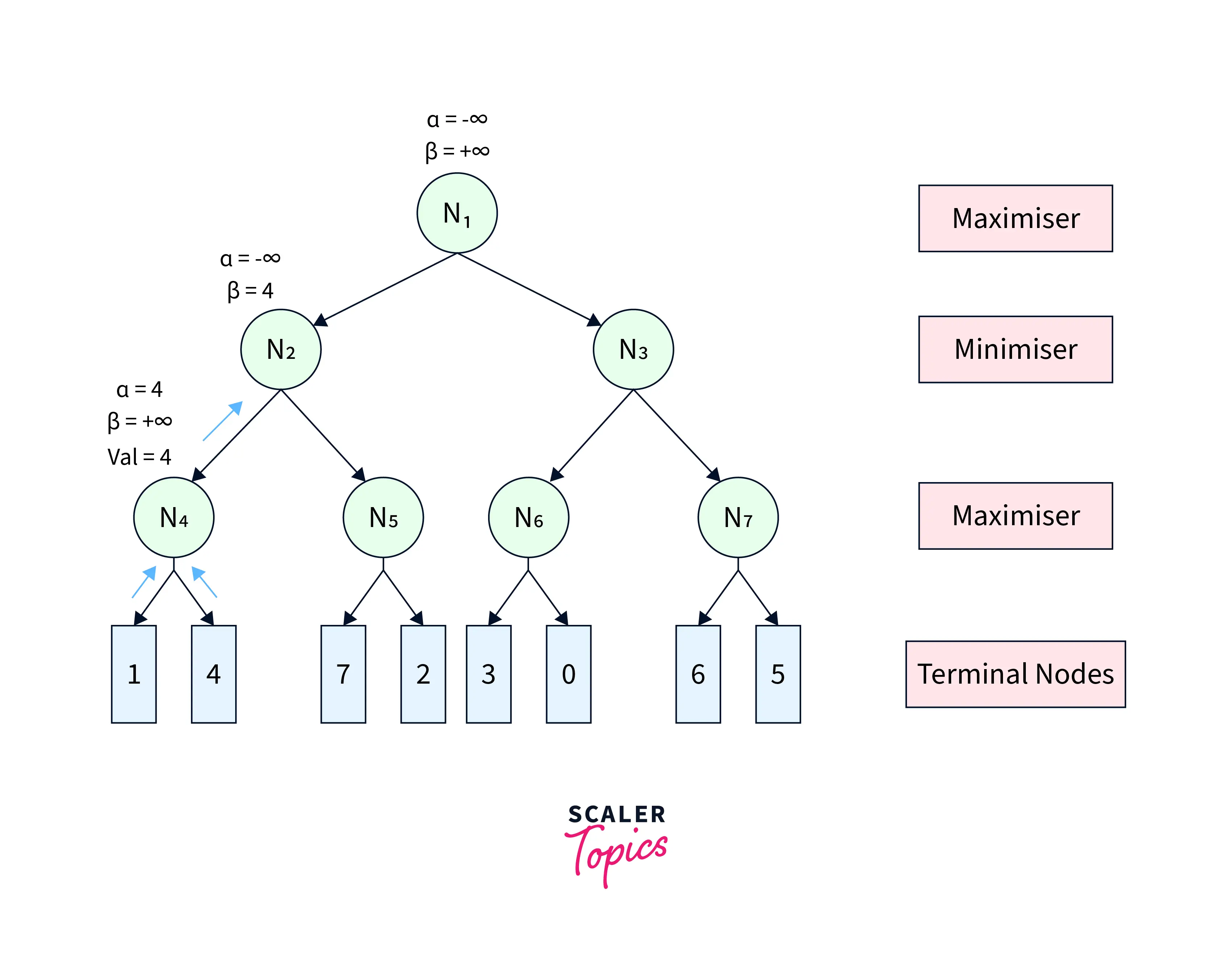

From this node, we move to its first terminal child and get back its utility. Since the node is a maximiser, we update α to the larger of its current value and this utility. The pruning condition α ≥ β remains unsatisfied. Therefore, we move to the second terminal child and get its utility. Further, we update α again. After this step, we get the node value of the maximiser and return it to its parent. Since the parent is a minimiser, it updates β to the smaller of its current value and the returned value. The game tree after this step is shown in Figure-4.

Figure-4

At the minimiser, the pruning condition is still unsatisfied. Therefore, we visit its next child node with the current α and β. At this child, we get the utility of its first terminal child. Since the child is a maximiser, we update α. Now, the pruning condition α ≥ β gets satisfied, leading to the pruning of the other child node. After that, the node utility of the maximiser is sent back to its parent, the minimiser, which updates β. Further, the node value of the minimiser is returned to its parent, the root. At the root, we update α. After this step, the game tree looks like Figure-5.

Figure-5

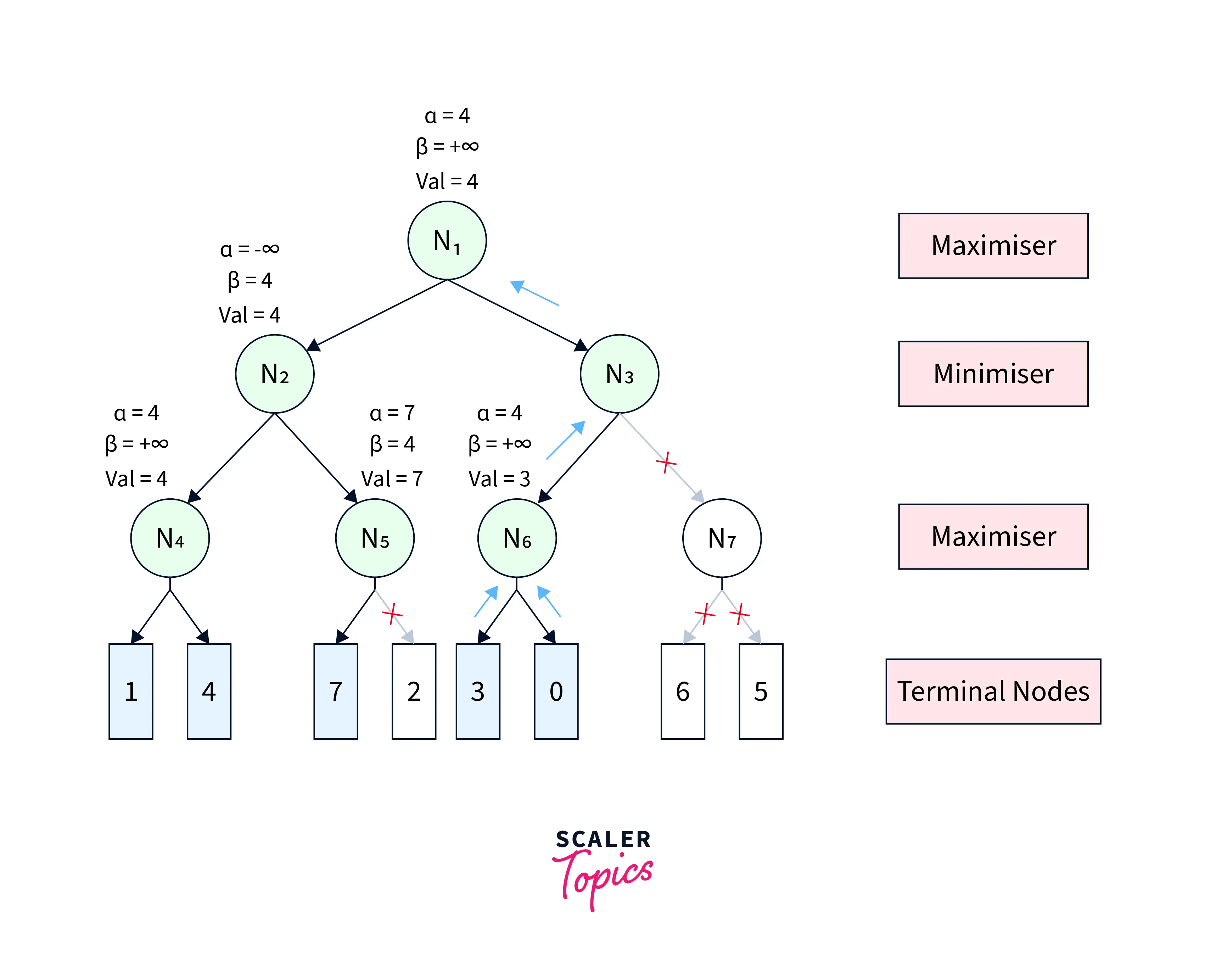

The pruning condition is still unsatisfied at the root. Therefore, we continue towards its next child, a minimiser, with the same values of α and β. Similarly, we propagate from this minimiser to its first child. At this maximiser, we get back the utility of its first terminal child and update α. Since the pruning condition is still unsatisfied, we get the utility of the second terminal child and update α again. After this, the node value of the maximiser is returned to its parent. Since the parent is a minimiser, we update β. Now, the pruning condition α ≥ β gets satisfied. Therefore, the other child sub-tree of the minimiser is pruned, and the node value of the minimiser is returned to its parent, the root. At the root, we again update α and obtain the final utility of the root. All these steps are shown in Figure-6.

Figure-6

Figure-6 depicts the final game tree of the Alpha Beta Pruning algorithm. As evident from the figure, Alpha Beta Pruning in AI yields the same result as the Minimax algorithm by visiting fewer states.
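The claim that both algorithms return the same result while Alpha Beta Pruning visits fewer states can be checked on a small example. The sketch below uses a hypothetical tree (not the one in the figures) and a hypothetical helper `search` whose `prune` flag switches the cut-off on and off. It records every terminal utility that gets evaluated.

```python
import math

def search(node, alpha, beta, maximizing, prune, visited):
    """Minimax search; with prune=True it applies alpha-beta cut-offs.

    `visited` is a list that collects every terminal utility evaluated.
    """
    if not isinstance(node, list):
        visited.append(node)
        return node
    value = -math.inf if maximizing else math.inf
    for child in node:
        result = search(child, alpha, beta, not maximizing, prune, visited)
        if maximizing:
            value = max(value, result)
            alpha = max(alpha, value)
        else:
            value = min(value, result)
            beta = min(beta, value)
        if prune and alpha >= beta:   # pruning condition
            break
    return value

tree = [[[3, 5], [6, 9]], [[1, 2], [0, -1]]]
for prune in (False, True):
    visited = []
    value = search(tree, -math.inf, math.inf, True, prune, visited)
    print(prune, value, visited)
# False 5 [3, 5, 6, 9, 1, 2, 0, -1]
# True 5 [3, 5, 6, 1, 2]
```

Both runs return the value 5, but the pruned run evaluates 5 terminal nodes instead of 8.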


Move Ordering in Alpha Beta pruning in AI

The performance of the Alpha Beta Pruning algorithm is highly dependent on the order in which the nodes are traversed. For instance,

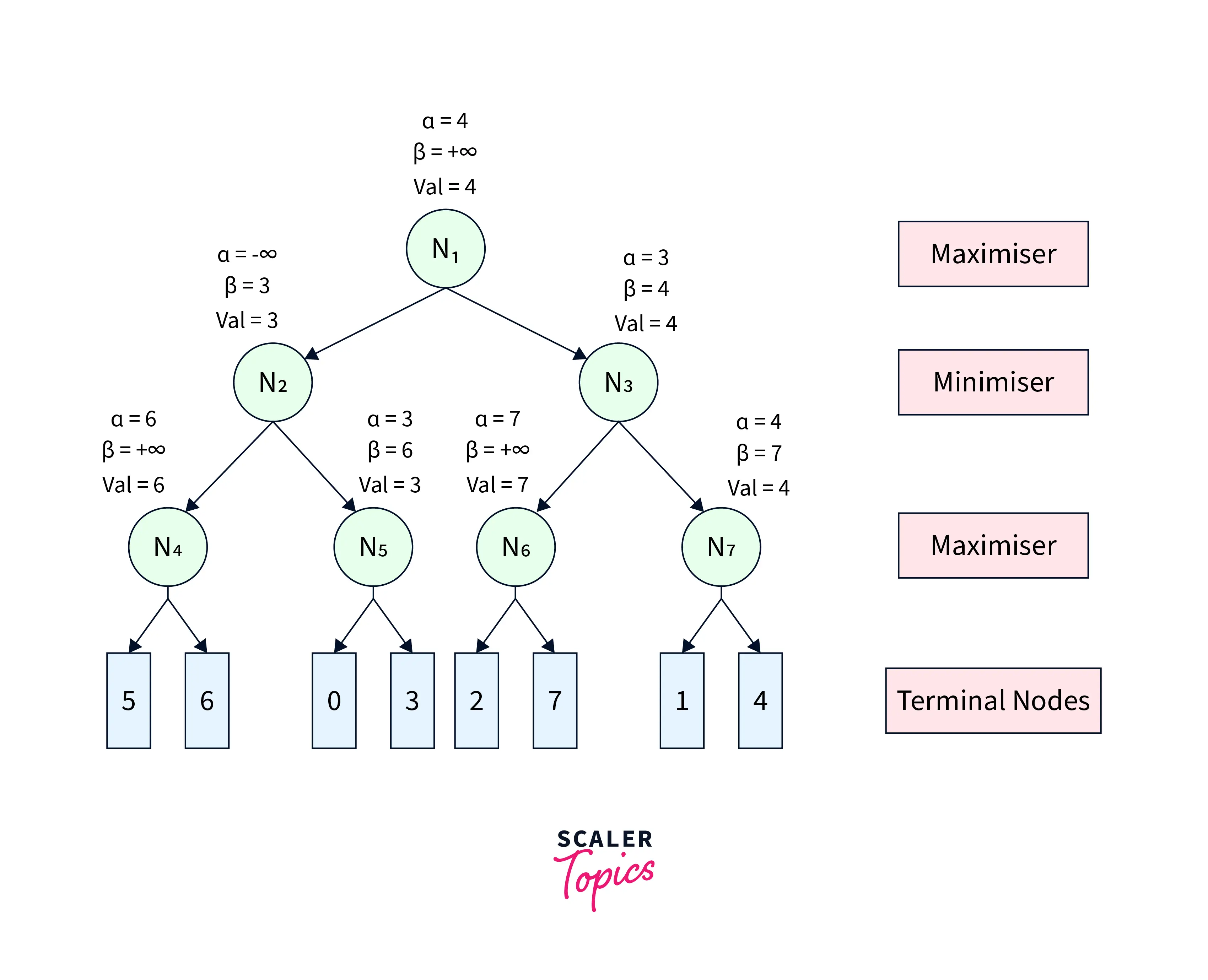

Case-1 (Worst Performance): If the best sequence of actions is on the right of the game tree, then there will be no pruning, and all the nodes will be traversed, leading to slightly worse performance than the Minimax algorithm because of the overhead of computing α and β. An example game tree can be seen in Figure-7.

Figure-7

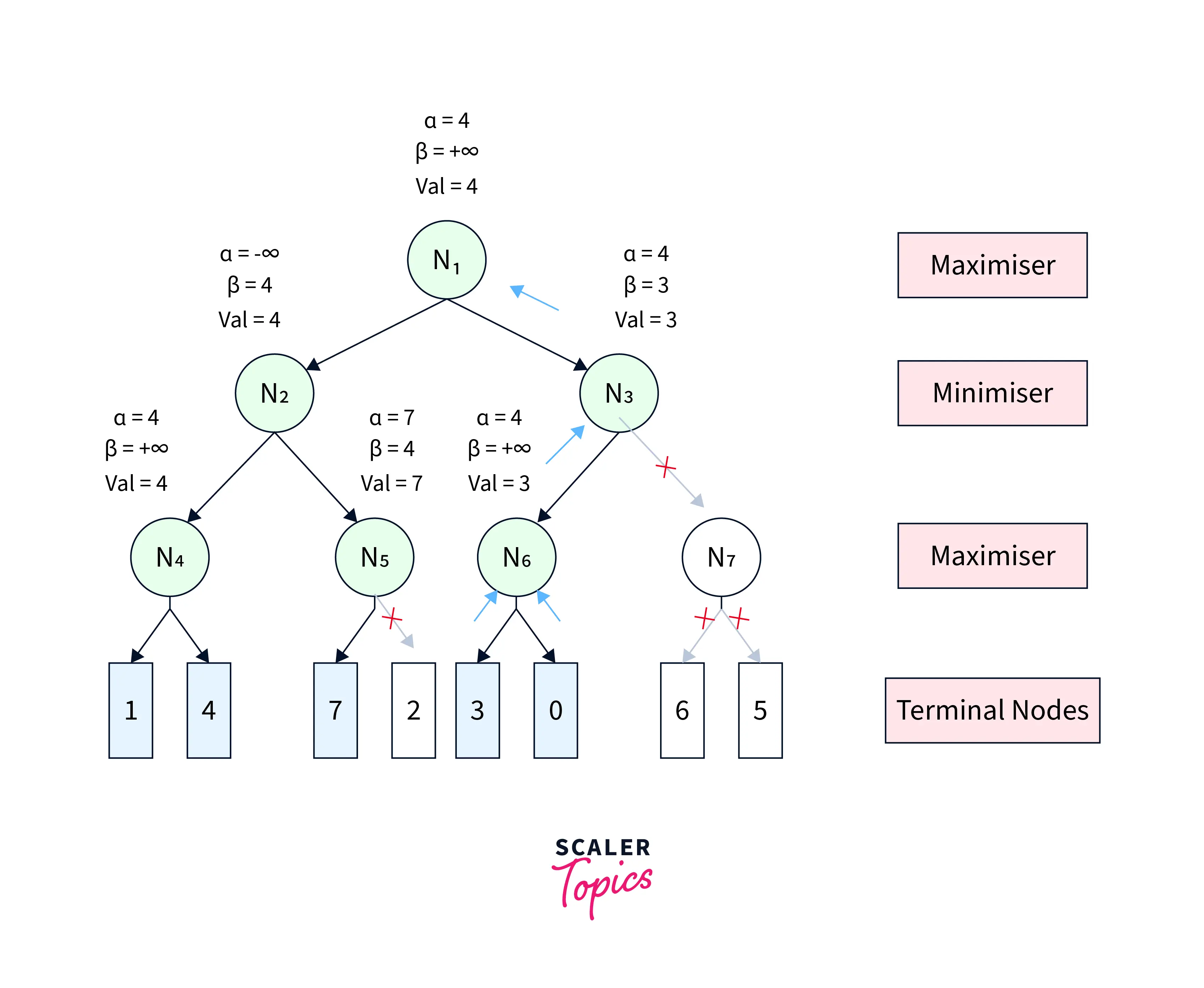

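Case-2 (Best Performance): If the best sequence of actions is on the left of the game tree, there will be a lot of pruning. With perfect ordering, a tree with branching factor b and depth d requires evaluating on the order of b^(d/2) terminal nodes instead of b^d, so the search can go roughly twice as deep in the same time and still get the optimal result. An example game tree can be seen in Figure-8.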

Figure-8
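The effect of ordering can be reproduced with a short experiment. The two trees below are hypothetical (they are not the trees of Figure-7 and Figure-8) and contain exactly the same terminal utilities, arranged once unfavourably and once favourably. `alpha_beta_count` is an illustrative name for an Alpha Beta search that also counts evaluated terminal nodes.

```python
import math

def alpha_beta_count(node, alpha=-math.inf, beta=math.inf, maximizing=True):
    """Return (value, leaves_visited) for alpha-beta search on a nested-list tree."""
    if not isinstance(node, list):
        return node, 1
    value = -math.inf if maximizing else math.inf
    visited = 0
    for child in node:
        result, count = alpha_beta_count(child, alpha, beta, not maximizing)
        visited += count
        if maximizing:
            value = max(value, result)
            alpha = max(alpha, value)
        else:
            value = min(value, result)
            beta = min(beta, value)
        if alpha >= beta:   # pruning condition
            break
    return value, visited

# Same nine utilities; the root is a maximizer, its children are minimizers.
worst_order = [[14, 5, 2], [6, 4, 2], [12, 8, 3]]   # best branch searched last
best_order = [[3, 8, 12], [2, 4, 6], [2, 5, 14]]    # best branch searched first

print(alpha_beta_count(worst_order))  # (3, 9): nothing is pruned
print(alpha_beta_count(best_order))   # (3, 5): four terminal nodes are skipped
```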

Rules to Find Good Ordering

- The best move occurs from the shallowest node.

- The game tree is ordered so that the best nodes are checked first.

- Domain knowledge is used to find the best move.

- Memoization is applied to prevent recomputation in case of repeating states.
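One way to apply the ordering rules is to sort the children of each node by a heuristic score before searching them. In the sketch below, `estimate` is a hypothetical stand-in for domain knowledge: it averages the utilities below a node, which a real game would replace with a cheap static evaluation function that does not inspect the sub-tree. The tree and all names are assumptions made for illustration.

```python
import math

def estimate(node):
    """Hypothetical stand-in for domain knowledge: average of the child estimates."""
    if not isinstance(node, list):
        return node
    return sum(estimate(child) for child in node) / len(node)

def ordered_alpha_beta(node, alpha=-math.inf, beta=math.inf, maximizing=True):
    """Alpha-beta search that visits the most promising children first.

    Returns (value, leaves_visited).
    """
    if not isinstance(node, list):
        return node, 1
    # The maximizer tries high estimates first, the minimizer low estimates first.
    children = sorted(node, key=estimate, reverse=maximizing)
    value = -math.inf if maximizing else math.inf
    visited = 0
    for child in children:
        result, count = ordered_alpha_beta(child, alpha, beta, not maximizing)
        visited += count
        if maximizing:
            value = max(value, result)
            alpha = max(alpha, value)
        else:
            value = min(value, result)
            beta = min(beta, value)
        if alpha >= beta:   # pruning condition
            break
    return value, visited

tree = [[14, 5, 2], [6, 4, 2], [12, 8, 3]]
print(ordered_alpha_beta(tree))  # (3, 5)
```

Searched in its stored order, this tree needs all 9 terminal evaluations. With the heuristic ordering, only 5 are needed and the result is unchanged.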

Conclusion

In this article, we learned about

- Significance of Alpha Beta Pruning algorithm

- Parameters of Alpha Beta Pruning in AI

- Properties and Conditions of Alpha Beta Pruning in AI

- Pseudo-code of Alpha Beta Pruning algorithm

- Working of Alpha Beta Pruning in AI

- Significance of order of nodes for Alpha Beta Pruning algorithm and rules to decide good orders