The Subsets of Artificial Intelligence

Overview

Artificial intelligence is a hot topic in both business and innovation. Many experts and business researchers describe AI and its subsets as the future. A closer look shows that they are already part of the present.

Since Alan Turing first investigated the mathematical feasibility of AI, this area of computer science has advanced significantly. It now plays a crucial role in modern society and is expanding quickly into many areas of everyday life.

Siri, Alexa, smartphones, social media feeds, and media streaming services are just a few of the ways we interact with AI. A growing number of businesses are investing in machine learning, and AI products and applications are advancing visibly.

Introduction

Artificial intelligence (AI) is a subfield of computer science concerned with building machines or computer programs that can carry out tasks that ordinarily require human intelligence. It involves creating algorithms, models, and systems that enable machines to process and analyze data, reason, make decisions, and learn from experience.

AI aims to build machines that can mimic or replicate human intelligence, enabling them to carry out tasks with a level of accuracy, efficiency, and autonomy that is on par with or even better than that of humans.

AI comprises several subsets, each focused on a different aspect of research and application. Each subset has its own problems, approaches, and applications, and together they make up a rich and multifaceted field.

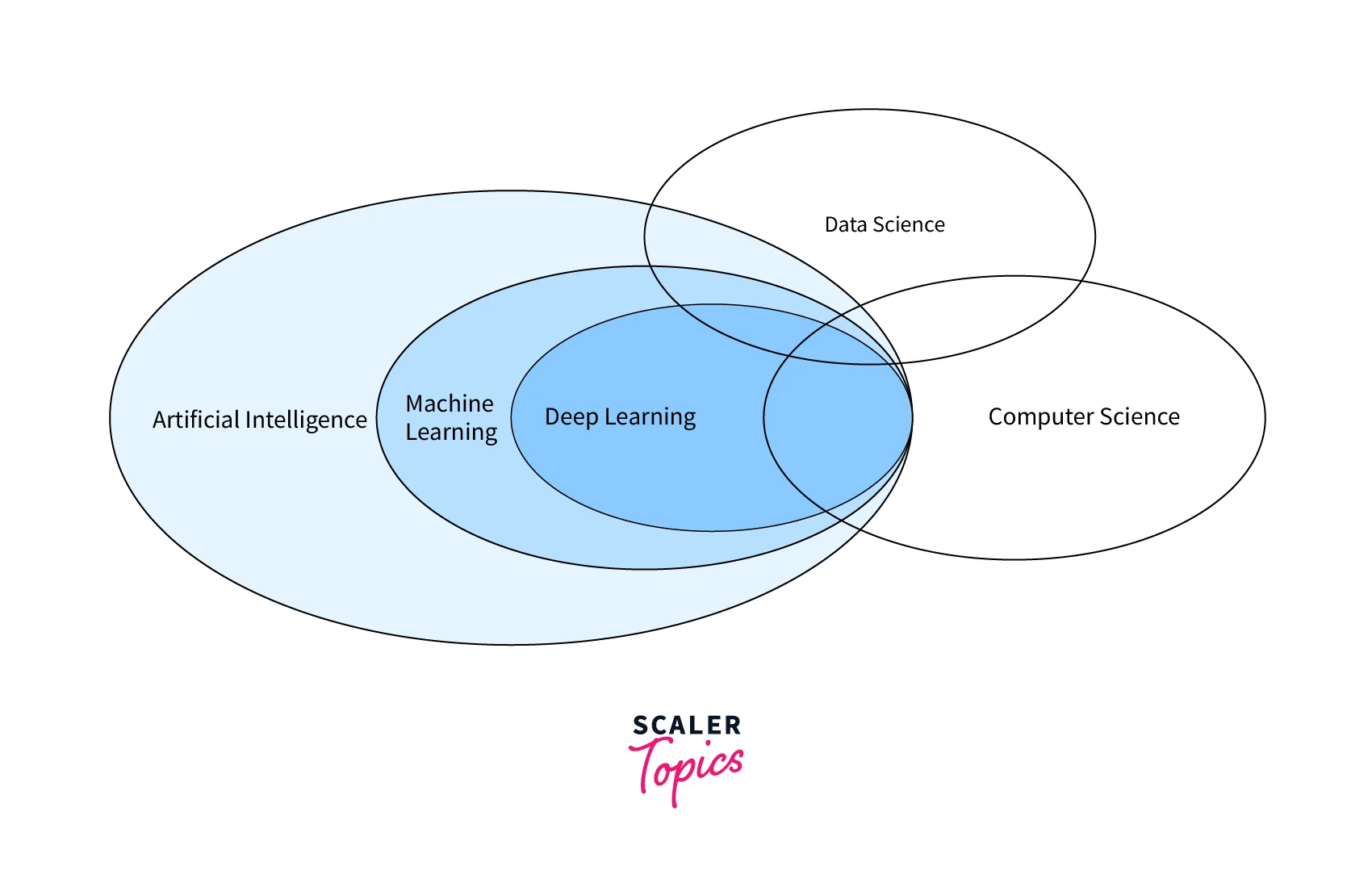

AI vs. ML vs. DL: What Is the Difference?

AI, ML, and DL are related but distinct concepts in the field of artificial intelligence. Here's a brief overview of each term:

- Artificial Intelligence (AI): AI is a broad area of computer science that focuses on building tools or software that can carry out tasks that typically require human intelligence. It encompasses a wide range of techniques, methodologies, and applications that enable machines to mimic aspects of human intelligence, including problem-solving, decision-making, learning, and perception.

- Machine Learning (ML): ML is a branch of AI that focuses on creating algorithms and models that let computers learn from data and improve over time without being explicitly programmed for each task. ML algorithms find patterns in data, make predictions, and improve their performance with experience. Applications like image recognition, natural language processing, recommendation systems, and fraud detection frequently use ML.

- Deep Learning (DL): DL is a branch of machine learning built on neural networks, which are loosely inspired by the structure and operation of the human brain. DL algorithms automatically learn hierarchical representations of data through multiple layers of interconnected neurons, which lets them handle large and complex datasets. DL has been particularly successful in image and speech recognition, so it is frequently used in applications like self-driving cars, virtual assistants, and facial recognition.

The relationships among AI, ML, and DL can be summarized as follows:

- ML provides the statistical models and algorithms that let machines learn from data and make predictions or decisions.

- DL is a subset of ML that uses deep neural networks to automatically learn features and representations from data, which makes it a powerful method for tasks like image and speech recognition.

- Beyond ML and DL, AI includes a wider range of methodologies, such as rule-based systems, expert systems, and other methods that do not rely on statistical learning from data.

- Training deep neural networks usually requires a lot of data, so DL projects depend on data pipelines that gather, preprocess, and often label that data.

- AI applications such as natural language processing, computer vision, robotics, recommendation systems, fraud detection, and more use ML and DL techniques.

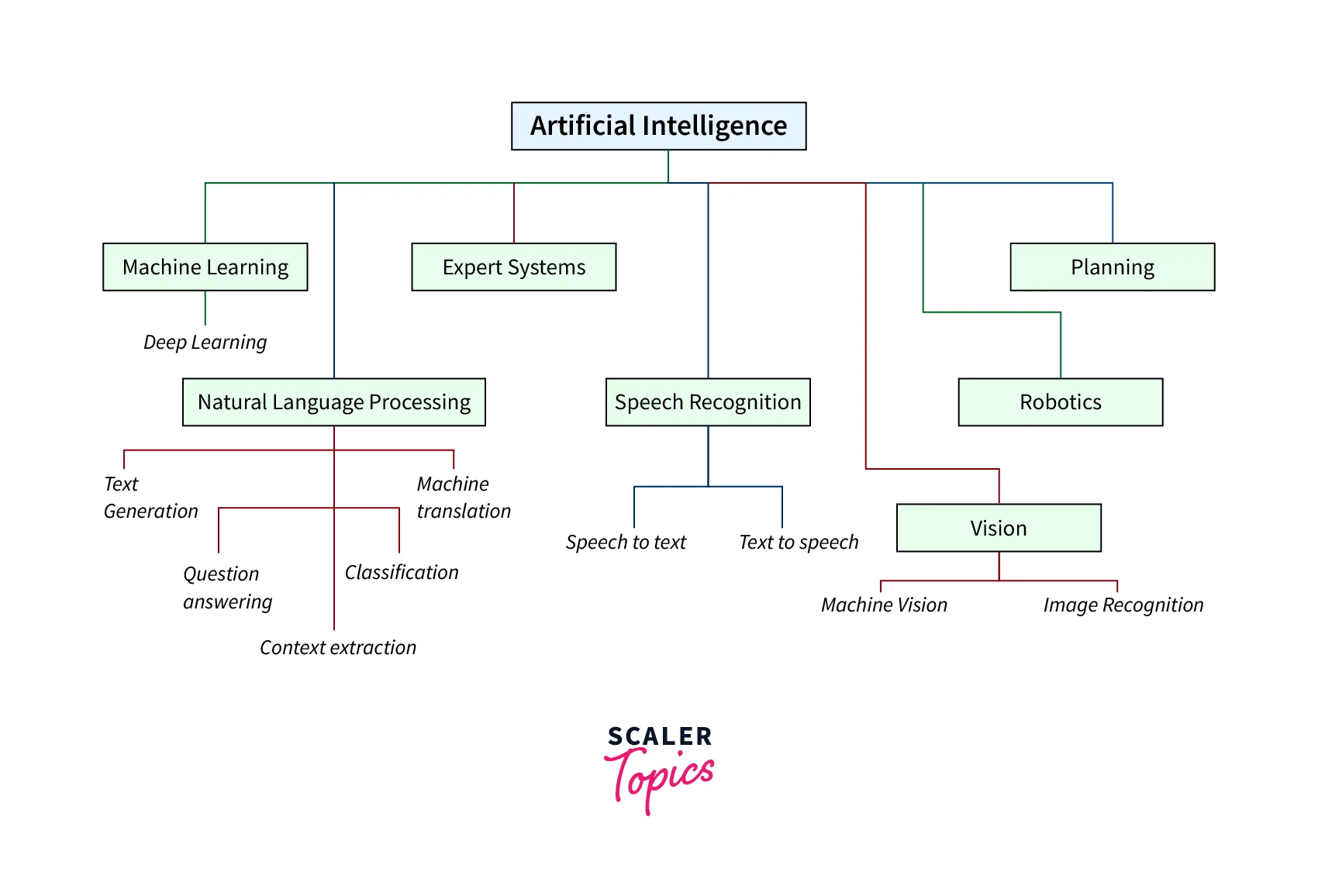

The Subsets of AI

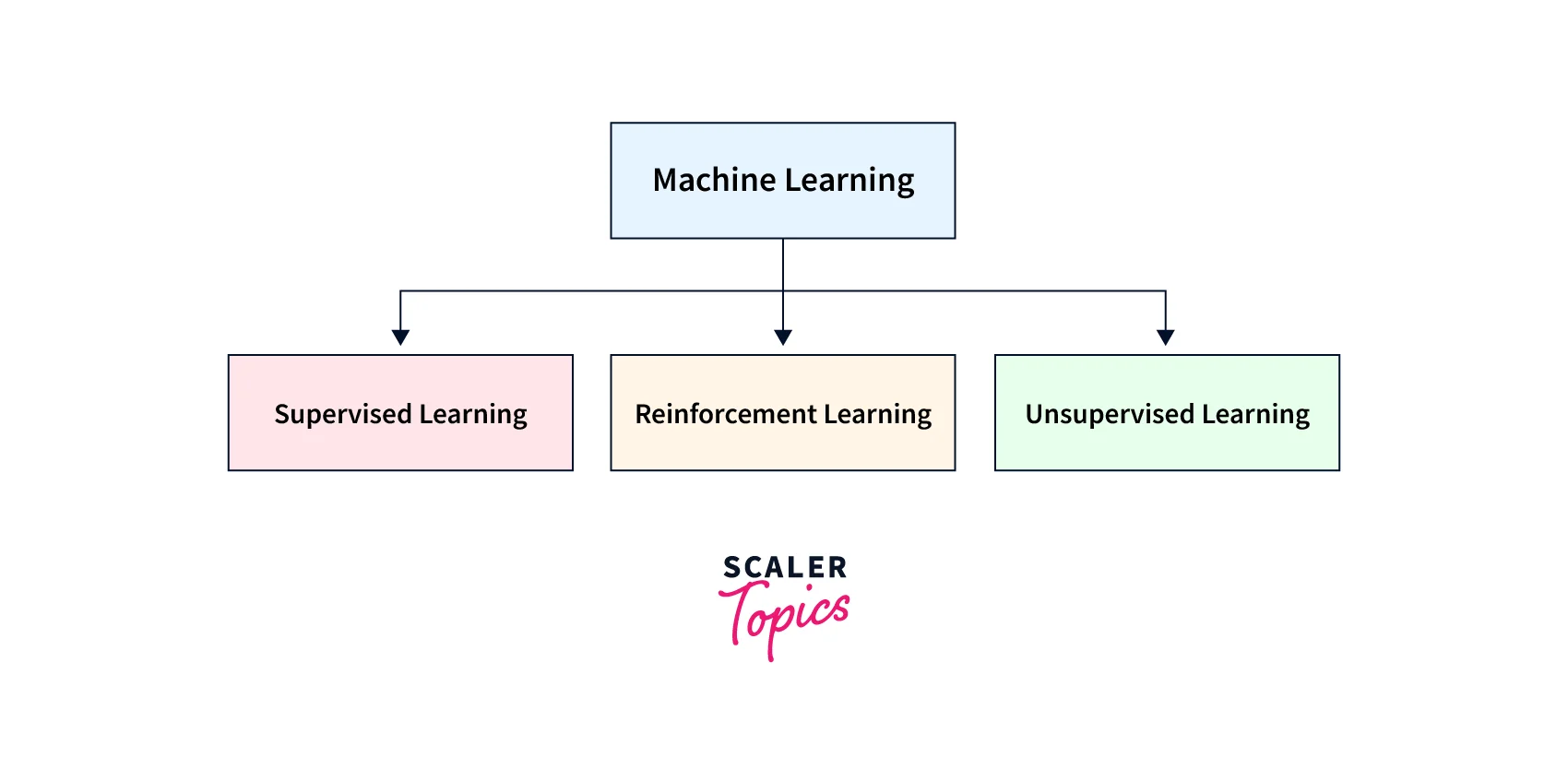

Machine Learning

Machine learning (ML) is a subset of AI that focuses on creating models and algorithms that let computers learn from data and improve their performance with experience. Based on the methods and techniques employed, ML can be further divided into various types. Some common subsets of ML include:

- Supervised Learning: In supervised learning, the model is trained on labeled data, in which each input is paired with its corresponding output label. From the patterns it finds in this data, the model learns to predict or classify new inputs. Speech recognition, sentiment analysis, and image classification are a few examples of supervised learning tasks.

- Unsupervised Learning: Unsupervised learning involves training the model on unlabeled data, with no predetermined output labels. Without explicit instruction, the model discovers patterns, relationships, or structures in the data. Clustering, anomaly detection, and dimensionality reduction are examples of unsupervised learning tasks.

- Reinforcement Learning: In reinforcement learning, a model (often called an agent) is trained to make decisions or take actions that maximize a cumulative reward signal. Learning proceeds by trial and error, with the model receiving feedback in the form of rewards or penalties depending on its actions. Robotics, gaming, and autonomous systems all frequently use reinforcement learning.

These approaches can be combined with other techniques, including deep learning (using deep neural networks for feature extraction and prediction), transfer learning (reusing previously trained models for new tasks), and semi-supervised learning (training on a small amount of labeled data together with a larger amount of unlabeled data).
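To make the difference between supervised and unsupervised learning concrete, here is a minimal sketch in plain Python. The function names (`nearest_neighbor_predict`, `two_means_1d`) and the toy numbers are illustrative assumptions, not part of any library. The first function learns from labeled points; the second groups values without being given any labels.

```python
def nearest_neighbor_predict(train_points, train_labels, query):
    """Supervised: return the label of the closest labeled training point."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    best_index = min(
        range(len(train_points)),
        key=lambda i: squared_distance(train_points[i], query),
    )
    return train_labels[best_index]


def two_means_1d(values, iterations=10):
    """Unsupervised: split 1-D values into two clusters (k-means with k=2)."""
    low, high = min(values), max(values)  # initial cluster centers
    for _ in range(iterations):
        near_low = [v for v in values if abs(v - low) <= abs(v - high)]
        near_high = [v for v in values if abs(v - low) > abs(v - high)]
        if not near_low or not near_high:
            break  # all values fell into one cluster; nothing left to refine
        low = sum(near_low) / len(near_low)
        high = sum(near_high) / len(near_high)
    return low, high


# Made-up labeled data: (width, height) of an object and its size class.
points = [(1, 1), (1, 2), (8, 8), (9, 8)]
labels = ["small", "small", "large", "large"]
print(nearest_neighbor_predict(points, labels, (2, 1)))  # prints "small"

# Made-up unlabeled data: the algorithm finds the two groups on its own.
print(two_means_1d([1, 2, 3, 10, 11, 12]))  # prints (2.0, 11.0)
```

In practice, a library such as scikit-learn would typically replace both functions; the sketch only shows where the labels enter (or do not enter) the learning process.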

Numerous industries, including healthcare, finance, marketing, transportation, agriculture, and many more, can benefit from machine learning.

It is utilized for a variety of tasks, including speech and image recognition, natural language processing, fraud detection, personalized medicine, autonomous vehicles, and predictive maintenance.

Example - House Price Prediction

A typical application of machine learning in the real estate and finance industries is the prediction of housing prices. In this example, historical data on housing prices is used to train a machine learning model to identify patterns and relationships among the factors that influence prices, including location, size, number of rooms, amenities, and neighborhood characteristics. Once trained, the model can be used to predict the price of a house from new, previously unseen data.
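As a rough illustration of this idea, the sketch below fits a straight line to a handful of made-up (size, price) pairs using ordinary least squares. It assumes a single feature (size in square meters) and invented prices; a realistic model would use many more features and far more data. The names `fit_line` and `predict_price` are hypothetical.

```python
def fit_line(sizes, prices):
    """Fit price = slope * size + intercept by ordinary least squares."""
    n = len(sizes)
    mean_size = sum(sizes) / n
    mean_price = sum(prices) / n
    covariance = sum(
        (s - mean_size) * (p - mean_price) for s, p in zip(sizes, prices)
    )
    variance = sum((s - mean_size) ** 2 for s in sizes)
    slope = covariance / variance
    intercept = mean_price - slope * mean_size
    return slope, intercept


def predict_price(size, slope, intercept):
    """Apply the fitted line to a house the model has not seen before."""
    return slope * size + intercept


# Made-up training data: size in square meters and sale price.
sizes = [50, 80, 100, 120]
prices = [150_000, 210_000, 250_000, 290_000]

slope, intercept = fit_line(sizes, prices)
print(round(predict_price(90, slope, intercept)))  # prints 230000
```

The "training" step here is the call to `fit_line`, and the "prediction" step is `predict_price` applied to a size that does not appear in the training data.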

Predicting housing prices is merely one example of how machine learning can be used in practical situations. To make data-driven predictions and decisions, machine learning techniques can also be used in other industries like marketing, healthcare, fraud detection, and more.

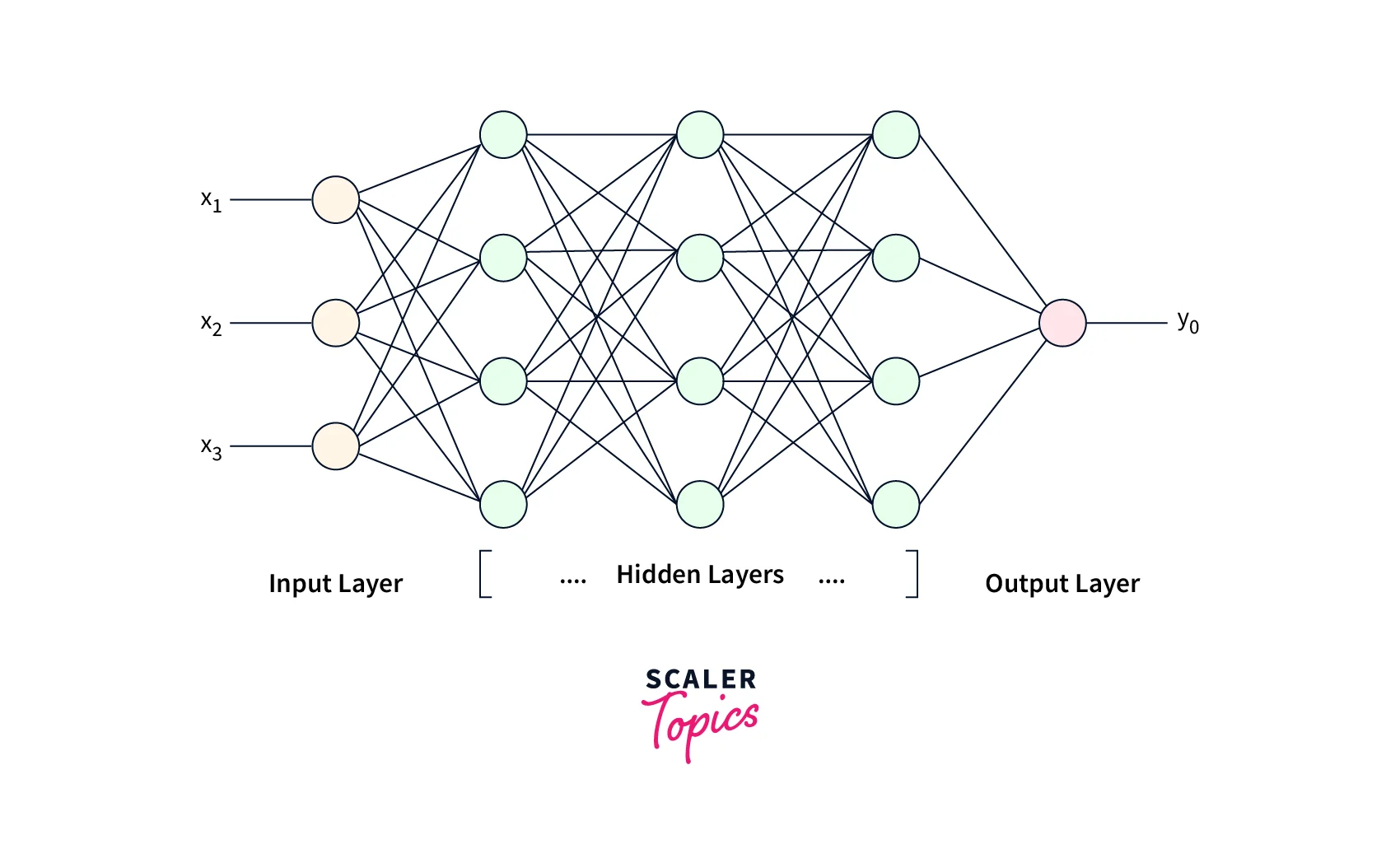

Deep Learning

Deep learning is a subset of machine learning, and therefore of AI, that focuses on artificial neural networks with many layers, which learn from data and make predictions or decisions based on it. These networks are built from simple units called artificial neurons (the perceptron is an early example) and are designed to automatically learn hierarchical representations of data through multiple layers of interconnected nodes.

Deep learning models, also known as "deep neural networks," can extract features or representations directly from raw data, without hand-engineered features. Because they discover patterns automatically from large amounts of data, these models are very good at tasks like image recognition, speech recognition, natural language processing, and many other applications.

The defining element of deep learning is the deep neural network, which is made up of multiple layers of connected nodes or neurons. Each layer extracts increasingly abstract features from the input data. In an image model, for example, the first layers learn basic features like edges and corners, while subsequent layers pick up more intricate, higher-level features that eventually lead to the final prediction or decision.
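The layered computation described above can be sketched in a few lines of plain Python. The example below is only a forward pass with hand-picked weights (no training happens here), and the names `dense`, `relu`, `forward`, and `XOR_LAYERS` are illustrative assumptions. It shows how a hidden layer lets a network compute XOR, a function that a single layer of this kind cannot represent.

```python
def relu(values):
    """Rectified linear unit: negative values become zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer: each output is a weighted sum plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the inputs through every layer, applying ReLU between layers."""
    activations = inputs
    for index, (weights, biases) in enumerate(layers):
        activations = dense(activations, weights, biases)
        if index < len(layers) - 1:  # no activation after the output layer
            activations = relu(activations)
    return activations


# Hand-picked weights (an assumption for illustration, not learned values).
# Hidden layer: h1 = relu(x1 + x2), h2 = relu(x1 + x2 - 1)
# Output layer: y = h1 - 2 * h2
XOR_LAYERS = [
    ([[1, 1], [1, 1]], [0, -1]),
    ([[1, -2]], [0]),
]

for a in (0, 1):
    for b in (0, 1):
        print(a, b, forward([a, b], XOR_LAYERS)[0])
```

In a real deep learning system, the weights would be learned from data by gradient-based training rather than written by hand.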

Many fields, including computer vision, speech recognition, natural language processing, recommendation systems, and autonomous vehicles, have been transformed by deep learning. On some benchmark tasks, deep learning models have matched or surpassed human performance. They have also driven important developments in reinforcement learning, transfer learning, and image and speech synthesis.

Deep Learning vs. Reinforcement Learning

Deep learning and reinforcement learning are both subfields of machine learning, but they differ in their approaches, objectives, and use cases.

- Learning Paradigm: Deep learning trains neural networks to learn patterns and representations from large amounts of data. It is most often used for supervised learning, where the model is trained on labeled data with known inputs and outputs, although deep networks are also trained in unsupervised and self-supervised settings. Reinforcement learning, on the other hand, trains agents to make decisions in an environment by taking actions and receiving feedback in the form of rewards or penalties. It is a distinct paradigm that is neither supervised nor unsupervised: the agent learns from its own interactions with the environment rather than from a fixed dataset. The two can also be combined, as in deep reinforcement learning, where a neural network represents the agent's policy or value function.

- Goal: The typical goal of deep learning is to train models to make predictions or classify inputs into predefined categories based on learned patterns. Deep learning models excel in tasks such as image recognition, speech recognition, and natural language processing. The goal of reinforcement learning is to train agents to take actions that maximize a cumulative reward over time in an environment with unknown dynamics. Reinforcement learning is commonly used in applications such as robotics, game playing, and autonomous driving.

- Feedback Mechanism: In supervised deep learning, feedback comes from the labels: the model's predictions are compared with the known outputs, and the resulting error is used to update the model's parameters. In reinforcement learning, the agent receives feedback in the form of rewards or penalties based on the actions it takes in the environment, and uses it to learn a policy that guides its decisions. This is a trial-and-error process in which the agent explores the environment, takes actions, and learns from the feedback to improve its decision-making over time.
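The reward-driven, trial-and-error loop described above can be illustrated with tabular Q-learning, one common reinforcement learning algorithm. The sketch below is a toy under stated assumptions: the environment is a made-up five-cell corridor with a reward only at the rightmost cell, and the function names and hyperparameter values are chosen for illustration.

```python
import random


def train_corridor_agent(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning on a 5-cell corridor; the goal is the last cell."""
    rng = random.Random(seed)
    n_states, goal = 5, 4
    # q[state][action] estimates future reward; action 0 = left, 1 = right.
    q = [[0.0, 0.0] for _ in range(n_states)]
    for _ in range(episodes):
        state = 0
        while state != goal:
            # Explore at random sometimes (and whenever the estimates tie);
            # otherwise exploit the action that currently looks best.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = max(0, state - 1) if action == 0 else state + 1
            reward = 1.0 if next_state == goal else 0.0
            best_next = 0.0 if next_state == goal else max(q[next_state])
            # Move the estimate toward reward + discounted future value.
            q[state][action] += alpha * (
                reward + gamma * best_next - q[state][action]
            )
            state = next_state
    return q


def greedy_action(q, state):
    """Return the action with the highest learned value in this state."""
    return 0 if q[state][0] > q[state][1] else 1


q_table = train_corridor_agent()
print([greedy_action(q_table, s) for s in range(4)])  # prints [1, 1, 1, 1]
```

No labeled examples are involved: the agent is never told that "right" is correct, and it only discovers this because moving right eventually leads to a reward.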

Natural Language Processing (NLP)

The goal of natural language processing (NLP), a subset of AI, is to enable computers to comprehend, interpret, and produce human language in a meaningful way. With NLP, computers can process, analyze, and manipulate text or speech data in ways that approximate how people handle language. NLP involves a range of tasks, including:

- Text Analysis: This involves processing and analyzing text data through steps such as tokenization (splitting text into words or phrases), part-of-speech tagging (identifying the grammatical roles of words in a sentence), named entity recognition (identifying and categorizing named entities such as names, dates, and locations), and sentiment analysis (determining whether the emotional tone of the text is positive, negative, or neutral).

- Language Understanding: This involves enabling computers to understand the meaning of text or speech data through techniques such as syntactic parsing (analyzing the grammatical structure of a sentence), semantic role labeling (identifying the roles that words or phrases play in a sentence, such as agent or recipient), and entity resolution (resolving references to the same entity in different parts of a text).

- Machine Translation: This involves automatically translating text from one language to another, as Google Translate and other translation tools do.

- Text Generation: This involves generating text automatically, as chatbots, language models, and text summarization tools do.

- Question Answering: This involves enabling computers to understand questions posed in natural language and provide relevant and accurate answers, as virtual assistants like Siri and Alexa do.

- Dialogue Systems: This involves building systems that can engage in interactive conversations with users, such as chatbots, customer service bots, and virtual assistants.

NLP draws on various methodologies, including rule-based methods, statistical methods, machine learning algorithms, and deep learning models.
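As a small illustration of the rule-based end of that spectrum, the sketch below tokenizes a sentence and scores its sentiment with a tiny hand-written word list. The word lists and function names are assumptions made up for this example; real sentiment systems typically rely on much larger lexicons or on trained models.

```python
import re

# Tiny hand-written lexicons (illustrative assumptions, not a real resource).
POSITIVE_WORDS = {"good", "great", "love", "excellent"}
NEGATIVE_WORDS = {"bad", "poor", "hate", "terrible"}


def tokenize(text):
    """Split text into lowercase word tokens, dropping punctuation."""
    return re.findall(r"[a-z']+", text.lower())


def sentiment(text):
    """Label text by counting positive and negative words."""
    tokens = tokenize(text)
    score = sum(t in POSITIVE_WORDS for t in tokens) - sum(
        t in NEGATIVE_WORDS for t in tokens
    )
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(tokenize("I love this phone!"))         # ['i', 'love', 'this', 'phone']
print(sentiment("I love this phone!"))        # positive
print(sentiment("The battery is terrible."))  # negative
```

A word-counting rule like this cannot handle negation ("not good") or sarcasm, which is one reason statistical and deep learning methods are widely used for the same task.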

Information retrieval, sentiment analysis, machine translation, speech recognition, voice assistants, text-to-speech, language generation, chatbots, social media analysis, and many other areas have practical uses for NLP. NLP is still developing, driven by improvements in AI and machine learning, and has a big impact on businesses, industries, and daily life by facilitating more natural and intuitive interactions between people and computers.

Expert Systems

An expert system, also referred to as a knowledge-based system, is a type of AI software that simulates the decision-making capabilities of a human expert in a particular domain or field. Expert systems are built to capture and represent human expertise so that they can reason about complex problems, analyze them, and offer recommendations. Expert systems typically consist of three main components:

- Knowledge Base: This is a repository that stores the information, rules, facts, and heuristics relevant to the domain or problem being addressed. The knowledge base is typically created with the help of domain experts and can be represented in various formats, such as a database, a set of rules, an ontology, or a semantic network.

- Inference Engine: This is the reasoning component of the expert system. It processes the information stored in the knowledge base and applies inference rules and algorithms to draw conclusions, make decisions, and provide solutions or recommendations. The inference engine uses reasoning mechanisms such as forward chaining (data-driven: starting from known facts and deriving new ones) or backward chaining (goal-driven: starting from a hypothesis and working back to the facts that support it).

- User Interface: This is the interface that allows users to interact with the expert system and input their queries or problems. It can take the form of a graphical user interface (GUI), a command-line interface (CLI), or another input/output mechanism.
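The interplay between a knowledge base and an inference engine can be sketched with a minimal forward-chaining loop. The rules below (a made-up car-diagnosis example) and the function name `forward_chain` are illustrative assumptions; production expert system shells are considerably more elaborate.

```python
def forward_chain(facts, rules):
    """Repeatedly apply rules until no new facts can be derived.

    Each rule is a pair (conditions, conclusion): when every condition is a
    known fact, the conclusion is added to the set of known facts.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conclusion not in known and all(c in known for c in conditions):
                known.add(conclusion)
                changed = True
    return known


# Knowledge base: hypothetical rules written for this example only.
RULES = [
    (("engine_wont_start", "lights_dim"), "battery_flat"),
    (("battery_flat",), "recommend_charging_battery"),
]

# Facts supplied by the user through whatever interface the system offers.
observed = {"engine_wont_start", "lights_dim"}
print(sorted(forward_chain(observed, RULES)))
```

Here `RULES` plays the role of the knowledge base, `forward_chain` is the inference engine, and the `observed` set stands in for input collected by the user interface.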

Expert systems have been used in healthcare, finance, manufacturing, engineering, and customer service, particularly for diagnostics and for decision-making in complex domains.

However, they also have drawbacks. They depend on the accuracy and completeness of the knowledge base, classical designs cannot learn from or adapt to new data on their own, and they can only use the domain-specific information that is explicitly encoded in the system.

Expert systems are a valuable type of AI technology that can support decision-making, solve problems, and manage knowledge in a variety of domains, but they need careful development, validation, and upkeep to guarantee their efficacy and dependability.

Robotics

Robotics is the engineering and science discipline that covers the design, manufacture, operation, and application of robots. As a subset of AI, it is concerned with giving robots the ability to carry out tasks that would typically require human intelligence. AI can improve the performance and capabilities of robots in several areas. Here are some examples:

- Perception and Sensing: AI can give robots better perception and sensing abilities. For instance, AI-powered computer vision techniques let robots perceive and interpret visual information from their surroundings through object recognition, scene understanding, and depth perception. As a result, robots can navigate and interact with their surroundings more successfully.

- Planning and Control: AI can be used in robotics for planning and control tasks. Robots can use AI algorithms to analyze sensor data and decide how to move and manipulate objects in their environment. Reinforcement learning, a type of machine learning, can be used to train robots to learn effective actions based on rewards and penalties, enabling them to plan and control their actions autonomously in dynamic and complex environments.

- Human-Robot Interaction: Robotics can make use of AI to enable intuitive and natural interactions between humans and machines. For instance, AI-powered natural language processing techniques can let robots comprehend and respond to human commands or requests. AI is frequently used in social robotics, which focuses on interactions between humans and robots, to help robots recognize and react to human emotions, gestures, and social cues.

- Autonomous Navigation: AI can be used in robotics to enable autonomous navigation, allowing robots to move through their environment without human intervention. This can involve AI algorithms for mapping and localization, path planning, and obstacle avoidance. Autonomous vehicles, such as self-driving cars and drones, rely heavily on AI for navigation and decision-making.

- Medical Robotics: Robotics can use AI to improve medical procedures and interventions. For example, surgical robots can use AI algorithms to help surgeons manipulate instruments with dexterity and precision, supporting minimally invasive surgeries with increased safety. Medical robotics can also use AI for tasks like diagnosis, patient monitoring, and rehabilitation.

- Swarm Robotics: AI can be used in swarm robotics, which involves coordinating multiple robots so that they work together as a team toward a common goal. AI algorithms can enable robots to communicate, cooperate, and adapt to changing environments to complete tasks efficiently. Swarm robotics finds applications in areas such as disaster response, exploration, and agriculture.

These are just some examples of how AI can be used in subsets of robotics. The integration of AI and robotics has the potential to revolutionize many industries and sectors, enabling robots to perform complex tasks with greater autonomy, adaptability, and efficiency.
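Path planning, mentioned above under autonomous navigation, can be illustrated with a breadth-first search over a small occupancy grid. This is a simplified sketch: the grid, the function name `shortest_path`, and the four-direction movement model are assumptions, and real robots typically plan in continuous space with uncertain sensor data.

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Breadth-first search on a grid where 0 is free space and 1 is an obstacle.

    Returns the list of (row, col) cells from start to goal, or None if the
    goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    queue = deque([start])
    came_from = {start: None}  # also serves as the visited set
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


# A made-up map: the wall in the middle row forces a detour to the right.
GRID = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
print(shortest_path(GRID, (0, 0), (2, 0)))
```

Breadth-first search finds a path with the fewest moves when every move costs the same; planners for weighted maps usually use algorithms such as Dijkstra's or A* instead.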

Neural Networks

Artificial neural networks (ANNs), also referred to as neural networks, are a category of computational models loosely inspired by the structure and operation of the human brain. They are a subset of AI and the foundation of deep learning, and they are frequently used for processing and analyzing data, spotting patterns, and making predictions in a variety of applications.

Neural networks are made up of interconnected nodes, or neurons, arranged in layers. Each node takes in data, processes it, and generates an output signal that is sent over weighted connections to other nodes. During a process known as "training," the weights of these connections are adjusted so that the network's performance on example data, often labeled data, improves over time.

Based on their architecture, neural networks can be divided into various types, including feedforward neural networks, convolutional neural networks (CNNs), and recurrent neural networks (RNNs), of which long short-term memory (LSTM) networks are a widely used variant. Each type has unique traits and suits different kinds of data and tasks.

Neural networks have been applied to financial prediction, autonomous vehicles, natural language processing, drug discovery, image and speech recognition, and recommendation systems. They continue to be an active area of AI research and development and have demonstrated great success in a variety of fields.
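The idea of adjusting connection weights during training can be shown with the classic perceptron learning rule applied to a single neuron. The sketch below learns the logical AND function from four labeled examples; the function names and the choice of task are illustrative assumptions, and modern networks are trained with gradient-based methods rather than this rule.

```python
def predict(weights, bias, inputs):
    """A single neuron: weighted sum of inputs, then a step activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Adjust weights after each mistake (perceptron learning rule)."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or 1
            weights = [
                w + learning_rate * error * x for w, x in zip(weights, inputs)
            ]
            bias += learning_rate * error
    return weights, bias


# Labeled training data for logical AND.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_SAMPLES)
print([predict(weights, bias, inputs) for inputs, _ in AND_SAMPLES])
# prints [0, 0, 0, 1]
```

Each wrong prediction nudges the weights toward the correct answer, and because AND is linearly separable, the rule settles on weights that classify all four examples correctly.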

Machine Vision

Machine vision, also referred to as computer vision, is a subset of AI that uses computers and digital processing to give machines the ability to interpret, examine, and comprehend visual data from the real world in a manner comparable to human vision.

Cameras or other sensors are typically used by machine vision systems to capture visual data, which is then processed and analyzed using a variety of algorithms and techniques. These systems are capable of a variety of tasks, including 3D reconstruction, object detection, image segmentation, tracking, and image recognition.
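Many of these tasks build on low-level image filtering. As one hedged illustration, the sketch below slides a 3x3 Sobel kernel over a tiny grayscale image to highlight a vertical edge. The image values and the function name `apply_kernel` are assumptions for this example, and the code performs cross-correlation (the kernel is not flipped), which is the convention most vision libraries use for this operation.

```python
# Sobel kernel that responds to left-to-right changes in brightness.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]


def apply_kernel(image, kernel):
    """Slide the kernel over the image (no padding) and sum the products."""
    k = len(kernel)
    out_rows = len(image) - k + 1
    out_cols = len(image[0]) - k + 1
    return [
        [
            sum(
                kernel[i][j] * image[r + i][c + j]
                for i in range(k)
                for j in range(k)
            )
            for c in range(out_cols)
        ]
        for r in range(out_rows)
    ]


# Made-up 3x6 grayscale image: dark on the left, bright on the right.
IMAGE = [
    [0, 0, 0, 10, 10, 10],
    [0, 0, 0, 10, 10, 10],
    [0, 0, 0, 10, 10, 10],
]

print(apply_kernel(IMAGE, SOBEL_X))  # prints [[0, 40, 40, 0]]
```

The output is zero over the flat regions and large where the brightness changes, which is how an edge detector localizes the boundary between the dark and bright halves.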

Numerous industries, including manufacturing, automotive, healthcare, agriculture, robotics, surveillance, entertainment, and retail, among others, can benefit from machine vision. Machine vision can be used, for instance, in manufacturing to inspect products for flaws, measure dimensions, and direct robotic systems. Machine vision can support surgeries, patient condition monitoring, and diagnostic imaging in the healthcare industry.

Machine vision in agriculture can assist with yield estimation, pest detection, and crop monitoring. Machine vision can be applied in retail for augmented reality, facial recognition, and product recognition.

Thanks to advances in machine vision powered by deep learning algorithms and more powerful computers, machines can now match or even surpass human accuracy on some narrowly defined visual tasks, although general visual understanding comparable to a person's remains an open challenge.

Machine vision continues to be an active area of research and development, with numerous applications and the potential for further advancements in the future.

Speech Recognition

Speech recognition technology, also known as automatic speech recognition (ASR), allows computers to convert spoken words and phrases into written text. It is a subset of AI that is closely related to natural language processing (NLP), which focuses on how computers and human language interact.

Speech recognition systems process and analyze audio signals using a variety of algorithms and techniques before converting them into text. It is possible to use these systems for a variety of purposes, such as speech-to-text applications, voice assistants, call center automation, transcription services, and more. They can also handle different languages, accents, and speaking styles.

The speech recognition process involves several steps. The system starts by capturing audio input from a microphone or another audio source. The audio signal is then preprocessed to reduce noise, normalize the volume, and make other improvements. Next, the system performs feature extraction, which involves extracting pertinent features from the audio signal, such as spectral and temporal characteristics. These features are then fed into the speech recognition algorithm, which converts the spoken words into text using statistical models, machine learning, or deep learning methods.
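The feature extraction step can be sketched with two very simple temporal features: short-time energy and zero-crossing count, each computed per frame of audio. This is a deliberately simplified illustration with made-up sample values and hypothetical function names; practical systems usually rely on richer spectral features such as mel-frequency cepstral coefficients (MFCCs).

```python
def frame_signal(samples, frame_size, hop):
    """Cut the signal into frames of frame_size samples, hop samples apart."""
    return [
        samples[i:i + frame_size]
        for i in range(0, len(samples) - frame_size + 1, hop)
    ]


def short_time_energy(frame):
    """Average squared amplitude: high for loud frames, near zero for silence."""
    return sum(s * s for s in frame) / len(frame)


def zero_crossings(frame):
    """Count sign changes between neighboring samples in the frame."""
    return sum(1 for a, b in zip(frame, frame[1:]) if (a < 0) != (b < 0))


# Made-up signal: four samples of silence, then a rapidly alternating tone.
signal = [0, 0, 0, 0, 1, -1, 1, -1]

for frame in frame_signal(signal, frame_size=4, hop=4):
    print(short_time_energy(frame), zero_crossings(frame))
```

The first frame yields zero energy and no crossings, while the second yields high energy and several crossings, so even these two numbers are enough to separate silence from sound in this toy signal.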

The accuracy and performance of speech recognition systems have significantly improved in recent years, thanks in large part to the development of deep learning techniques like recurrent neural networks (RNNs), convolutional neural networks (CNNs), and transformer-based models.

Speech recognition has many uses, including voice assistants like Siri and Alexa, transcription services, automotive systems, and more. Efforts to increase accuracy, robustness, and usability in real-world scenarios are ongoing, and the field remains a very active area of research and development.

Conclusion

Here are some key points on subsets in AI:

- In this context, a subset of AI is a subfield or specialized area within the broader discipline, with its own techniques, algorithms, and applications.

- Subsets in AI can include specific areas or applications such as natural language processing (NLP), computer vision, machine learning, deep learning, robotics, and more.

- Each subset of AI has its unique characteristics, methodologies, and challenges, and may require specialized expertise for implementation and optimization.

- Subsets in AI are often used in combination to build comprehensive AI systems that can perform tasks, solve problems, or make decisions in various domains such as healthcare, finance, marketing, transportation, and more.

- Researchers, practitioners, and businesses specializing in AI often focus on specific subsets to advance the field and develop specialized solutions for specific use cases.

- Subsets in AI continue to evolve rapidly as new techniques, algorithms, and technologies are developed, contributing to the advancement and innovation in the field of artificial intelligence.