What is Agentic AI? Definition & Key Concepts

Agentic AI refers to artificial intelligence systems designed to pursue complex goals autonomously with minimal human intervention. Unlike traditional AI that only responds to prompts, agentic AI plans, executes actions, learns from its environment, and adapts its strategy to achieve a designated objective.

Introduction to Agentic Systems

Artificial intelligence has evolved through distinct paradigms, transitioning from rule-based expert systems to statistical machine learning, and recently, to highly capable generative models. However, standard Large Language Models (LLMs) function primarily as reactive text generators. They wait for a prompt, compute a probability distribution over a vocabulary, and output a response based on learned weights. While highly proficient in natural language processing, these reactive models lack the intrinsic ability to execute multi-step workflows or interact directly with external environments.

Agentic AI represents the architectural leap from passive response generation to active problem-solving. By wrapping an underlying foundation model in a cognitive architecture (memory, tool access, and reasoning loops), agentic systems bridge the gap between static knowledge retrieval and dynamic task execution. Understanding this shift is essential for software engineers, because building agentic AI requires integrating machine learning components with traditional backend systems, APIs, and state management.

Agentic AI Definition and Meaning

To grasp the full meaning of agentic AI, one must examine the concept of "agency" within the context of computer science. In multi-agent systems and distributed computing, an agent is an autonomous entity that observes its environment through sensors and acts upon it through effectors to achieve specific goals.

Applied to modern neural networks, the term describes a system that uses a large language model (or a multimodal model) as its central reasoning component. This component is tasked not just with generating text, but with parsing a high-level objective, decomposing that objective into actionable sub-tasks, and orchestrating external tools to complete them.

The meaning of agentic AI is inherently tied to three core pillars:

- Autonomy: The system operates without requiring human intervention for every micro-decision.

- Goal-Orientation: The system evaluates its current state against a target state and continuously seeks paths to close the gap.

- Environmental Interaction: The system can read from and write to external data sources (e.g., executing SQL queries, calling REST APIs, manipulating file systems).

How is Agentic AI Different from Generative AI?

The distinction between generative AI and agentic AI is a critical architectural boundary. While generative AI is a subset of deep learning focused on creating new content (text, images, code) from latent space distributions, agentic AI is a broader framework that utilizes generative models as a reasoning engine.

A generative model call is stateless and single-turn: the model only sees prior context if the caller includes that history in the prompt. An agentic system, by contrast, maintains its own state, manages what goes into the context window, and drives the iteration loop itself.

| Feature | Generative AI | Agentic AI |

|---|---|---|

| Core Objective | To produce coherent outputs based on a single prompt. | To achieve a specified goal over multiple steps. |

| Autonomy | Low. Requires human prompts for every output. | High. Generates its own subsequent prompts/actions. |

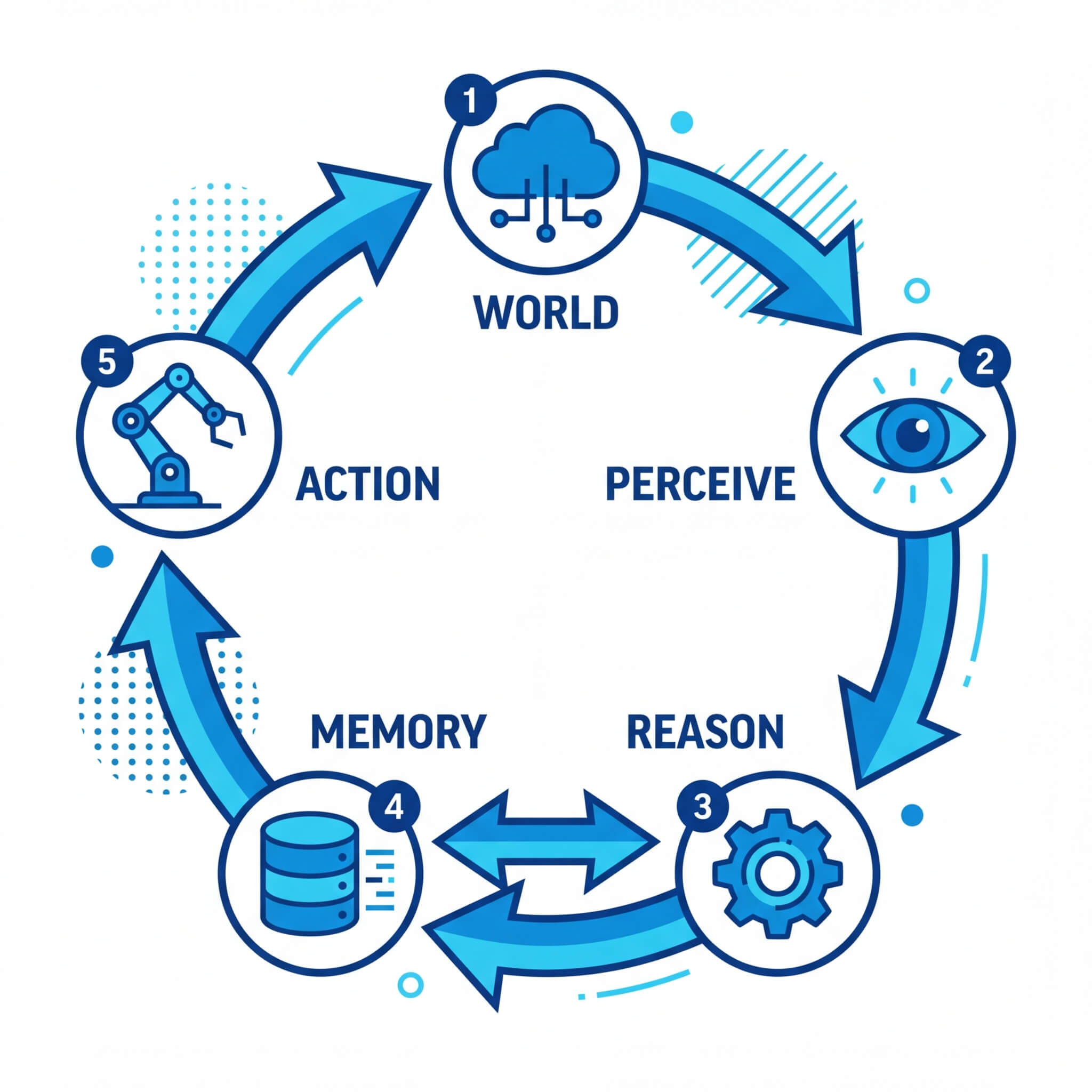

| Execution Flow | Linear (Input → Process → Output). | Cyclic (Observe → Reason → Act → Observe). |

| Tool Integration | Limited or non-existent out-of-the-box. | Native. Designed to trigger APIs, scripts, and databases. |

| State Management | Stateless (relies on the user to supply history). | Stateful (maintains internal memory buffers). |

| Error Handling | Fails silently or hallucinates incorrect answers. | Self-corrects upon receiving error logs from tools. |

Core Characteristics of Agentic AI

Developing an agentic system requires understanding the characteristics that distinguish an autonomous agent from a simple scripting pipeline. These characteristics define the functional capabilities of the software architecture.

Reasoning and Planning

An agentic system does not execute actions randomly. It utilizes advanced prompting frameworks—such as Chain of Thought (CoT), Tree of Thoughts (ToT), or the ReAct (Reason and Act) paradigm—to break down complex tasks. The reasoning engine formulates a step-by-step plan, evaluates edge cases, and adjusts its trajectory if an intermediate step fails.

Persistent Memory Mechanisms

Standard LLMs suffer from context window limitations. Agentic AI circumvents this through multi-tiered memory systems. Short-term memory relies on managing the immediate context window, while long-term memory leverages external vector databases (such as Pinecone, Milvus, or pgvector). Information is embedded into high-dimensional vectors, and relevance is calculated using mathematical operations like cosine similarity:

similarity(A, B) = (A · B) / (||A|| ||B||)

This allows the agent to recall past actions, user preferences, and historical tool outputs.
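The cosine-similarity recall described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular vector database works internally: `ToyMemory` is a hypothetical in-memory stand-in, and the three-dimensional embeddings are made up by hand (a real system would obtain them from an embedding model).

```python
import math

def cosine_similarity(a, b):
    """Return (a . b) / (||a|| * ||b||) for two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # convention chosen for this sketch: a zero vector matches nothing
    return dot / (norm_a * norm_b)

class ToyMemory:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self):
        self._items = []  # list of (embedding, text) pairs

    def add(self, embedding, text):
        self._items.append((list(embedding), text))

    def recall(self, query, k=1):
        """Return the k stored texts whose embeddings are most similar to the query."""
        ranked = sorted(
            self._items,
            key=lambda item: cosine_similarity(query, item[0]),
            reverse=True,
        )
        return [text for _, text in ranked[:k]]

# Hand-made embeddings, for illustration only.
memory = ToyMemory()
memory.add([1.0, 0.0, 0.0], "user prefers metric units")
memory.add([0.0, 1.0, 0.0], "last deploy failed on step 3")
memory.add([0.9, 0.1, 0.0], "user is based in Berlin")

top = memory.recall([1.0, 0.0, 0.0], k=2)
print(top)
```

A linear scan like this is fine for a handful of memories; production vector stores typically rely on approximate nearest-neighbor indexes instead.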

Action via Tool Invocation

The ability to interact with the world is achieved through "tool calling" or "function calling." The model is instructed on the JSON schemas of available APIs. When the agent reasons that it needs external data, it outputs a structured JSON payload targeting a specific function. A middleware layer parses this JSON, executes the code, and returns the result to the agent's observation window.
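The middleware step can be sketched as a small dispatcher. The payload shape used here (`{"name": ..., "arguments": {...}}`) and both tools are assumptions made for illustration; each model provider defines its own exact format.

```python
import json

def get_weather(city: str) -> str:
    """Hypothetical tool; a real one would call an external API."""
    return f"Sunny in {city}"

def add_numbers(a: float, b: float) -> float:
    """Hypothetical tool that adds two numbers."""
    return a + b

# Registry mapping tool names (as the model sees them) to Python callables.
TOOLS = {"get_weather": get_weather, "add_numbers": add_numbers}

def dispatch(model_output: str) -> str:
    """Parse the model's JSON payload, run the named tool, and return an observation."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return f"ERROR: invalid JSON ({exc.msg})"
    if not isinstance(call, dict):
        return "ERROR: payload must be a JSON object"

    name = call.get("name")
    args = call.get("arguments", {})
    tool = TOOLS.get(name)
    if tool is None:
        return f"ERROR: unknown tool {name!r}"
    if not isinstance(args, dict):
        return "ERROR: 'arguments' must be a JSON object"

    try:
        result = tool(**args)
    except TypeError as exc:
        # Raised, for example, when a required argument is missing or misnamed.
        return f"ERROR: bad arguments ({exc})"
    return str(result)

print(dispatch('{"name": "add_numbers", "arguments": {"a": 2, "b": 3}}'))
print(dispatch('{"name": "delete_everything", "arguments": {}}'))
```

Errors are returned as strings rather than raised so that they can be fed back to the agent as observations.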

The Architecture and Framework of Agentic AI

The framework of an agentic AI system is highly modular. It relies on a continuous feedback loop that orchestrates the flow of data between the underlying model and the deployment environment.

A robust agentic architecture typically consists of four main modules:

- The Profiling/Persona Module: This defines the role, constraints, and operational boundaries of the agent. It is established via system prompts and metaprompts, ensuring the agent operates within safe parameters (e.g., "You are an automated DevOps agent. You may query the database but you may not execute DROP or DELETE commands.").

- The Memory Module: As previously noted, this handles both episodic memory (history of the current task) and semantic memory (factual knowledge retrieved via Retrieval-Augmented Generation, or RAG).

- The Planning Module: This is the cognitive routing layer. It uses prompting strategies such as Plan-and-Solve: the agent drafts a list of sequential actions and, upon completing action N, evaluates the outcome before proceeding to action N+1.

- The Action/Observation Module: This handles the exact I/O interfacing. It converts the model's semantic intent into deterministic code execution and subsequently translates the system's deterministic output (e.g., an HTTP 404 error, or a JSON response) back into natural language or an embedding for the model to "observe."
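The planning module's "complete action N, evaluate, then proceed to N+1" behavior can be sketched as follows. The `Step` and `PlanResult` types and the three example steps are hypothetical; in a real agent, the model would generate the plan and the actions would call actual tools.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

@dataclass
class Step:
    description: str
    run: Callable[[], str]            # performs the action and returns an observation
    succeeded: Callable[[str], bool]  # evaluates that observation

@dataclass
class PlanResult:
    completed: List[str] = field(default_factory=list)
    failed_at: Optional[str] = None

def execute_plan(steps: List[Step]) -> PlanResult:
    """Run steps in order, checking each outcome before moving to the next step."""
    result = PlanResult()
    for step in steps:
        observation = step.run()
        if not step.succeeded(observation):
            result.failed_at = step.description
            break  # a fuller planner would ask the model to re-plan here
        result.completed.append(step.description)
    return result

# Stubbed actions: the second step reports a failure, so the third never runs.
plan = [
    Step("clone repository", lambda: "ok", lambda obs: obs == "ok"),
    Step("run unit tests", lambda: "2 failed", lambda obs: "failed" not in obs),
    Step("open pull request", lambda: "ok", lambda obs: obs == "ok"),
]

outcome = execute_plan(plan)
print(outcome.completed, outcome.failed_at)
```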

Real-World Applications and Use Cases

Agentic AI is rapidly moving from theoretical frameworks into production-grade enterprise applications. The integration of agents is reshaping how software is developed, monitored, and secured.

Autonomous Software Engineering

Open-source agents such as AutoGPT and BabyAGI, and specialized products such as Devin, aim to contribute directly to software repositories. Agents of this kind can accept a GitHub issue, clone the repository, read the source code, write a patch, run local unit tests, and submit a pull request. They use the command line and integrated development environments (IDEs) much as a human developer would.

Cybersecurity and Identity Security

In cybersecurity, a threat often has to be isolated faster than a human operator can react. Agentic AI is deployed to monitor network traffic and identity access logs continuously. If anomalous behavior is detected (e.g., unauthorized lateral movement within a cloud environment), the agentic system can reason about the threat, cross-reference the behavior with known CVE databases, and autonomously execute a script to revoke user privileges or isolate the compromised server.

Automated Data Analysis and Business Intelligence

Traditional data analysis requires human analysts to write SQL queries, export data into Python, and use libraries like Pandas or Matplotlib to generate insights. Data-focused AI agents are granted access to database schemas and Python execution environments. When a stakeholder asks, "Why did user retention drop in Q3?", the agent writes the SQL, queries the data, identifies anomalies, generates a visual graph, and writes an executive summary in a single autonomous workflow.

Implementing a Basic Agentic AI System

To understand the mechanics of agentic AI, software engineers must look at the code layer. Below is a conceptual Python implementation of an agent loop utilizing the ReAct (Reason + Act) pattern. This demonstrates how an agent interacts with a theoretical tool (a calculator) to achieve a goal.
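What follows is a minimal sketch of such a loop rather than a production implementation. To keep it self-contained and runnable, the LLM is replaced by `scripted_model`, a hypothetical stand-in that returns hard-coded decisions; in a real agent that function would call a model API and parse its response. The calculator tool is also an illustrative assumption.

```python
import ast
import operator

_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def calculator(expression: str) -> str:
    """Evaluate +, -, *, / on numeric literals without using eval()."""
    def _eval(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        raise ValueError("unsupported expression")
    try:
        return str(_eval(ast.parse(expression, mode="eval").body))
    except (ValueError, SyntaxError, ZeroDivisionError) as exc:
        return f"error: {exc}"

TOOLS = {"calculator": calculator}
PREFIX = "Observation: "

def scripted_model(history):
    """Hypothetical stand-in for an LLM: picks the next step from the transcript."""
    observations = [line for line in history if line.startswith(PREFIX)]
    if len(observations) == 0:
        return {"thought": "Multiply first.", "action": "calculator", "input": "17 * 24"}
    if len(observations) == 1:
        product = observations[0][len(PREFIX):]
        return {"thought": "Now add 10.", "action": "calculator", "input": f"{product} + 10"}
    answer = observations[-1][len(PREFIX):]
    return {"thought": "I have the result.", "action": "finish", "input": answer}

def run_agent(goal, model, max_steps=5):
    """ReAct-style loop: reason, act, observe, repeat until done or out of steps."""
    history = [f"Goal: {goal}"]
    for _ in range(max_steps):
        step = model(history)                          # Reason
        history.append(f"Thought: {step['thought']}")
        if step["action"] == "finish":
            return step["input"], history
        tool = TOOLS.get(step["action"])               # Act
        if tool is None:
            observation = f"error: unknown tool {step['action']}"
        else:
            observation = tool(step["input"])
        history.append(f"Action: {step['action']}[{step['input']}]")
        history.append(PREFIX + observation)           # Observe
    return None, history  # step limit reached without finishing

answer, transcript = run_agent("What is 17 * 24 + 10?", scripted_model)
print(answer)
```

The `max_steps` cap is a deliberate design choice: without it, a model that never emits `finish` would keep the loop running indefinitely.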

This structural loop (while goal is not met, determine action, execute action, append observation to state) is the foundational DNA of all agentic systems.

Benefits and Advantages of Agentic AI

The deployment of agentic AI frameworks introduces significant advantages for technical scaling and enterprise operations.

- Asynchronous Problem Solving: Unlike generative models that require a human to sit and wait for a response, agentic systems can be deployed as asynchronous background workers. An engineer can assign a complex refactoring task to an agent and review the pull request hours later.

- Dynamic Resilience: Traditional deterministic automation (like standard CI/CD pipelines or cron jobs) breaks when an API response format changes or a minor unexpected variable is introduced. An agentic system can often recover from such changes: if an API returns an error, the reasoning engine reads the error, modifies its payload, and retries dynamically.

- Scalability of Intelligence: Agentic systems enable the parallelization of cognitive labor. Organizations can spawn hundreds of distinct agents simultaneously, each handling individual micro-tasks like code reviews, database optimization, or log monitoring, effectively acting as a workforce of junior developers that scales with available compute and budget.

Challenges, Risks, and Disadvantages

Despite the robust capabilities, integrating agentic AI into production systems carries unique engineering challenges and security vulnerabilities.

Infinite Action Loops

Because agents operate in iterative loops, a failure in the reasoning logic or an unhandled edge case in an API response can leave the agent stuck in an infinite loop. It may repeatedly attempt the same failed action, consuming large amounts of compute and API credits. Strict execution boundaries, maximum step limits, and budget constraints are essential.
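One way to enforce the step, budget, and repetition limits described above is a small guard object that the agent loop consults before every action. The class name, the limits, and the per-call cost figure below are all illustrative assumptions.

```python
class BudgetExceeded(Exception):
    """Raised when the agent hits one of its execution limits."""

class ExecutionGuard:
    def __init__(self, max_steps=10, max_cost=1.0, max_repeats=3):
        self.max_steps = max_steps
        self.max_cost = max_cost
        self.max_repeats = max_repeats
        self.steps = 0
        self.spent = 0.0
        self.last_action = None
        self.repeats = 0

    def check(self, action: str, cost: float) -> None:
        """Call before executing each action; raises BudgetExceeded when a limit is hit."""
        self.steps += 1
        self.spent += cost
        # Count how many times in a row the identical action has been requested.
        self.repeats = self.repeats + 1 if action == self.last_action else 1
        self.last_action = action

        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit of {self.max_steps} exceeded")
        if self.spent > self.max_cost:
            raise BudgetExceeded(f"cost budget of {self.max_cost} exceeded")
        if self.repeats > self.max_repeats:
            raise BudgetExceeded(f"same action repeated {self.repeats} times")

# Simulate an agent stuck retrying one failing call.
guard = ExecutionGuard(max_steps=10, max_cost=1.0, max_repeats=3)
executed = 0
stop_reason = None
try:
    while True:
        guard.check(action="GET /orders?id=42", cost=0.01)
        executed += 1  # the (failing) tool call would happen here
except BudgetExceeded as exc:
    stop_reason = str(exc)

print(executed, stop_reason)
```

Here the repetition check fires first; with varied actions, the step or cost limit would end the run instead.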

Alignment and Unintended Consequences

When an AI is given agency, it may find novel, destructive ways to achieve a goal if the reward function or system prompt is poorly defined. For example, if an agent is instructed to "free up disk space on the server," and lacks strict permission boundaries, it might reason that deleting production database logs is the most efficient method to achieve its objective.

Security and Prompt Injection

Agentic AI drastically increases the attack surface of a system. If an agent reads data from untrusted sources (e.g., summarizing user-submitted emails or processing external web pages), it is vulnerable to indirect prompt injection. A malicious payload embedded in a webpage can hijack the agent's instructions, forcing it to use its authorized tools (like a SQL database connector or an email client) to exfiltrate sensitive data or execute malicious commands.

Integrating Agentic AI into Software Systems

For engineering teams looking to implement agentic AI, the integration process should be phased and strictly controlled.

- Define the Domain Boundaries: Do not build a general-purpose agent. Scope the agent to a highly specific domain (e.g., an agent strictly for database index optimization).

- Select the Right Framework: Avoid building the orchestration layer entirely from scratch unless necessary. Utilize established frameworks like LangChain, LlamaIndex, or Microsoft's AutoGen. These libraries handle the complexities of memory formatting, tool binding, and LLM API rate limiting.

- Implement the Principle of Least Privilege: When supplying tools to an agent, ensure the execution environment has minimal permissions. If an agent executes Python code, run it in a sandboxed Docker container or an isolated WebAssembly (Wasm) runtime. Ensure API keys provided to the agent have read-only permissions unless write access is strictly required.

- Establish Human-in-the-Loop (HITL) Protocols: For high-stakes actions (e.g., executing a Git merge, deleting resources, or sending emails to clients), the agentic flow should pause and require a human administrator to approve the execution payload via a webhook or UI prompt.
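The human-in-the-loop step from the list above might look like the following sketch. The action names and the `approve` callback are hypothetical; in production the callback would block on a webhook response or a UI prompt rather than return immediately.

```python
from typing import Any, Callable, Dict

# Hypothetical set of actions that must never run without human sign-off.
HIGH_STAKES_ACTIONS = {"git_merge", "delete_resource", "send_client_email"}

def execute_with_approval(
    action: str,
    payload: Dict[str, Any],
    run: Callable[[Dict[str, Any]], Any],
    approve: Callable[[str, Dict[str, Any]], bool],
) -> Dict[str, Any]:
    """Run low-risk actions directly; ask `approve` before high-stakes ones."""
    if action in HIGH_STAKES_ACTIONS and not approve(action, payload):
        return {"status": "rejected", "action": action}
    return {"status": "done", "action": action, "result": run(payload)}

# Stub approver that records each request and denies it.
audit_log = []

def deny_all(action, payload):
    audit_log.append((action, payload))
    return False

read_result = execute_with_approval(
    "read_logs", {"service": "api"}, lambda p: f"read logs for {p['service']}", deny_all
)
merge_result = execute_with_approval(
    "git_merge", {"branch": "fix-123"}, lambda p: "merged", deny_all
)
print(read_result["status"], merge_result["status"])
```

Only the high-stakes action reaches the approver, so routine read operations are not slowed down by review.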

Frequently Asked Questions (FAQ)

Is Agentic AI the same as Artificial General Intelligence (AGI)?

No. Agentic AI is a structural architecture that gives current, narrow AI models the ability to act autonomously within defined constraints. AGI refers to a hypothetical system capable of understanding, learning, and applying intelligence across any cognitive task at or above a human level. Agentic AI is a step toward greater autonomy, but it is not AGI.

How does Reinforcement Learning apply to Agentic AI?

Reinforcement Learning from Human Feedback (RLHF) is primarily used to fine-tune the underlying foundation models to be helpful and safe. However, in advanced agentic systems, Reinforcement Learning (RL) can also be used to optimize the agent's planning capabilities. By assigning reward signals to successful task completions, the model learns over time which sequences of tool calls are most likely to succeed.

How do agentic systems handle hallucinations during tool execution?

Hallucinations (generating plausible but incorrect information) are a critical risk in agentic systems. To mitigate this, developers enforce strict JSON schema parsing and use self-reflection prompts. If the agent hallucinates a tool name or a parameter, the execution environment instantly returns a strict validation error to the agent's context window. The agent is prompted to evaluate its error and generate a corrected payload based on the exact schemas provided in the system instructions.
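The validate-then-reflect cycle can be sketched as below. The tool schemas, the hand-rolled validator, and `scripted_model` (a hypothetical stand-in for the LLM that first hallucinates a tool name and then corrects itself) are all assumptions for illustration; a real system would more likely use a JSON Schema validation library.

```python
# Hypothetical tool schemas: parameter name -> required Python type.
TOOL_SCHEMAS = {
    "get_order": {"order_id": int},
    "cancel_order": {"order_id": int, "reason": str},
}

def validate_call(call):
    """Return None if the call matches a known schema, else an error message."""
    name = call.get("name")
    if name not in TOOL_SCHEMAS:
        return f"unknown tool {name!r}; available tools: {sorted(TOOL_SCHEMAS)}"
    schema = TOOL_SCHEMAS[name]
    args = call.get("arguments", {})
    if not isinstance(args, dict):
        return "'arguments' must be an object"
    missing = sorted(set(schema) - set(args))
    if missing:
        return f"missing parameters: {missing}"
    extra = sorted(set(args) - set(schema))
    if extra:
        return f"unexpected parameters: {extra}"
    for key, expected in schema.items():
        if not isinstance(args[key], expected):
            return f"parameter {key!r} must be of type {expected.__name__}"
    return None

def call_with_reflection(model, max_attempts=3):
    """Ask the model for a tool call, feeding validation errors back until it is valid."""
    feedback = None
    for attempt in range(1, max_attempts + 1):
        call = model(feedback)
        error = validate_call(call)
        if error is None:
            return call, attempt
        feedback = f"Validation error: {error}. Produce a corrected payload."
    raise RuntimeError("no valid tool call produced")

def scripted_model(feedback):
    """Stand-in for an LLM: hallucinates a tool name first, then corrects it."""
    if feedback is None:
        return {"name": "fetch_order", "arguments": {"id": 7}}
    return {"name": "get_order", "arguments": {"order_id": 7}}

valid_call, attempts = call_with_reflection(scripted_model)
print(valid_call, attempts)
```

Capping the number of attempts keeps a model that never corrects itself from retrying forever.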