What Is an Agentic AI Framework? A Complete Guide

What is an agentic AI framework? An agentic AI framework is a software architecture that enables artificial intelligence systems to operate autonomously. It provides the necessary infrastructure, including memory management, reasoning, and tool integration, so that AI agents can plan, execute multi-step tasks, and pursue predefined goals without continuous human intervention.

Introduction to the Agentic AI Paradigm

The artificial intelligence landscape has undergone a profound transformation, evolving from passive, prompt-driven models to autonomous, goal-oriented systems. While traditional generative AI models excel at producing text, code, or images based on direct user input, they lack the inherent ability to act independently, evaluate their own outputs, or iteratively solve complex problems over time. This limitation has catalyzed the development of agentic AI.

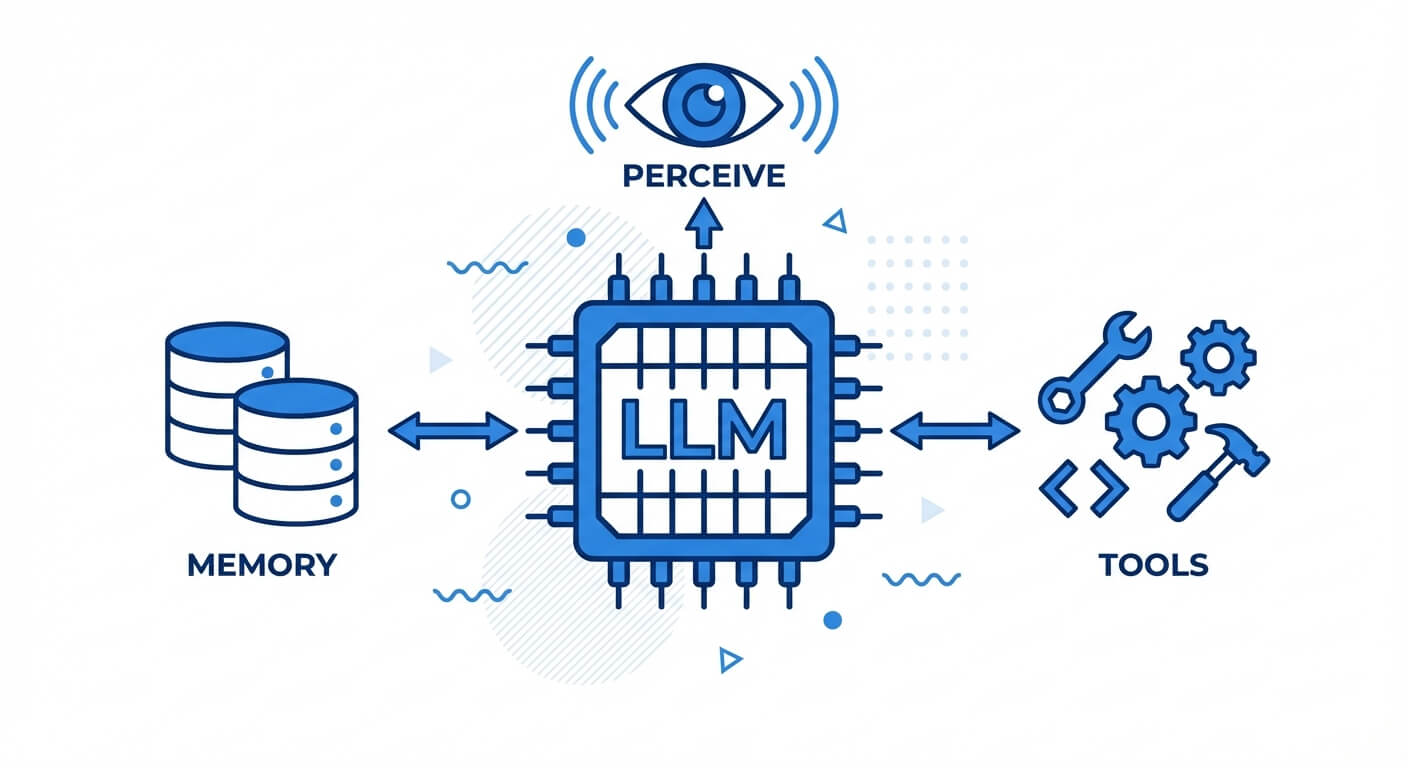

Agentic AI represents a paradigm shift where AI systems are designed not just to answer questions, but to take agency. By leveraging underlying large language models (LLMs) as cognitive engines, these systems can perceive their environment, formulate execution plans, utilize external tools, and adapt to new information autonomously. Understanding this transition is critical for modern software engineers, as the integration of autonomous agents is rapidly becoming the standard for complex enterprise architectures, ranging from autonomous software development to intelligent financial analysis.

The Evolution from Generative AI to Autonomous Agents

Historically, LLMs operated as stateless functions: mapping an input sequence to a probable output sequence. However, real-world engineering problems are rarely solved in a single probabilistic leap. They require a recursive process of trial, error, environment feedback, and correction. The agentic paradigm wraps the stateless LLM in a stateful control loop. Instead of merely predicting the next token in a conversational thread, the system predicts the next logical action required to satisfy a higher-level objective.

What is an Agentic AI Framework?

An agentic AI framework is the underlying software infrastructure and orchestration layer that facilitates the creation, deployment, and management of autonomous AI agents. Building an autonomous agent from scratch requires solving several non-trivial engineering challenges: maintaining conversational context, parsing model outputs into executable code, handling API authentication, managing rate limits, and orchestrating communication between multiple independent agents.

Frameworks abstract these complexities away. They provide standardized abstractions for connecting LLMs to external environments. A robust framework dictates how an agent processes inputs, how it retrieves historical data from vector databases, how it decides which external tool to invoke, and how it handles execution failures. Without a unified framework, developers would be forced to write custom state machines, memory handlers, and parsing logic for every new agentic application.

The Orchestration Layer

At the heart of the framework is the orchestration layer. This layer operates as the central nervous system of the agent. It manages the execution loop, typically implemented as a "while" loop that continues until a specific termination condition is met (e.g., the goal is achieved, or a maximum number of steps is reached). The orchestration layer ensures that the agent's internal state is accurately updated after every tool execution and that the LLM is continually re-prompted with the most up-to-date environmental context.
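The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any particular framework's implementation: `run_agent`, `toy_decide`, and the `tools` mapping are hypothetical names, and `decide` stands in for the call that re-prompts the LLM.

```python
def run_agent(goal, decide, tools, max_steps=5):
    """Run a control loop until the policy finishes or the step budget is spent."""
    state = {"goal": goal, "history": [], "done": False, "answer": None}
    steps = 0
    # Two termination conditions: goal reached, or maximum number of steps hit.
    while not state["done"] and steps < max_steps:
        action = decide(state)  # hypothetical stand-in for re-prompting the LLM
        if action["type"] == "finish":
            state["done"] = True
            state["answer"] = action["answer"]
        else:
            # Execute the chosen tool and record the result in the agent state.
            result = tools[action["tool"]](**action["args"])
            state["history"].append((action["tool"], result))
        steps += 1
    return state


def toy_decide(state):
    """Scripted policy: call the tool once, then finish with its result."""
    if not state["history"]:
        return {"type": "tool", "tool": "add", "args": {"a": 2, "b": 3}}
    return {"type": "finish", "answer": state["history"][-1][1]}


final = run_agent("add two numbers", toy_decide, {"add": lambda a, b: a + b})
```

Here `final["answer"]` holds the tool result once the scripted policy decides to finish; a policy that never finishes is cut off by `max_steps`.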

Core Components of an AI Agent Architecture

Understanding the internal mechanics of an AI agent architecture is fundamental to designing robust autonomous systems. An agent is not a monolithic entity; rather, it is a composite system of interacting modules that handle perception, cognition, memory, and actuation. When designing these systems, software engineers must carefully select the right technologies for each component to ensure the agent operates efficiently and predictably within its designated domain.

The architecture fundamentally relies on separating the "brain" (the reasoning engine) from the "body" (the tools and memory). This modularity allows developers to swap out the underlying LLM or upgrade external tools without rewriting the entire agentic logic. Let us examine the four foundational pillars that constitute a standard AI agent architecture.

1. The Cognitive Engine (Reasoning and Planning)

The cognitive engine is typically powered by a state-of-the-art LLM. However, raw generation capabilities are insufficient for agency. The engine must employ advanced prompting techniques to simulate reasoning. The most prevalent technique is ReAct (Reasoning and Acting). In a ReAct framework, the agent alternates between generating a reasoning trace (a "thought") and executing an action.

This process forces the LLM to explicitly state its logic before interacting with the environment. Other advanced planning architectures include:

- Chain of Thought (CoT): Breaking down complex problems into intermediate logical steps.

- Tree of Thoughts (ToT): Exploring multiple reasoning paths simultaneously and using a heuristic function to evaluate the most promising node before proceeding.

- Reflection: The agent analyzes its previous actions and outcomes, generating self-critiques to improve future execution.
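To make the thought/action alternation of ReAct concrete, the sketch below parses one model turn and runs the requested tool. The `Thought:` / `Action: tool[input]` text format, the function names, and the `word_length` tool are assumptions chosen for illustration; real systems may use a different output format, such as structured JSON.

```python
import re

# Assumed text format for a single ReAct turn:
#   Thought: <free-form reasoning>
#   Action: <tool_name>[<tool input>]
THOUGHT_RE = re.compile(r"^Thought:\s*(.+)$", re.MULTILINE)
ACTION_RE = re.compile(r"^Action:\s*(\w+)\[(.*)\]\s*$", re.MULTILINE)


def parse_react_step(text):
    """Split one model turn into its reasoning trace and requested action."""
    thought = THOUGHT_RE.search(text)
    action = ACTION_RE.search(text)
    return {
        "thought": thought.group(1).strip() if thought else None,
        "tool": action.group(1) if action else None,
        "tool_input": action.group(2) if action else None,
    }


def react_step(model_output, tools):
    """Execute the parsed action and format the observation for the next prompt."""
    step = parse_react_step(model_output)
    if step["tool"] is None:
        return step, None  # no action requested in this turn
    observation = tools[step["tool"]](step["tool_input"])
    return step, f"Observation: {observation}"


sample = "Thought: I need the length of the word.\nAction: word_length[agent]"
step, observation = react_step(sample, {"word_length": len})
```

The observation string would be appended to the prompt before the next reasoning turn, which is what closes the loop between thinking and acting.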

2. Memory Systems

For an agent to operate autonomously over extended periods, it requires a sophisticated memory hierarchy, much like a standard operating system.

- Short-Term Memory: This is the immediate context window of the LLM. It contains the current system prompt, recent conversational history, and immediate tool outputs. Because LLMs have strict token limits (e.g., 128k or 256k tokens), short-term memory must be heavily optimized and pruned.

- Long-Term Memory: To recall information across multiple sessions, agents utilize external stores, predominantly vector databases. Information is embedded into dense numerical vectors. When the agent needs historical context, it performs a similarity search. For example, the cosine similarity between the current query vector A and a stored document vector B is calculated as: Cosine Similarity(A, B) = (A · B) / (‖A‖ × ‖B‖), where ‖A‖ is the Euclidean norm (length) of A.

- Episodic Memory: A specific type of long-term memory where the agent records its past actions, the states it encountered, and the rewards or failures received, allowing it to avoid repeating previous mistakes.
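The cosine similarity lookup used for long-term memory can be sketched with plain Python lists. The three-dimensional vectors and the `memory` contents below are made-up illustrations; a real system would obtain embeddings from an embedding model and delegate the search to a vector database.

```python
import math


def cosine_similarity(a, b):
    """(A . B) / (||A|| * ||B||), returning 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query, store, k=1):
    """Return the k stored texts whose vectors are most similar to the query."""
    scored = [(cosine_similarity(query, vec), text) for text, vec in store]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]


# Hypothetical memory entries with hand-written "embeddings".
memory = [
    ("deploy failed on missing env var", [1.0, 0.0, 0.0]),
    ("user prefers concise answers", [0.0, 1.0, 0.0]),
]

closest = top_k([0.9, 0.1, 0.0], memory)
```

This brute-force scan is linear in the number of stored vectors; vector databases typically use approximate nearest-neighbor indexes to avoid that cost.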

3. Tool Use and Actuation

An agent confined to text generation has limited utility. Actuation is the process of the agent interacting with external systems via tools. A tool is essentially a wrapped API call, a Python execution environment, a web browser, or a database query interface. The AI agent architecture defines tools with strict schemas (often using JSON Schema or OpenAPI specifications).

When the cognitive engine decides to use a tool, it outputs a structured payload (e.g., a JSON object containing the function name and arguments). The framework intercepts this payload, executes the actual code (actuation), and feeds the resulting output or error trace back into the agent's short-term memory for the next reasoning cycle.
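The intercept-execute-feed-back cycle might look like the following sketch. The `get_weather` tool, its schema, and the payload shape (`name` plus `arguments`) are assumptions for illustration, and the required-key check is a deliberately small substitute for full JSON Schema validation.

```python
import json

# Hypothetical tool registry: each tool has a JSON-Schema-style description
# and a Python callable. The weather function is a stub with no network call.
TOOLS = {
    "get_weather": {
        "schema": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
        "fn": lambda city: f"Sunny in {city}",
    }
}


def dispatch(payload_text):
    """Parse a model-emitted payload, run the tool, and return text for the context."""
    try:
        payload = json.loads(payload_text)
    except json.JSONDecodeError as exc:
        return f"ERROR: malformed JSON: {exc.msg}"
    if not isinstance(payload, dict):
        return "ERROR: payload must be a JSON object"
    tool = TOOLS.get(payload.get("name"))
    if tool is None:
        return f"ERROR: unknown tool {payload.get('name')!r}"
    args = payload.get("arguments", {})
    missing = [key for key in tool["schema"]["required"] if key not in args]
    if missing:
        return f"ERROR: missing arguments {missing}"
    try:
        return str(tool["fn"](**args))
    except Exception as exc:  # surface the failure to the model instead of crashing
        return f"ERROR: {type(exc).__name__}: {exc}"


ok = dispatch('{"name": "get_weather", "arguments": {"city": "Pune"}}')
```

Returning errors as text rather than raising lets the next reasoning cycle see what went wrong and try a corrected call.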

4. Environment and Perception

The environment is the domain in which the agent operates, whether it is a Linux terminal, a simulated video game, or the global financial markets. Perception is the mechanism by which the agent ingests environmental state changes. In complex architectures, perception involves parsing unstructured data (like raw HTML from a web scraper) into structured formats that the cognitive engine can easily digest without exhausting its context window.
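As one possible perception step, the sketch below reduces raw HTML to visible text and caps its length before it reaches the model. It uses only the standard library's `html.parser`; the `perceive` function, the `max_chars` budget, and the sample page are hypothetical.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text, ignoring the contents of <script> and <style>."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.parts.append(data.strip())


def perceive(html, max_chars=200):
    """Turn raw HTML into a compact observation that fits a character budget."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.parts)
    return {"text": text[:max_chars], "truncated": len(text) > max_chars}


page = (
    "<html><head><style>p{color:red}</style></head>"
    "<body><h1>Status</h1><script>var x=1;</script><p>CPU at 91%</p></body></html>"
)
observation = perceive(page)
```

The `truncated` flag tells the agent that more content exists, so it can decide whether to request another slice instead of silently missing data.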

Popular Agentic AI Frameworks in the Ecosystem

The rapid proliferation of agentic use cases has led to the development of several open-source frameworks. Each framework tackles the complexities of agent orchestration differently. Some focus on building single, highly capable agents via complex state graphs, while others focus on multi-agent collaboration where specialized agents communicate to solve distributed problems.

Choosing the right framework depends heavily on the specific requirements of the application, such as the need for cyclical execution, human-in-the-loop interventions, or parallel processing.

LangGraph (by LangChain)

LangGraph is an extension of the popular LangChain library, designed explicitly for building stateful, multi-actor applications with LLMs. Unlike standard LangChain chains, which are typically directed acyclic graphs (DAGs), LangGraph allows developers to define execution loops. It models the agent as a state machine. Developers define "nodes" (Python functions or LLM calls) and "edges" (including conditional edges whose logic dictates routing). The framework maintains a shared "state" object that is updated as execution passes from node to node. Because that state can be checkpointed, a run can be paused, inspected, and resumed, which makes the approach well suited to complex, cyclical tasks.
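The node/edge/state idea can be illustrated without the library itself. The sketch below is plain Python and deliberately does not use LangGraph's API; `NODES`, `EDGES`, `run_graph`, and the draft/review workflow are hypothetical names chosen to show a cycle with a conditional edge.

```python
def draft(state):
    """Node: produce a new draft and count the attempt."""
    state["attempts"] += 1
    state["draft"] = f"draft v{state['attempts']}"
    return state


def review(state):
    """Node: approve only from the second attempt onward (toy rule)."""
    state["approved"] = state["attempts"] >= 2
    return state


NODES = {"draft": draft, "review": review}

# Edges map the current node to the next one; "review" routes conditionally,
# which is what creates the loop back to "draft".
EDGES = {
    "draft": lambda state: "review",
    "review": lambda state: "END" if state["approved"] else "draft",
}


def run_graph(entry, state, max_hops=10):
    """Walk the graph from the entry node until END or the hop limit."""
    current = entry
    hops = 0
    while current != "END" and hops < max_hops:
        state = NODES[current](state)
        current = EDGES[current](state)
        hops += 1
    return state


result = run_graph("draft", {"attempts": 0, "draft": None, "approved": False})
```

The run visits draft, review, draft, review and then stops, so the state ends with two attempts and an approved second draft.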

Microsoft AutoGen

AutoGen is a framework developed by Microsoft that specializes in multi-agent conversations. Instead of building one monolithic agent, developers create multiple specialized agents (e.g., a "Coder Agent", a "Reviewer Agent", and a "Project Manager Agent"). AutoGen orchestrates how these agents pass messages back and forth. It natively supports human-in-the-loop interactions, allowing a human developer to act as one of the nodes in the conversation, approving code or providing guidance when the autonomous agents reach an impasse.
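The conversation pattern described here can be sketched in plain Python. This is not AutoGen's API: `coder`, `reviewer`, and `converse` are hypothetical stand-ins, the agent functions return canned strings instead of calling an LLM, and `human_approves` is a callback representing the human participant.

```python
def coder(message):
    """Stand-in for an LLM-backed agent that writes code."""
    return "def add(a, b): return a + b"


def reviewer(message):
    """Stand-in for an LLM-backed agent that reviews code."""
    return "APPROVE" if "return" in message else "REVISE: missing return"


def converse(task, human_approves, max_rounds=3):
    """Pass messages between agents; a human gates the final result."""
    transcript = [("user", task)]
    for _ in range(max_rounds):
        code = coder(transcript[-1][1])
        transcript.append(("coder", code))
        verdict = reviewer(code)
        transcript.append(("reviewer", verdict))
        if verdict == "APPROVE":
            # Human-in-the-loop step: a person accepts or rejects the output.
            decision = "accepted" if human_approves(code) else "rejected"
            transcript.append(("human", decision))
            return transcript
    transcript.append(("system", "no agreement reached"))
    return transcript


log = converse("write an add function", human_approves=lambda code: True)
```

Keeping the full transcript as a list of (speaker, message) pairs mirrors how multi-agent systems typically share one conversation history among participants.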

CrewAI

CrewAI was originally built on top of LangChain (more recent releases are described by the project as independent of it) and provides a higher-level abstraction focused on role-playing and team dynamics. In CrewAI, agents are assigned specific "Roles," "Goals," and "Backstories." These agents are then organized into "Crews" and given "Tasks." The framework manages the sequential or hierarchical execution of these tasks, passing the output of one agent along as the input of another. It is often used for applications requiring collaborative content creation, complex research, and automated data analysis pipelines.

Agentic AI vs Generative AI: Key Technical Differences

While generative AI models form the foundational layer of agentic AI, the two concepts represent vastly different approaches to system architecture and execution. The distinction lies primarily in autonomy, statefulness, and environmental interaction.

Below is a technical comparison outlining the exact differences between these two paradigms:

| Feature / Dimension | Generative AI | Agentic AI |
|---|---|---|
| Core Function | Passive generation of text, code, or media based on explicit user prompts. | Goal-oriented execution, multi-step reasoning, and autonomous decision-making. |
| Execution Flow | Single-turn or multi-turn conversational (Stateless mapping of input to output). | Continuous control loop (Stateful, recursive execution until objective is met). |
| Environmental Interaction | None. Completely isolated from external systems unless manually provided context. | High. Capable of invoking APIs, reading/writing files, and interacting with databases. |
| Memory Architecture | Limited to the immediate context window of the user's active session. | Utilizes hierarchical memory (Short-term context + Long-term Vector DB retrieval). |
| Error Handling | Relies on human users to identify hallucinations and provide corrective prompts. | Self-correcting. Can parse error logs, reflect on failures, and attempt alternate approaches. |

Building an Agentic AI System: A Technical Walkthrough

To practically illustrate the concept of an agentic AI framework, we can build a rudimentary agent using Python. The following implementation demonstrates a single-agent architecture capable of utilizing a custom tool to answer questions that the underlying LLM would not natively know.

In this example, we will define a simple tool that fetches current server metrics, bind it to an LLM, and execute an agent loop.
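A minimal sketch of such an agent is shown here. It is an illustration under stated assumptions rather than a definitive implementation: `get_server_metrics` returns canned numbers instead of querying a real server, `fake_llm` is a scripted stand-in for a real chat model, and the JSON message shapes (`tool`, `arguments`, `final_answer`) are invented for this example.

```python
import json


def get_server_metrics(server_id):
    """Hypothetical tool: returns canned metrics instead of querying a server."""
    return {"server_id": server_id, "cpu_percent": 91, "memory_percent": 64}


TOOLS = {"get_server_metrics": get_server_metrics}


def fake_llm(messages):
    """Scripted stand-in for a chat model; swap in a real LLM client here."""
    last = messages[-1]
    if last["role"] == "tool":
        metrics = json.loads(last["content"])
        answer = f"CPU on {metrics['server_id']} is at {metrics['cpu_percent']}%."
        return json.dumps({"final_answer": answer})
    # No tool result yet, so request one.
    return json.dumps(
        {"tool": "get_server_metrics", "arguments": {"server_id": "web-01"}}
    )


def run_agent(question, llm=fake_llm, max_steps=5):
    """Agent loop: ask the model, run any requested tool, feed the result back."""
    messages = [{"role": "user", "content": question}]
    steps = 0
    while steps < max_steps:
        reply = json.loads(llm(messages))
        messages.append({"role": "assistant", "content": json.dumps(reply)})
        if "final_answer" in reply:
            return reply["final_answer"]
        result = TOOLS[reply["tool"]](**reply["arguments"])
        messages.append({"role": "tool", "content": json.dumps(result)})
        steps += 1
    return "Stopped: step limit reached."


answer = run_agent("What is the CPU load on web-01?")
```

With the scripted model, the loop runs one tool call and then returns a final answer built from the tool output, information the model could not have known on its own.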

In a production environment, an agentic framework like LangGraph handles this exact while loop, but with added resilience—managing API rate limits, parsing malformed JSON payloads from the LLM, and handling maximum recursion depths to prevent infinite loops.

Real-World Agentic AI Applications

The deployment of agentic AI frameworks is rapidly moving out of experimental environments and into enterprise production. Because these systems can autonomously execute workflows, they are fundamentally altering software engineering, data analytics, and security operations.

Autonomous Software Engineering

Projects like Devin and open-source alternatives like SWE-agent utilize agentic frameworks to operate as autonomous software engineers. Given a GitHub issue, an agentic system will clone the repository, read the existing codebase using standard terminal tools, reproduce the bug, write a patch, run unit tests, and submit a pull request. The framework orchestrates a seamless loop between reading code, executing bash commands, and analyzing the compilation errors to iteratively fix the code.

Algorithmic Trading and Financial Analysis

In the financial sector, multi-agent frameworks are deployed for continuous market analysis. One agent serves as a data scraper, pulling real-time SEC filings and news articles. A secondary agent, acting as a quantitative analyst, digests this data and writes Python scripts to run statistical models. A final "Decision Agent" reviews the statistical outputs against pre-defined risk parameters to execute trades via a brokerage API.

Cybersecurity Incident Response

Agentic AI frameworks excel in Security Operations Centers (SOC). When an intrusion detection system flags an anomaly, an autonomous agent can immediately ingest the alert. It can then autonomously query the SIEM (Security Information and Event Management) system, fetch firewall logs, check the reputation of an IP address via external threat intelligence APIs, and generate an incident summary. If authorized, the agent can even update firewall rules to isolate the compromised endpoint, vastly reducing the Mean Time to Respond (MTTR).

Challenges and Future Scope of Agentic AI

Despite the rapid advancements, building reliable agentic AI frameworks poses significant technical hurdles. Ensuring deterministic behavior in non-deterministic systems is a core engineering challenge that must be addressed before mass enterprise adoption can occur.

Infinite Loops and Hallucinations

Because agents operate in autonomous loops, a hallucination by the LLM can result in catastrophic failure cascades. If the agent misinterprets the output of a tool, it may repeatedly call the same tool with identical flawed arguments, resulting in an infinite execution loop. Frameworks attempt to mitigate this by implementing hard limits on execution steps and injecting "timeout" or "reflection" prompts, forcing the agent to evaluate if it is stuck in a loop.
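One way to implement these safeguards is a small guard object consulted before every tool call. `LoopGuard`, its thresholds, and the three return values are hypothetical; they illustrate the combination of a hard step limit with detection of repeated identical calls.

```python
import json


class LoopGuard:
    """Track tool calls and signal when the agent appears to be stuck."""

    def __init__(self, max_steps=10, max_repeats=2):
        self.max_steps = max_steps
        self.max_repeats = max_repeats
        self.steps = 0
        self.seen = {}

    def check(self, tool, args):
        """Return 'ok', 'reflect', or 'stop' for the proposed tool call."""
        self.steps += 1
        if self.steps > self.max_steps:
            return "stop"  # hard limit on execution steps
        # Identical tool + arguments produce the same key regardless of key order.
        key = (tool, json.dumps(args, sort_keys=True))
        self.seen[key] = self.seen.get(key, 0) + 1
        if self.seen[key] > self.max_repeats:
            return "reflect"  # caller should inject a reflection prompt
        return "ok"


guard = LoopGuard(max_steps=4, max_repeats=2)
signals = [guard.check("search", {"q": "x"}) for _ in range(5)]
```

On "reflect", the orchestrator would add a prompt asking the model whether it is repeating itself; on "stop", it would end the run and report the failure.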

Context Window Limitations and Memory Bloat

As an agent performs actions, the framework must continually append tool outputs to the LLM's short-term memory. Large tool outputs (such as fetching a massive database schema) can quickly exhaust the LLM's token limit. Future developments in agentic AI heavily focus on "memory compression" techniques—using smaller, secondary LLMs to summarize environmental feedback before injecting it back into the primary agent's context window.
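The compression idea can be sketched as follows. Everything here is a simplifying assumption: `estimate_tokens` counts whitespace-separated words instead of using a real tokenizer, and `summarize` truncates text where a real system would call a smaller summarization model.

```python
def estimate_tokens(text):
    """Crude assumption: one token per whitespace-separated word."""
    return len(text.split())


def summarize(text, max_words=8):
    """Stand-in for a call to a smaller summarization model."""
    words = text.split()
    if len(words) <= max_words:
        return text
    return " ".join(words[:max_words]) + " [truncated]"


def compress_context(messages, budget):
    """Summarize messages, oldest first, until the total fits the budget.

    Best effort: if everything has been summarized and the total is still
    over budget, the compressed list is returned as-is.
    """
    compressed = list(messages)
    for i in range(len(compressed)):
        if sum(estimate_tokens(m) for m in compressed) <= budget:
            break
        compressed[i] = summarize(compressed[i])
    return compressed


history = ["word " * 50, "short note", "latest tool output here"]
trimmed = compress_context(history, budget=20)
```

Compressing the oldest entries first keeps the most recent tool output intact, which is usually what the next reasoning step depends on.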

FAQs

1. What is the difference between ReAct and standard LLM prompting? Standard prompting asks an LLM for a direct answer. ReAct (Reasoning and Acting) is a prompting technique in which the LLM first generates a step-by-step thought about what it needs to do and then explicitly outputs an action or tool call based on that thought.

2. Why are vector databases essential for AI agent architecture? Vector databases provide long-term semantic memory. Because LLMs have strict token limits for their context windows, they cannot hold massive amounts of historical data. Vector databases allow the agent to convert a query into an embedding and run a similarity search that retrieves only the most relevant context for a task.

3. How does an AI agent interact with external APIs? The AI agent is given a "tool definition," usually in JSON schema, detailing the API's required inputs. The LLM processes this schema and, when necessary, generates a JSON object matching the parameters. The agentic framework parses this output, executes the actual HTTP request, and returns the API's response back to the LLM as text.

4. What prevents an autonomous AI agent from executing malicious code? Production agentic frameworks rely on heavily sandboxed environments. When an agent requires execution capabilities (like Python scripting or bash commands), the framework executes the code inside ephemeral, isolated Docker containers or WebAssembly (Wasm) runtimes. Additionally, strict role-based access control (RBAC) is applied to the APIs the agent is permitted to call.
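The access-control half of that answer can be illustrated with a simple allowlist check performed before any tool runs. The role names, tool names, and `authorize` function are hypothetical, and this sketch does not replace sandboxing: it only decides whether a call is permitted, not where the code executes.

```python
# Hypothetical mapping from agent roles to the tools they may call.
ROLE_PERMISSIONS = {
    "analyst": {"read_logs", "query_siem"},
    "responder": {"read_logs", "query_siem", "update_firewall"},
}


class PermissionDenied(Exception):
    """Raised when an agent role requests a tool outside its allowlist."""


def authorize(role, tool_name):
    """Allow the call only if the tool is on the role's allowlist."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    if tool_name not in allowed:
        raise PermissionDenied(f"role {role!r} may not call {tool_name!r}")
    return True


can_patch = authorize("responder", "update_firewall")
```

Unknown roles get an empty allowlist, so the check fails closed rather than open.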