What Are Gen AI and Agentic AI? A Complete Guide

Generative AI (Gen AI) refers to deep learning models that create new content, such as text, images, or code, in response to user prompts by sampling from statistical patterns learned during training. Agentic AI, by contrast, refers to autonomous systems that use these generative models as reasoning engines to plan, execute multi-step actions, call external tools, and achieve specific goals without continuous human intervention.

The Evolution of Artificial Intelligence Architectures

The artificial intelligence landscape has undergone a profound architectural shift over the past decade. For years, the industry focused heavily on analytical and predictive AI—models trained to classify data, predict numerical outcomes, or detect anomalies. While mathematically sophisticated, these systems were inherently constrained to specific, narrow tasks. The subsequent breakthrough was Generative AI, which shifted the paradigm from prediction to creation, utilizing massive foundation models to synthesize novel outputs.

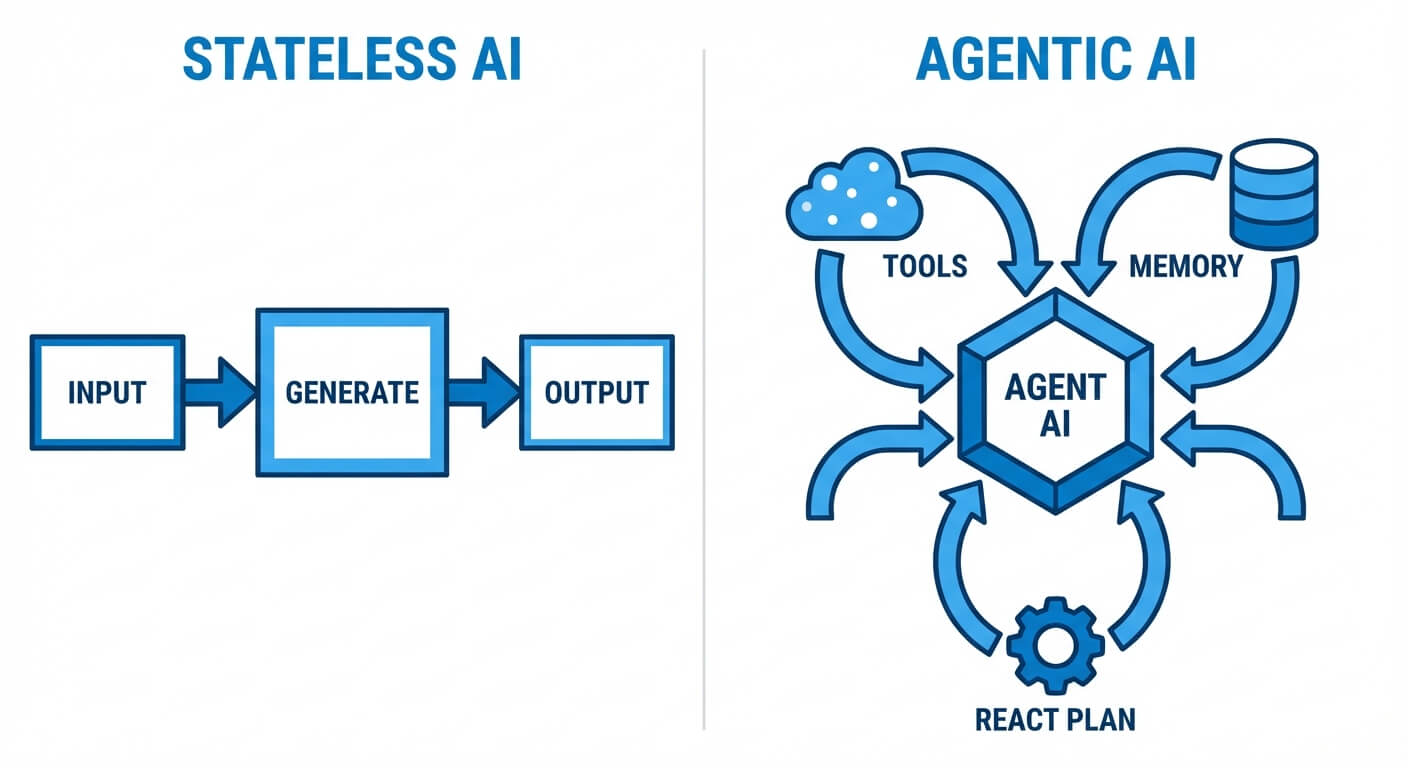

However, standard Generative AI architectures suffer from a critical limitation: they are fundamentally reactive and stateless. They require a human-in-the-loop to provide a prompt, evaluate the output, and iteratively refine the request. Agentic AI represents the next evolutionary leap. By wrapping foundation models in cognitive architectures that support memory, planning, and tool execution, engineers are transforming static text generators into dynamic, autonomous agents capable of interacting with external environments. Understanding the exact technical distinction between these two paradigms is crucial for modern software engineers designing intelligent distributed systems.


What is Generative AI?

Generative AI encompasses a class of machine learning models designed to generate new samples that resemble the training data. Unlike discriminative models, which learn the boundary between classes (modeling the conditional probability P(Y|X)), generative models learn the joint probability distribution P(X, Y), or just P(X), allowing them to sample from this distribution to create new instances.
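The relationship between the two model families follows from the product rule. As a short derivation using standard probability (written for discrete labels, which is an assumption made here for simplicity):

```latex
P(X, Y) = P(X \mid Y)\,P(Y), \qquad
P(Y \mid X) = \frac{P(X, Y)}{P(X)} = \frac{P(X \mid Y)\,P(Y)}{\sum_{y} P(X \mid y)\,P(y)}
```

A model of the joint distribution can therefore be used to classify as well as to sample, whereas a model that only learns P(Y|X) has no way to produce a new X.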

At the core of modern Gen AI—particularly Large Language Models (LLMs)—is the Transformer architecture. Introduced in 2017, the Transformer relies heavily on the self-attention mechanism, which allows the model to weigh the importance of different tokens in a sequence regardless of their positional distance.

The standard self-attention computation is represented as: Attention(Q, K, V) = softmax((Q · K^T) / √d_k) · V

Here, Q (Queries), K (Keys), and V (Values) are matrices derived from the input embeddings, and d_k is the dimension of the key vectors. This mechanism enables the model to capture complex contextual dependencies, making it exceptionally proficient at next-token prediction tasks. Other generative architectures include Diffusion Models (which progressively remove Gaussian noise from a signal) and Generative Adversarial Networks (GANs).
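The formula can be traced by hand on a tiny input. The following is a minimal sketch in pure Python, not how production frameworks implement it (they use batched tensor operations, multiple heads, and masking). The function names and the toy matrices are illustrative assumptions.

```python
import math

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention for lists of row vectors.

    Q is n x d_k, K is m x d_k, V is m x d_v. Returns an n x d_v list.
    """
    d_k = len(K[0])
    scale = math.sqrt(d_k)
    out = []
    for q in Q:
        # One score per key: (q . k) / sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in K]
        weights = softmax(scores)
        # Each output row is a weighted sum of the value rows
        out.append([sum(w * v[j] for w, v in zip(weights, V))
                    for j in range(len(V[0]))])
    return out

# Toy input: 2 tokens, d_k = d_v = 2 (values chosen arbitrarily)
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0]]
result = attention(Q, K, V)
```

Because each query here matches one key more strongly than the other, each output row lands closer to the corresponding value row while still mixing in the other one.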

Key Characteristics of Generative AI

- Stateless Nature: In their raw form, generative models do not possess persistent memory. Each API call is an independent transaction. Context must be explicitly passed in the prompt for the model to maintain conversational coherence.

- Probabilistic Output: Outputs are generated by sampling from a probability distribution over the next token. This stochasticity is controlled by sampling parameters such as temperature (T), which divides the logits before the softmax: T < 1 sharpens the distribution (more deterministic), and T > 1 flattens it (more random).

- Reactive Execution: The model responds only when prompted. It receives an input and produces its output token by token, with each token requiring one forward pass through its neural network layers. It cannot pause, reflect on intermediate results, or execute external code during generation.
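The effect of temperature on the output distribution can be seen with a few lines of arithmetic. This is a minimal sketch: the logits are hypothetical scores for three candidate tokens, and the function name is an illustrative choice rather than any library's API.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Convert logits to probabilities after dividing by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    m = max(scaled)                      # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.0]                 # hypothetical scores for three tokens
sharp = softmax_with_temperature(logits, 0.5)   # T < 1: mass concentrates on the top token
base = softmax_with_temperature(logits, 1.0)
flat = softmax_with_temperature(logits, 2.0)    # T > 1: mass spreads out
```

The top token's probability falls as T rises, which is why low temperatures are commonly chosen when reproducible output matters more than variety.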

Generative AI Use Cases in Software Engineering

- Code Synthesis and Boilerplate Generation: Automatically generating standard class definitions, REST API scaffolding, or SQL schemas based on natural language descriptions.

- Documentation: Converting complex algorithmic implementations into readable docstrings and README files.

- Unit Test Generation: Parsing existing functions and writing corresponding test cases in frameworks like PyTest or JUnit.

Generative AI Pros and Cons

Pros:

- Highly versatile across multiple modalities (text, code, image).

- Low latency for single-turn queries, since one request yields the final output.

- Pre-trained foundation models reduce the need for specialized, task-specific training data.

Cons:

- Prone to "hallucinations" (generating syntactically correct but factually incorrect or illogical statements).

- Incapable of taking action outside of its conversational interface.

- Limited by its training data cutoff date unless augmented with external systems.

What is Agentic AI?

Agentic AI systems are composite architectures where an LLM functions as the central reasoning engine ("brain") within a larger framework designed for autonomy. Rather than merely returning text to a user, an Agentic AI parses a high-level goal, breaks it down into sequential steps, determines which tools are necessary to complete those steps, executes them, and evaluates the results before proceeding.

This represents a shift from a simple input-output function to a persistent control loop. Agentic architectures typically rely on paradigms such as ReAct (Reasoning and Acting). In the ReAct framework, the model generates reasoning traces and task-specific actions in an interleaved manner. This allows the system to perform dynamic reasoning to adjust plans based on intermediate observations.

Core Components of Agentic Architectures

- The LLM Core: The foundational generative model used for natural language understanding, logical deduction, and decision-making.

- Memory Systems:

  - Short-term memory: Handled via the context window, capturing the immediate execution loop and scratchpad reasoning.

  - Long-term memory: Implemented via Vector Databases (e.g., Pinecone, Milvus). The agent stores past experiences or retrieved documents as high-dimensional vectors, accessing them via cosine similarity search.

- Planning and Orchestration: Frameworks like LangChain or LlamaIndex provide the scaffolding for planning algorithms. This includes techniques like Chain of Thought (CoT), Tree of Thoughts (ToT), or multi-agent debate.

- Tools and Actuators: The defining feature of an agent is its ability to interface with external APIs. This includes executing Python code in a secure sandbox, querying SQL databases, making HTTP requests, or interacting with a CI/CD pipeline.
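The long-term memory component described above can be illustrated with a toy in-memory store. This is a sketch under stated assumptions: `VectorMemory` is a hypothetical class standing in for a real vector database, and the three-dimensional embeddings are hand-written, whereas a real system would obtain them from an embedding model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

class VectorMemory:
    """Toy stand-in for a vector database (hypothetical, brute-force search)."""

    def __init__(self):
        self._items = []                 # list of (embedding, text) pairs

    def add(self, embedding, text):
        self._items.append((list(embedding), text))

    def search(self, query, k=1):
        """Return the k stored texts most similar to the query vector."""
        ranked = sorted(self._items,
                        key=lambda item: cosine_similarity(query, item[0]),
                        reverse=True)
        return [text for _, text in ranked[:k]]

memory = VectorMemory()
memory.add([1.0, 0.0, 0.0], "deploy failed: missing env var")
memory.add([0.0, 1.0, 0.0], "user prefers PostgreSQL")
memory.add([0.9, 0.1, 0.0], "deploy fixed by setting API_KEY")
top = memory.search([1.0, 0.05, 0.0], k=2)   # query vector "about deployments"
```

Production vector databases replace the brute-force sort with approximate nearest-neighbour indexes, but the retrieval contract (vector in, nearest texts out) is the same.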

Agentic AI Use Cases in Software Engineering

- Autonomous Bug Fixing: An agent reads a Jira ticket, clones the relevant repository, searches for the bug using grep-like tools, modifies the source code, runs unit tests to verify the fix, and automatically opens a Pull Request.

- Database Optimization: An agent continuously monitors slow query logs, formulates optimized SQL statements, tests the query execution plan, and applies indexing recommendations dynamically.

- End-to-End Test Automation: An agentic system navigates a live web application, autonomously identifying DOM elements, writing Cypress or Selenium scripts, and adapting the scripts when the UI layout changes.

Agentic AI Pros and Cons

Pros:

- High degree of autonomy, drastically reducing human intervention for multi-step tasks.

- Capable of interacting with real-world, real-time data through APIs.

- Self-correcting mechanisms allow the agent to recover from intermediate errors during execution.

Cons:

- High latency and API inference costs due to the numerous LLM calls required within a single reasoning loop.

- Non-deterministic execution paths make debugging and auditing difficult.

- Risk of infinite loops if the reasoning engine fails to formulate a valid escape condition.
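The cost point can be made concrete with token arithmetic. The sketch below assumes that every step of the loop re-sends the whole growing scratchpad and that no prompt caching is applied. All token counts are hypothetical, and the function names are illustrative.

```python
def single_call_tokens(prompt_tokens, output_tokens):
    """Tokens processed by one request/response pair."""
    return prompt_tokens + output_tokens

def agent_loop_tokens(prompt_tokens, step_output_tokens, observation_tokens, steps):
    """Total tokens when each step re-sends the accumulated context."""
    total = 0
    context = prompt_tokens
    for _ in range(steps):
        total += context + step_output_tokens               # input + output of this call
        context += step_output_tokens + observation_tokens  # scratchpad grows
    return total

single = single_call_tokens(500, 200)
looped = agent_loop_tokens(500, 200, 300, steps=5)
```

Under these assumptions, five steps process 8,500 tokens against 700 for the single call. The total grows faster than linearly in the number of steps because every call pays again for the earlier context.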

Generative AI vs Agentic AI: Key Differences

When evaluating the architectural and functional distinctions between these two systems, it is vital to understand that Agentic AI is an extension of Generative AI. The comparison comes down to how the latter augments the former with control logic and state management.

Below is a technical comparison detailing the core differences:

| Feature / Dimension | Generative AI | Agentic AI |
|---|---|---|
| Core Function | Content synthesis and next-token prediction based on statistical probability. | Goal achievement through autonomous reasoning, planning, and task execution. |
| System Architecture | Monolithic neural network (e.g., Transformer, Diffusion model). | Composite system (LLM + Vector DB + API Tools + Orchestration layer). |
| Execution Paradigm | Single-turn, reactive forward pass. Output generated immediately. | Multi-turn, cyclic execution loop (Observe → Reason → Act). |
| State and Memory | Stateless by default. Context must be re-injected per request. | Stateful. Utilizes persistent memory stores to maintain long-term context. |
| Error Handling | Fails silently by outputting hallucinations or incorrect data. Requires human correction. | Capable of self-correction. Parses error logs and retries with an altered strategy. |
| Human Interaction | Continuous. Human acts as the orchestrator ("prompt engineering"). | Minimal. Human provides the initial goal and acts as an overseer/supervisor. |


Architectural Deep Dive: Under the Hood

To see how Gen AI and Agentic AI differ from an engineering perspective, we must examine how the code differs. A Generative AI application typically involves a straightforward REST payload sent to an inference server. The response is the final product.

A standard Generative AI implementation in Python using the OpenAI API amounts to building a message list, sending one request, and reading the text from the response.
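A minimal sketch of a single-call implementation follows. It assumes the v1-style client from the `openai` Python package, whose `chat.completions.create` method accepts `model`, `messages`, and `temperature`. The model name, system prompt, and helper names are placeholders chosen for illustration. The client is passed in as an argument so the function can be exercised without network access.

```python
def build_messages(user_prompt, system_prompt="You are a helpful coding assistant."):
    """Assemble the chat payload; all context must be supplied on every call."""
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ]

def generate(client, user_prompt, model="gpt-4o-mini"):
    """One request, one response: no loop, no tools, no memory.

    `client` is expected to behave like `openai.OpenAI()`; the model name
    is a placeholder and should be replaced with one you have access to.
    """
    response = client.chat.completions.create(
        model=model,
        messages=build_messages(user_prompt),
        temperature=0.2,
    )
    return response.choices[0].message.content

# Usage (requires the `openai` package and an OPENAI_API_KEY environment variable):
#   from openai import OpenAI
#   print(generate(OpenAI(), "Write a Python function that reverses a string."))
```

Whatever text comes back is the end of the interaction. Any validation, retry, or follow-up is left to the caller.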

In contrast, an Agentic AI system requires a complex control loop. The agent must parse the prompt, determine what tools it has available, decide on an action, parse the observation from that action, and loop until a terminal condition is met.

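Conceptually, an Agentic execution loop in a simplified ReAct paradigm alternates between a reasoning step, a tool call, and an observation until the model produces a final answer or a step limit is reached.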
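As a conceptual sketch of a simplified ReAct-style loop, the code below replaces the LLM with a scripted stand-in so that it runs without any API. The `calculator` tool, the `scripted_llm` function, and the dictionary-based step format are all hypothetical. A real agent would send the scratchpad to a model and parse its reply.

```python
def calculator(expression):
    """Hypothetical tool: adds two integers written as 'a+b'."""
    left, right = expression.split("+")
    return str(int(left) + int(right))

TOOLS = {"calculator": calculator}

def scripted_llm(scratchpad):
    """Stand-in for a model call; returns the next step as a dict."""
    if "Observation:" not in scratchpad:
        return {"thought": "I need to add the numbers.",
                "action": "calculator", "input": "17+25"}
    observation = scratchpad.rsplit("Observation: ", 1)[1].strip()
    return {"thought": "I have the result.", "final": observation}

def run_agent(goal, llm, tools, max_steps=5):
    """Reason -> Act -> Observe until a final answer or the step limit."""
    scratchpad = f"Goal: {goal}\n"
    for _ in range(max_steps):                 # hard limit guards against endless loops
        step = llm(scratchpad)                 # Reason
        scratchpad += f"Thought: {step['thought']}\n"
        if "final" in step:                    # terminal condition
            return step["final"]
        tool = tools.get(step["action"])
        if tool is None:
            observation = f"unknown tool {step['action']!r}"
        else:
            try:
                observation = tool(step["input"])      # Act
            except Exception as exc:                   # report tool failures to the model
                observation = f"error: {exc}"
        scratchpad += (f"Action: {step['action']}[{step['input']}]\n"
                       f"Observation: {observation}\n")  # Observe
    return None                                # budget exhausted, no final answer

answer = run_agent("What is 17 + 25?", scripted_llm, TOOLS)
```

The scratchpad plays the role of short-term memory. Tool errors are fed back as observations rather than raised, which gives the model a chance to change strategy on the next iteration.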

This structural divergence is what makes Agentic AI significantly more powerful—and significantly more complex to build and maintain. The system must account for API failures, unexpected tool outputs, and the LLM's tendency to lose focus over long execution chains.

When to Use Gen AI vs Agentic AI

Choosing between standard Generative AI and Agentic AI is a critical architectural decision that heavily impacts system complexity, operational costs, and latency.

Opt for Generative AI When:

- The task is highly scoped and single-turn: Tasks like translating text, summarizing a static document, or generating an image do not require iterative planning.

- Latency is a strict constraint: Because Gen AI requires only a single network round-trip to the model, it is suitable for real-time user-facing features like chatbots and autocomplete engines.

- The environment is static: If the required output relies purely on the internal knowledge embedded within the model's weights (or a single retrieved context chunk via standard RAG), agents add unnecessary overhead.

- Cost predictability is essential: A single LLM call has a highly predictable token usage.

Opt for Agentic AI When:

- The task requires multi-step deductive reasoning: If a problem requires the model to "research," analyze the findings, and adjust its subsequent steps based on that analysis, an agent is required.

- Interaction with external state is mandatory: If the system needs to mutate state in an external environment (e.g., executing a database transaction, sending an email, or deploying code via a CI pipeline), an agentic architecture with tool-calling capabilities is strictly necessary.

- Self-correction is highly valued: In complex coding tasks where a syntax error might occur, an agent can read the compiler trace, recognize its mistake, and rewrite the code autonomously. Latency is traded for accuracy and autonomy.
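The self-correction pattern can be sketched as a generate, check, retry loop. In this illustration the "model" is a scripted stand-in whose first draft contains a syntax error, and Python's built-in `compile` plays the role of the compiler. The function names are hypothetical, and a real agent would pass the error text back to an LLM.

```python
def check_syntax(source):
    """Return None if the source compiles, else a short error message."""
    try:
        compile(source, "<generated>", "exec")
        return None
    except SyntaxError as exc:
        return f"line {exc.lineno}: {exc.msg}"

def scripted_model(task, feedback):
    """Stand-in for an LLM: the first draft has a bug, the retry fixes it."""
    if feedback is None:
        return "def add(a, b)\n    return a + b\n"     # missing colon
    return "def add(a, b):\n    return a + b\n"

def generate_with_retries(task, model, max_attempts=3):
    """Ask for code, feed compiler errors back, stop at the first valid draft."""
    feedback = None
    for attempt in range(1, max_attempts + 1):
        source = model(task, feedback)
        feedback = check_syntax(source)
        if feedback is None:
            return source, attempt
    raise RuntimeError(f"no valid code after {max_attempts} attempts: {feedback}")

code, attempts = generate_with_retries("write add(a, b)", scripted_model)
```

Each retry is an additional model call, which is the latency cost the bullet above refers to. The attempt cap keeps that cost bounded.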

The Future: Trends in Generative and Agentic AI

The trajectory of artificial intelligence points definitively toward increasingly capable agentic systems. We are moving away from monolithic, single-agent architectures toward Multi-Agent Systems (MAS). In frameworks like Microsoft's AutoGen or CrewAI, multiple agents with distinct personas and tools collaborate, debate, and verify each other's work. For example, a Software Engineer Agent writes code, while a distinct QA Agent simultaneously reviews it and writes unit tests, passing feedback back to the engineer autonomously.

Furthermore, we are witnessing the rise of neuro-symbolic AI within agent architectures. While generative models excel at pattern matching (neural), they often struggle with rigorous mathematics and formal logic. By hooking LLMs up to symbolic solvers (like Wolfram Alpha or Python interpreters) via agentic tool use, engineers can combine the fluid natural language capabilities of Gen AI with the deterministic, verifiable results of symbolic computation.
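A small example of the symbolic side is a calculator tool that an agent could call instead of doing arithmetic token by token. The sketch below uses Python's `ast` module to evaluate only basic arithmetic on numeric literals. The `evaluate` function is a hypothetical tool written for this illustration, not part of any agent framework.

```python
import ast
import operator

_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.USub: operator.neg,
}

def evaluate(expression):
    """Evaluate +, -, *, / on numeric literals and reject everything else."""
    def walk(node):
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.operand))
        raise ValueError("unsupported expression")
    return walk(ast.parse(expression, mode="eval").body)

value = evaluate("123456789 * 987654321")   # exact integer arithmetic, no sampling
```

Walking the syntax tree with an allowlist of node types, rather than calling `eval`, means names, attribute access, and function calls in model-written input are rejected instead of executed.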

Memory architectures are also rapidly evolving. Rather than relying solely on simplistic semantic search over vector databases, next-generation agents use graph databases (Knowledge Graphs) to maintain complex relational state over time, allowing the agent to continuously learn and adapt to a specific enterprise's internal context.

Understanding the difference between Generative AI and Agentic AI is no longer just a theoretical exercise; it is the blueprint for the next generation of software engineering. While Generative AI unlocked the ability for machines to communicate and create, Agentic AI is unlocking the ability for machines to independently act, reason, and solve complex operational problems at scale.

Frequently Asked Questions (FAQ)

What is an AI agent?

An AI agent is a software system that uses a generative foundation model as its cognitive engine to perceive its environment, formulate a plan, and execute actions via external tools to achieve a predefined goal autonomously.

Is Agentic AI replacing Generative AI?

No, Agentic AI is not replacing Generative AI; it builds upon it. Generative AI models (like GPT-4, Claude 3, or Llama 3) serve as the fundamental reasoning and natural language processing core within an Agentic AI architecture. Without Gen AI, modern AI agents would lack the ability to understand instructions or generate dynamic code to interact with APIs.

How do you prevent an Agentic AI from executing harmful API calls?

Securing agentic systems requires strict boundaries. Engineers implement "Human-in-the-loop" (HITL) checkpoints for destructive actions (e.g., dropping a database or authorizing a payment). Additionally, agents should operate within isolated sandbox environments (like Docker containers for code execution), and their API access tokens should follow the principle of least privilege, granting only the permissions each task requires.
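A HITL checkpoint combined with a tool allowlist can be sketched as a thin wrapper around the agent's tools. Everything here is hypothetical: `GuardedToolbox`, the two example tools, and the approver callback are illustrative names. A real deployment would route the approval request to a person and enforce permissions at the API-token level as well.

```python
class ApprovalRequired(Exception):
    """Raised when a destructive tool is called without human approval."""

class GuardedToolbox:
    def __init__(self, tools, destructive, approver):
        self._tools = dict(tools)              # allowlist: only these tools exist
        self._destructive = set(destructive)   # names that need sign-off
        self._approver = approver              # callable(name, args) -> bool

    def call(self, name, *args):
        if name not in self._tools:
            raise PermissionError(f"tool {name!r} is not on the allowlist")
        if name in self._destructive and not self._approver(name, args):
            raise ApprovalRequired(f"{name} needs human approval")
        return self._tools[name](*args)

def read_rows(table):
    return f"rows from {table}"

def drop_table(table):
    return f"dropped {table}"

toolbox = GuardedToolbox(
    tools={"read_rows": read_rows, "drop_table": drop_table},
    destructive={"drop_table"},
    approver=lambda name, args: False,   # deny by default; a real system asks a person
)
safe_result = toolbox.call("read_rows", "orders")
```

Read-only calls pass straight through, while `toolbox.call("drop_table", "orders")` raises `ApprovalRequired` until the approver returns `True`. Tools that were never registered cannot be invoked at all.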