Disjoint Set (Union Find Algorithm)

The Disjoint-Set data structure, also known as Union-Find, manages elements in non-overlapping subsets. It's primarily used for graph connectivity problems, offering two operations: Union, to merge sets, and Find, to identify a set's leader. This structure excels in tasks involving elements initially represented as separate sets, enabling efficient set combination and connectivity checks between elements. It's defined by disjoint sets (non-intersecting) and the Union-Find algorithm, which determines subset membership and merges subsets. The Disjoint-Set structure is crucial for problems requiring frequent set merges and connectivity queries, due to its near-constant-time operations.

How to Combine Two Sets?

Whenever we have been given a problem that contains n elements represented as separate sets initially and we need to the perform following operations:

- Combine two sets.

- Find the connectivity of two given elements i.e. whether they belong to the same set or not. Then it is advisable to use the Disjoint-Set data structure to pose an efficient solution. Disjoint Set Union is sometimes also referred to as Union-Find because of its two important operations -

Disjoint Set: Two sets are called disjoint set if the intersection of two sets is ϕ i.e. NULL. A Disjoint-Set data structure that keeps records of a set of elements partitioned into several non-overlapping (Disjoint) subsets.

Union Find: Union-Find algorithm performs two very useful operations -

Find: To find the subset a particular element 'k' belongs to. It is generally used to check if two elements belong to the same subset or not.

Union: It is used to combine two subsets into one. A union query, say Union(x, y) combines the set containing element x and the set containing element y."

Example of Disjoint Set

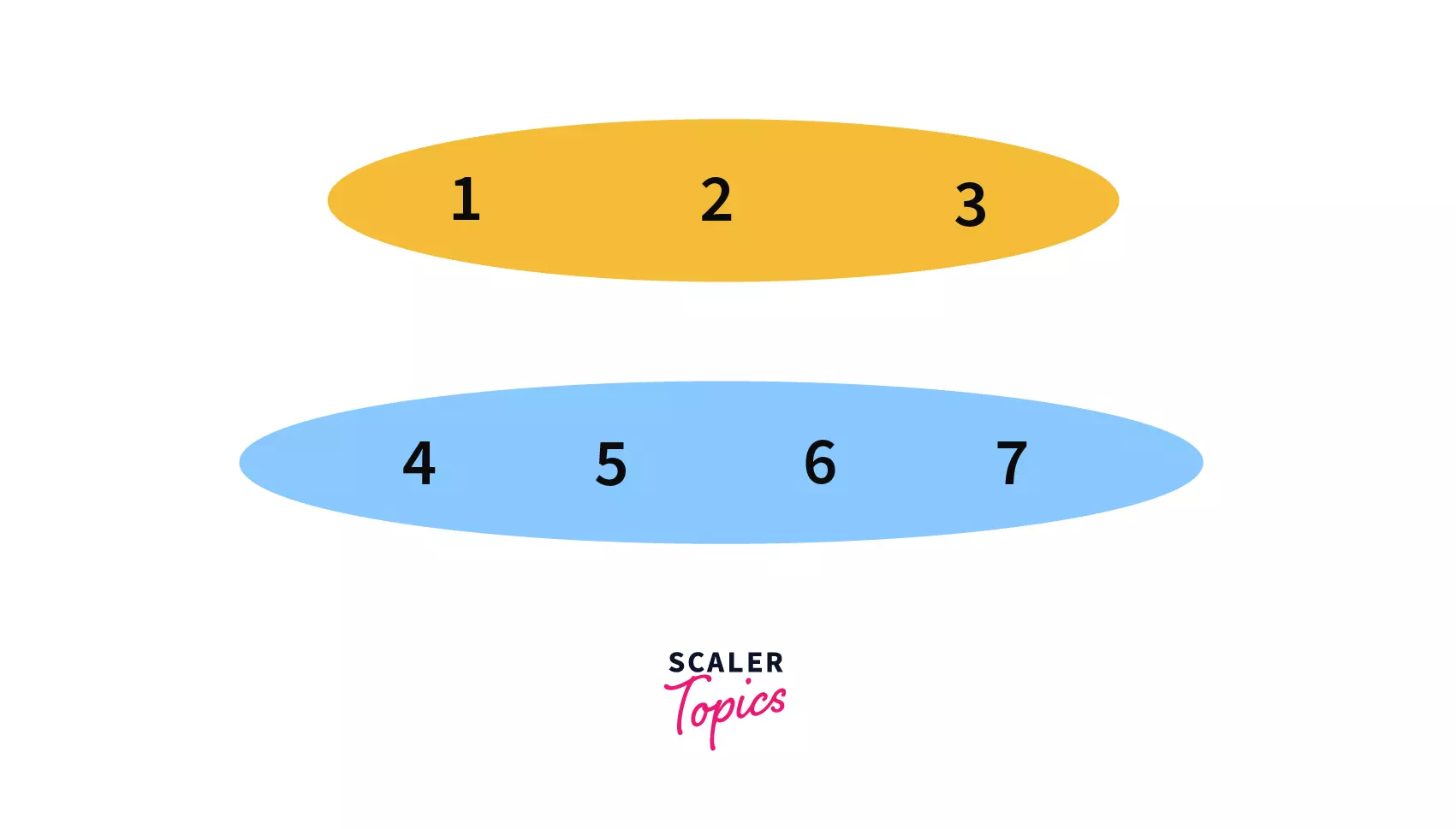

In this example, we are going to see how the union queries (series of Union operations) work in a Disjoint Set.

Let's assume we have 7 nodes initially, we will store them in the form of trees.Where each tree corresponds to one set and the root of the tree will be the parent/leader of the set.

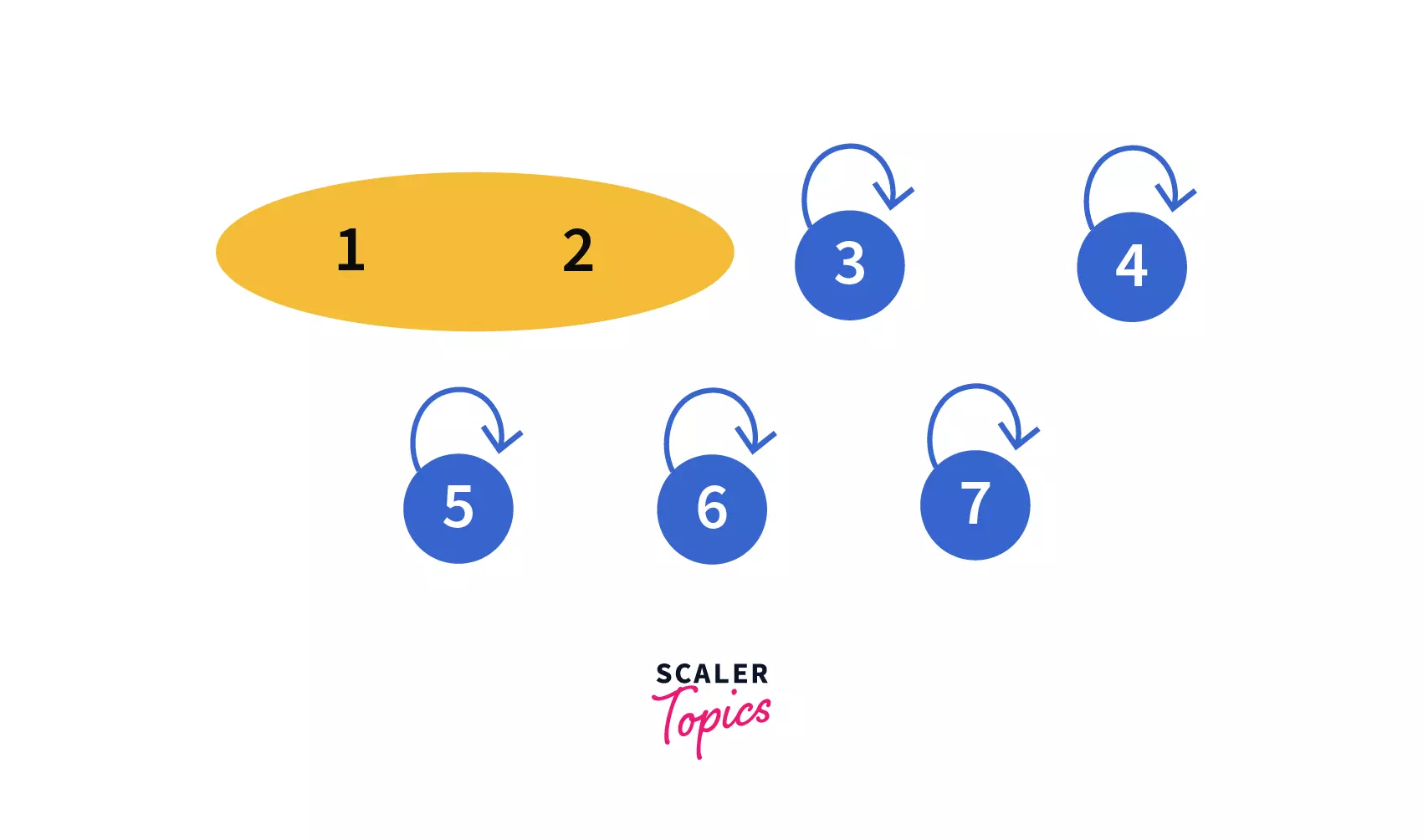

At first, you can see that all the seven nodes are parents of themselves. In simple words we can say, we have seven different trees containing 1 element each i.e. the root of the tree.

Now we will perform the following union queries one by one on them --

- Union(1, 2)

- Union(2, 3)

- Union(4, 5)

- Union(6, 7)

- Union(5, 6)

- Union(2, 6)

-

In our first query Union(1, 2) we need to join two sets i.e. 1 and 2 into one.

After performing the query we can see that, 1 and 2 are in the same set with parent/leader as 1 so our disjoint set will look like -

-

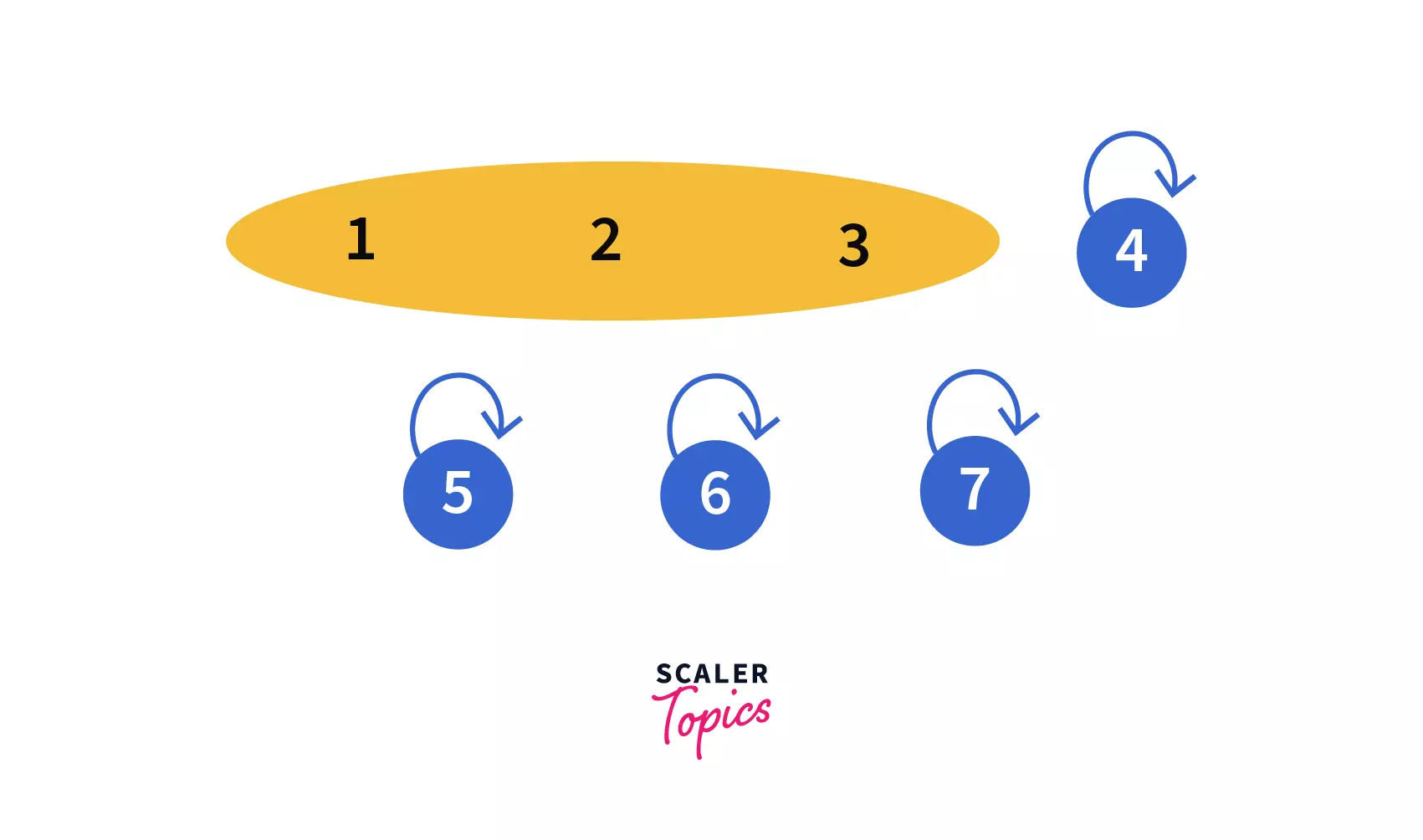

In our second query, Union(2, 3) we need to join the sets which contain elements 2 and 3. After performing the query we can see that, 1, 2, and 3 are clubbed into one set so our disjoint set will look like -

-

In our third query, Union(4, 5) we need to join the sets which contain the elements 4 and 5. After performing the query we can see that, 4 and 5 are in the same set now with parent/leader as 4, making our disjoint set like -

-

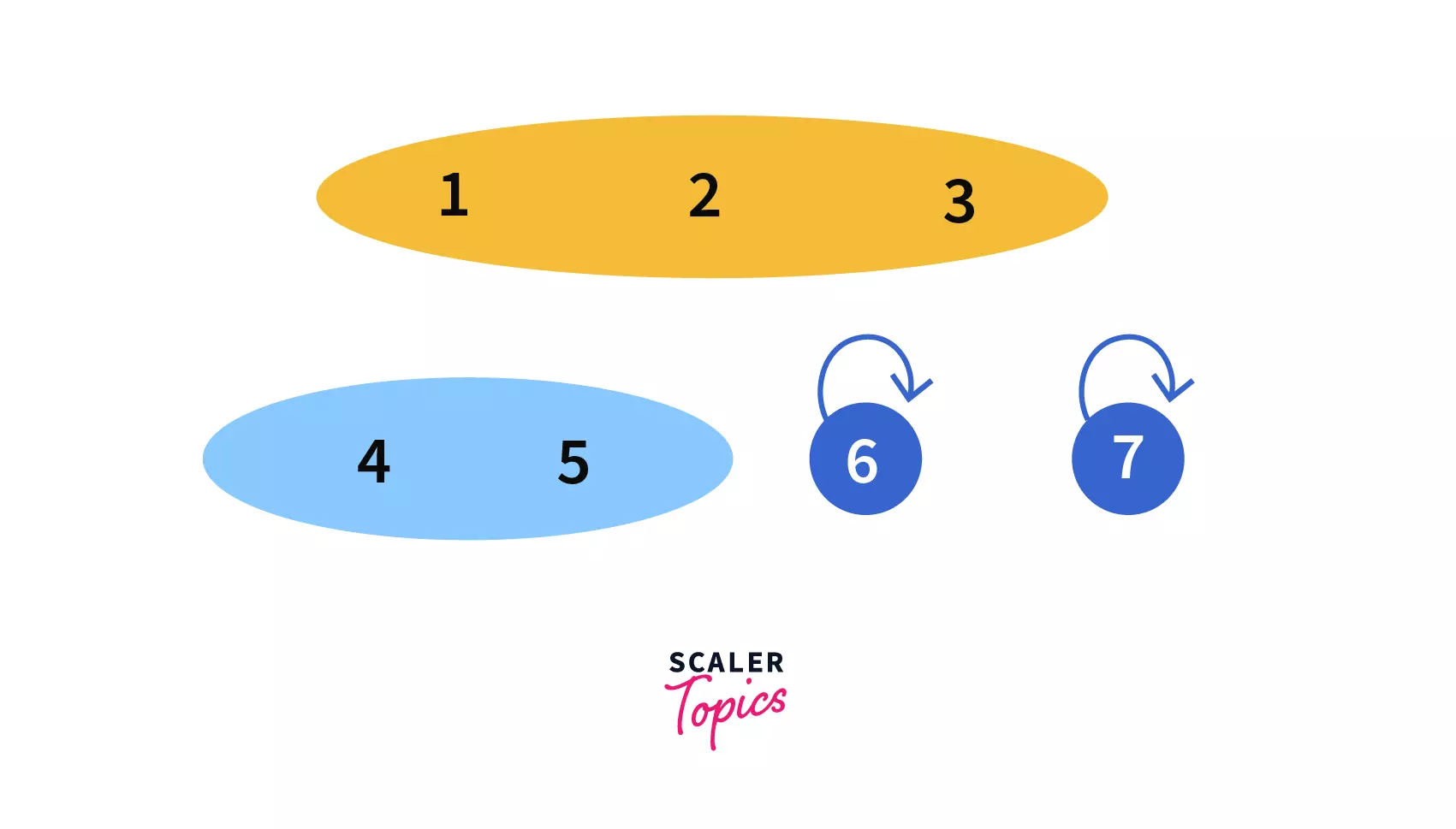

In our fourth query, Union(6, 7) we need to join the sets which contain the elements 6 and 7. After performing the query we can see that, 6 and 7 are in the same set now with parent/leader as 6, making our disjoint set like -

-

In our fifth query, Union(5, 6) we need to join the sets which contain the elements 5 and 6. After performing the query we can see that, 6 and 7 are in the same set now with parent/leader as 4, making our disjoint set like -

-

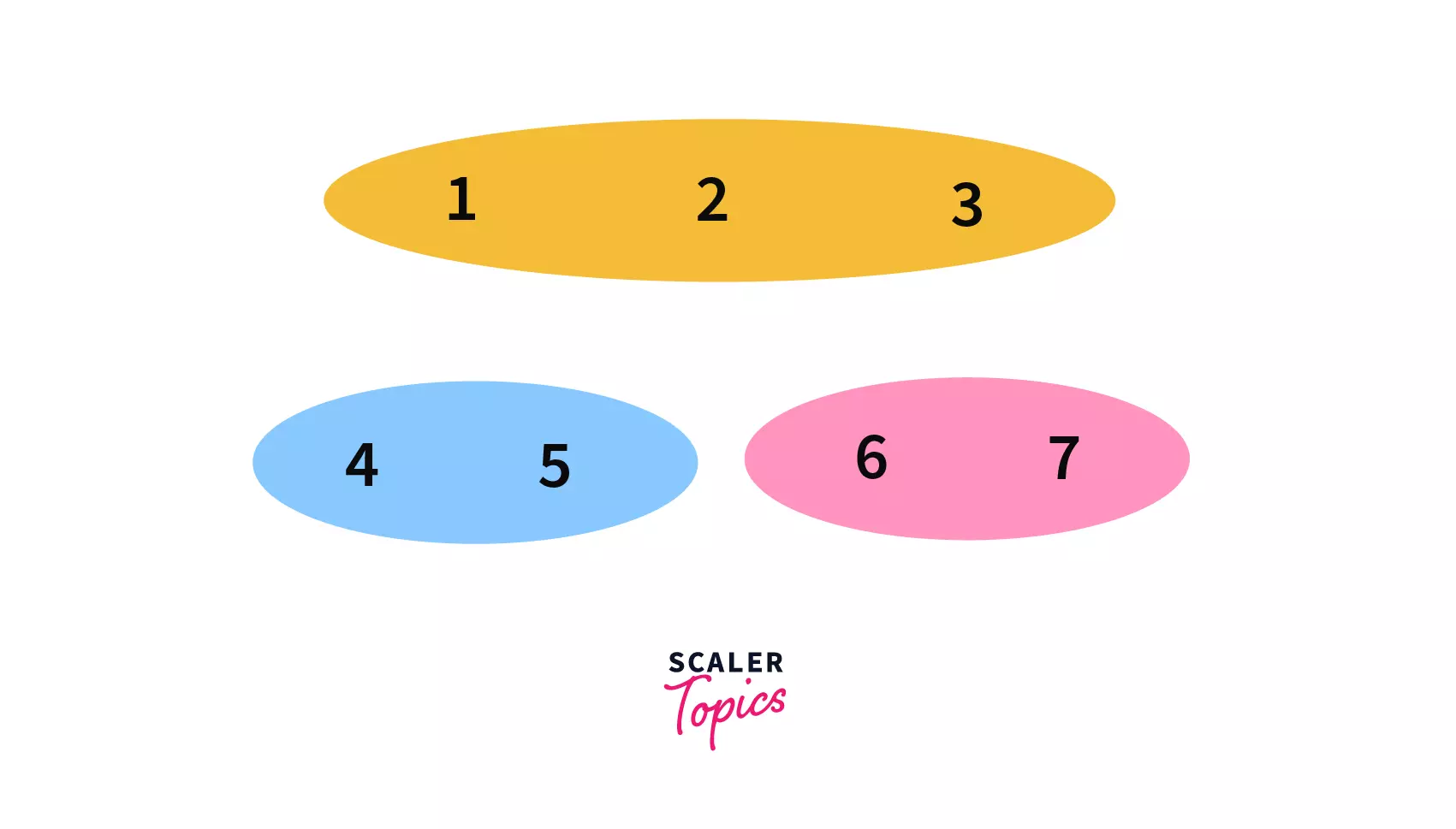

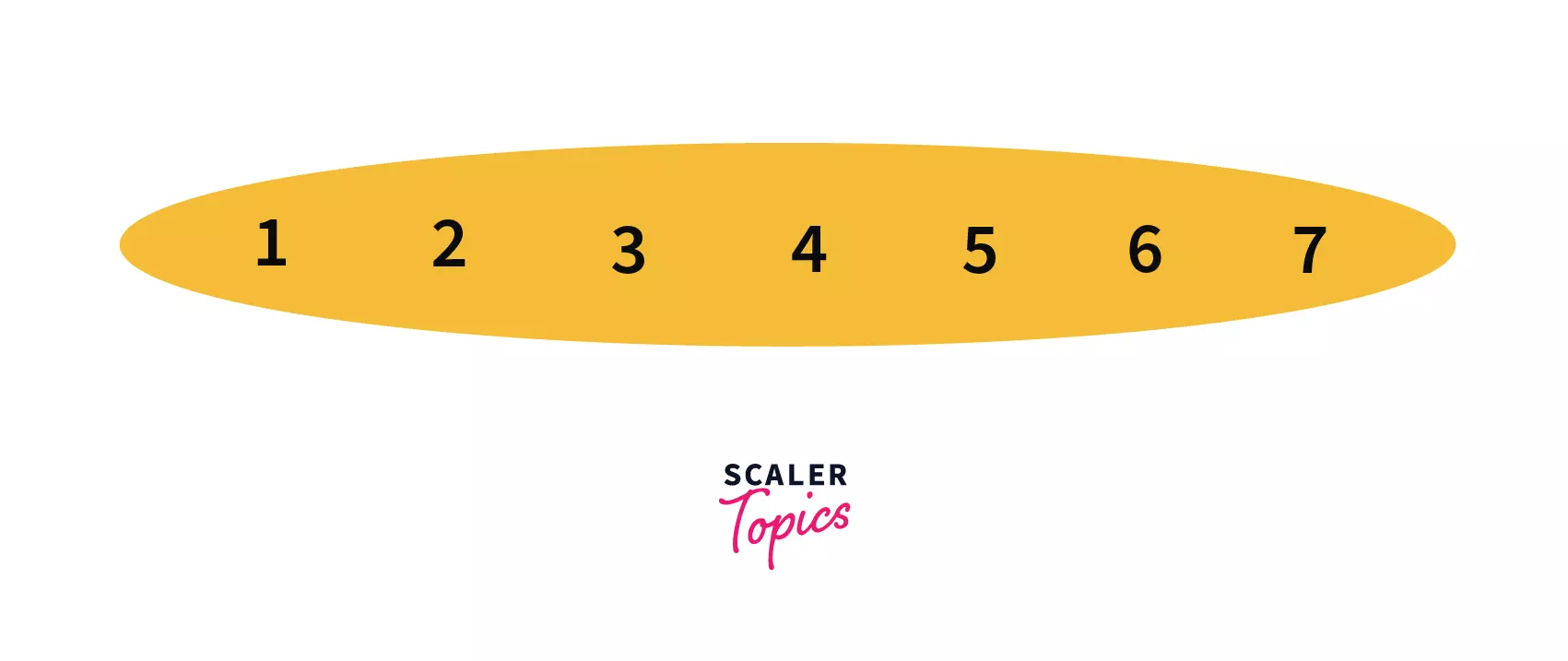

In our sixth query, Union(2, 6) we need to join the sets which contain elements 2 and 6. After performing the query we can see that, all the elements i.e. 1 to 7 are in the same set now with parent/leader as 1, making our disjoint set like -

Algorithms

Naive Implementation:

The most basic implementation of the Disjoint set one can think of is to keep a track of the parent of every element present in a list/array.

We will have a global array/list of size n where 'n' is the number of elements in the set.

Finding the parent of an element 'u' is very easy, since we are keeping track of parent of every element. If parent[u]=u then we will return parent[u] in the other case we will recursively find the parent of parent[u] until the condition parent[u]=u satisfies.

For merging two sets, Let we need to perform Union(u, v) then

-

We will find parents of u and v.

-

If they found different (which means u and v belongs to different sets) then we would merge them by making parent[u]=v or parent[v]=u.

Pseudocode:

And that's it, yes you read it right this is the pseudocode for implementing Disjoint Set data structure, but it is not efficient for large inputs.

Complexity Analysis:

- Find - Time complexity of Find operation is in the worst case (consider the case when all the elements are in the same set and we need to find the parent of a given element then we may need to make n recursive calls).

- Union - For union query (say Union(u, v)) we need to find the parents of u and v making its time complexity to be .

This approach is not much efficient, so to get the full-fledged advantage of Disjoint Set Union we will look for some minor modifications that can make our code efficient.

Optimization 1 (Path Compression):

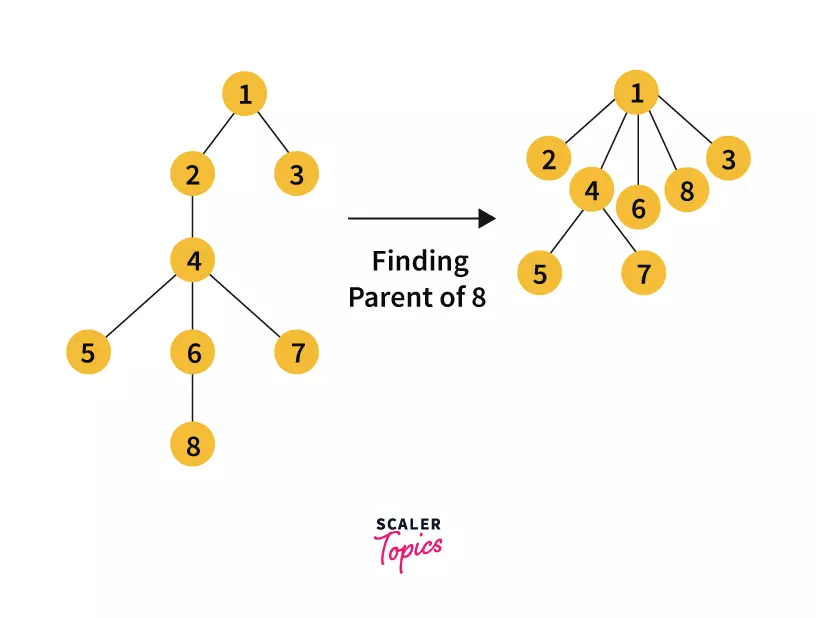

Let u be a node and p be its parent. When we are finding parent of u recursively, we are also finding parent of all those intermediate nodes which we visit in the path u → p.

The trick here is to shorten the path to the parent of all those intermediate nodes by directly connecting them to the leader of the set.

You can see that in the process of finding the parent of node 8 we have attached all the intermediate nodes to 1.

To achieve this, we just need to modify our find function a bit and that minor modification is - During the recursive call of finding parent of parent[u] we will also pass the result to parent[u] (please refer to the last line of underlying pseudocode for better understanding)

This is firstly finding the root of the set and in the process of stack unwinding, we are attaching all the intermediate nodes to their representative. Yes by modifying that line we reduced the time complexity from to

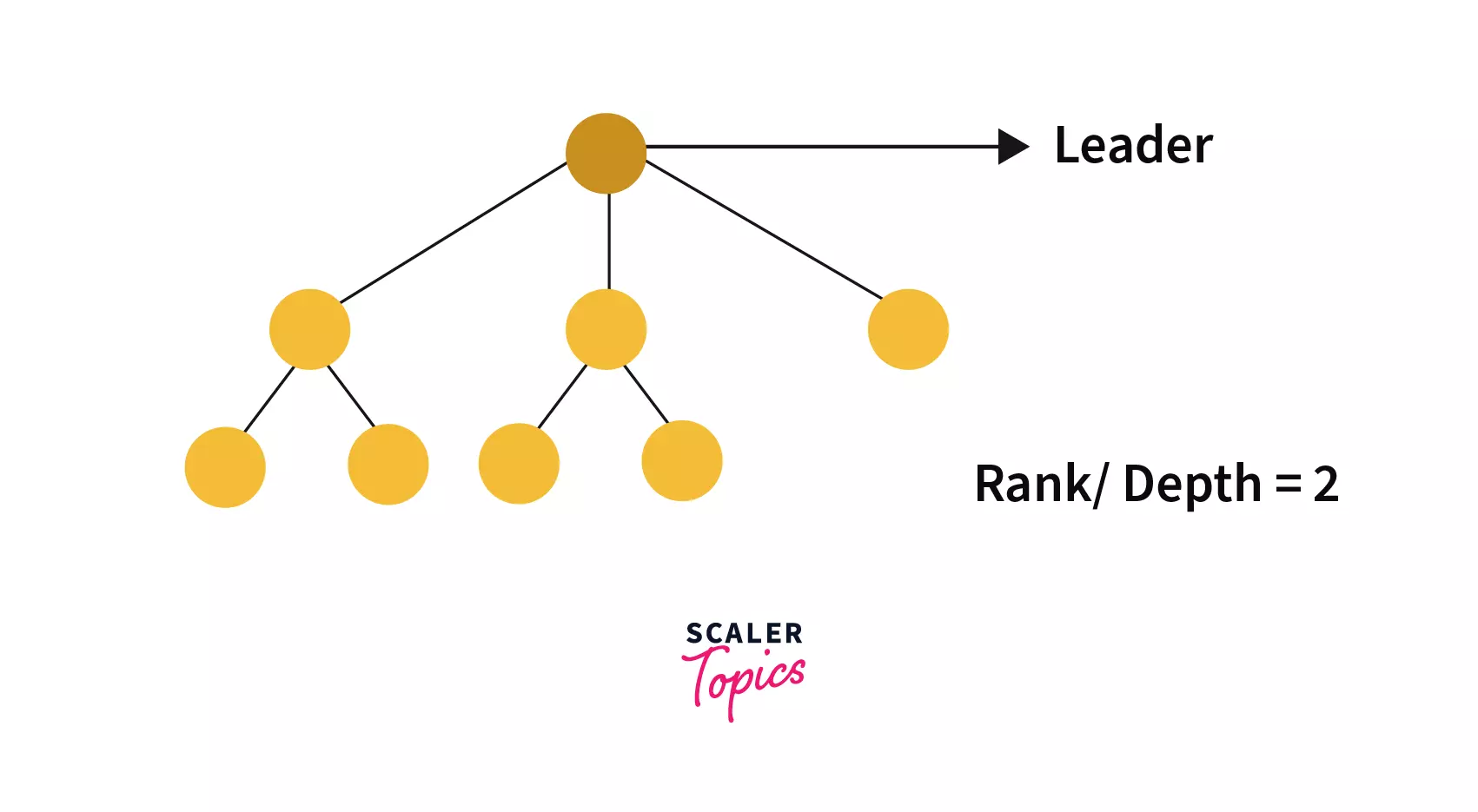

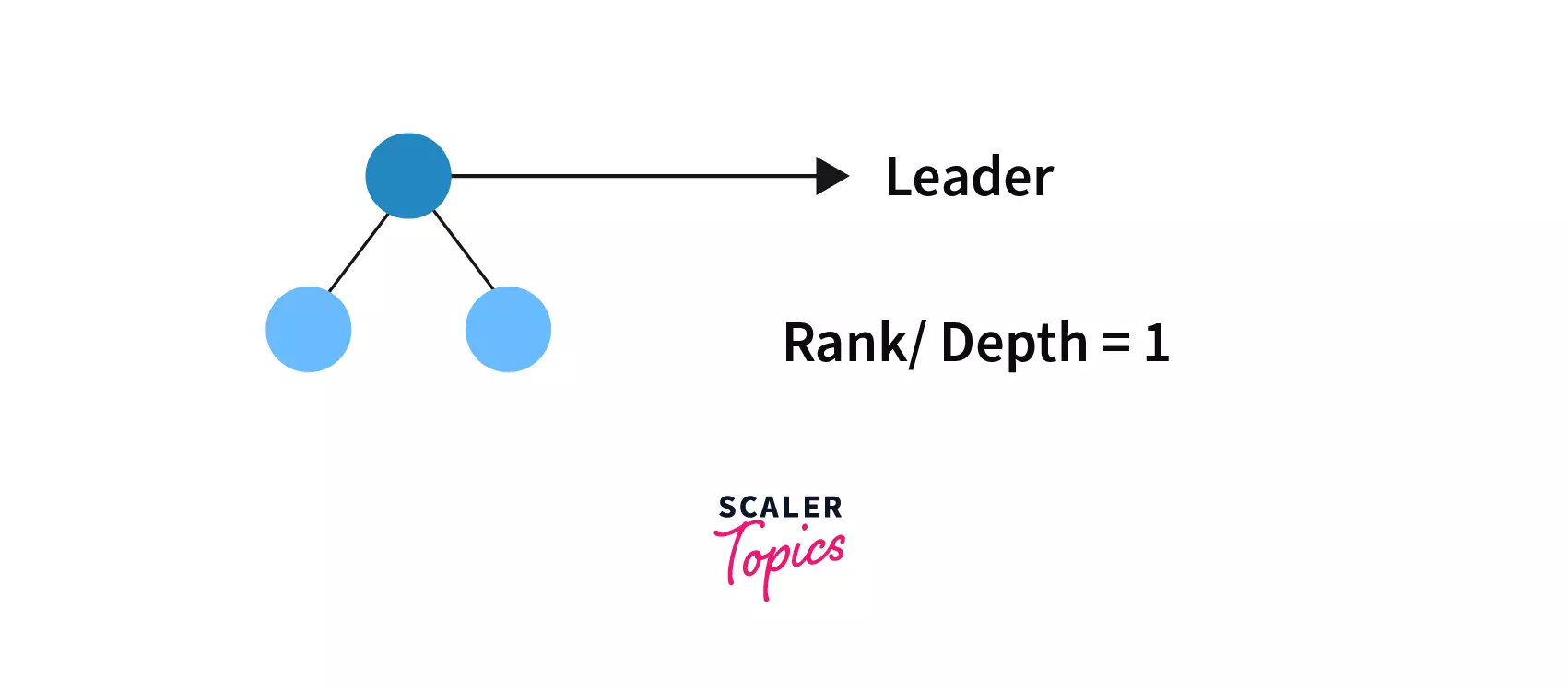

Optimization 2(Union by Rank):

In this optimization, we would be very much careful about which tree is getting attached to the other one. In the naive implementation, the second tree is always getting attached to the first one which could lead the structure to become like the skewed tree which would have linear chains of length O(n).

So what we will do is, we will maintain a record of the depth of each tree (Rank) and during the union operation we will attach the tree with lower rank to the tree with higher the rank thus Rank of the final tree (after union operation) will remain the same.

In case, if the Rank of both trees is equal we will join any one of them with the other and will increase the rank by 1 of the tree to which another one is joined.

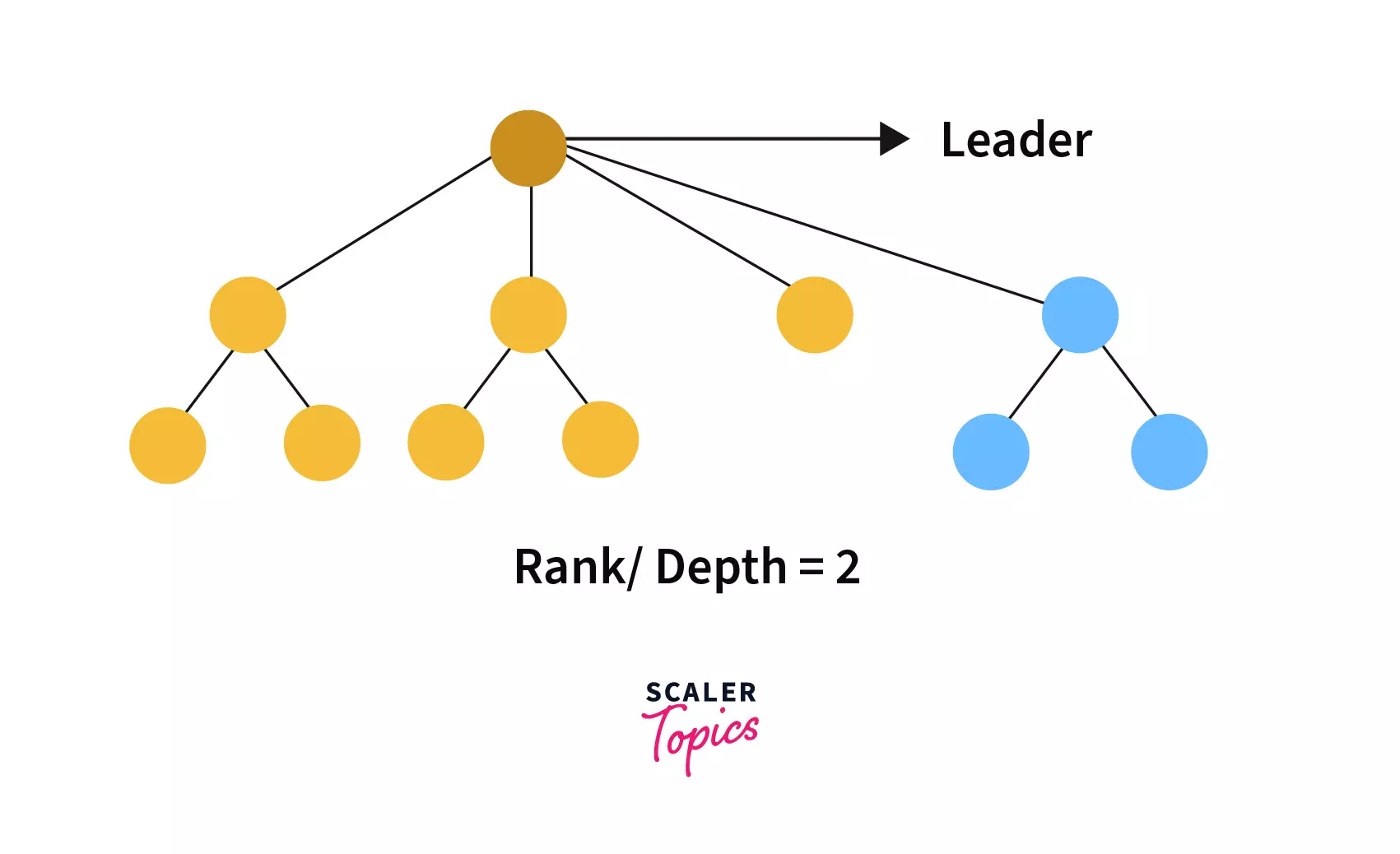

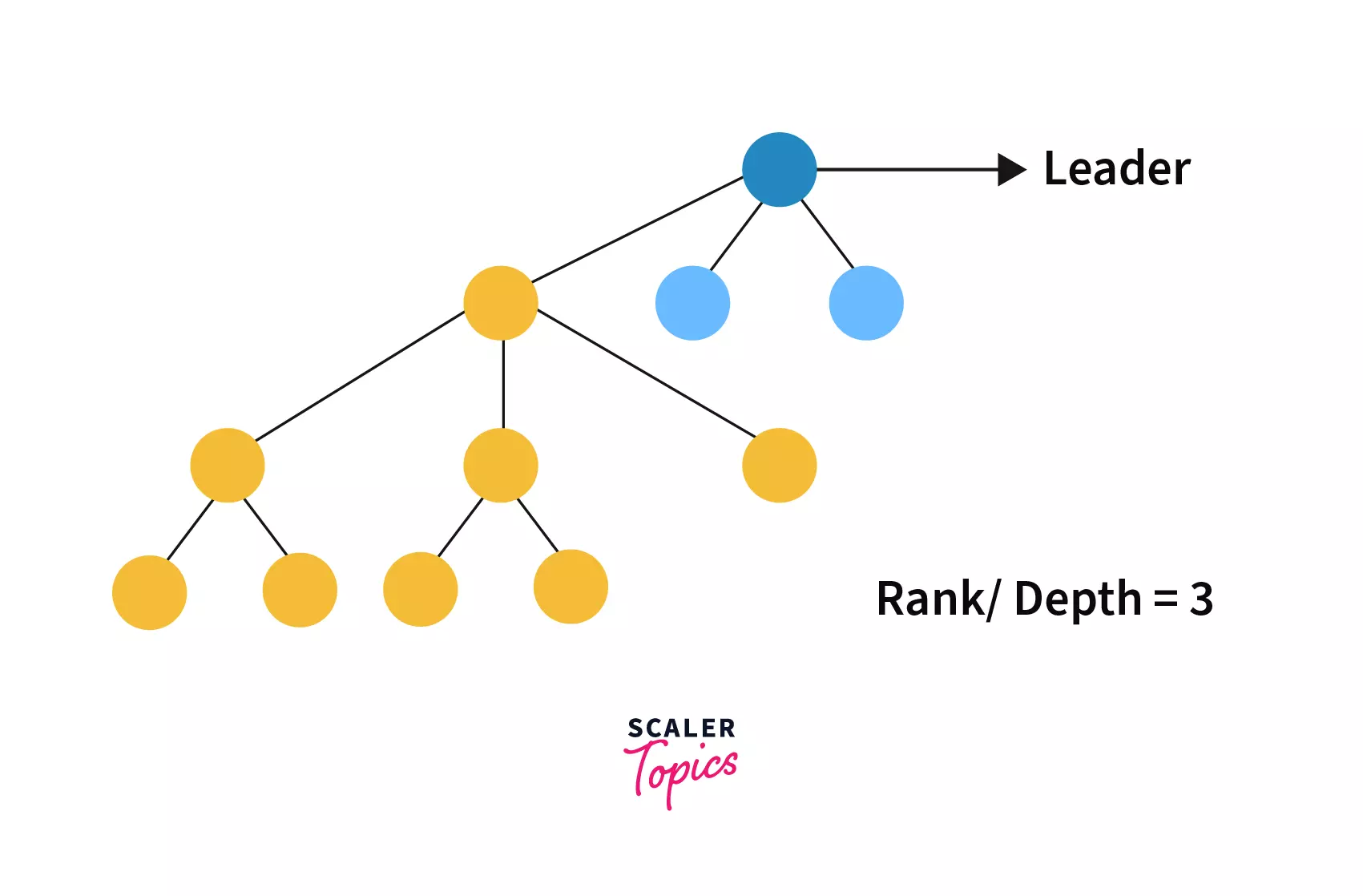

For example -

Suppose we need to perform on give two trees -

Tree 1 -

Tree 2 -

If we combine them by checking their rank then we would find rank [tree1]=2 and rank[tree2]=1 then, the combined tree would be -

We can see that since tree 2 is attached to tree 1 so, the rank of the combined tree is same as tree 1.

We can see that since tree 2 is attached to tree 1 so, the rank of the combined tree is same as tree 1.

If combine Tree 1 and Tree 2 without checking their rank then the combined tree would look like -

Now we can observe attaching tree 1 to tree 2 is not a good idea as the depth of the combined tree has been increased by 1 which would cost us more time to find the leader of the tree.

The change in algorithm of union function is as follows:

- When merging two sets, we will check which set has a greater rank then we will attach a lower rank tree to the tree with a higher rank.

- In case both trees have equal rank then we will attach anyone of them to other but now we need to increase the rank of the tree to which other is joined by one.

By doing this modification alone (i.e. without Path compression) we can reduce the time complexity to .

Most Optimized Approach:

In optimization 1, we have seen how can we compress the path by directly attaching all the intermediate nodes to the leader of the set during the process of finding the parent of node u the process is termed as "Path Compression".

In optimization 2, we have seen that how can we minimize the depth of trees by attaching lower rank trees to trees with higher ranks while merging them. The process is termed as "Union by Rank"

So merging optimization 1 and 2 seems to be a good idea, isn't it? The most optimal approach is the same only i.e. merging Optimization 1 and 2. If we implement the Find function using Path Compression and the Union function using Union by rank we can achieve the most optimized algorithm.

For implementing we just need two global arrays i.e. parent and rank of size n each to maintain parent and rank of every node present. For Find function we will use the pseudocode used in Optimization 1 and for Union function we will use pseudocode used in Optimization 2.

PesudoCode:

Code:

To make our code more modular let's make a class to implement with following data members and methods -

Data Members

- - It is an integer type array of size , where is the number of nodes present. It holds information about parent of each node. Initially,

- - It is also an integer type array of size to hold information about rank of a node. Initially, .

Methods -

- - It is an integer type function which takes one argument . Its main aim is to find leader of set to which belongs. It is implemented according to the logic discussed in Path compression section.

- - It is a void type function which takes two arguments and . It merge the set to which belongs and the set to which belongs. It is implemented as per the logic discussed in Union by rank section.

-

C/C++:

-

Java:

-

Python:

Complexity Analysis of Most Optimized Approach:

If we combine both optimizations i.e. Path compression and Union by rank then we can achieve almost constant time complexity.

The final amortized time complexity is calculated to be O((n)), where (n) is inverse Ackermann function which is defined as -

Where is the Ackermann funcntion it is an extremely rapid growing function and hence it's inverse i.e. is extraordinarily slow-growing function is defined by -

Some sample values of Ackermann function are --

| Function | Value |

|---|---|

| 1 | |

| 3 | |

| 7 | |

| 2047 | |

| >>1080 |

The growth of function is too slow and hence the value of would not exceed 5 for any n 10600 as otherwise, n would be more than the total number of atoms present in the universe.

Applications of Disjoint Set

- It is used to find Cycle in a graph as in Kruskal's algorithm, DSUs are used.

- Checking for connected components in a graph.

- Searching for connected components in an image.

Boost your C++ proficiency with our expertly designed Data Structures in C++ Certification Course. Get started now!

Conclusion

- In this blog, we have discussed one of the important data structures i.e. Disjoint Set & Union which is used to find the connectivity of different components in a graph efficiently.

- We have seen the naive approach to implement DSU then we have seen several optimizations viz. Path compression and Union by rank. Also, we have seen the most optimized approach with almost constant time complexity.

- It can also be used for several other types of problems where we need to process many queries in which we have to check whether two objects are connected directly or indirectly.