CPU Scheduling in Operating System

Overview

CPU scheduling is the task performed by the CPU that decides the way and order in which processes should be executed. There are two types of CPU scheduling - Preemptive, and non-preemptive. The criteria the CPU takes into consideration while "scheduling" these processes are - CPU utilization, throughput, turnaround time, waiting time, and response time.

What is CPU Scheduling?

Before we get to CPU scheduling, let's define a process. A process is essentially just a set of instructions or a program in execution.

As you can see in the diagram above, we have processes that come from the job queue to the ready queue (in primary memory) that are one by one, in some manner given resources, and then their execution is completed.

Say you have a uni programming system (like MS-DOS) and a process that requires I/O is being executed. While it waits for the I/O operations to complete, the CPU remains idle. This is wrong, because we're wasting the resources of the CPU, as well as time, and might result in some processes waiting too long for their execution.

In multiprogramming systems, however, the CPU does not remain idle whenever a process currently executing waits for I/O. It starts the execution of other processes, making an attempt to maximize CPU utilization. How does the CPU decide which process should be executed next from the ready queue for maximum utilization of the CPU? This procedure of "scheduling" the processes, is called CPU scheduling.

Stop learning AI in fragments—master a structured AI Engineering Course with hands-on GenAI systems with IIT Roorkee CEC Certification

What are the Types of CPU Scheduling?

There are essential 4 conditions under which CPU scheduling decisions are taken:

- If a process is making the switch between the running state to the waiting state (could be for an I/O request, or invocation of wait() for terminating one of its child processes)

- If a process is making the switch from the running state to the ready state (on the occurrence of an interrupt, for example)

- If a process is making the switch between waiting and ready state (e.g. when its I/O request completes)

- If a process terminates upon completion of execution.

So in the case of conditions 1 and 4, the CPU does not really have a choice of scheduling, if a process exists in the ready queue the CPU's response to this would be to select it for execution. In cases 2 and 3, the CPU has a choice of selecting a particular process for executing next.

There are mainly two types of CPU scheduling:

Non-Preemptive Scheduling

In the case of non-preemptive scheduling, new processes are executed only after the current process has completed its execution. The process holds the resources of the CPU (CPU time) till its state changes to terminated or is pushed to the process waiting state. If a process is currently being executed by the CPU, it is not interrupted till it is completed.

Once the process has completed its execution, the processer picks the next process from the ready queue (the queue in which all processes that are ready for execution are stored).

For Example:

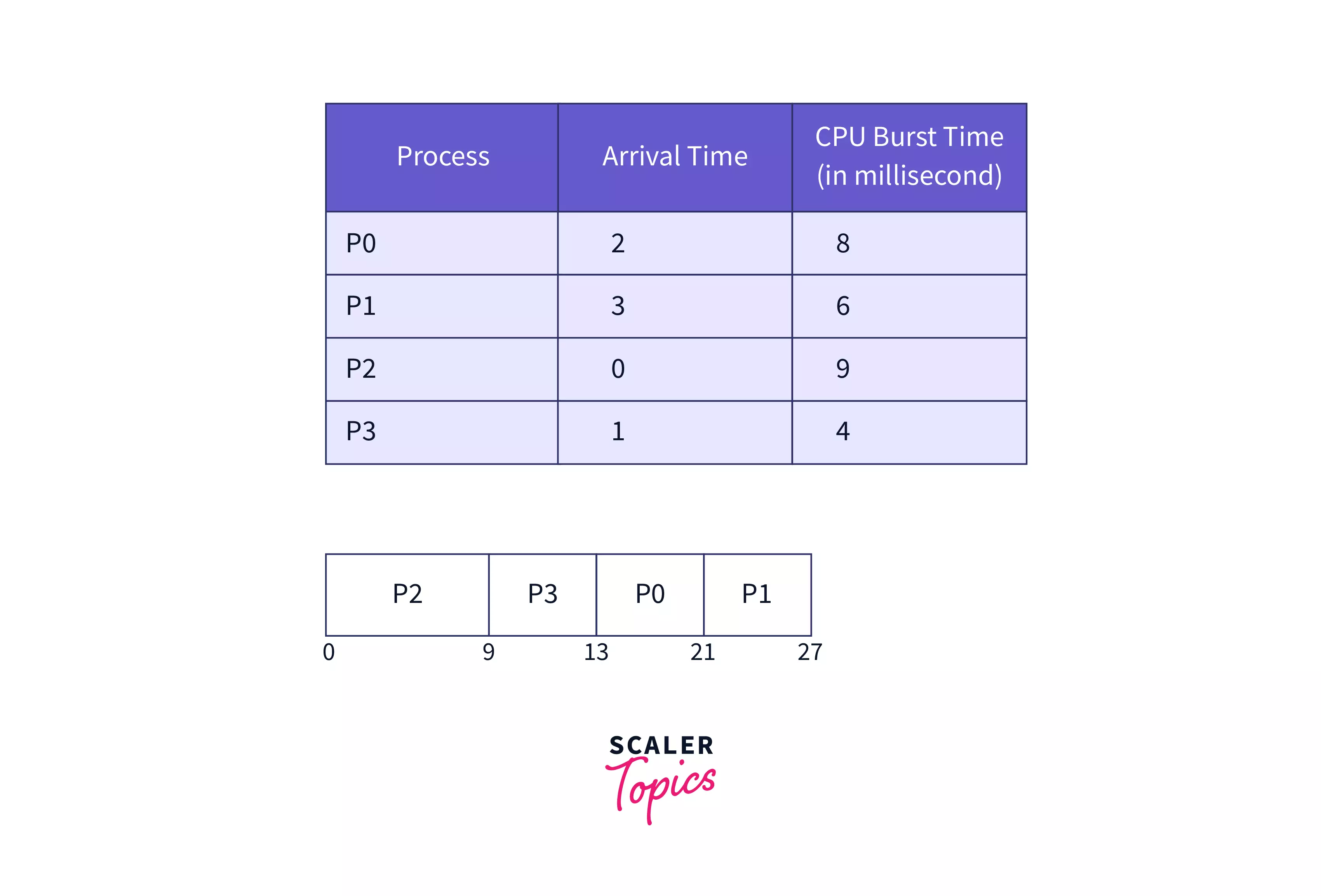

In the image above, we can see that all the processes were executed in the order in which they appeared, and none of the processes were interrupted by another, making this a non-preemptive, FCFS (First Come, First Served) CPU scheduling algorithm. P2 was the first process to arrive (arrived at time = 0), and was hence executed first. Let's ignore the third column for a moment, we'll get to that soon. Process P3 arrived next (at time = 1) and was executed after the previous process - P2 was done executing, and so on.

Some examples of non-preemptive scheduling algorithms are - Shortest Job First (SJF, non-preemptive), and Priority scheduling (non-preemptive).

Master structured AI Engineering + GenAI hands-on, earn IIT Roorkee CEC Certification at ₹40,000

Preemptive Scheduling

Preemptive scheduling takes into consideration the fact that some processes could have a higher priority and hence must be executed before the processes that have a lower priority.

In preemptive scheduling, the CPU resource is allocated to a process for only a limited period of time and then those resources are taken back and assigned to another process (the next in execution). If the process was yet to complete its execution, it is placed back in the ready state, where it will remain till it gets a chance to execute once again.

So, when we take a look at the conditions under which CPU scheduling decisions are taken on the basis of which CPU provides its resources to processes, we can see that there isn't really a choice in making a decision when it comes to condition 1 and 4. If we have a process in the ready queue, we must select it for execution.

However, we do have a choice in conditions 2 and 3. If we opt to make the choice of scheduling only if a process terminates (condition 4) or if the current process execution is waiting for I/O (condition 1) then we can say that our scheduling is non-preemptive, however, if we make scheduling decisions in other conditions as well, we can say that our scheduling process is preemptive.

Important CPU Scheduling Terminologies

Let's now discuss some important terminologies that are relevant to CPU scheduling.

- Arrival time: Arrival time (AT) is the time at which a process arrives at the ready queue.

- Burst Time: As you may have seen the third column is 'burst time', It is the time required by the CPU to complete the execution of a process, or the amount of time required for the execution of a process. It is also sometimes called the execution time or running time.

- Completion Time: As the name suggests, completion time is the time at when a process completes its execution. It is not to be confused with burst time.

- Turn-Around Time: Also written as TAT, turn-around time is simply the difference between completion time and arrival time (Completion time - arrival time).

- Waiting Time: The Waiting time (WT) of a process is the difference between turn around time and burst time (TAT - BT), i.e. the amount of time a process waits to get CPU resources in the ready queue.

- Response Time: Response time (RT) of a process is the time after which any process gets CPU resources allocated after entering the ready queue.

CPU Scheduling Criteria

Now if the CPU needs to schedule these processes, it must definitely do it wisely. What are the wise decisions it should make to create the "best" scheduling?

- CPU Utilization: It would make sense if the scheduling was done in such a way that the CPU is utilized to its maximum. If a scheduling algorithm does not waste any CPU cycle or makes the CPU work most of the time (100% of the time, ideally), then the scheduling algorithm can be considered good.

- Throughput: Throughput by definition is the total number of processes that are completed (executed) per unit of time or, in simpler terms, it is the total work done by the CPU in a unit of time. Now, of course, an algorithm must work to maximize throughput.

- Turn Around Time: The turnaround time is essentially the total time that it took for a process to arrive in the ready queue and complete. A good CPU scheduling criteria would be able to minimize this time taken.

- Waiting Time: A scheduling algorithm obviously cannot change the time that is required by a process to complete its execution, however, it can minimize the waiting time of the process.

- Response Time: If your system is interactive, then taking into consideration simply the turnaround time to judge a scheduling algorithm is not good enough. A process might produce results quicker, and then continue to compute new results while the outputs of previous operations are being shown to the user. Hence, we have another CPU scheduling criteria, which is the response time (time taken from submission of the process until its first 'response' is produced). The goal of the CPU would be to minimize this time.

Become the Ai engineer who can design, build, and iterate real AI products, not just demos with an IIT Roorkee CEC Certification

What is the Need for CPU Scheduling Algorithm?

CPU (Central Processing Unit) scheduling algorithm is needed to efficiently allocate the available processing time of a CPU among multiple processes that are competing for the CPU's resources.

The need for CPU scheduling arises because modern operating systems allow multiple processes to execute concurrently on a single CPU. When multiple processes are running, they contend for the CPU's resources, and the CPU must choose which process to execute at any given moment.

The CPU scheduling algorithm determines the order in which processes are executed and how much CPU time each process is allocated. A good CPU scheduling algorithm should ensure that each process gets a fair share of the CPU time, while also maximizing overall system throughput and minimizing response time.

Types of CPU scheduling Algorithms

There are several types of CPU scheduling algorithms, each with its own strengths and weaknesses. Some of the most common types of CPU scheduling algorithms include:

-

First-Come, First-Served (FCFS): The simplest CPU scheduling algorithm, in which processes are executed in the order in which they arrive.

-

Shortest Job First (SJF): This algorithm schedules processes based on their expected CPU burst time. The process with the shortest expected burst time is executed first.

-

Priority Scheduling: This algorithm assigns a priority value to each process, and processes with higher priority values are executed first.

-

Round Robin (RR): This algorithm allocates a fixed time slice (quantum) to each process in a cyclic order. If a process has not completed its execution within its allotted time slice, it is preempted, and the next process in the queue is executed.

-

Multilevel Queue Scheduling: This algorithm divides the processes into separate queues based on their characteristics. Each queue has its own scheduling algorithm, and processes are moved between queues based on their priority or other criteria.

-

Multilevel Feedback Queue Scheduling: This algorithm is an extension of multilevel queue scheduling that allows processes to move between queues based on their behavior. For example, a process that uses a lot of CPU time may be moved to a lower-priority queue to make room for other processes.

Comparison between Various CPU Scheduling Algorithms

Here is a comparison between various CPU scheduling algorithms:

First-Come, First-Served (FCFS): Simple and easy to implement. This may result in long waiting times for processes with longer CPU burst times, causing poor response times. Shortest Job First (SJF): Results in shorter waiting times for processes with shorter CPU burst times. Difficult to predict CPU burst times accurately, which can lead to starvation for longer processes. Priority Scheduling: Can ensure that high-priority processes are executed first. This may result in lower-priority processes being starved of CPU time. Round Robin (RR): Ensures that all processes get an equal share of CPU time. This may result in high overhead due to frequent context switching. Multilevel Queue Scheduling: Allows for the separation of processes based on their characteristics, improving overall system performance. Requires more complex implementation and management. Multilevel Feedback Queue Scheduling: Allows for processes to be moved between queues based on their behavior, preventing starvation and ensuring that processes are executed fairly. More complex and difficult to implement compared to other scheduling algorithms. Overall, there is no one-size-fits-all solution when it comes to CPU scheduling algorithms. The choice of algorithm depends on the specific needs of the system being used, such as the nature of the processes, the desired response times, and the overall system performance requirements.

Want to master the Operating System? Enroll in our Operating System free course now and get certified!

Conclusion

- CPU scheduling is the task performed by the CPU that decides the way the process would be executed.

- There are two types of CPU scheduling

- Preemptive

- CPU resources are allocated to a process for only a limited period of time and then those resources are taken back. A running process could be interrupted to execute a higher priority process.

- Non-preemptive

- New processes are executed only after the current executing process has completed its execution.

- Preemptive

- Some important terminologies relevant to CPU scheduling are:

- Arrival Time

- Burst Time

- Completion Time

- Turn Around Time

- Waiting Time

- Response Time

- CPU scheduling has certain criteria that it uses to schedule processes in the most efficient manner:

- CPU utilization (maximize)

- Throughput (maximize)

- Turn Around Time (minimize)

- Waiting Time (minimize)

- Response Time (minimize)