What Are Agents in AI? Complete Guide

Answering the core question, "What are agents in AI?", requires looking beyond static machine learning models. In artificial intelligence, an agent is an autonomous system that perceives its environment through sensors, processes that information to make reasoned decisions, and acts upon the environment through actuators to achieve predefined goals.

The Evolution of Artificial Intelligence Agents

The concept of artificial intelligence agents represents a paradigm shift from passive computational models to dynamic, goal-oriented systems. Historically, machine learning architectures were designed as sophisticated pattern recognition engines. You provide an input, and the model generates an output—whether that is classifying an image, predicting a continuous variable, or generating the next token in a sequence. However, these traditional models lack agency; they do not interact with the world, maintain long-term state across iterative actions, or correct their own mistakes autonomously.

Agents bridge the gap between inference and action. By wrapping a reasoning engine—often a Large Language Model (LLM) or a reinforcement learning policy—with memory, planning capabilities, and access to external tools, software systems can now execute complex, multi-step workflows. Understanding artificial intelligence agents requires dissecting how these systems maintain context, decompose large tasks into manageable sub-tasks, and interface with external application programming interfaces (APIs) to alter their environment.

Core Architecture of an Intelligent Agent

To build or deploy an AI agent effectively, one must understand its foundational architecture. Every intelligent agent, whether it is a classical rule-based system or a modern generative AI autonomous agent, operates on a continuous feedback loop built from four primary components: the environment and its percepts, sensors, actuators and their actions, and the reasoning engine that implements the agent function.

This architecture is best understood through the mathematical concept of the agent function. The agent function is a mapping from the sequence of all historical percepts (P*) to a specific action (A). Formally, this is represented as f: P* → A. In practical software engineering, implementing this function requires a robust architecture capable of handling state, logic, and asynchronous execution.
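As a minimal sketch of f: P* → A, the snippet below treats the agent function as a plain Python function that receives the full percept history and returns one action. The thermostat scenario, the 20.0 threshold, and the names `thermostat_agent` and `run` are illustrative assumptions, not part of any particular framework.

```python
from typing import Callable, List

Percept = str
Action = str


def thermostat_agent(percepts: List[Percept]) -> Action:
    """Agent function f: P* -> A for a toy thermostat (hypothetical example)."""
    if not percepts:
        return "wait"
    # Use the history, not just the latest reading: average the last three.
    recent = [float(p) for p in percepts[-3:]]
    average = sum(recent) / len(recent)
    return "heat_on" if average < 20.0 else "heat_off"


def run(agent: Callable[[List[Percept]], Action], stream: List[Percept]) -> List[Action]:
    """Feed percepts one at a time and record the action chosen at each step."""
    history: List[Percept] = []
    actions: List[Action] = []
    for percept in stream:
        history.append(percept)
        actions.append(agent(history))
    return actions


print(run(thermostat_agent, ["18", "19", "25", "26"]))
```

Because the function receives the whole sequence, the third reading of 25 does not decide the action alone; it is averaged with the two earlier readings.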

1. Environment and Percepts

The environment is the external system with which the agent interacts. This could be a physical space for a robotics agent, a financial market for an algorithmic trading agent, or a web browser and operating system for a software agent. Percepts are the raw data inputs the agent receives from this environment at any given time (t). For software agents, percepts typically consist of API responses, user prompts, system logs, or HTTP status codes.

2. Sensors

Sensors are the mechanisms through which the agent collects percepts. In a traditional software context, sensors are event listeners, webhooks, API polling mechanisms, or direct user input interfaces. They translate the raw state of the environment into a structured format (like JSON or XML) that the agent's core reasoning engine can parse and compute.
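A sensor for a software agent can be sketched as a small normalizing function. The example below assumes a hypothetical HTTP source named `orders_api` and a hypothetical `Percept` record; it only shows the idea of turning a raw status code and body into a structured value the reasoning engine can consume.

```python
import json
from dataclasses import dataclass
from typing import Any, Dict


@dataclass(frozen=True)
class Percept:
    source: str
    ok: bool
    payload: Dict[str, Any]


def http_sensor(status_code: int, body: str, source: str = "orders_api") -> Percept:
    """Translate a raw HTTP response into a structured percept."""
    try:
        payload = json.loads(body) if body else {}
    except json.JSONDecodeError:
        # Keep unparseable bodies instead of dropping them.
        payload = {"raw": body}
    if not isinstance(payload, dict):
        payload = {"value": payload}
    return Percept(source=source, ok=200 <= status_code < 300, payload=payload)


print(http_sensor(200, '{"id": 7}'))
print(http_sensor(500, "oops"))
```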

3. Actuators and Actions

Actuators are the tools an agent uses to manipulate its environment. While percepts represent the input, actions represent the output. In modern AI agents, actuators are typically function (tool) calls. If an agent determines it needs to send an email, the actuator is the SMTP library or REST API call executed to send that email. The agent does not just output the text of the email; it triggers the execution of the code.

4. The Reasoning Engine

At the center of the architecture is the reasoning engine. In classical AI, this might be a complex search algorithm (like A* or Minimax) or a decision tree. In contemporary artificial intelligence agents, the reasoning engine is usually a highly capable LLM. The LLM processes the percepts, contextualizes them against its system instructions and memory, and decides which actuator to invoke and with what parameters.

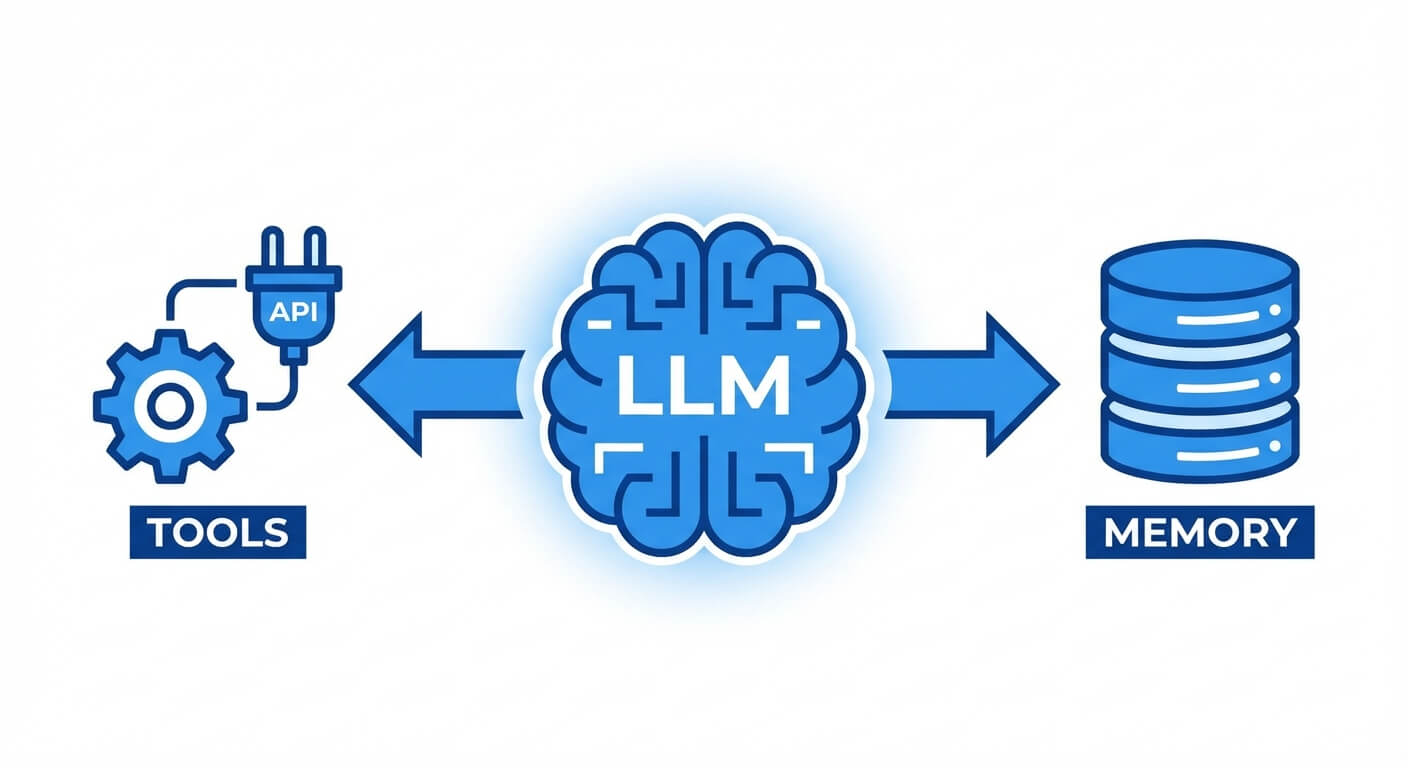

Modern Agent Components: Memory, Planning, and Tools

Modern artificial intelligence agents are distinguished from basic generative models by their scaffolding. An LLM alone is stateless; it predicts the next token based purely on the contents of its context window. To elevate an LLM into an autonomous agent, developers must wrap it in a framework that provides memory, planning, and tool-use integration.

Understanding these three pillars is critical for software engineers tasked with building effective agents. Without memory, an agent cannot handle complex, ongoing tasks. Without planning, it is more likely to act on unfounded assumptions or get stuck in repeated execution loops. Without tools, it remains trapped in a text-in/text-out paradigm, unable to effect change in the real world.

Memory Systems

Memory allows an agent to maintain state across multiple execution cycles. Memory in AI agents is generally categorized into two distinct types:

- Short-Term Memory: This is analogous to a computer's RAM. It is constrained by the LLM’s context window (e.g., 128k or 200k tokens). Short-term memory holds the immediate conversational history, the current task decomposition, and the results of recent tool calls. Once the session ends or the context limit is reached, this memory is cleared or summarized.

- Long-Term Memory: This acts as the agent's hard drive. It typically uses a vector store (such as Pinecone, Milvus, or the pgvector extension for PostgreSQL) to persist information beyond a single session. When an agent needs to recall past interactions or reference large documentation sets, it converts its query into a dense vector embedding. It then runs a nearest-neighbor search, commonly using cosine similarity, against the stored embeddings to retrieve relevant context. This is the retrieval step of Retrieval-Augmented Generation (RAG).
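The two memory types can be sketched in a few lines. This is a toy illustration under stated assumptions: `embed` is a bag-of-words stand-in for a real embedding model, the word budget stands in for a token limit, and `ShortTermMemory` and `LongTermMemory` are hypothetical class names rather than any library's API.

```python
import math
from collections import Counter, deque
from typing import Deque, Dict, List, Tuple


def embed(text: str) -> Dict[str, float]:
    """Toy bag-of-words 'embedding'; a real agent would call an embedding model."""
    return dict(Counter(text.lower().split()))


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    dot = sum(a[k] * b.get(k, 0.0) for k in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ShortTermMemory:
    """Keeps only the most recent messages that fit a word budget."""

    def __init__(self, max_words: int) -> None:
        self.max_words = max_words
        self.messages: Deque[str] = deque()

    def add(self, message: str) -> None:
        self.messages.append(message)
        # Evict the oldest messages once the budget is exceeded.
        while len(self.messages) > 1 and sum(len(m.split()) for m in self.messages) > self.max_words:
            self.messages.popleft()


class LongTermMemory:
    """Stores (embedding, text) pairs and retrieves by cosine similarity."""

    def __init__(self) -> None:
        self.items: List[Tuple[Dict[str, float], str]] = []

    def store(self, text: str) -> None:
        self.items.append((embed(text), text))

    def search(self, query: str, k: int = 1) -> List[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


ltm = LongTermMemory()
ltm.store("the deploy script lives in tools/deploy.sh")
ltm.store("the staging database password rotates weekly")
print(ltm.search("where is the deploy script"))
```

A production system would replace the linear scan in `search` with an approximate nearest-neighbor index, which is the main service a vector database provides.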

Planning and Reasoning Protocols

Autonomous execution requires the agent to break down abstract goals into a concrete sequence of operations. Several prompting strategies serve as the planning frameworks for these agents:

- Chain of Thought (CoT): The agent is instructed to "think step-by-step," generating intermediate reasoning traces before outputting an action. This tends to reduce logical errors on multi-step problems, although the intermediate reasoning can itself be wrong.

- ReAct (Reasoning and Acting): This framework interleaves reasoning traces with task-specific actions. The agent loops through a structured sequence: Thought (analyzing the current state), Action (invoking a specific tool), and Observation (parsing the tool's output). This loop continues until the agent determines the final goal is met.

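- Tree of Thoughts (ToT): For highly complex environments, agents can explore multiple candidate reasoning paths, evaluating the viability of each branch and backtracking where needed before committing to an action. The exploration is organized as a tree search (for example breadth-first or depth-first), which makes it loosely comparable to classical search methods such as Monte Carlo tree search.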
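The Thought, Action, Observation cycle described for ReAct can be sketched as a loop. In this illustration `scripted_policy` is a hard-coded stand-in for the LLM, and `lookup_stock`, `Step`, and `react` are hypothetical names; the point is the control flow, not a real model integration.

```python
from typing import Callable, Dict, List, NamedTuple, Optional, Tuple


class Step(NamedTuple):
    thought: str
    tool: Optional[str] = None    # tool to invoke, if any
    tool_input: str = ""
    answer: Optional[str] = None  # set when the agent decides it is done


def lookup_stock(item: str) -> str:
    # Hypothetical tool backed by a tiny hard-coded table.
    return {"widget": "42 units"}.get(item.lower(), "unknown")


TOOLS: Dict[str, Callable[[str], str]] = {"lookup_stock": lookup_stock}


def scripted_policy(question: str, trace: List[str]) -> Step:
    """Stand-in for the LLM: look something up once, then answer from the observation."""
    prefix = "Observation: "
    observations = [line[len(prefix):] for line in trace if line.startswith(prefix)]
    if not observations:
        return Step("I need the stock level.", tool="lookup_stock", tool_input="widget")
    return Step("The observation answers the question.", answer=observations[-1])


def react(question: str, policy: Callable[[str, List[str]], Step],
          max_steps: int = 5) -> Tuple[str, List[str]]:
    trace: List[str] = []
    for _ in range(max_steps):
        step = policy(question, trace)
        trace.append(f"Thought: {step.thought}")
        if step.answer is not None:
            return step.answer, trace
        tool = TOOLS.get(step.tool or "")
        observation = tool(step.tool_input) if tool else f"error: unknown tool {step.tool!r}"
        trace.append(f"Action: {step.tool}[{step.tool_input}]")
        trace.append(f"Observation: {observation}")
    return "stopped: step limit reached", trace


answer, trace = react("How many widgets are in stock?", scripted_policy)
print(answer)
print("\n".join(trace))
```

The `max_steps` bound matters even in a sketch: without it, a policy that never sets `answer` would loop indefinitely.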

Tool Use (Function Calling)

Tool use is the defining characteristic of modern AI agents. Instead of simply generating human-readable text, the reasoning engine outputs a structured data payload (typically JSON) that specifies a function name and its required arguments. The scaffolding framework parses this JSON, executes the local Python script, SQL query, or external API call, and feeds the resulting data back into the agent's context window as a new observation.
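A minimal sketch of the parse, execute, and feed-back step is shown below. The JSON shape (`name` plus `arguments`) and the `add` and `send_email` tools are assumptions chosen for illustration; real model providers each define their own function-calling schema.

```python
import json
from typing import Any, Callable, Dict


def add(a: float, b: float) -> float:
    return a + b


def send_email(to: str, subject: str) -> str:
    # Stand-in for a real SMTP or REST call; nothing is actually sent.
    return f"queued email to {to}: {subject}"


# Registry of tools the scaffolding is willing to execute.
TOOLS: Dict[str, Callable[..., Any]] = {"add": add, "send_email": send_email}


def execute_tool_call(model_output: str) -> Dict[str, Any]:
    """Parse a JSON tool call and return an observation for the agent's context."""
    try:
        call = json.loads(model_output)
        name, args = call["name"], call.get("arguments", {})
    except (json.JSONDecodeError, KeyError, TypeError, AttributeError) as exc:
        return {"ok": False, "error": f"malformed tool call: {exc}"}
    tool = TOOLS.get(name)
    if tool is None:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "result": tool(**args)}
    except TypeError as exc:
        # Wrong or missing arguments are reported back instead of crashing the loop.
        return {"ok": False, "error": f"bad arguments: {exc}"}


print(execute_tool_call('{"name": "add", "arguments": {"a": 2, "b": 3}}'))
print(execute_tool_call('{"name": "drop_table", "arguments": {}}'))
```

Returning errors as observations, rather than raising, gives the reasoning engine a chance to correct a malformed or hallucinated call on its next turn.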

Classes of Intelligent Agents (The Theoretical Hierarchy)

In the academic study of artificial intelligence, particularly defined by Stuart Russell and Peter Norvig, intelligent agents are categorized into five distinct classes based on their complexity, degree of autonomy, and capability to handle uncertainty. Understanding this hierarchy helps engineers map business requirements to the correct architectural pattern.

Designing the right agent requires understanding the state space of your environment. If the environment is fully observable and deterministic, a simple rule-based agent often suffices. However, in environments that are partially observable, stochastic, and dynamic (like the internet or financial markets), utility-based or learning agents are usually the better fit.

1. Simple Reflex Agents

Simple reflex agents represent the most basic level of autonomous intelligence. They operate strictly on condition-action rules (if-then logic). These agents only consider the current percept and completely ignore the history of past percepts.

- Mechanism: If condition C is met, execute action A.

- Limitations: They possess no memory and operate reliably only in fully observable environments. In a partially observable environment, a simple reflex agent lacks the context to choose correctly and can easily get stuck in an infinite loop.
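The condition-action mechanism can be illustrated with the classic two-room vacuum world. The room labels "A" and "B" and the action names are assumptions for this sketch.

```python
from typing import Tuple


def reflex_vacuum_agent(percept: Tuple[str, str]) -> str:
    """Pick an action from the current percept only: (location, status)."""
    location, status = percept
    if status == "dirty":
        return "suck"
    if location == "A":
        return "move_right"
    return "move_left"


for percept in [("A", "dirty"), ("A", "clean"), ("B", "clean")]:
    print(percept, "->", reflex_vacuum_agent(percept))
```

Once both rooms are clean, this agent keeps moving back and forth forever, because it has no record that the work is already done. That is the looping limitation in its simplest form.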

2. Model-Based Reflex Agents

Model-based agents improve upon simple reflex agents by maintaining an internal state, which allows them to handle partially observable environments.

- Mechanism: The agent maintains an internal model of how the world works and how its own actions affect the world. It tracks the parts of the environment it cannot currently see by updating its internal state based on percept history.

- Application: A robotic vacuum cleaner that remembers which rooms it has already cleaned, rather than relying solely on its current bump sensors.
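A sketch of the robotic vacuum example follows. The internal state here is just a set of rooms believed to be clean, and the `ModelBasedVacuum` class and its action strings are hypothetical.

```python
from typing import Iterable, List, Set, Tuple


class ModelBasedVacuum:
    """Tracks which rooms are believed clean, even when they are out of sensor range."""

    def __init__(self, rooms: Iterable[str]) -> None:
        self.rooms: List[str] = list(rooms)
        self.known_clean: Set[str] = set()

    def act(self, percept: Tuple[str, str]) -> str:
        location, status = percept
        # Either the room is already clean, or sucking will leave it clean
        # (the agent's model of its own action), so record it either way.
        self.known_clean.add(location)
        if status == "dirty":
            return "suck"
        for room in self.rooms:
            if room not in self.known_clean:
                return f"go_to:{room}"
        return "stop"


vacuum = ModelBasedVacuum(["A", "B"])
print(vacuum.act(("A", "dirty")))
print(vacuum.act(("A", "clean")))
print(vacuum.act(("B", "clean")))
```

Unlike a simple reflex agent, this one can stop: its internal state tells it that every room has been handled.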

3. Goal-Based Agents

While model-based agents know the state of the world, they do not inherently know what they are trying to achieve. Goal-based agents incorporate "goal information" that describes desirable situations.

- Mechanism: These agents utilize search algorithms and planning logic to find a sequence of actions that transition the environment from its current state to the goal state. They ask, "What will happen if I do this action, and does that bring me closer to my goal?"

- Application: Pathfinding algorithms in GPS systems where the goal is reaching a specific coordinate.
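One way to sketch goal-based planning is a breadth-first search over a graph of states. The place names in `roads` are invented for illustration, and BFS is only one of several search strategies a goal-based agent might use.

```python
from collections import deque
from typing import Deque, Dict, List, Optional


def plan(graph: Dict[str, List[str]], start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search: returns a path with the fewest steps, or None."""
    frontier: Deque[List[str]] = deque([[start]])
    visited = {start}
    while frontier:
        path = frontier.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                frontier.append(path + [neighbor])
    return None


roads = {"home": ["a", "b"], "a": ["c"], "b": ["office"], "c": ["office"]}
print(plan(roads, "home", "office"))
```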

4. Utility-Based Agents

Goals provide a binary success/failure state, but they do not account for efficiency, safety, or cost. Utility-based agents measure the "goodness" of a state using a utility function.

- Mechanism: The agent maps a state to a real number representing the degree of usefulness or satisfaction. When dealing with stochastic environments where outcomes are uncertain, the agent calculates the Expected Utility (EU) of an action. Formally: EU(a|e) = Σ_{s'} P(s'|a,e) × U(s'), where the sum runs over every possible outcome state s', P(s'|a,e) is the probability of reaching state s' given action a and current evidence e, and U(s') is the utility of state s'. The agent then selects the action with the highest expected utility.

- Application: Algorithmic trading bots that must weigh the expected financial return against the risk (variance) of a trade.
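The expected-utility calculation can be worked through numerically. The probabilities and payoffs below are made-up values for a toy trading decision, and `expected_utility` and `best_action` are hypothetical helper names.

```python
from typing import Dict, List, Tuple

Outcome = Tuple[float, float]  # (probability of the outcome state, utility of that state)


def expected_utility(outcomes: List[Outcome]) -> float:
    """EU = sum over outcome states of P(s') * U(s')."""
    total_p = sum(p for p, _ in outcomes)
    if abs(total_p - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return sum(p * u for p, u in outcomes)


def best_action(actions: Dict[str, List[Outcome]]) -> str:
    """Choose the action whose expected utility is highest."""
    return max(actions, key=lambda name: expected_utility(actions[name]))


actions = {
    "hold": [(1.0, 0.0)],
    "buy": [(0.6, 100.0), (0.4, -120.0)],         # EU = 60 - 48 = 12
    "buy_risky": [(0.5, 300.0), (0.5, -290.0)],   # EU = 150 - 145 = 5
}
print(best_action(actions))
```

Note that "buy_risky" has the largest possible payoff but not the largest expected utility. A risk-averse agent would go further and encode the penalty for variance inside the utility values themselves.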

5. Learning Agents

The ultimate class of artificial intelligence agents is the learning agent, which can operate in unknown environments and improve its performance over time without manual reprogramming.

- Mechanism: It consists of four conceptual components: the Learning Element (responsible for making improvements), the Performance Element (the core agent making decisions), the Critic (which provides feedback on how well the agent is doing against a fixed performance standard), and the Problem Generator (which suggests exploratory actions to discover new optimal strategies).
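The four components can be mapped onto a very small value-learning loop. This is a loose illustration, not the textbook formulation: the route names, the fixed rewards returned by `critic`, and the round-robin exploration schedule are all assumptions chosen to keep the example deterministic.

```python
from typing import Dict, List


class LearningAgent:
    def __init__(self, actions: List[str], explore_every: int = 4, learning_rate: float = 0.5) -> None:
        self.actions = list(actions)
        self.values: Dict[str, float] = {a: 0.0 for a in self.actions}
        self.explore_every = explore_every
        self.learning_rate = learning_rate
        self.steps = 0

    def performance_element(self) -> str:
        """Pick the action currently believed to be best."""
        return max(self.actions, key=lambda a: self.values[a])

    def problem_generator(self) -> str:
        """Suggest an exploratory action (round-robin here, for determinism)."""
        return self.actions[(self.steps // self.explore_every) % len(self.actions)]

    def choose(self) -> str:
        self.steps += 1
        if self.steps % self.explore_every == 0:
            return self.problem_generator()
        return self.performance_element()

    def learning_element(self, action: str, feedback: float) -> None:
        """Move the value estimate toward the critic's feedback."""
        self.values[action] += self.learning_rate * (feedback - self.values[action])


def critic(action: str) -> float:
    """Scores an action against a fixed performance standard (hypothetical values)."""
    return {"fast_route": 1.0, "slow_route": 0.2}[action]


agent = LearningAgent(["slow_route", "fast_route"])
for _ in range(20):
    chosen = agent.choose()
    agent.learning_element(chosen, critic(chosen))
print(agent.performance_element(), agent.values)
```

The agent starts out preferring "slow_route" only because it is listed first. It discovers "fast_route" solely through the exploratory step, which is the role the problem generator plays.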

LLMs vs. Copilots vs. AI Agents

A common point of confusion in modern software engineering is distinguishing between bare Language Models, AI Copilots, and autonomous AI Agents. While they share underlying neural network architectures (typically Transformers), their operational workflows, autonomy levels, and software integrations are fundamentally different.

To architect scalable AI systems, engineers must select the right paradigm. A system that requires continuous human oversight should be built as a Copilot, whereas a system designed for asynchronous, unattended execution must be built as an Agent. The table below outlines the precise technical distinctions.

| Feature / Characteristic | Large Language Model (LLM) | AI Copilot | Autonomous AI Agent |
|---|---|---|---|
| Core Definition | A foundational statistical model trained to predict text sequences. | An integrated AI assistant designed to collaborate with a human user. | An autonomous system that plans, executes, and iterates on tasks independently. |
| Autonomy Level | None. Stateless processing. | Low to Medium. Requires human prompts to proceed step-by-step. | High. Executes multi-step workflows without human intervention. |
| State & Memory | Stateless (relies entirely on the provided context window). | Maintains session state and integrates with user workspace context. | Maintains long-term state via Vector DBs and sophisticated memory management. |
| Execution Paradigm | Text-in, Text-out. | Human-in-the-loop (HITL). Drafts code or text for human approval. | Agentic loop (Thought → Action → Observation). Executes APIs autonomously. |
| Error Handling | Fails silently or hallucinates with confidence. | Relies on the human user to spot and correct errors via reprompting. | Self-corrects by evaluating environmental feedback and altering its plan. |

Building Effective AI Agents: Workflows and Frameworks

Building robust artificial intelligence agents requires moving beyond simple linear scripts. Senior engineers must implement sophisticated orchestration patterns to manage state, handle exceptions, and route logic effectively. As applications scale, single-agent architectures often become bottlenecks or suffer from prompt degradation due to bloated context windows.

Modern agent development relies on standardized workflows and orchestration frameworks. These workflows dictate how an agent transitions between states, when it triggers external actions, and how it evaluates its own output before returning a result to the user.

Advanced Agentic Workflows

- Prompt Chaining: The simplest agentic workflow. The output of one LLM call is programmatically passed as the input to the next LLM call. This is highly effective for deterministic, sequential tasks like extracting data, formatting it, and then generating a summary.

- Routing: A classifier agent receives the initial percept (user prompt) and routes it to specialized, smaller models or specific tool sets based on intent. This saves compute and reduces the risk of generic models making errors on specialized tasks.

- Evaluator-Optimizer Loop: An iterative workflow where a "Generator" agent produces a result (e.g., a block of code), and an "Evaluator" agent reviews it against a set of constraints or test cases. If the evaluator finds errors, it feeds the critique back to the generator, looping until a passing threshold is met.

- Orchestrator-Worker (Multi-Agent System): A central managerial agent breaks down a massive task and delegates sub-tasks to specialized worker agents in parallel. Once all workers return their results, the orchestrator synthesizes the final output.
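Of the workflows above, the evaluator-optimizer loop is the easiest to sketch without a model. In the example below both roles are plain functions standing in for LLM calls, and the slug-formatting task, the feedback strings, and the function names are illustrative assumptions.

```python
from typing import Callable, List, Optional, Tuple


def make_generator(text: str) -> Callable[[Optional[str]], str]:
    """Stand-in for a generator LLM that revises its draft based on feedback."""
    state = {"draft": text}

    def generate(feedback: Optional[str]) -> str:
        if feedback == "use lowercase":
            state["draft"] = state["draft"].lower()
        elif feedback == "replace spaces with hyphens":
            state["draft"] = state["draft"].replace(" ", "-")
        return state["draft"]

    return generate


def evaluate(draft: str) -> Optional[str]:
    """Stand-in for an evaluator: returns a critique, or None when the draft passes."""
    if draft != draft.lower():
        return "use lowercase"
    if " " in draft:
        return "replace spaces with hyphens"
    return None


def evaluator_optimizer(generate: Callable[[Optional[str]], str],
                        evaluate: Callable[[str], Optional[str]],
                        max_rounds: int = 3) -> Tuple[Optional[str], List[str]]:
    feedback: Optional[str] = None
    history: List[str] = []
    for _ in range(max_rounds):
        draft = generate(feedback)
        history.append(draft)
        feedback = evaluate(draft)
        if feedback is None:
            return draft, history
    return None, history  # no passing draft within the round budget


result, drafts = evaluator_optimizer(make_generator("Agent Loop Guide"), evaluate)
print(result)
print(drafts)
```

The round budget is part of the pattern: if the generator never satisfies the evaluator, the loop returns `None` and the caller decides whether to escalate or fail.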

Agent Development Frameworks

To implement these workflows, engineers utilize several open-source frameworks designed to manage agent state and tool execution:

- LangChain: A highly popular framework providing abstractions for memory, tool integration, and prompt templating. It excels in standard RAG applications.

- LangGraph: Built on top of LangChain, LangGraph models agent workflows as directed graphs that may contain cycles. It allows engineers to build highly controllable state machines where agents can loop iteratively based on precise conditionals.

- Microsoft AutoGen: A powerful framework optimized for building Multi-Agent conversations. AutoGen allows multiple agents, each with unique system prompts and tool access, to converse and collaborate to solve complex engineering tasks.

- LlamaIndex: Initially designed as a data framework for LLMs, LlamaIndex has evolved to support rich agentic behaviors, particularly excelling in intelligent routing and semantic search integrations.

Challenges, Limitations, and Risks in Agentic AI

While artificial intelligence agents offer unprecedented automation capabilities, deploying them in production environments introduces significant engineering and security challenges. Unlike deterministic software, agents operate probabilistically. This non-determinism means that an agent might succeed in testing 99 times but fail catastrophically on the 100th execution due to subtle variations in the environment or prompt interpretation.

Engineering teams must architect safety guardrails, strict API scoping, and comprehensive monitoring and observability tooling (such as LangSmith or Arize Phoenix) to track agent behavior in real time. Failure to secure an agent can lead to compromised databases, unauthorized financial transactions, or the exposure of sensitive proprietary logic.

1. Hallucinations and Cascading Errors

LLMs are inherently prone to hallucinations, meaning they generate plausible but factually incorrect information. In a standard LLM chat, a human reads the output and has a chance to catch the error. In an autonomous agent, the output is acted upon directly, so a hallucination can be far more damaging. If an agent hallucinates a tool's API endpoint or argument, the execution will fail. Worse, if the agent misinterprets the result of an action, it may build subsequent plans on false premises, leading to a cascade of logical errors.

2. Infinite Execution Loops

Because agents operate on loops (like the ReAct framework), they are susceptible to getting stuck. If an agent triggers an API that returns a vague error message, the agent might repeatedly try the exact same action, or try minor variations that continue to fail, rapidly burning through API credits and compute resources. Implementing strict "max iterations" limits and hard timeouts is an absolute requirement in agent architecture.
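A minimal sketch of such limits is shown below. It assumes a hypothetical `step` callable that returns the action it attempted and whether the goal is done; `run_with_limits`, `BudgetExceeded`, and the default thresholds are illustrative, not taken from any framework.

```python
import time
from typing import Callable, Optional, Tuple


class BudgetExceeded(RuntimeError):
    """Raised when the agent loop hits one of its safety limits."""


def run_with_limits(step: Callable[[int], Tuple[str, bool]],
                    max_iterations: int = 10,
                    timeout_seconds: float = 30.0,
                    max_repeats: int = 3) -> int:
    """Run step(iteration) until done; returns the iteration that finished."""
    deadline = time.monotonic() + timeout_seconds
    last_action: Optional[str] = None
    repeats = 0
    for iteration in range(1, max_iterations + 1):
        # The timeout is checked between steps; it does not interrupt a step that hangs.
        if time.monotonic() > deadline:
            raise BudgetExceeded("wall-clock timeout reached")
        action, done = step(iteration)
        if done:
            return iteration
        repeats = repeats + 1 if action == last_action else 1
        last_action = action
        if repeats >= max_repeats:
            raise BudgetExceeded(f"action {action!r} repeated {repeats} times")
    raise BudgetExceeded("max iterations reached")


try:
    run_with_limits(lambda i: ("call_flaky_api", False))
except BudgetExceeded as exc:
    print("stopped:", exc)
```

The repeated-action check catches the "exact same action" case early, while the iteration cap catches the "minor variations" case that a repeat counter would miss.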

3. Security and Prompt Injection

Agents connected to external tools present a large attack surface. The most critical threat is prompt injection. If an agent processes untrusted external data (e.g., reading an incoming email or scraping a webpage), a malicious actor can embed hidden instructions in that data. Because the model sees its instructions and the retrieved data as a single stream of tokens in the context window, it may follow the embedded instructions as if they came from the developer or user, potentially exfiltrating data, deleting files, or executing unauthorized code.
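One partial mitigation is to gate sensitive tools whenever untrusted content has entered the context. The sketch below assumes a hypothetical set of sensitive tool names and a human-approval flag; it reduces the blast radius of an injection but is not a complete defense on its own.

```python
from typing import Set

# Hypothetical tools that can cause irreversible or external side effects.
SENSITIVE_TOOLS: Set[str] = {"delete_file", "send_email", "run_shell"}


def authorize(tool_name: str, context_has_untrusted_data: bool, human_approved: bool = False) -> bool:
    """Allow a tool call unless it is sensitive and untrusted data is in context."""
    if tool_name not in SENSITIVE_TOOLS:
        return True
    if not context_has_untrusted_data:
        return True
    # Sensitive tool plus untrusted input: require explicit human sign-off.
    return human_approved


# The agent has just read a scraped web page, so its context is tainted.
print(authorize("search_docs", context_has_untrusted_data=True))
print(authorize("send_email", context_has_untrusted_data=True))
print(authorize("send_email", context_has_untrusted_data=True, human_approved=True))
```

The check runs in the scaffolding, outside the model, so injected text cannot talk its way past it.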

4. Latency and Compute Costs

Agentic workflows typically require multiple sequential calls to Large Language Models. A single user request might trigger a routing call, a task decomposition call, several tool-selection calls (each followed by the tool's own execution time), and a final synthesis call. This adds latency (responses taking 10 to 30 seconds are common) and significant token costs. Engineers must balance the use of expensive, highly capable models (like GPT-4 or Claude 3.5 Sonnet) with smaller, faster open-weights models (like Llama 3) for simpler routing or formatting tasks.

Frequently Asked Questions (FAQs)

What is the difference between an AI agent and a standard machine learning model?

A standard machine learning model processes input data and returns a prediction or classification statelessly. An AI agent wraps a machine learning model (usually an LLM) with memory, planning logic, and access to external tools, allowing it to autonomously interact with its environment and execute multi-step workflows to achieve a goal.

How do artificial intelligence agents handle memory?

Agents utilize two forms of memory. Short-term memory is managed within the LLM’s context window, storing the immediate conversation history and task state. Long-term memory is managed externally using Vector Databases, where vast amounts of text are converted into vector embeddings. The agent queries this database using semantic search, the retrieval step of RAG, to fetch relevant information when needed.

Can AI agents operate without human intervention?

Yes. Highly autonomous agents are designed to operate without a human in the loop. Once provided with an initial goal, they use frameworks like ReAct to repeatedly analyze their environment, execute tool calls, observe the results, and self-correct until the objective is met or a configured iteration or time limit stops them.

What is a Multi-Agent System (MAS)?

A Multi-Agent System is an architecture where multiple distinct AI agents interact or collaborate to solve a problem that is too complex for a single agent. Each agent in the system is typically assigned a specific persona, toolset, or domain of expertise. They communicate with each other, delegate sub-tasks, and synthesize their findings to produce the final outcome.